The way developers write software is shifting faster than at any point since the jump from assembly to high-level languages. In May 2026, three distinct threads — spec-driven agentic workflows, local AI agent debuggers, and natural-language programming experiments — converged to illustrate just how far the tooling has moved in roughly 18 months.

The Death of Vibe Coding

Andrej Karpathy coined the term “vibe coding” in February 2025 to describe a freewheeling, prompt-and-accept approach to LLM-assisted programming. By early 2026, he was already walking it back, telling followers that the era was ending and that “agentic engineering” (orchestrating AI agents against detailed specifications with human oversight) was becoming the default professional workflow.

According to Mariya Mansurova writing in Towards Data Science, the shift has a clear logic: vibe coding trades quality for speed, while agentic engineering tries to claim both. Mansurova documented a 4.5-hour build of a working fitness app using LLM agents, structured around a written specification rather than ad-hoc prompting. The result, she argues, is software that holds up under scrutiny rather than collapsing when requirements change.

The term “agentic engineering” is gaining traction because each word carries a deliberate signal. “Agentic” acknowledges that developers no longer write most code directly; they direct agents that do. “Engineering” insists that skill, discipline, and craft still matter. The workflow is learnable, improvable, and has its own depth. That framing matters because it pushes back against the narrative that AI coding tools make expertise irrelevant.

What Spec-Driven Development Looks Like in Practice

The practical difference between vibe coding and spec-driven development comes down to where the thinking happens. In vibe coding, the developer improvises in the prompt. In spec-driven development, the developer writes a detailed specification first — covering architecture, data models, edge cases, and acceptance criteria — then hands that spec to an agent.
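One way to picture "the thinking happens in the spec" is to treat the spec as structured data that must be complete before anything is handed to an agent. The sketch below is illustrative only: the `Spec` class, its field names, and the rendered text layout are assumptions made for this example, not a format used by any particular tool or by the authors cited here.

```python
from dataclasses import dataclass, field


@dataclass
class Spec:
    """Hypothetical container for the four spec sections named above."""
    architecture: str = ""
    data_models: list[str] = field(default_factory=list)
    edge_cases: list[str] = field(default_factory=list)
    acceptance_criteria: list[str] = field(default_factory=list)

    def missing_sections(self) -> list[str]:
        """Return the names of sections that are still empty."""
        return [name for name, value in vars(self).items() if not value]

    def to_prompt(self) -> str:
        """Render the spec as plain text, refusing to render an incomplete one."""
        missing = self.missing_sections()
        if missing:
            raise ValueError(f"spec incomplete: {missing}")
        lines = ["ARCHITECTURE", self.architecture, ""]
        for title, items in (
            ("DATA MODELS", self.data_models),
            ("EDGE CASES", self.edge_cases),
            ("ACCEPTANCE CRITERIA", self.acceptance_criteria),
        ):
            lines.append(title)
            lines.extend(f"- {item}" for item in items)
            lines.append("")
        return "\n".join(lines).rstrip()
```

The design choice worth noting is the guard in `to_prompt`: an improvised prompt can always be sent, while an incomplete spec cannot.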

Mansurova’s fitness app build, detailed in Towards Data Science, illustrates the tradeoffs. The agent handled the bulk of implementation, but every meaningful decision — what data to store, how to structure state, what counts as a passing test — was defined in the spec before the agent touched a line of code. Human oversight remained active throughout, catching errors the agent introduced and redirecting when the implementation drifted from intent.
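To make "what counts as a passing test" concrete, acceptance criteria can be written as executable checks that exist before the agent starts. The example below is a hypothetical sketch: `WorkoutLog`, its fields, and both criteria are invented for illustration and are not taken from Mansurova's app.

```python
from dataclasses import dataclass, field


@dataclass
class WorkoutLog:
    """Hypothetical state for a fitness app: a list of (reps, weight_kg) sets."""
    sets: list[tuple[int, float]] = field(default_factory=list)

    def add_set(self, reps: int, weight_kg: float) -> None:
        # Edge case fixed in the spec: reject non-positive reps and negative weight.
        if reps <= 0 or weight_kg < 0:
            raise ValueError("reps must be positive and weight non-negative")
        self.sets.append((reps, weight_kg))

    def total_volume(self) -> float:
        # Data rule fixed in the spec: volume is reps times weight, summed over sets.
        return sum(reps * weight for reps, weight in self.sets)


def check_acceptance() -> list[str]:
    """Run each acceptance criterion and return the names of the failures."""
    failures = []

    # Criterion 1: volume is the sum of reps * weight over all sets.
    log = WorkoutLog()
    log.add_set(10, 50.0)
    log.add_set(8, 60.0)
    if log.total_volume() != 980.0:
        failures.append("total_volume")

    # Criterion 2: a set with zero reps is rejected.
    try:
        WorkoutLog().add_set(0, 50.0)
        failures.append("rejects_zero_reps")
    except ValueError:
        pass

    return failures
```

An empty list from `check_acceptance()` means the implementation meets the spec as written; anything else gives the human reviewer a named criterion to redirect the agent toward.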

This workflow demands more upfront investment than vibe coding. Writing a thorough spec takes time. But it pays back in fewer hallucinated APIs, fewer architectural dead ends, and code that’s easier to audit. For professional developers building tools they’ll maintain, the spec-first approach is increasingly the default — not an optional extra.

Raindrop’s Workshop: Local Debugging for AI Agents

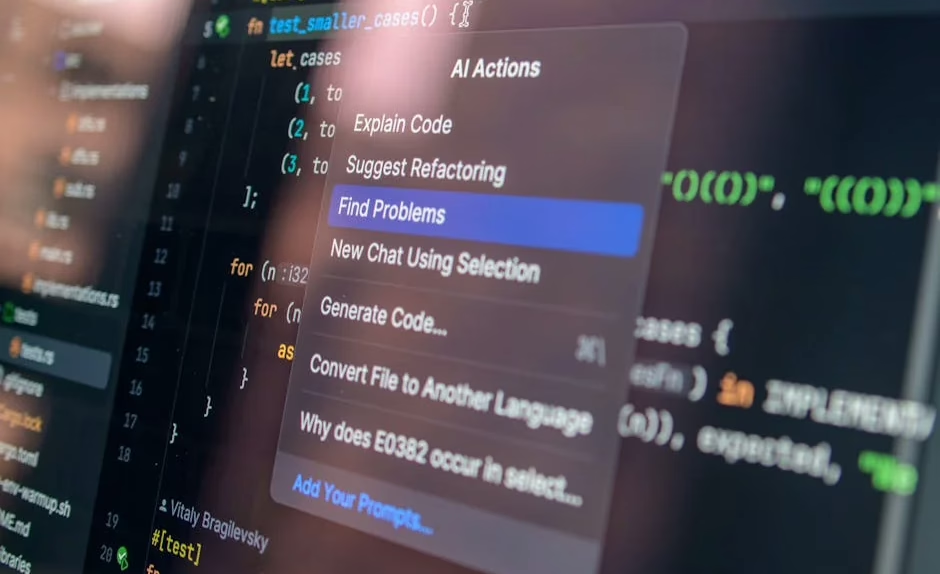

One of the practical problems with agentic workflows is visibility. When an agent makes dozens of tool calls and decisions autonomously, understanding what went wrong — and why — requires tracing through a chain of events that traditional debuggers weren’t built to handle.

Raindrop AI launched Workshop on May 14, 2026 to address exactly that gap. According to VentureBeat, Workshop is an open-source, MIT-licensed local daemon and UI that streams every token, tool call, and decision made by an AI agent to a local dashboard, typically hosted at `localhost:5899`. All telemetry is stored in a single SQLite `.db` file, keeping the memory footprint small.

Ben Hylak, Raindrop’s co-founder and CTO — previously an engineer at Apple and SpaceX — told VentureBeat via direct message that the local storage model was a deliberate privacy choice. Sending agent traces to external servers is a growing concern among developers working on proprietary codebases. Workshop keeps everything on-device.

Key capabilities include:

- Real-time token and tool-call streaming to a local dashboard

- Single `.db` file storage for all agent traces, queryable with standard SQL tooling

- Self-healing eval loop: coding agents like Claude Code can read traces, write evaluations against the codebase, and autonomously fix broken code

- Cross-platform support for macOS, Linux, and Windows

- One-line shell install with automatic PATH configuration for bash, zsh, and fish

- Build-from-source option via GitHub, using the Bun runtime
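Because the traces live in an ordinary SQLite file, they can in principle be inspected with Python's built-in `sqlite3` module. The sketch below is an assumption-heavy illustration: Workshop's actual table and column names are not given in this article, so the `events` table, its columns, and the sample rows are all hypothetical stand-ins built in memory.

```python
import sqlite3

# Hypothetical schema: Workshop's real table and column names are not
# documented in this article, so an in-memory stand-in is built here.
conn = sqlite3.connect(":memory:")
conn.execute(
    """
    CREATE TABLE events (
        id INTEGER PRIMARY KEY,
        run_id TEXT NOT NULL,
        kind TEXT NOT NULL,      -- 'token', 'tool_call', or 'decision'
        name TEXT,
        status TEXT NOT NULL     -- 'ok' or 'error'
    )
    """
)
conn.executemany(
    "INSERT INTO events (run_id, kind, name, status) VALUES (?, ?, ?, ?)",
    [
        ("run-1", "tool_call", "read_file", "ok"),
        ("run-1", "tool_call", "run_tests", "error"),
        ("run-1", "decision", "retry", "ok"),
        ("run-2", "tool_call", "run_tests", "error"),
    ],
)


def failed_tool_calls(db: sqlite3.Connection) -> list[tuple[str, int]]:
    """Count failed tool calls per tool name, most frequent first."""
    rows = db.execute(
        """
        SELECT name, COUNT(*) AS failures
        FROM events
        WHERE kind = 'tool_call' AND status = 'error'
        GROUP BY name
        ORDER BY failures DESC, name
        """
    )
    return rows.fetchall()


print(failed_tool_calls(conn))  # [('run_tests', 2)]
```

Against a real trace file, the connection string would be the path to the `.db` file and the query would need to match whatever schema the tool actually writes.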

The self-healing eval loop is the standout feature. Rather than requiring a developer to manually inspect a trace and write a fix, Workshop lets the agent itself read what went wrong and iterate. That shortens the feedback loop and cuts the manual overhead that makes agentic workflows feel fragile.
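The general shape of such a loop can be sketched in a few lines. This is not Workshop's implementation: `self_heal`, `run_evals`, `propose_fix`, and the round cap are hypothetical names and choices made for illustration, with plain callables standing in for the agent and the eval suite.

```python
from typing import Callable


def self_heal(
    run_evals: Callable[[], list[str]],
    propose_fix: Callable[[list[str]], None],
    max_rounds: int = 3,
) -> bool:
    """Run evals, hand failures to a fixer, repeat until green or out of rounds."""
    for _ in range(max_rounds):
        failures = run_evals()
        if not failures:
            return True
        propose_fix(failures)
    # One last check after the final fix attempt.
    return not run_evals()


# Toy stand-ins: a "codebase" with one bug that the fixer removes.
bugs = {"off_by_one"}
healed = self_heal(
    run_evals=lambda: sorted(bugs),
    propose_fix=lambda failures: bugs.difference_update(failures),
)
print(healed)  # True
```

The round cap matters in practice: without it, an agent that cannot fix a failure would retry indefinitely, so the loop returns `False` and hands control back to a human instead.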

CodeSpeak: Coding in Plain English

While Workshop addresses the operational side of agentic development, a separate experiment pushes the abstraction question further: what if the programming language itself were plain English?

Towards Data Science covered CodeSpeak, currently in alpha preview, which Mansurova tested by migrating a 10,000+ line project into an AI-native workflow. The premise is that programming languages have always moved toward greater human readability — from binary to assembly, assembly to FORTRAN, FORTRAN to Python — and that natural language is the logical next step.

CodeSpeak sits at the far end of that abstraction spectrum. Rather than writing Python or JavaScript with AI assistance, the developer writes intent in plain English and the system generates and executes the underlying code. The alpha experience, per Mansurova’s account, is uneven — capable enough to be genuinely useful on well-defined tasks, brittle enough on complex logic to remind you it’s still early software.

The 10K-line migration surfaced both the promise and the limits. Routine, well-scoped operations translated cleanly. Nuanced architectural decisions — the kind that require understanding context accumulated over months of development — still required significant human intervention to get right. CodeSpeak is less a finished tool than a proof of concept that the direction is viable.

WebAssembly and Browser-Native Dev Environments

A parallel shift in developer tooling is happening at the infrastructure level. Luciano Abriata, writing in Towards Data Science, documented building, testing, and deploying a WebAssembly application entirely inside a web browser — no local installation required — using GitHub Codespaces and an in-browser Visual Studio Code instance.

The workflow uses Emscripten to compile C code to WebAssembly, with GitHub Codespaces providing the compute and port forwarding handling the preview. The significance for AI coding tools is indirect but real: as development environments move into the browser, the barrier to integrating AI assistants drops. There’s no local setup to conflict with, no version mismatch to debug, and no permission model to navigate. The IDE, the runtime, and the AI assistant can all live in the same hosted environment.

Abriata’s tutorial covers GitHub Codespaces, WASM compilation, HTML/JavaScript integration, and port forwarding — a stack that’s increasingly relevant as teams look for reproducible, shareable development environments that AI tools can operate inside without friction.

What This Means

The May 2026 snapshot of AI coding tools points to a field that’s maturing in specific, observable ways. Vibe coding — the anything-goes, prompt-until-it-works approach — is giving way to structured workflows where specifications precede implementation and human oversight is built into the process rather than bolted on afterward.

Tools like Raindrop’s Workshop are filling a real gap: agentic workflows generate far more intermediate state than traditional development, and developers need purpose-built tooling to inspect, evaluate, and correct that state. The self-healing eval loop, in particular, represents a meaningful step toward agents that can participate in their own quality assurance rather than requiring constant human intervention.

CodeSpeak’s natural-language approach remains experimental, but the direction it points — toward ever-higher abstraction — is consistent with how programming has always evolved. The question isn’t whether natural-language coding will become viable, but how long the transition takes and what skills remain distinctively human in the process.

For developers, the practical takeaway is concrete: invest in specification skills, not just prompting skills. The developers who will get the most from agentic tools are the ones who can write clear, precise, testable specs — because that’s what separates agentic engineering from expensive autocomplete.

FAQ

What is the difference between vibe coding and spec-driven development?

Vibe coding involves improvising prompts to an LLM and accepting whatever code it produces, prioritizing speed over structure. Spec-driven development requires writing a detailed specification — covering architecture, data models, and acceptance criteria — before engaging an AI agent, with human oversight maintained throughout the build.

What does Raindrop’s Workshop tool do?

Workshop is an open-source, MIT-licensed local debugger for AI agents that streams every token, tool call, and decision to a dashboard at `localhost:5899`, storing all traces in a single SQLite `.db` file. Its self-healing eval loop allows coding agents to read their own traces, write evaluations, and fix broken code autonomously without sending data to external servers.

Is CodeSpeak ready for production use?

CodeSpeak is currently in alpha preview and is not production-ready. Testing on a 10,000+ line project showed that it handles well-defined, routine tasks cleanly but struggles with complex architectural decisions that depend on accumulated project context. Those decisions still need significant human intervention.

Sources

- Mixed Integer Goal Programming for Personalized Meal Optimization with User-Defined Serving Granularity – arXiv AI

- From Vibe Coding to Spec-Driven Development – Towards Data Science

- Developers can now debug and evaluate AI agents locally with Raindrop’s open source tool Workshop – VentureBeat

- Your First WebAssembly Program and Web App (Written, Tested, and Deployed Entirely in the Web Browser) – Towards Data Science

- I Let CodeSpeak Take Over My Repository – Towards Data Science