The way developers write software is changing faster than at any point since Python displaced C for scripting work. Across five distinct fronts — workflow philosophy, agent debugging, natural-language programming, browser-native development, and agentic code migration — new tools and methods released in May 2026 are redefining what a “coding assistant” actually means.

From Vibe Coding to Agentic Engineering

Andrej Karpathy coined the term “vibe coding” in February 2025 to describe the practice of prompting an LLM and accepting whatever it produced with minimal review. Just over a year later, Karpathy acknowledged that era is ending — replaced by what he calls “agentic engineering,” where developers orchestrate AI agents against detailed specifications rather than freestyle-prompting their way to a result.

Mariya Mansurova, writing for Towards Data Science, documented this shift in a 4.5-hour build of a working fitness app using LLM agents. Her account describes a workflow where the developer acts as an oversight layer — reviewing, steering, and correcting agents — rather than writing code directly. The distinction matters: vibe coding optimizes for speed of first draft; spec-driven development optimizes for correctness and maintainability of the final product.

The practical implication is that the skill set for AI-assisted development is becoming more engineering-like, not less. Writing a tight specification, decomposing a task into agent-sized chunks, and auditing agent output all require the same systematic thinking as traditional software design. The LLM handles syntax; the developer handles architecture and quality control.
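
To make "a tight specification" and "auditing agent output" concrete, here is a minimal sketch that treats a spec as a list of requirements, each with its own check. The `Requirement` and `Spec` classes, the `audit` function, and the fitness-app field names are all hypothetical illustrations, not taken from Mansurova's project.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Requirement:
    """One testable line of a specification (hypothetical structure)."""
    req_id: str
    description: str
    check: Callable[[dict], bool]  # returns True when the output satisfies it


@dataclass
class Spec:
    """A task scoped small enough to hand to a single agent run."""
    title: str
    requirements: list[Requirement] = field(default_factory=list)


def audit(spec: Spec, agent_output: dict) -> list[str]:
    """Return the IDs of the requirements the agent output fails to meet."""
    return [r.req_id for r in spec.requirements if not r.check(agent_output)]


# Illustrative spec for one feature of a fitness app; field names are made up.
workout_spec = Spec(
    title="Log a workout",
    requirements=[
        Requirement(
            "R1",
            "duration is a positive number of minutes",
            lambda out: isinstance(out.get("duration_min"), (int, float))
            and out["duration_min"] > 0,
        ),
        Requirement(
            "R2",
            "activity is one of the supported types",
            lambda out: out.get("activity") in {"run", "walk", "cycle"},
        ),
    ],
)

if __name__ == "__main__":
    draft = {"duration_min": 30, "activity": "swim"}
    print(audit(workout_spec, draft))  # prints ['R2']: "swim" is not supported
```

Because each requirement carries its own check, a reviewer can see which line of the spec an agent's draft violates instead of judging the output by impression.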

Raindrop Launches Open Source Agent Debugger

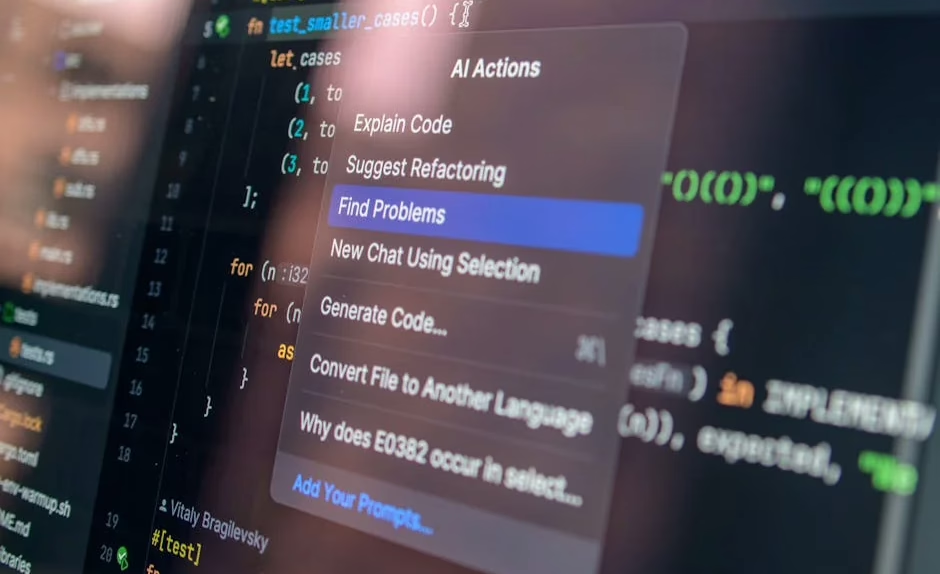

One of the clearest gaps in agentic development has been observability — specifically, the inability to see what an AI agent actually did during a run without shipping traces to an external server. Raindrop AI addressed this on May 14, 2026, with the release of Workshop, an open source, MIT-licensed local debugger built specifically for AI agents.

According to VentureBeat, Workshop runs as a local daemon and UI that streams every token, tool call, and decision to a dashboard at localhost:5899 in real time. All data is stored in a single SQLite `.db` file, keeping memory overhead low. Ben Hylak, Raindrop’s co-founder and CTO — previously an engineer at Apple and SpaceX — told VentureBeat via direct message that the single-file approach was a deliberate privacy choice, eliminating the need to route local traces through external servers.
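
The single-file storage idea can be sketched with Python's standard `sqlite3` module. The table layout, the column names, and the `open_trace_store`, `record_event`, and `tool_calls` helpers below are assumptions made for illustration. They are not Workshop's actual schema or API.

```python
import json
import sqlite3
import time


def open_trace_store(path: str) -> sqlite3.Connection:
    """Open (or create) a single-file trace database. The schema is hypothetical."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS events (
               id      INTEGER PRIMARY KEY AUTOINCREMENT,
               run_id  TEXT NOT NULL,
               kind    TEXT NOT NULL,   -- e.g. 'token', 'tool_call', 'decision'
               payload TEXT NOT NULL,   -- JSON-encoded details
               ts      REAL NOT NULL    -- Unix timestamp
           )"""
    )
    return conn


def record_event(conn: sqlite3.Connection, run_id: str, kind: str, payload: dict) -> None:
    """Append one agent event to the local store."""
    conn.execute(
        "INSERT INTO events (run_id, kind, payload, ts) VALUES (?, ?, ?, ?)",
        (run_id, kind, json.dumps(payload), time.time()),
    )
    conn.commit()


def tool_calls(conn: sqlite3.Connection, run_id: str) -> list[dict]:
    """Return the tool calls recorded for one run, in insertion order."""
    rows = conn.execute(
        "SELECT payload FROM events WHERE run_id = ? AND kind = 'tool_call' ORDER BY id",
        (run_id,),
    )
    return [json.loads(payload) for (payload,) in rows]


if __name__ == "__main__":
    conn = open_trace_store(":memory:")  # use a file path to persist traces on disk
    record_event(conn, "run-1", "token", {"text": "hi"})
    record_event(conn, "run-1", "tool_call", {"name": "read_file", "args": {"path": "a.py"}})
    print(tool_calls(conn, "run-1"))
```

Everything stays in one local file, so inspecting a run is an ordinary SQL query and no trace leaves the machine.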

Workshop’s standout capability is what Raindrop calls a self-healing eval loop: coding agents such as Claude Code can read their own traces, write evaluations against the codebase, and autonomously fix broken code. The tool is available for macOS, Linux, and Windows, installable via a single shell command that handles binary placement and PATH configuration for bash, zsh, and fish. Developers who prefer to build from source can do so via GitHub using the Bun runtime.
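
The general shape of an evaluate-then-repair loop can be sketched as follows. This is not Workshop's implementation: `repair_loop`, `add_is_correct`, and `stub_fix` are hypothetical names, and the stub stands in for whatever a real coding agent would do when asked to fix failing evaluations.

```python
from typing import Callable

Eval = Callable[[str], bool]  # takes source code, returns True when the eval passes


def repair_loop(
    code: str,
    evals: dict[str, Eval],
    propose_fix: Callable[[str, list[str]], str],
    max_rounds: int = 3,
) -> tuple[str, list[str]]:
    """Run the evals and request fixes until all pass or the rounds run out."""
    failing = [name for name, ev in evals.items() if not ev(code)]
    for _ in range(max_rounds):
        if not failing:
            break
        code = propose_fix(code, failing)  # in practice, a call to a coding agent
        failing = [name for name, ev in evals.items() if not ev(code)]
    return code, failing


def add_is_correct(source: str) -> bool:
    """Toy eval: execute the source and check that add(2, 3) returns 5."""
    namespace: dict = {}
    exec(source, namespace)
    return namespace["add"](2, 3) == 5


def stub_fix(source: str, failing: list[str]) -> str:
    """Stand-in for an agent: patches the one bug this demo knows about."""
    return source.replace("a - b", "a + b")


BROKEN = "def add(a, b):\n    return a - b\n"

if __name__ == "__main__":
    fixed, failing = repair_loop(BROKEN, {"add_is_correct": add_is_correct}, stub_fix)
    print(failing)  # prints [] once the stub has patched the bug
```

The `max_rounds` cap matters in practice: without it, an agent that cannot satisfy an evaluation would loop indefinitely.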

For teams running agents at scale, the real-time telemetry model eliminates the polling latency that plagues traditional log-based debugging — a meaningful improvement when an agent might make dozens of tool calls per task.

CodeSpeak Tests Natural-Language Programming

If agentic engineering represents one trajectory for AI-assisted coding, CodeSpeak represents a more radical bet: that programming languages themselves will eventually give way to plain English. Mansurova’s second article for Towards Data Science documents what happened when she migrated a project of more than 10,000 lines into a workflow built on CodeSpeak, which is currently available in alpha preview.

The historical framing she offers is useful context. Programming languages have grown progressively more abstract over seven decades — from punch cards to assembly to FORTRAN to Python — with each layer reducing the distance between human intent and machine execution. CodeSpeak attempts to collapse that distance entirely by treating natural language as the source code.

Her findings are mixed in instructive ways. The tool handles well-scoped, isolated tasks competently, but larger refactors across a 10K-line codebase expose the limits of natural-language ambiguity. Without a formal specification, the agent must infer intent from prose — and inference errors compound across a large codebase in ways that a type-checker or compiler would catch immediately in a conventional language.

The experiment is less a product review than a stress test of the underlying idea. At this stage, CodeSpeak appears most viable as a high-level orchestration layer rather than a line-for-line replacement for typed code.

Browser-Native Development with WebAssembly and GitHub Codespaces

A separate thread in the developer tooling story involves removing local installation entirely. Luciano Abriata, writing for Towards Data Science, published a tutorial demonstrating how to write, compile, and deploy a WebAssembly application using only a web browser — specifically GitHub Codespaces running an online instance of Visual Studio Code.

The workflow compiles C code to WebAssembly via Emscripten, with port forwarding handling the local preview. No local toolchain installation is required. Abriata’s motivation was practical: he needed WASM ports of scientific C libraries (Gemmi and FreeSASA) for a molecular structure analysis platform, and found that the compilation pipeline was accessible entirely in-browser once he understood the tooling.

For AI coding tools, the relevance is infrastructural. As coding agents increasingly run in cloud environments rather than local IDEs, the distinction between “local” and “remote” development becomes less meaningful. GitHub Codespaces, Replit, and similar platforms are already the default environment for many AI-assisted workflows — Abriata’s tutorial is a concrete demonstration of how far that model extends, reaching down to compiled-language development.

Spec-Driven Workflows in Practice: Key Patterns

Across these sources, several concrete workflow patterns emerge for developers adopting spec-driven or agentic development:

- Write specifications before prompting. Mansurova’s fitness app build succeeded because she defined requirements explicitly before engaging agents, not after.

- Use local observability tools. Workshop’s localhost model keeps sensitive code traces off external servers while still giving real-time visibility into each run, comparable to what cloud-based APM tools provide.

- Treat agents as junior engineers. The oversight model — review every significant agent output before merging — maps directly onto existing code review practices.

- Match tool to task scope. Natural-language tools like CodeSpeak handle isolated tasks well; larger refactors still benefit from typed languages and formal specifications.

- Leverage browser-native environments. For teams without consistent local setups, cloud IDEs with WASM support provide a reproducible baseline that AI agents can target reliably.

What This Means

The collective picture from May 2026 is that AI coding assistance is bifurcating. One branch — represented by Workshop, spec-driven workflows, and agentic engineering — is becoming more rigorous, not less. It borrows the structure of traditional software engineering and applies it to agent orchestration. The other branch — natural-language programming, vibe-adjacent tools — is still searching for the right scope of application.

For working developers, the practical takeaway is that the highest-value skill right now is not prompt fluency but specification quality. An agent given a precise, testable spec is far more likely to produce auditable, maintainable code. An agent given a vague prose description tends to produce a first draft that can require as much rework as writing from scratch.

Raindrop’s Workshop addresses a real gap in the agentic toolchain: local, private, real-time observability. Its MIT license means it can be embedded in commercial workflows without licensing friction. That combination of open source licensing, local-first design, and privacy preservation is likely to resonate with enterprise teams that have been hesitant to route proprietary code through external telemetry services.

The shift Karpathy describes — from vibe coding to agentic engineering — is not a retreat from AI assistance. It is a maturation of it.

FAQ

What is spec-driven development in AI coding?

Spec-driven development means writing a detailed specification of requirements before engaging an AI agent to generate code. According to Towards Data Science, this approach gives agents a precise target and allows developers to evaluate output against defined criteria rather than subjective impression.

What does Raindrop’s Workshop tool do?

Workshop is an open source, MIT-licensed local debugger for AI agents that streams every token, tool call, and decision to a dashboard at localhost:5899 in real time. According to VentureBeat, all data is stored in a single SQLite file, keeping traces private and off external servers.

Is vibe coding still useful for developers in 2026?

Andrej Karpathy, who coined the term, said in 2026 that the vibe coding era is ending for professional engineers. It remains useful for quick prototypes and isolated tasks, but production codebases increasingly require the oversight and specification discipline of agentic engineering.

Sources

- Mixed Integer Goal Programming for Personalized Meal Optimization with User-Defined Serving Granularity – arXiv AI

- From Vibe Coding to Spec-Driven Development – Towards Data Science

- Developers can now debug and evaluate AI agents locally with Raindrop’s open source tool Workshop – VentureBeat

- Your First WebAssembly Program and Web App (Written, Tested, and Deployed Entirely in the Web Browser) – Towards Data Science

- I Let CodeSpeak Take Over My Repository – Towards Data Science