The way developers write software is shifting faster than at any point since the jump from assembly to compiled languages. From spec-driven agentic workflows to local agent debuggers and natural-language programming experiments, a cluster of new tools and practices released in May 2026 signals that AI-assisted development has matured well past the autocomplete era.

Vibe Coding Is Already Over

Andrej Karpathy coined the term “vibe coding” in February 2025 for the practice of describing intent to an LLM and accepting whatever code came back. By May 2026, he was already walking it back. In a post on X, Karpathy wrote that the vibe coding era is ending and that developers are entering the age of agentic engineering: orchestrating agents against detailed specifications with human oversight.

Mariya Mansurova, writing for Towards Data Science, documented this shift firsthand in a piece titled “From Vibe Coding to Spec-Driven Development.” She described building a working fitness app in 4.5 hours using LLM agents, but emphasized the discipline required: writing detailed specifications upfront, reviewing every agent decision, and treating the process as engineering rather than improvisation.

The preferred term among practitioners is now “agentic engineering”: “agentic” because, 99% of the time, developers orchestrate agents rather than write code directly, and “engineering” to signal that craft, expertise, and rigor still apply. The goal, as Mansurova framed it, is to capture the productivity gains of agent-driven development without sacrificing software quality.

Raindrop Launches Workshop for Local Agent Debugging

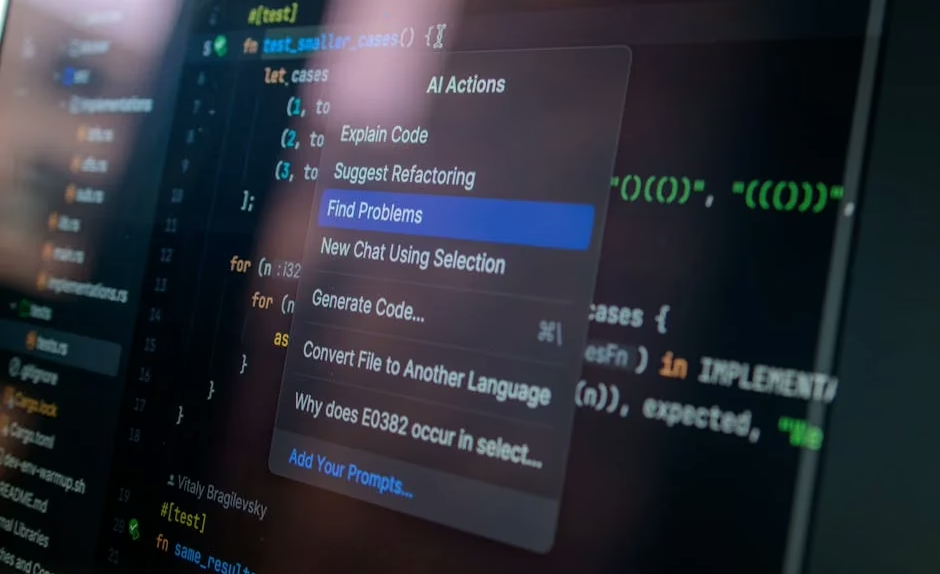

One of the concrete infrastructure gaps in agentic development — the inability to see what an AI agent is actually doing — got a direct answer on May 14, 2026. Observability startup Raindrop AI released Workshop, an open source, MIT-licensed local debugger and evaluation tool built specifically for AI agents.

According to VentureBeat, Workshop runs as a local daemon and UI that streams every token, tool call, and decision in real time to a dashboard hosted at `localhost:5899`. All telemetry is stored in a single SQLite `.db` file, which keeps memory usage low and keeps data off external servers. That addresses a privacy concern that has grown as more teams run sensitive codebases through cloud-based agent tooling.
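To make the single-file storage idea concrete, here is a minimal Python sketch of appending agent events to one SQLite database and reading them back. The table name, columns, event kinds, and function names are all assumptions made for illustration; nothing here reflects Workshop's actual schema or API.

```python
import json
import sqlite3
import time


def open_trace_db(path=":memory:"):
    """Open (or create) a single-file trace store. The schema is hypothetical."""
    conn = sqlite3.connect(path)
    conn.execute(
        """
        CREATE TABLE IF NOT EXISTS events (
            id      INTEGER PRIMARY KEY AUTOINCREMENT,
            run_id  TEXT NOT NULL,
            ts      REAL NOT NULL,
            kind    TEXT NOT NULL,   -- e.g. 'token', 'tool_call', 'decision'
            payload TEXT NOT NULL    -- JSON-encoded event body
        )
        """
    )
    return conn


def record_event(conn, run_id, kind, payload):
    """Append one event and commit so a separate reader sees it promptly."""
    conn.execute(
        "INSERT INTO events (run_id, ts, kind, payload) VALUES (?, ?, ?, ?)",
        (run_id, time.time(), kind, json.dumps(payload)),
    )
    conn.commit()


def events_for_run(conn, run_id, kind=None):
    """Return decoded (kind, payload) pairs for one run, in insertion order."""
    sql = "SELECT kind, payload FROM events WHERE run_id = ?"
    params = [run_id]
    if kind is not None:
        sql += " AND kind = ?"
        params.append(kind)
    sql += " ORDER BY id"
    return [(k, json.loads(p)) for k, p in conn.execute(sql, params)]
```

Passing a file path instead of `:memory:` would persist the trace to disk, which is the property the article highlights: one local file, queryable with ordinary SQL, with nothing leaving the machine.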

Ben Hylak, Raindrop’s co-founder and CTO and a former Apple and SpaceX engineer, told VentureBeat via direct message that the lightweight storage format was a deliberate design choice. Workshop is available for macOS, Linux, and Windows, installable via a single shell command that handles binary placement and PATH configuration for bash, zsh, and fish shells. Developers who prefer to build from source can do so via the GitHub repository using the Bun runtime.

The Self-Healing Eval Loop

Workshop’s headline feature is what Raindrop calls the “self-healing eval loop.” When a coding agent like Claude Code encounters a failure, Workshop captures the full trace of what went wrong. The agent can then read those traces, write evaluations against the codebase, and autonomously fix broken code — closing a feedback loop that previously required significant manual developer intervention.
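The general shape of such a loop can be sketched in a few lines of Python. This is an illustration of the pattern only, not Raindrop's implementation: `collect_failures`, `eval_loop`, and the `propose_fix` callback (a stand-in for the coding agent) are hypothetical names, and the "codebase" is reduced to a single function.

```python
import traceback


def collect_failures(candidate, evals):
    """Run every eval against the candidate; return one trace string per failure."""
    failures = []
    for check in evals:
        try:
            check(candidate)
        except Exception:
            failures.append(traceback.format_exc())
    return failures


def eval_loop(candidate, evals, propose_fix, max_rounds=3):
    """Alternate between evaluating and fixing until clean or out of rounds.

    Returns the final candidate and the failure count seen after each evaluation.
    """
    failures = collect_failures(candidate, evals)
    history = [len(failures)]
    rounds = 0
    while failures and rounds < max_rounds:
        candidate = propose_fix(candidate, failures)  # stand-in for the agent
        failures = collect_failures(candidate, evals)
        history.append(len(failures))
        rounds += 1
    return candidate, history


if __name__ == "__main__":
    def buggy_add(a, b):
        return a - b

    def check_add(fn):
        assert fn(2, 3) == 5, "add(2, 3) should be 5"

    def stub_agent(fn, traces):
        # A real agent would read the traces; this stub returns a fixed repair.
        return lambda a, b: a + b

    fixed, history = eval_loop(buggy_add, [check_add], stub_agent)
    print(history)
```

The `max_rounds` cap is a deliberate choice in this sketch: without a bound, an agent that keeps proposing bad fixes would loop indefinitely, so a human still needs to step in when the history never reaches zero failures.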

This addresses a real friction point: agentic coding sessions can produce subtle failures that are hard to reproduce without a full record of every decision the agent made. Workshop provides that record locally, without requiring a SaaS subscription or sending production code to a third-party observability platform.

CodeSpeak Tests Natural-Language Programming

While most AI coding tools still operate within conventional programming languages, a more experimental approach is being tested in production. In a Towards Data Science piece published May 14, 2026, Mansurova described migrating a 10,000+ line project into CodeSpeak, a tool currently in alpha preview that lets developers write instructions in plain English rather than a formal programming language.

The experiment was framed against the broader arc of programming language history — from punch cards and assembly mnemonics through FORTRAN, C, and Python — with CodeSpeak representing a potential next step toward code written entirely in natural language. Mansurova’s account was candid about limitations: the alpha is rough, and managing a large existing codebase through a natural-language interface introduced its own category of ambiguity and error.

CodeSpeak is not a replacement for structured development today, but it represents the direction several teams are exploring: collapsing the gap between specification and implementation to the point where the two are the same document.

WebAssembly and Browser-Native Development

A separate but related thread in the May 2026 developer tooling conversation involves running the entire development environment inside the browser itself. Luciano Abriata, writing for Towards Data Science, published a hands-on guide to writing, testing, and deploying a WebAssembly program using GitHub Codespaces and the Emscripten compiler — with no local software installation required.

The workflow Abriata described compiles C code to WASM entirely within an online Visual Studio Code instance, using port forwarding to preview the running application. The tutorial covers GitHub, Codespaces, WebAssembly, C, HTML, and JavaScript as an integrated stack — relevant to AI developers who increasingly need to ship client-side inference or data-processing tools that run without a backend.

Browser-native development removes a class of environment configuration problems that have historically consumed significant developer time, and it pairs naturally with AI coding assistants that can generate boilerplate across unfamiliar language boundaries.

Optimization Research Reaches the Meal-Planning Edge Case

On the more academic end of the spectrum, a May 2026 arXiv preprint from researchers working on diet optimization illustrates how integer programming and goal programming techniques are being applied to personalized AI applications. The paper proposes Mixed Integer Goal Programming (MIGP) for meal planning, solving a longstanding problem where continuous-variable optimization produces impractical outputs like 1.7 eggs or 0.37 bananas.

In an evaluation across 810 benchmark instances using 30 USDA foods, the system found strictly better solutions than goal programming with post-hoc rounding in 66% of cases, while maintaining 100% feasibility. Solve times stayed under 100 milliseconds for typical meal sizes using the open-source HiGHS solver. The implementation is available as an open-source Python module.
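A toy example may help show why optimizing over whole servings directly can beat rounding a fractional plan afterwards. The sketch below is not the paper's method or code: it swaps the HiGHS mixed-integer solver for exhaustive search, uses a tenth-of-a-serving grid as a stand-in for the continuous relaxation, and relies on made-up per-serving nutrient numbers and targets rather than USDA data.

```python
from itertools import product

# Made-up round numbers per serving for illustration, NOT USDA values.
FOODS = {
    "egg":    {"protein_g": 6.0, "kcal": 80.0},
    "banana": {"protein_g": 1.0, "kcal": 105.0},
}
# Hypothetical nutrient goals for one meal.
TARGETS = {"protein_g": 9.0, "kcal": 230.0}


def total_deviation(servings, foods=FOODS, targets=TARGETS):
    """Goal-programming style objective: summed relative miss per nutrient."""
    dev = 0.0
    for nutrient, target in targets.items():
        achieved = sum(qty * foods[name][nutrient] for name, qty in servings.items())
        dev += abs(achieved - target) / target
    return dev


def best_plan(levels, foods=FOODS, targets=TARGETS):
    """Exhaustively try every combination of the allowed serving levels."""
    names = list(foods)
    best = None
    for combo in product(levels, repeat=len(names)):
        plan = dict(zip(names, combo))
        dev = total_deviation(plan, foods, targets)
        if best is None or dev < best[1]:
            best = (plan, dev)
    return best


def compare(max_servings=5):
    """Return (integer plan, its deviation, rounded plan, its deviation)."""
    # Whole servings only: the integer-constrained problem.
    int_plan, int_dev = best_plan(range(max_servings + 1))
    # Tenth-of-a-serving grid as a stand-in for the continuous relaxation.
    frac_levels = [t / 10 for t in range(max_servings * 10 + 1)]
    frac_plan, _ = best_plan(frac_levels)
    # Post-hoc rounding of the fractional plan to whole servings.
    rounded = {name: round(qty) for name, qty in frac_plan.items()}
    return int_plan, int_dev, rounded, total_deviation(rounded)


if __name__ == "__main__":
    int_plan, int_dev, rounded, rounded_dev = compare()
    print("integer search:", int_plan, round(int_dev, 3))
    print("rounded plan:  ", rounded, round(rounded_dev, 3))
```

With these particular numbers the fractional optimum sits at 1.3 eggs and 1.2 bananas, which rounds to one of each, while the integer search prefers one egg and two bananas and lands closer to the targets. Exhaustive search only works at this toy scale; a real formulation with dozens of foods would need a proper mixed-integer solver.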

While not a coding tool in the traditional sense, the work demonstrates how optimization methods are being embedded in AI-powered applications — and how open-source tooling is enabling researchers to ship interactive, solver-backed tools alongside academic publications.

What This Means

The May 2026 snapshot of AI developer tooling shows an ecosystem that has moved past the hype phase and into infrastructure-building. The shift from vibe coding to spec-driven, agent-orchestrated development is not a marketing reframe — it reflects real changes in how professional developers are structuring their workflows, with more upfront specification, more agent oversight, and more tooling to inspect what agents actually did.

Raindrop’s Workshop is a direct response to the observability gap that agentic development created. As coding agents take on larger, more autonomous tasks, developers need the equivalent of a debugger — not just logs, but a structured, queryable record of every decision. The local-first, privacy-preserving design suggests the market has heard the concern about sending proprietary code to external services.

CodeSpeak and natural-language programming remain early experiments, but they are being tested against real codebases, not toy examples. The 10,000+ line migration Mansurova documented is the kind of stress test that will determine whether natural-language development is viable at production scale.

For developers evaluating where to invest time in 2026, the clearest signal is this: the tools that are gaining traction are the ones that combine agent capability with developer control — not ones that remove the developer from the loop entirely.

FAQ

What is spec-driven development in AI coding?

Spec-driven development is an approach where developers write detailed specifications before engaging an LLM or coding agent to implement code. Unlike vibe coding — where a developer describes intent loosely and accepts the output — spec-driven development treats the specification as a contract that the agent is held to, with human review at each stage.

What does Raindrop’s Workshop tool actually do?

Workshop is an open source local debugger for AI agents that streams every token, tool call, and agent decision to a dashboard at `localhost:5899` in real time. All data is stored in a single SQLite file on the developer’s machine, avoiding the need to send agent traces to external servers. It also supports a self-healing eval loop where agents can read their own failure traces and attempt autonomous fixes.

Is CodeSpeak a replacement for Python or JavaScript?

Not currently. CodeSpeak is in alpha preview and allows developers to write program instructions in plain English rather than a formal language, but it is experimental. Mansurova’s test migrating a 10,000+ line project revealed real limitations in handling large, existing codebases through a natural-language interface at this stage of development.

Sources

- Mixed Integer Goal Programming for Personalized Meal Optimization with User-Defined Serving Granularity – arXiv AI

- From Vibe Coding to Spec-Driven Development – Towards Data Science

- Developers can now debug and evaluate AI agents locally with Raindrop’s open source tool Workshop – VentureBeat

- Your First WebAssembly Program and Web App (Written, Tested, and Deployed Entirely in the Web Browser) – Towards Data Science

- I Let CodeSpeak Take Over My Repository – Towards Data Science