Chain-of-thought reasoning has become the dominant technique for improving AI performance on math, logic, and multi-step problem-solving — but new research published in May 2026 shows that longer reasoning trajectories introduce a systematic bias that most evaluation pipelines ignore. At the same time, recursive reasoning architectures are redefining how models handle complex, long-context tasks, and foundational engineering concepts like tokenization and attention remain the substrate everything else runs on.

What Chain-of-Thought Reasoning Actually Does

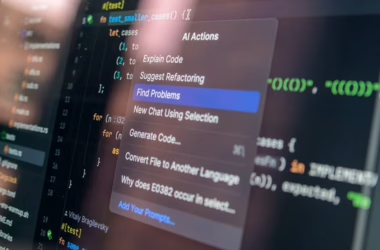

Chain-of-thought (CoT) prompting instructs a model to work through a problem step by step before producing a final answer. The intuition is straightforward: by externalizing intermediate reasoning, models avoid the kind of shallow pattern-matching that produces confident wrong answers. Reasoning-tuned models like DeepSeek-R1 take this further, training the model to generate extended internal reasoning trajectories before committing to a response.

According to TechCrunch’s AI glossary, these systems sit in a broader ecosystem of techniques — including reinforcement learning from human feedback (RLHF) and retrieval-augmented generation (RAG) — that collectively push models toward more reliable outputs. The goal across all of them is the same: reduce the gap between what a model produces and what a human expert would produce.

On multiple-choice reasoning benchmarks like MMLU, ARC-Challenge, and GPQA, CoT has shown measurable gains. But benchmark performance and real-world robustness are not the same thing, and a May 2026 paper on arXiv identifies a structural flaw in how reasoning models behave as their reasoning trajectories grow longer.

Longer Reasoning Trajectories Amplify Position Bias

A paper published on arXiv by researchers testing thirteen reasoning-mode configurations found that, within any reasoning-capable model, position bias in multiple-choice QA scales with the length of the reasoning trajectory. Twelve of the thirteen configurations showed a positive partial correlation between trajectory length and Position Bias Score (PBS) after controlling for accuracy, with correlations ranging from 0.11 to 0.41 (all p < 0.05).
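
To make "partial correlation after controlling for accuracy" concrete, here is a minimal sketch using the standard first-order partial correlation formula. The function names are illustrative, and nothing here is taken from the paper's code; it only shows the kind of statistic being reported.

```python
import math

def pearson(xs, ys):
    """Pearson correlation of two equal-length numeric sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / math.sqrt(vx * vy)

def partial_corr(x, y, z):
    """Correlation between x and y with the linear effect of z removed.

    In the article's setting, x would be trajectory length, y a per-item
    bias measure, and z accuracy (an assumed mapping, for illustration).
    """
    r_xy, r_xz, r_yz = pearson(x, y), pearson(x, z), pearson(y, z)
    return (r_xy - r_xz * r_yz) / math.sqrt((1 - r_xz ** 2) * (1 - r_yz ** 2))
```

When the control variable is uncorrelated with both inputs, `partial_corr` reduces to the plain Pearson correlation, which is a quick sanity check on any implementation.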

Position bias means the model disproportionately favors answers placed in certain positions — option A, or option D — regardless of correctness. The researchers found this effect is not static: it grows as the reasoning chain gets longer. All twelve open-weight reasoning-mode configurations showed monotonically increasing PBS across length quartiles.
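
The article does not reproduce the paper's exact PBS formula, so the sketch below uses a stand-in measure: the total variation distance between where the model's answers land and where the correct answers actually sit. The name `position_skew` and this definition are assumptions made for illustration, not the paper's metric.

```python
from collections import Counter

def position_skew(chosen, gold, n_options=4):
    """Stand-in position-bias measure (NOT the paper's PBS).

    chosen: answer positions the model picked, e.g. 0 for option A
    gold:   positions of the correct answers for the same items
    Returns a value in [0, 1]; 0 means the model's picks are spread
    across positions exactly as the correct answers are.
    """
    if not chosen or len(chosen) != len(gold):
        raise ValueError("chosen and gold must be non-empty and equal length")
    n = len(chosen)
    c, g = Counter(chosen), Counter(gold)
    return 0.5 * sum(abs(c[i] / n - g[i] / n) for i in range(n_options))
```

A model that always answers option A on a balanced four-option set scores 0.75 under this measure, while a model whose picks match the gold distribution scores 0.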

A truncation intervention provided causal evidence. When continuations were resumed from later points in the trajectory, models shifted toward position-preferred options at increasing rates — from 16% to 32% for R1-Qwen-7B across absolute-position buckets. The implication is that longer thinking doesn’t just fail to eliminate bias; it actively accumulates it.
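
A truncation probe of this kind can be sketched as follows. Everything here is hypothetical: `continue_fn` stands in for whatever call resumes generation from a partial trajectory and returns an answer position, and the stub model exists only so the sketch runs. The paper's actual procedure, including how it buckets cut points, may differ.

```python
def shift_rate_by_cut(trajectories, continue_fn, preferred,
                      fractions=(0.25, 0.5, 0.75)):
    """For each cut fraction, resume from the truncated trajectory and
    report how often the answer lands on the position-preferred option."""
    rates = {}
    for frac in fractions:
        hits = 0
        for steps in trajectories:
            cut = max(1, int(len(steps) * frac))  # keep at least one step
            if continue_fn(steps[:cut]) == preferred:
                hits += 1
        rates[frac] = hits / len(trajectories)
    return rates

def stub_model(prefix):
    """Toy stand-in for a real model: drifts to option 0 on long prefixes."""
    return 0 if len(prefix) >= 3 else 2

# Two fake trajectories of 4 and 8 reasoning steps.
demo = [["step"] * 4, ["step"] * 8]
rates = shift_rate_by_cut(demo, stub_model, preferred=0)
```

With a real model, a rate that rises across later cut points would be the pattern the paper describes.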

At 671B parameters, DeepSeek-R1’s aggregate PBS collapsed to 0.019, but the length effect still appeared in the longest quartile (PBS = 0.071). The researchers concluded that accuracy gates the expression of length-driven bias rather than eliminating the underlying mechanism: large models are not immune, and smaller, less accurate models are more exposed.

Direct-Answer Bias vs. Length-Accumulated Bias

The paper draws a distinction between two separate phenomena. Direct-answer position bias — where a model without CoT favors certain answer positions — is strong in some models (Llama-Instruct-direct) and weak in others (Qwen-Instruct-direct), and is uncorrelated with trajectory length. CoT reasoning doesn’t eliminate this baseline bias; it replaces it with length-accumulated bias. The mechanism changes, but the problem persists.

The researchers propose a diagnostic toolkit — PBS, commitment change point, effective switching, and truncation probes — for auditing position bias in reasoning model evaluation pipelines.

Recursive Reasoning: A Different Approach to Multi-Step Problems

While CoT extends a single generation pass with intermediate steps, Recursive Language Models (RLMs) take a structurally different approach. According to a deep dive published in Towards Data Science, RLMs pass context by reference rather than replicating it at each step, a distinction that matters significantly for long-context tasks.

The author, Avishek Biswas, describes spending a month implementing RLMs and running benchmarks, and a tutorial video accompanies the written analysis. The core claim is that RLMs are currently winning long-context benchmarks because they avoid the context-bloat problem that affects ReAct, CodeAct, and vanilla subagent designs.

In a simple illustrative experiment, an RLM was asked to generate 50 fruit names and count the letter R in each, returning a dictionary. A more complex variant required a nested dictionary across three categories — fruits, countries, animals — each with 50 entries. The RLM handled both without the context degradation that flattens competing approaches on longer tasks.

The distinction from existing agentic designs comes down to one missing piece: context-by-reference rather than context-by-replication. Each recursive call doesn’t need to carry the full prior context in its input — it references a shared state, which scales more cleanly.
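
The context-by-reference idea can be illustrated with a toy sketch. A plain recursive function stands in for a model sub-call, and the `ContextStore` class and its methods are invented for this example; they are not the API of any RLM implementation. The point is only that each call receives a handle and offsets, never the text itself.

```python
class ContextStore:
    """Shared state that recursive calls address by handle."""

    def __init__(self):
        self._items = {}

    def put(self, text):
        handle = f"ctx{len(self._items)}"
        self._items[handle] = text
        return handle

    def length(self, handle):
        return len(self._items[handle])

    def read(self, handle, start, end):
        return self._items[handle][start:end]

def count_char(store, handle, start, end, char, chunk=1000):
    """Count `char` in store[handle][start:end] by recursive splitting.

    Arguments are a handle plus two integers, so the size of each call's
    input stays constant no matter how large the stored context is.
    """
    if end - start <= chunk:
        return store.read(handle, start, end).lower().count(char)
    mid = (start + end) // 2
    return (count_char(store, handle, start, mid, char, chunk)
            + count_char(store, handle, mid, end, char, chunk))

store = ContextStore()
doc = store.put("strawberry " * 500)
total_r = count_char(store, doc, 0, store.length(doc), "r")
```

In a context-by-replication design, each sub-call would instead receive its slice of the text (or the whole text) in its input, which is where the bloat comes from.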

Formalizing When Recursive Reasoning Should Stop

A separate arXiv paper addresses a design gap that both CoT and recursive systems share: neither has a principled way to decide when to stop iterating. The paper proposes representing the reasoning state as an epistemic state graph — encoding extracted claims, evidential relations, open questions, and confidence weights — and introduces the concept of the order-gap.

The order-gap measures the distance between the states reached by expand-then-consolidate versus consolidate-then-expand. A small order-gap suggests the two orderings agree and further iteration is unlikely to improve the result. The paper provides a necessary and sufficient condition for the linearized order-gap to be non-degenerate near the fixed point, identifying when the criterion is informative rather than algebraically vacuous.
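
As a rough intuition for the order-gap as a stopping signal, consider the toy sketch below. The state is a flat list of confidence values rather than an epistemic state graph, and the `expand` and `consolidate` operators are invented for illustration; none of this reproduces the paper's operators or its linearized condition. Note that the consolidate step is deliberately nonlinear: with two linear operators the gap can be the same constant at every state, which is the uninformative case the paper warns about.

```python
import math

def expand(state, evidence, rate=0.5):
    """Toy 'expand': move each confidence toward the new evidence."""
    return [s + rate * (e - s) for s, e in zip(state, evidence)]

def consolidate(state):
    """Toy 'consolidate': sharpen each confidence toward 0 or 1."""
    return [p * p / (p * p + (1 - p) * (1 - p)) for p in state]

def order_gap(state, evidence):
    """Distance between expand-then-consolidate and consolidate-then-expand."""
    a = consolidate(expand(state, evidence))
    b = expand(consolidate(state), evidence)
    return math.dist(a, b)

def iterate_until_stable(state, evidence, tol=1e-3, max_steps=50):
    """Stop once the two orderings agree to within `tol`."""
    for step in range(max_steps):
        if order_gap(state, evidence) < tol:
            return state, step
        state = consolidate(expand(state, evidence))
    return state, max_steps

start = [0.5, 0.5, 0.5]
evidence = [1.0, 0.0, 1.0]
final_state, steps = iterate_until_stable(start, evidence)
```

In this toy the gap shrinks as the state approaches the point where both orderings agree, so the loop halts after a few steps instead of running to `max_steps`.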

The framework applies to tree-of-thought reasoning, theorem proving, agent loops, and continual learning — essentially any system that alternates between gathering evidence and refining an accumulated understanding. The authors are explicit that this is a local condition, not a global convergence guarantee.

Engineering Foundations: What Reasoning Models Are Built On

Beneath the reasoning architectures sit the same building blocks that all LLMs depend on. A comprehensive guide published in Towards Data Science by Aliaksei Mikhailiuk maps the full stack — from tokenization and attention through fine-tuning, inference optimization, and evaluation — with particular relevance for engineers moving into LLM work from adjacent fields.

For reasoning models specifically, a few components are especially load-bearing:

- Tokenization: Reasoning trajectories can be very long. Tokenization choices affect both the cost of inference and the model’s ability to represent mathematical notation and symbolic logic accurately.

- Attention mechanisms: Multi-step reasoning depends on the model maintaining coherent reference to earlier steps. Attention window size and efficiency directly constrain how far back a reasoning chain can reach.

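- Reinforcement learning: Reasoning-tuned models like DeepSeek-R1 use RL-based training to reward correct final answers, which shapes the character of the reasoning trajectories the model learns to produce. This is related to, but distinct from, reinforcement learning from human feedback (RLHF), where the reward signal comes from human preference judgments.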

- Evaluation: The position bias findings above are partly an evaluation problem — standard MCQ benchmarks don’t control for answer ordering, which contaminates results from reasoning models specifically.

Mikhailiuk’s guide also covers mixture-of-experts (MoE) architectures, which are relevant because several frontier reasoning models — including the 671B DeepSeek-R1 — use sparse MoE to activate only a subset of parameters per token, making large-scale reasoning tractable.
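
To show what "activating only a subset of parameters per token" means in practice, here is a minimal sketch of top-k expert routing, one common sparse MoE scheme. It is an illustration under simplifying assumptions, not DeepSeek-R1's actual router: experts are plain functions on a scalar, and the gate logits are passed in directly rather than computed from the token by a learned layer.

```python
import math

def top_k_route(gate_logits, k=2):
    """Pick the k highest-scoring experts and softmax over just those."""
    top = sorted(range(len(gate_logits)),
                 key=lambda i: gate_logits[i], reverse=True)[:k]
    peak = max(gate_logits[i] for i in top)  # subtract for numerical stability
    exps = {i: math.exp(gate_logits[i] - peak) for i in top}
    total = sum(exps.values())
    return {i: v / total for i, v in exps.items()}

def moe_forward(x, experts, gate_logits, k=2):
    """Run only the selected experts and mix their outputs by gate weight."""
    weights = top_k_route(gate_logits, k)
    return sum(w * experts[i](x) for i, w in weights.items())

# Four toy experts; only two of them are evaluated for this input.
experts = [lambda x: x, lambda x: 2 * x, lambda x: -x, lambda x: 4 * x]
out = moe_forward(3.0, experts, gate_logits=[0.1, 2.0, -1.0, 2.0], k=2)
```

The unselected experts are never called, which is why total parameter count can grow without a matching rise in per-token compute.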

What This Means

The May 2026 research on position bias directly challenges a common assumption: that longer reasoning is better reasoning. That does not hold unconditionally. For models below frontier scale, extended CoT trajectories systematically drift toward position-preferred answers, a bias that standard benchmarks don’t catch because they rarely control for answer ordering.

This has practical consequences. Any evaluation pipeline using multiple-choice questions to assess reasoning model performance should be treated as suspect unless it randomizes answer positions across runs and computes PBS alongside accuracy. The diagnostic tools the arXiv paper proposes are not exotic — they’re straightforward audits that should be standard practice.
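
Randomizing answer positions is simple to sketch. In the example below, `answer_fn` is a hypothetical callable that takes the list of options as presented and returns the position it picks; the rest is bookkeeping to keep track of where the correct answer ends up after each shuffle. This is a minimal illustration of the audit, not the paper's tooling.

```python
import random

def shuffle_options(options, gold_index, rng):
    """Return (shuffled_options, new_gold_index) for one presentation."""
    order = list(range(len(options)))
    rng.shuffle(order)
    shuffled = [options[i] for i in order]
    return shuffled, order.index(gold_index)

def accuracy_by_position(items, answer_fn, runs=4, seed=0):
    """Accuracy broken down by where the correct answer was placed.

    items: list of (options, gold_index) pairs
    A position-insensitive model should score about the same at every slot.
    """
    rng = random.Random(seed)
    correct, total = {}, {}
    for _ in range(runs):
        for options, gold in items:
            shuffled, new_gold = shuffle_options(options, gold, rng)
            total[new_gold] = total.get(new_gold, 0) + 1
            if answer_fn(shuffled) == new_gold:
                correct[new_gold] = correct.get(new_gold, 0) + 1
    return {pos: correct.get(pos, 0) / n for pos, n in sorted(total.items())}
```

A model that always picks the first option would show perfect accuracy when the correct answer sits in slot 0 and zero everywhere else, a pattern that a single fixed ordering would hide.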

The RLM and epistemic-state-graph work points in a complementary direction: reasoning systems need better internal architecture, not just longer chains. Knowing when to stop, and how to pass state efficiently, matters as much as the reasoning steps themselves. The field is moving from “think longer” as a default toward more structured approaches that treat the reasoning process itself as an engineering problem with failure modes worth measuring.

For engineers building on top of reasoning models today, the practical takeaway is to treat CoT outputs as probabilistic rather than authoritative — especially on tasks with discrete answer choices — and to audit for position sensitivity before deploying in evaluation-heavy workflows.

FAQ

What is chain-of-thought reasoning in AI?

Chain-of-thought (CoT) reasoning is a technique where an AI model works through a problem step by step before producing a final answer, rather than answering directly. It was introduced to reduce errors on multi-step math, logic, and reasoning tasks by making intermediate steps explicit. Reasoning-tuned models like DeepSeek-R1 extend this by training the model to generate extended internal reasoning trajectories as part of their core behavior.

What is position bias in reasoning models?

Position bias is the tendency of a model to favor answers placed in certain positions — such as option A or option D — in multiple-choice questions, regardless of which answer is actually correct. A May 2026 arXiv study found that in reasoning-capable models, this bias grows with the length of the reasoning trajectory, meaning longer chains of thought make the problem worse rather than better in models below frontier scale.

How do recursive language models differ from standard chain-of-thought?

Standard chain-of-thought extends a single generation pass with intermediate reasoning steps, which means the full prior context must be carried through each step. Recursive Language Models (RLMs) pass context by reference rather than replicating it, which reduces context bloat on long tasks and allows the model to handle more complex, nested problems without degradation. According to analysis published in Towards Data Science in May 2026, this architectural difference is why RLMs are currently outperforming competing designs on long-context benchmarks.

Sources

- The Must-Know Topics for an LLM Engineer – Towards Data Science

- More Thinking, More Bias: Length-Driven Position Bias in Reasoning Models – arXiv AI

- So you’ve heard these AI terms and nodded along; let’s fix that – TechCrunch

- Recursive Language Models: An All-in-One Deep Dive – Towards Data Science

- State Representation and Termination for Recursive Reasoning Systems – arXiv AI