The European Commission announced plans in May 2026 to regulate “addictive design” features on platforms like TikTok and Instagram, with Commission President Ursula von der Leyen confirming that new legislation is expected before the end of the year. The move targets algorithmic recommendation systems and interface patterns that EU officials say are deliberately engineered to maximize children’s screen time. It represents the bloc’s most direct intervention into platform product design since the Digital Services Act took effect.

What the EU Is Targeting

According to CNBC, the Commission’s focus is specifically on “addictive design” — a category that includes infinite scroll, autoplay video, push notifications calibrated to re-engage lapsed users, and engagement-based content ranking that prioritizes time-on-app over user wellbeing. TikTok and Instagram are the named platforms, though any service with significant youth audiences would fall under the proposed rules.

Von der Leyen’s statement signals that the EU intends to move from general content moderation obligations, already addressed under the DSA, toward structural product requirements. That is a meaningful escalation: regulators would require platforms not only to remove harmful content after the fact but also to redesign the mechanics that surface it.

The EU is not acting in isolation. Governments in the UK, Australia, and several US states have introduced or passed legislation restricting minors’ access to social media or mandating age verification. The EU proposal, if enacted, would be among the most technically specific, targeting the design layer rather than access controls.

Forced Arbitration: A Parallel Consumer Rights Fight

While the EU moves on platform design, a separate legal debate is intensifying in the United States over how companies use terms of service to strip consumers of their right to sue collectively. Brendan Ballou, founder of the Public Integrity Project and author of When Companies Run the Courts, told The Verge that forced arbitration clauses — buried in nearly every consumer product or service agreement — require users to waive class-action rights as a condition of use.

The practical effect is significant. When a company causes harm at scale — a data breach affecting millions, a defective product, a deceptive billing practice — class-action suits are often the only mechanism that makes litigation economically viable for individual consumers. Forced arbitration eliminates that option, routing disputes into private proceedings where companies have structural advantages: they are repeat players before the same arbitrators, while consumers appear once.

Ballou’s book documents how these clauses have proliferated across sectors from financial services to healthcare to consumer technology. The legal mechanism is largely a US phenomenon; EU consumer protection law has historically been more restrictive about pre-dispute arbitration waivers, which is part of why platform accountability fights in Europe tend to play out through regulatory channels rather than the courts.

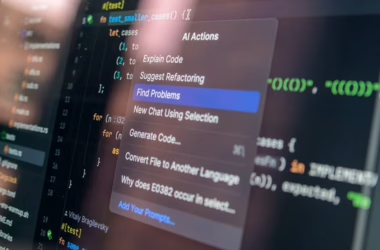

In-House Legal Teams Deploy AI for Compliance

As regulation grows more complex on both sides of the Atlantic, corporate legal departments are turning to AI to manage the compliance burden. The Financial Times reported on May 15, 2026, that Westpac’s legal team won an internal competition at the Australian bank by pitching an AI tool that extracts data from incident reports and compliance queries to identify trends and predict emerging regulatory risks.

Petra Stirling, director of operations, risk and transformation for Westpac Legal, told the FT that “predictive analytics is not normally the role that legal plays in corporate organisational risk and compliance management” — framing the tool as a departure from legal’s traditional reactive posture. The tool has been funded and is in early-stage implementation.

The Westpac case is one of several highlighted in the 2026 FT Innovative Lawyers Asia-Pacific report. Other examples include Australian firm Mallesons, which the FT described as making AI integral to legal practice delivery — using it to check draft documents against term sheets, produce compliance reports, and streamline merger notifications. Mallesons received scores of 7 for originality, 9 for leadership, and 9 for impact in the FT’s ranking.

The Compliance Stack Is Getting More Complicated

The convergence of new EU platform rules, evolving US arbitration law, and AI-specific regulatory frameworks is creating a compliance environment that legal teams describe as materially more demanding than five years ago.

For technology companies operating across jurisdictions, the challenge is layered:

- EU AI Act obligations are phased in through 2027; prohibitions on certain AI practices and obligations for general-purpose AI models already apply, while high-risk system requirements are scheduled for later phases

- DSA enforcement against very large online platforms has been ongoing since 2023, with the Commission opening formal proceedings against TikTok, Meta, and X

- Proposed addictive design rules would add product-level mandates on top of content moderation requirements

- US state-level legislation — including age verification laws in Arkansas, Louisiana, and Utah — creates a patchwork that differs from federal baseline rules

- Forced arbitration reform remains stalled in Congress despite bipartisan criticism of specific high-profile cases

Law firms serving technology clients are responding by building dedicated AI and platform regulation practices. The FT’s Asia-Pacific report noted that firms are also investing in knowledge management systems that track regulatory changes across multiple jurisdictions in near-real time — a task that was previously handled by teams of associates doing manual monitoring.

Cybersecurity Regulation Enters the Board Room

Regulatory pressure on technology companies is not limited to AI and platform design. Dark Reading’s 20th anniversary retrospective, published May 12, 2026, traces how the CISO role has expanded from technical network defense into board-level risk management, compliance, and corporate governance over two decades.

The piece documents how cybersecurity has become a regulatory matter: SEC disclosure rules now require public companies to report material cyber incidents within four business days of determining that an incident is material, and the EU’s NIS2 directive imposes mandatory security standards and incident reporting on critical infrastructure operators. For technology companies, this means the legal and security functions increasingly overlap: a breach is simultaneously a technical incident, a regulatory disclosure event, and a potential litigation trigger.
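To make the four-business-day window concrete, here is a minimal Python sketch of how a compliance team might compute a filing deadline. It assumes the clock starts on the day the company determines the incident is material and that weekends and a caller-supplied set of holidays do not count as business days. The function name `disclosure_deadline` and the holiday set are hypothetical illustrations, not part of any SEC tooling, and the sketch is no substitute for legal advice on a specific filing.

```python
from datetime import date, timedelta


def disclosure_deadline(determined_on, business_days=4, holidays=frozenset()):
    """Return the date that falls `business_days` business days after
    `determined_on`, skipping Saturdays, Sundays, and any dates in `holidays`.

    Assumption: the count starts the day after the materiality determination.
    """
    current = determined_on
    remaining = business_days
    while remaining > 0:
        current += timedelta(days=1)
        # weekday() returns 0-4 for Monday-Friday, 5-6 for the weekend
        if current.weekday() < 5 and current not in holidays:
            remaining -= 1
    return current


# Hypothetical holiday calendar supplied by the caller (Memorial Day 2026).
US_HOLIDAYS_2026 = frozenset({date(2026, 5, 25)})

if __name__ == "__main__":
    # Materiality determined on Thursday, May 14, 2026.
    print(disclosure_deadline(date(2026, 5, 14), holidays=US_HOLIDAYS_2026))
    # Materiality determined on Thursday, May 21, 2026, just before a holiday weekend.
    print(disclosure_deadline(date(2026, 5, 21), holidays=US_HOLIDAYS_2026))
```

The holiday calendar is passed in rather than hard-coded because which days count as business days is a legal question the sketch does not try to settle.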

The CISO-as-compliance-officer dynamic is relevant to AI regulation as well. As the EU AI Act’s conformity assessment requirements take effect, companies will need to document how high-risk AI systems were built, tested, and monitored — a process that resembles the audit trails already required under financial and data protection regulations.

What This Means

The EU’s proposed crackdown on addictive design is the clearest signal yet that European regulators are willing to mandate product architecture changes, not just content policies. If enacted, it would force TikTok and Meta to make engineering decisions — not just policy decisions — to comply. That is a different kind of regulatory pressure than fines or content takedown orders.

The forced arbitration debate in the US reflects a structural asymmetry that technology companies have exploited for years: terms of service that consumers cannot meaningfully negotiate, combined with legal mechanisms that make individual redress impractical. Congressional action remains unlikely in the near term, which means the practical pressure will come from state attorneys general and the occasional high-profile case that generates enough public attention to embarrass a company into settlement.

For legal and compliance teams inside technology companies, the trajectory is clear: the regulatory surface area is expanding faster than most organizations have scaled their legal infrastructure. The firms and in-house teams investing in AI-assisted compliance monitoring now are building capacity for a regulatory environment that will be significantly more demanding by 2027, when the EU AI Act’s full requirements are in force.

FAQ

What is the EU’s proposed regulation on addictive social media design?

The European Commission, under President Ursula von der Leyen, announced in May 2026 that it plans to introduce rules targeting “addictive design” features on platforms like TikTok and Instagram — including infinite scroll, autoplay, and algorithmic ranking systems that prioritize engagement over user wellbeing. The legislation is expected to be introduced before the end of 2026 and would go beyond existing Digital Services Act requirements by mandating changes to product design, not just content moderation.

What is forced arbitration and why does it matter for tech consumers?

Forced arbitration clauses, embedded in most consumer terms of service agreements, require users to waive their right to join class-action lawsuits and instead resolve disputes through private arbitration. According to Brendan Ballou, author of When Companies Run the Courts, this gives companies a structural advantage because they appear repeatedly before the same arbitrators while individual consumers do not, and it makes collective legal action economically unviable for most consumers.

How are companies using AI to manage regulatory compliance?

Corporate legal teams are deploying AI tools to monitor regulatory changes across jurisdictions, flag emerging risks, and automate document review tasks. Westpac’s legal department, for example, pitched an AI tool that extracts data from incident reports and compliance queries to identify trends and predict emerging regulatory risks; the tool has been funded and is in early-stage implementation. The Financial Times highlighted it in its 2026 FT Innovative Lawyers Asia-Pacific report as an example of legal teams moving from reactive to predictive compliance functions.

Sources

- How companies weaponize the terms of service against you – The Verge

- Business of law: case studies – Financial Times Tech

- In-house legal teams step up on AI strategies – Financial Times Tech

- 20 Leaders Who Built the CISO Era: 2 Decades of Change – Dark Reading

- EU to crack down on TikTok, Instagram’s ‘addictive design’ targeting kids on social media – CNBC Tech