- Natural language processing (NLP) is the subfield of AI that lets computers read, interpret, and generate human language.

- NLP has moved through three broad eras: rule-based (1950s–1980s), statistical (1990s–2010s), and neural (2013–present), the last now dominated by transformer-based large language models.

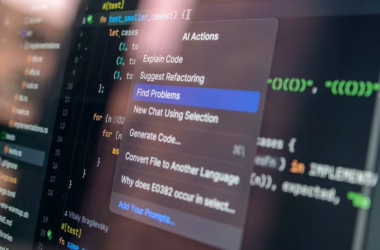

- Modern NLP applications include translation, chatbots, search ranking, summarization, sentiment analysis, voice assistants, and code generation.

- Language is hard for computers because meaning depends on context, grammar has exceptions, ambiguity is everywhere, and humans constantly bend the rules.

- The 2017 transformer architecture and the 2018 release of BERT triggered the current era, where a pre-trained language model can be adapted to most NLP tasks.

What makes language hard for machines?

Human language is optimized for human listeners, not for parsers. Ambiguity is ubiquitous: “the bank” can mean a financial institution or a riverbank, and only context tells you which. Sentences can be grammatically correct and still meaningless (“Colorless green ideas sleep furiously”, Chomsky’s famous example). Meaning shifts with tone, register, sarcasm, and cultural context. New words and slang appear constantly. Almost every grammatical rule has exceptions.

A task that feels trivial for a human — understanding “Can you pass the salt?” as a request, not a yes/no question — requires a machine to integrate syntax, semantics, pragmatics, and world knowledge simultaneously. NLP has spent seven decades figuring out how to get computers closer to that.

Three eras of NLP

Era 1: rule-based systems (1950s–1980s)

Early NLP treated language as a formal system to be parsed with hand-written grammars and dictionaries. Researchers built elaborate rule sets (morphological analyzers, syntactic parsers, semantic frames) in hopes of capturing language’s structure explicitly. The first public machine-translation demonstration, the Georgetown-IBM experiment, took place in 1954. It translated a small set of Russian sentences into English using a vocabulary of about 250 words. Scaling rule-based systems up to real text was a losing battle: every new construction needed more rules, and the rules interacted in ways that were hard to maintain. The approach hit a ceiling by the late 1980s.

Era 2: statistical NLP (1990s–2010s)

The statistical era shifted the focus from writing rules to learning patterns from data. Techniques such as hidden Markov models, conditional random fields, and n-gram language models dominated. Machine translation moved from rule-based to phrase-based statistical systems in the 2000s; Google Translate’s 2006 launch was a landmark. Feature engineering became a core skill: building the right input representations for classical ML models.
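To make "learning patterns from data" concrete, here is a minimal sketch of a bigram (2-gram) language model, the simplest member of the n-gram family mentioned above. The three-sentence corpus, the `<s>`/`</s>` boundary markers, and the function names are illustrative assumptions. Real systems of the period trained on far larger corpora and added smoothing for unseen word pairs, which this sketch omits.

```python
from collections import Counter, defaultdict

def train_bigram(sentences):
    """Count how often each word follows each other word."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        # Boundary markers are an assumption of this sketch.
        tokens = ["<s>"] + sentence.lower().split() + ["</s>"]
        for prev, nxt in zip(tokens, tokens[1:]):
            counts[prev][nxt] += 1
    return counts

def prob(counts, prev, nxt):
    """Maximum-likelihood estimate of P(nxt | prev), with no smoothing."""
    total = sum(counts[prev].values())
    return counts[prev][nxt] / total if total else 0.0

# Toy corpus, purely for illustration.
corpus = ["the cat sat", "the cat ran", "the dog sat"]
model = train_bigram(corpus)
print(prob(model, "the", "cat"))  # two of the three "the" are followed by "cat"
print(prob(model, "dog", "ran"))  # never observed, so the estimate is zero
```

The zero probability for an unseen pair is the weakness that smoothing techniques were designed to address.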

Era 3: neural NLP (2013–present)

Neural networks began displacing classical NLP models around 2013 with word embeddings like word2vec and GloVe. These techniques turned words into dense vectors where semantic similarity became geometric similarity. Recurrent networks (LSTMs) dominated mid-decade NLP — sequence-to-sequence translation, the 2016 Google Neural Machine Translation system, and countless downstream tasks.

Then came 2017 and the transformer architecture, covered in our transformers explainer. The following year, BERT from Google Research demonstrated that a single pre-trained transformer could be fine-tuned to set new state-of-the-art results across a broad range of NLP benchmarks. The pattern of pre-training on huge unlabelled corpora and then fine-tuning for specific tasks became the new default. Two years later, GPT-3 showed the same pattern worked at massively larger scale with even simpler deployment (just give it a prompt). Modern large language models are the culmination of this era.

The building blocks of NLP pipelines

Tokenization

Before a model can process text, the text has to be chopped into pieces called tokens. In modern NLP, tokens are typically subword units created by algorithms like byte-pair encoding (BPE) or WordPiece. “Tokenization” sounds mechanical, but it profoundly affects model behaviour: it fixes the vocabulary, it tends to split text in languages under-represented in the tokenizer’s training data into more tokens than equivalent English text, and it influences numerical reasoning, because long numbers can be broken into irregular chunks. See our tokenization explainer for more.
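As a rough illustration of how byte-pair encoding builds subword units, the sketch below learns merge rules by repeatedly fusing the most frequent adjacent pair of symbols. It is a simplified teaching version under stated assumptions: it works on characters rather than bytes, splits on whitespace, and omits end-of-word markers. The function names and the toy corpus are hypothetical; production tokenizers differ in all of these details.

```python
from collections import Counter

def merge_symbols(symbols, pair):
    """Fuse every adjacent occurrence of `pair` into a single symbol."""
    out, i = [], 0
    while i < len(symbols):
        if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
            out.append(symbols[i] + symbols[i + 1])
            i += 2
        else:
            out.append(symbols[i])
            i += 1
    return out

def learn_bpe(corpus, num_merges):
    """Learn up to `num_merges` merge rules from a whitespace-split corpus."""
    # Each word starts as a tuple of single characters, with its frequency.
    words = Counter(tuple(word) for word in corpus.split())
    merges = []
    for _ in range(num_merges):
        pairs = Counter()
        for symbols, freq in words.items():
            for a, b in zip(symbols, symbols[1:]):
                pairs[(a, b)] += freq
        if not pairs:
            break  # every word is already a single symbol
        best = max(pairs, key=pairs.get)  # ties: first pair seen wins
        merged = Counter()
        for symbols, freq in words.items():
            merged[tuple(merge_symbols(list(symbols), best))] += freq
        words = merged
        merges.append(best)
    return merges, words

def tokenize(word, merges):
    """Split a new word into subword tokens by replaying the learned merges."""
    symbols = list(word)
    for pair in merges:
        symbols = merge_symbols(symbols, pair)
    return symbols

merges, vocab = learn_bpe("low low low lower lowest", num_merges=2)
print(merges)                      # [('l', 'o'), ('lo', 'w')]
print(tokenize("slowly", merges))  # ['s', 'low', 'l', 'y']
```

Note that "slowly" never appeared in the training corpus, yet it still tokenizes: the frequent chunk "low" is reused and the rest falls back to single characters. That fallback is why subword vocabularies avoid out-of-vocabulary failures.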

Embeddings

Once you have tokens, you need to represent them as numbers. Embeddings are learned vectors where each token is a point in a high-dimensional space. Tokens with similar meanings end up geometrically close. “King” minus “man” plus “woman” ≈ “queen” — the famous word-vector analogy — is an emergent property of good embeddings.
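The analogy arithmetic can be sketched with a few hand-made vectors. The three-dimensional values below are invented for illustration only. Real embeddings are learned from data, have hundreds of dimensions, and give approximate rather than exact results. The `analogy` helper is a hypothetical name; it follows the common convention of excluding the three query words from the candidates.

```python
import math

# Toy, hand-picked vectors (an assumption of this sketch, not learned values).
# Loosely: [royalty, maleness, femaleness].
EMBEDDINGS = {
    "king":   [0.9, 0.9, 0.1],
    "queen":  [0.9, 0.1, 0.9],
    "man":    [0.1, 0.9, 0.1],
    "woman":  [0.1, 0.1, 0.9],
    "prince": [0.7, 0.9, 0.1],
    "apple":  [0.0, 0.2, 0.2],
}

def cosine(u, v):
    """Cosine similarity: 1.0 means same direction, 0.0 means orthogonal."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def analogy(a, b, c, embeddings):
    """Return the word nearest to vec(a) - vec(b) + vec(c), excluding a, b, c."""
    target = [x - y + z for x, y, z in
              zip(embeddings[a], embeddings[b], embeddings[c])]
    candidates = [w for w in embeddings if w not in {a, b, c}]
    return max(candidates, key=lambda w: cosine(target, embeddings[w]))

print(analogy("king", "man", "woman", EMBEDDINGS))  # queen
```

Cosine similarity is used rather than raw distance because it compares direction and ignores vector length, which is the usual choice for word vectors.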

Encoders and decoders

Most modern NLP architectures are built from one or both of two components. An encoder takes input text and produces a representation, which is useful for classification, retrieval, and similarity. A decoder takes a representation (or a prompt) and produces output text, which is useful for generation, translation, and summarization. A transformer can be encoder-only (BERT), decoder-only (GPT), or both (T5).
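In transformers, one concrete place this difference shows up is the attention mask. An encoder such as BERT lets every token attend to the whole input, while a decoder such as GPT lets each token attend only to itself and earlier tokens, so it can generate text left to right. The sketch below builds both masks as plain boolean grids. The function names are illustrative assumptions, and real models apply such masks inside the attention computation rather than filtering token lists.

```python
def bidirectional_mask(n):
    """Encoder-style mask: every position may attend to every position."""
    return [[True] * n for _ in range(n)]

def causal_mask(n):
    """Decoder-style mask: position i may attend only to positions 0..i."""
    return [[j <= i for j in range(n)] for i in range(n)]

def visible_tokens(tokens, mask, i):
    """List the tokens that position i is allowed to attend to."""
    return [tok for tok, allowed in zip(tokens, mask[i]) if allowed]

tokens = ["the", "cat", "sat"]
n = len(tokens)

# The encoder sees the full sentence from the middle position.
print(visible_tokens(tokens, bidirectional_mask(n), 1))  # ['the', 'cat', 'sat']

# The decoder sees only the prefix up to and including that position.
print(visible_tokens(tokens, causal_mask(n), 1))         # ['the', 'cat']
```

An encoder-decoder model such as T5 combines the two: a bidirectional mask over the input, and a causal mask over the output being generated.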

Common NLP tasks

- Text classification — sentiment analysis, topic labelling, spam detection.

- Named entity recognition — finding people, places, organizations in text.

- Machine translation — converting text between languages.

- Summarization — producing shorter versions of long documents.

- Question answering — extracting or generating answers from text.

- Text generation — open-ended writing, chat, creative composition.

- Information retrieval — matching queries to relevant documents.

- Speech recognition and synthesis — voice-to-text and text-to-voice.

Before 2018, each task had its own best model. Today, a single large language model with a good prompt often matches or beats task-specific systems. That universality is the biggest practical shift NLP has seen.
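A minimal sketch of what "one model, many tasks" looks like in practice: the task is expressed in the prompt, not in the model architecture. The templates and the `build_prompt` helper below are hypothetical. No model is called, because the choice of model and API is outside the scope of this article, and good prompt wording varies from model to model.

```python
# Hypothetical prompt templates, one per task from the list above.
PROMPT_TEMPLATES = {
    "sentiment": (
        "Classify the sentiment of the following text as positive, "
        "negative, or neutral.\n\nText: {text}\nSentiment:"
    ),
    "ner": (
        "List every person, place, and organization mentioned in the "
        "following text.\n\nText: {text}\nEntities:"
    ),
    "translation": (
        "Translate the following text into French.\n\nText: {text}\nTranslation:"
    ),
    "summarization": (
        "Summarize the following text in one sentence.\n\nText: {text}\nSummary:"
    ),
}

def build_prompt(task, text):
    """Wrap `text` in the instruction for `task`; the model itself is unchanged."""
    if task not in PROMPT_TEMPLATES:
        raise ValueError(f"unknown task: {task!r}")
    return PROMPT_TEMPLATES[task].format(text=text)

review = "The plot dragged, but the acting was superb."
for task in ("sentiment", "summarization"):
    print(build_prompt(task, review))
    print("---")
```

The same input text goes to the same model in each case; only the instruction around it changes.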

Where NLP still struggles

Modern NLP is astonishingly good at English and other major world languages. It is much weaker on low-resource languages, where training data is scarce. It struggles with precise factual accuracy (hallucinations remain common), and it can be fooled by adversarial prompts. Whether it understands anything in a deep sense is disputed; mechanically, it models statistical patterns over tokens. Tasks that require long reasoning chains, careful arithmetic, or real-time information from outside the training data remain open challenges.

Frequently asked questions

Is a chatbot the same thing as NLP?

Not quite. NLP is the broad field of making computers work with language; chatbots are one application. Early chatbots (ELIZA in the 1960s, rule-based FAQ bots in the 2000s) used shallow techniques. Modern conversational assistants like ChatGPT and Claude are built on large language models — deeply neural NLP systems. Saying “a chatbot uses NLP” is correct, but NLP also powers translation, search, summarization, voice dictation, and many other non-chat applications.

What is the difference between NLP and natural language understanding (NLU)?

NLP covers everything language-related, including generation, translation, and mechanical parsing. NLU is a subset focused specifically on extracting meaning: intent recognition, entity extraction, semantic parsing. In commercial conversational platforms like Alexa or Dialogflow, “NLU” is usually the component that maps a user’s utterance (transcribed to text first, if it was spoken) to structured intents and slots, which the rest of the system then acts on.
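To show what "structured intents and slots" means, here is a toy sketch that maps an utterance to an intent name plus a dictionary of slot values. The intent names, slot names, and regular expressions are all invented for illustration. Production NLU components generally rely on trained models rather than hand-written patterns, and this is not the API of any particular platform.

```python
import re
from dataclasses import dataclass, field

@dataclass
class NLUResult:
    intent: str
    slots: dict = field(default_factory=dict)

# Hypothetical intents and patterns; named groups become slot names.
PATTERNS = [
    ("set_timer", re.compile(
        r"set (?:a )?timer for (?P<duration>\d+) (?P<unit>seconds?|minutes?|hours?)")),
    ("play_music", re.compile(
        r"play (?P<track>.+?) by (?P<artist>.+)")),
]

def parse(utterance):
    """Return the first matching intent and its slots, or a fallback intent."""
    text = utterance.lower().strip()
    for intent, pattern in PATTERNS:
        match = pattern.search(text)
        if match:
            return NLUResult(intent, match.groupdict())
    return NLUResult("fallback")

print(parse("Set a timer for 10 minutes"))
print(parse("Play Yesterday by The Beatles"))
print(parse("What's the meaning of life?"))
```

The downstream system never sees the raw sentence. It receives something like intent `set_timer` with slots `duration` and `unit`, and acts on that structure.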

Can a language model truly understand text?

This is a genuinely disputed question. Large language models produce output that is hard to distinguish from human writing on many tasks, but they have no grounding in the world, no body, no sensory experience: they are pattern-matchers over statistical distributions of text. Some researchers argue this is sufficient for functional understanding; others insist that without embodiment or grounding, the systems are performing sophisticated mimicry. What is clear is that fluency and accuracy are separate axes: a model can sound coherent while being confidently wrong about facts.