AI-powered coding assistants like GitHub Copilot and Cursor are transforming software development workflows, but they’re simultaneously creating unprecedented security blind spots for enterprise teams. According to VentureBeat, developers are increasingly running AI models locally on devices, bypassing traditional network monitoring and data loss prevention (DLP) systems that security teams rely on to detect potential threats.

The shift from cloud-based AI services to on-device inference represents what security experts are calling “Shadow AI 2.0” – a new era where employees can run capable language models offline with no API calls and no obvious network signatures. This evolution fundamentally breaks the traditional CISO playbook that has governed AI usage for the past 18 months.

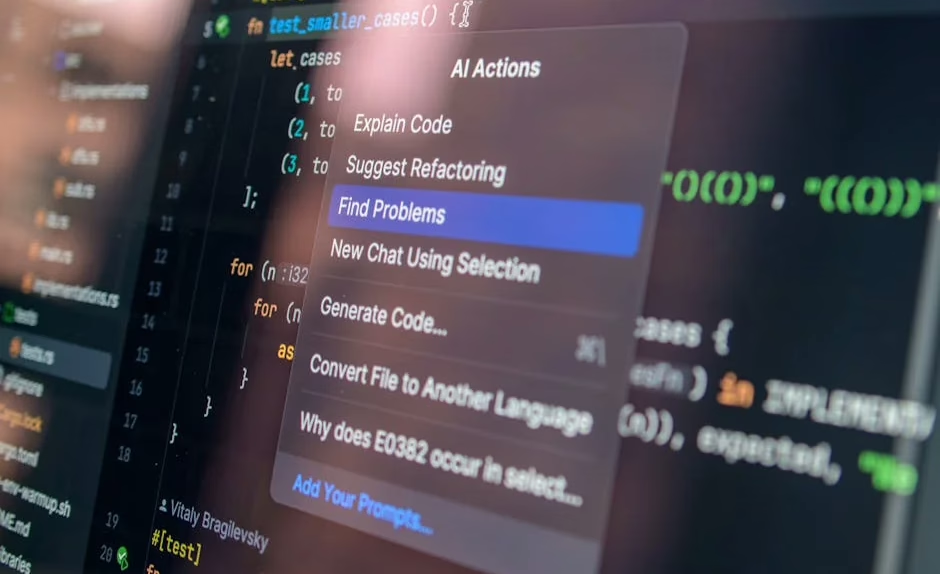

Local Inference Creates Security Visibility Gaps

Traditional security controls assumed AI interactions would involve external API calls that could be monitored, logged, and potentially blocked. However, modern hardware capabilities have made local AI inference increasingly practical for development teams.

Three key factors enable this shift:

• Enhanced consumer hardware: A MacBook Pro with 64GB of unified memory can run quantized 70B-class models at usable speeds

• Mainstream quantization: Storing weights at lower precision (for example, 4 bits instead of 16) shrinks models enough to fit in laptop memory

• Optimized inference engines: Tools like Ollama and LM Studio make local model deployment accessible to non-experts
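
To make the hardware and quantization points concrete, here is a rough back-of-the-envelope sketch of how much memory the weights of a 70B-parameter model need at different precisions. The function name is illustrative, and the estimate covers weights only; it ignores the KV cache and runtime overhead, which add to the real footprint.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights alone, in gigabytes (10^9 bytes)."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


# Compare a 70B-parameter model at 16-bit, 8-bit, and 4-bit precision.
for bits in (16, 8, 4):
    print(f"70B at {bits}-bit: ~{weight_memory_gb(70, bits):.0f} GB")
```

Under these assumptions the 4-bit weights come to roughly 35 GB, which is why a 64GB machine is enough while the 16-bit version (about 140 GB) is not.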

When inference happens locally, traditional DLP systems cannot observe the interaction. This creates a fundamental visibility problem – security teams cannot manage what they cannot see. The risk shifts from “data exfiltration to the cloud” to “unvetted inference inside the device.”

Attack Vectors Through Local AI Models

Local AI coding tools introduce several attack vectors that traditional security frameworks don’t address:

Model poisoning: Developers might unknowingly download compromised models from unofficial repositories, potentially injecting malicious code suggestions into their development workflow.

Data exposure through context: Even local models can inadvertently expose sensitive code, API keys, or business logic through the prompt context they are given or through conversation history stored on the device.

Supply chain vulnerabilities: Third-party model repositories and inference engines may contain backdoors or vulnerabilities that compromise the entire development environment.
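
One common mitigation for the model poisoning and supply chain risks above is to pin the hashes of vetted model files and refuse anything else. The following is a minimal sketch, assuming the security team maintains a set of approved SHA-256 digests; `approved_hashes` and both function names are hypothetical, not part of any particular tool.

```python
import hashlib
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so multi-gigabyte model files never load fully into memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def is_vetted_model(path: Path, approved_hashes: set[str]) -> bool:
    """Return True only if the file's digest is in the approved set (assumed to be maintained by security)."""
    return sha256_of_file(path) in approved_hashes
```

A hash match only shows the file is the one that was reviewed; it says nothing about whether the reviewed model was safe in the first place.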

Enterprise-Scale Agentic Development Security Challenges

Enterprise teams are adopting spec-driven development to manage autonomous coding agents safely. The Kiro IDE team reduced feature development from two weeks to two days, while an AWS engineering team of six people finished in 76 days a rearchitecture project originally scoped at 18 months and 30 developers.

However, this acceleration introduces significant security considerations:

Autonomous code generation risks: AI agents operating with minimal human oversight can introduce vulnerabilities faster than traditional code review processes can identify them.

Specification manipulation: If threat actors compromise the specifications that guide autonomous development, they could influence large portions of the codebase systematically.

Rapid deployment vulnerabilities: The compressed timeline from weeks to days may not allow sufficient time for thorough security testing and vulnerability assessment.

Threat Assessment Framework for AI Coding Tools

Security teams need new frameworks to assess AI coding tool risks:

High-risk scenarios:

• Direct integration with production systems

• Access to sensitive codebases or infrastructure configurations

• Autonomous deployment capabilities without human approval

Medium-risk scenarios:

• Development environment usage with proper sandboxing

• Code suggestion tools with human review requirements

• Limited access to non-critical development branches
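
The two tiers above could be encoded as a simple rule check so that tool reviews are applied consistently. This is only a sketch: the `ToolProfile` fields and the "unclassified" fallback are assumptions made for illustration, and a real assessment would weigh more factors than these booleans.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolProfile:
    """Hypothetical description of how an AI coding tool is deployed."""
    touches_production: bool
    reads_sensitive_code: bool
    deploys_without_approval: bool
    sandboxed: bool
    human_review_required: bool


def risk_tier(profile: ToolProfile) -> str:
    # Any single high-risk trait is enough to place the tool in the high tier.
    if (profile.touches_production
            or profile.reads_sensitive_code
            or profile.deploys_without_approval):
        return "high"
    # Medium requires the mitigations to actually be in place.
    if profile.sandboxed and profile.human_review_required:
        return "medium"
    # Anything else has not met the medium-tier conditions and needs manual review.
    return "unclassified"
```

Treating any one high-risk trait as decisive keeps the check conservative: mitigations such as sandboxing do not lower the tier of a tool that can deploy on its own.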

Monitoring requirements:

• Code commit analysis for unusual patterns

• Dependency scanning for unexpected package inclusions

• Behavioral analysis of development workflow changes
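
As one illustration of dependency scanning for unexpected package inclusions, the sketch below compares two versions of a pip-style requirements file and reports packages that are new and not on an allowlist. The function names and the allowlist are hypothetical, and the parser handles only simple requirement lines, not every form pip accepts.

```python
import re


def package_names(requirements_text: str) -> set[str]:
    """Extract normalized package names from simple requirements.txt content."""
    names = set()
    for line in requirements_text.splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments
        if not line or line.startswith("-"):  # skip blanks and pip options such as -r
            continue
        match = re.match(r"[A-Za-z0-9][A-Za-z0-9._-]*", line)
        if match:
            # Normalize so that "My_Pkg" and "my-pkg" compare equal.
            names.add(re.sub(r"[-_.]+", "-", match.group(0)).lower())
    return names


def unexpected_packages(baseline_text: str, candidate_text: str,
                        allowlist: frozenset[str] = frozenset()) -> list[str]:
    """Return packages added in the candidate that are neither in the baseline nor allowlisted."""
    added = package_names(candidate_text) - package_names(baseline_text)
    return sorted(added - set(allowlist))
```

Run against the requirements file before and after an AI-assisted change, a non-empty result is a prompt for human review rather than proof of a problem.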

Privacy Implications and Data Protection Strategies

AI coding assistants process vast amounts of proprietary code, potentially exposing intellectual property and sensitive business logic. The terminology around AI agents continues evolving, but the privacy implications remain consistent across implementations.

Critical privacy concerns include:

• Code fingerprinting: AI models may retain patterns from proprietary codebases that could be reconstructed or inferred

• Cross-contamination: Models trained on multiple organizations’ code might inadvertently suggest proprietary patterns from other companies

• Persistent context: Local conversation histories and code snippets may persist in unencrypted formats

Data Classification and Handling Protocols

Organizations must implement strict data classification protocols for AI coding tool usage:

Prohibited data types:

• Authentication credentials and API keys

• Customer personally identifiable information (PII)

• Proprietary algorithms and trade secrets

• Security configurations and vulnerability details
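
A lightweight way to enforce the credential prohibition is to screen text before it is handed to an assistant. The sketch below uses a few illustrative regular expressions; the pattern set is an assumption and far from exhaustive, so it should be read as a starting point rather than a substitute for a dedicated secret scanner.

```python
import re

# Illustrative patterns only; real scanners cover many more credential formats.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "hardcoded_credential": re.compile(
        r"(api[_-]?key|secret|token|password)\w*\s*[:=]\s*['\"][^'\"]{8,}['\"]",
        re.IGNORECASE,
    ),
}


def find_secrets(text: str) -> list[str]:
    """Return the names of all patterns that match somewhere in the text."""
    return [name for name, pattern in SECRET_PATTERNS.items() if pattern.search(text)]


def safe_to_share(text: str) -> bool:
    """True when no known secret pattern is found in the text."""
    return not find_secrets(text)
```

A check like this can run as an editor hook or pre-prompt filter, blocking the request and telling the developer which pattern matched.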

Approved usage scenarios:

• Open-source code development

• General programming pattern assistance

• Documentation and comment generation

• Non-sensitive utility function development

Defense Strategies and Security Recommendations

Security teams must adapt their strategies to address the unique challenges posed by AI coding tools. Traditional perimeter-based security models prove insufficient for managing local AI inference and autonomous development workflows.

Technical Security Controls

Endpoint monitoring enhancements:

• Deploy behavior-based detection for unusual model downloads or inference activity

• Monitor file system changes for AI model installations

• Track resource consumption patterns indicating local AI usage
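
As a first pass at monitoring file systems for model installations, an endpoint agent could look for large files with common model-weight extensions. The sketch below assumes a small, illustrative extension list and a size threshold; some runtimes store weights under names without these extensions, so a scan like this will miss them and should be combined with other signals.

```python
from pathlib import Path

# Illustrative extensions for common model-weight formats; extend as needed.
MODEL_EXTENSIONS = {".gguf", ".safetensors", ".onnx"}


def find_model_files(root: Path,
                     min_bytes: int = 100 * 1024 * 1024) -> list[tuple[Path, int]]:
    """Return (path, size) for likely model files under root, largest first."""
    hits = []
    for path in root.rglob("*"):
        try:
            if path.is_file() and path.suffix.lower() in MODEL_EXTENSIONS:
                size = path.stat().st_size
                if size >= min_bytes:
                    hits.append((path, size))
        except OSError:
            # Skip files that vanish or cannot be read during the scan.
            continue
    return sorted(hits, key=lambda item: item[1], reverse=True)
```

The size threshold filters out small test fixtures and config files that happen to share an extension with real weights.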

Code repository security:

• Implement automated scanning for AI-generated code patterns

• Establish baseline metrics for code quality and security standards

• Deploy continuous integration security gates that account for AI-assisted development

Network segmentation:

• Isolate development environments with AI tools from production systems

• Implement zero-trust network access for AI-enhanced development workflows

• Monitor for unexpected data flows that might indicate model training or fine-tuning

Governance and Policy Framework

Effective AI coding tool governance requires clear policies that balance productivity gains with security requirements:

Approved tool registry: Maintain an allowlist of vetted AI coding tools, each with specific usage guidelines and security requirements.

Developer training programs: Educate development teams on secure AI tool usage, including recognition of potential security risks and proper data handling procedures.

Incident response procedures: Establish specific protocols for AI-related security incidents, including model compromise, data exposure, and autonomous system failures.

What This Means

The rapid adoption of AI coding tools represents a fundamental shift in enterprise software development that security teams cannot ignore. Traditional security models built around network perimeter defense and API monitoring prove inadequate for managing local AI inference and autonomous development workflows.

Organizations must evolve their security strategies to address this new reality. This includes implementing endpoint-based monitoring for local AI activity, establishing clear governance frameworks for AI tool usage, and developing incident response procedures specific to AI-related security events.

The enterprises that successfully navigate this transition will be those that proactively adapt their security postures while enabling developer productivity. Failure to address these emerging risks could result in significant security incidents, intellectual property exposure, and compliance violations.

FAQ

Q: How can security teams detect local AI model usage on developer machines?

A: Implement endpoint detection and response (EDR) solutions that monitor for AI inference patterns, unusual resource consumption, and model file downloads. Behavioral analysis can identify when developers are using local AI tools even without network traffic.

Q: What are the main data privacy risks with AI coding assistants?

A: Primary risks include code fingerprinting where AI models retain patterns from proprietary codebases, cross-contamination between different organizations’ code, and persistent storage of sensitive code snippets in conversation histories.

Q: Should organizations ban AI coding tools entirely?

A: Complete bans are typically counterproductive and may drive shadow usage. Instead, organizations should implement risk-based governance frameworks that allow approved AI tools with proper security controls, training, and monitoring in place.
