AI-powered coding assistants like GitHub Copilot and Cursor are fundamentally reshaping enterprise development workflows, but they’re also introducing critical security blind spots that traditional cybersecurity frameworks weren’t designed to handle. According to VentureBeat, the shift toward local AI inference and autonomous coding agents is creating “Shadow AI 2.0” scenarios where sensitive code generation happens entirely offline, bypassing established data loss prevention (DLP) controls.

The Local Inference Security Gap

The most immediate threat vector emerges from developers running large language models (LLMs) directly on their workstations. As VentureBeat reports, three developments have converged to make this practical: consumer-grade accelerators in laptops can now run quantized 70B-class models, mainstream quantization techniques compress models to manageable sizes, and developer tooling for local deployment has matured.

Critical security implications include:

- Invisible data flows: When inference happens locally, traditional DLP systems cannot monitor code inputs or generated outputs

- Unvetted model usage: Employees may download and use models from untrusted sources without IT oversight

- Compromised models: Locally run models may have been tampered with or fine-tuned on malicious training data, so their suggestions can carry vulnerable or backdoored code

- Compliance violations: Sensitive proprietary code may be processed by models without proper data governance

The traditional CISO playbook of controlling browser access and monitoring API calls becomes ineffective when the entire AI interaction occurs within the endpoint device. Security teams lose visibility into what code is being generated, what proprietary information is being processed, and whether the AI models themselves have been compromised.

Autonomous Coding Agents Amplify Attack Surface

The emergence of autonomous coding agents represents an escalation in threat complexity. According to VentureBeat, enterprise teams are now deploying agents that can complete months-long development projects in days, but this acceleration comes with significant security trade-offs.

Key threat vectors include:

Code Quality and Vulnerability Injection

Autonomous agents operating without proper specification frameworks may introduce subtle security vulnerabilities that bypass traditional code review processes. The “vibe coding” approach mentioned in industry reports creates a “surplus of slop” – low-quality code that may contain exploitable flaws.

Supply Chain Contamination

Agents that autonomously select and integrate dependencies create new supply chain attack opportunities. Malicious actors could influence an agent's decision-making so that it pulls in compromised libraries or packages.

Privilege Escalation Risks

Coding agents with broad repository access and deployment permissions represent high-value targets for attackers seeking to establish persistence in development environments.

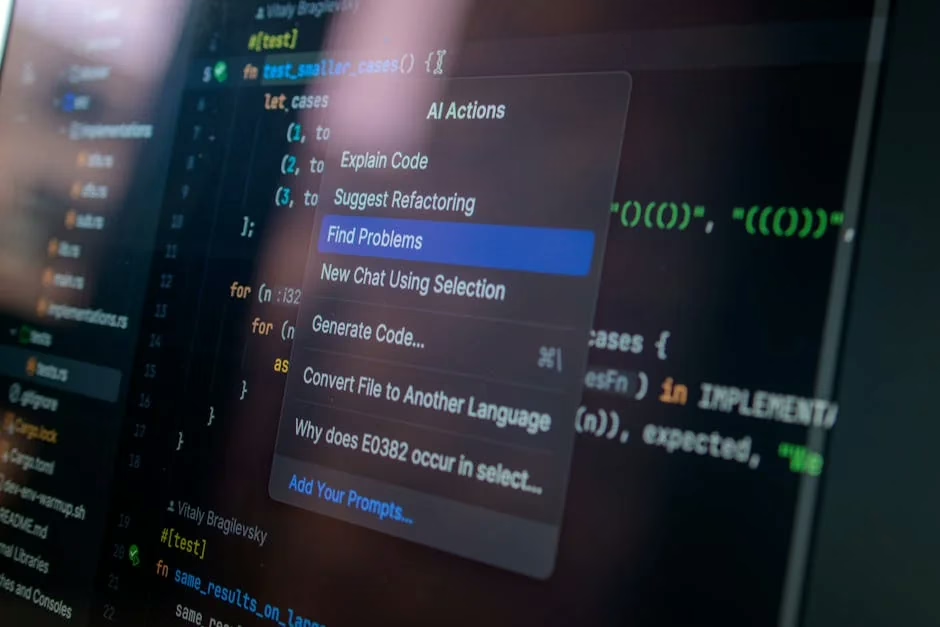

IDE Integration Security Weaknesses

Integrated Development Environment (IDE) plugins for AI coding tools create persistent security risks within developer workflows. These integrations often require extensive permissions to access codebases, project structures, and development secrets.

Primary attack vectors:

- Credential harvesting: Malicious or compromised AI tools could exfiltrate API keys, database credentials, and other secrets embedded in code

- Code exfiltration: Proprietary algorithms and business logic could be transmitted to external AI services

- Backdoor insertion: Compromised AI tools could inject subtle backdoors into production code

- Development environment compromise: IDE plugins with elevated permissions could serve as entry points for broader system compromise

The challenge is compounded by the fact that many AI coding tools operate as cloud services, meaning sensitive code may be transmitted to and processed by external systems beyond organizational control.
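One way to reduce the credential harvesting risk described above is to scan code for likely secrets before it reaches any AI tool. The following is a minimal sketch, not a production scanner: the three patterns are illustrative assumptions (an AWS-style access key ID, a PEM private key header, and a hardcoded password assignment), and the function name `find_secrets` is hypothetical.

```python
import re

# Illustrative patterns only (assumptions); real scanners ship far larger rule sets.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "hardcoded_password": re.compile(r"(?i)\bpassword\s*=\s*['\"][^'\"]{4,}['\"]"),
}


def find_secrets(text: str) -> list[tuple[int, str]]:
    """Return (line_number, pattern_name) for each suspected secret in text."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, name))
    return findings
```

A check like this could run as a pre-commit hook or inside an IDE wrapper; pattern matching misses many secret formats, so it complements rather than replaces keeping secrets out of source files.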

Performance Degradation as Security Indicator

Recent reports of performance degradation in AI coding tools like Claude may signal more than just capacity management issues. According to VentureBeat, users are reporting that Claude Opus has become “less capable, less reliable and more wasteful with tokens” in recent weeks.

Security implications of model degradation:

- Increased error rates: Degraded models may produce more buggy code, creating additional attack surface

- Behavioral changes: Unexpected model behavior could indicate compromise or manipulation

- Resource exhaustion attacks: Attackers might deliberately trigger model degradation to force organizations toward less secure alternatives

- Trust erosion: Unreliable AI tools may lead developers to disable security features or bypass review processes

Security teams should monitor AI tool performance metrics as potential indicators of compromise or manipulation, particularly when degradation occurs suddenly or affects specific types of security-sensitive code generation.
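As a rough illustration of what "sudden" degradation could mean in practice, the sketch below compares the latest value of a quality metric against a baseline window. It assumes the team already tracks some numeric score per day, such as the pass rate of a fixed internal test suite run against the tool; that metric, the function name `sudden_drop`, and the threshold of three standard deviations are all assumptions rather than anything prescribed by the reports cited here.

```python
from statistics import mean, stdev


def sudden_drop(history: list[float], latest: float, threshold: float = 3.0) -> bool:
    """Flag latest if it falls more than `threshold` standard deviations below the baseline mean."""
    if len(history) < 2:
        return False  # not enough data to estimate the spread
    baseline = mean(history)
    spread = stdev(history)
    if spread == 0:
        # Perfectly flat baseline: any decrease counts as a drop.
        return latest < baseline
    return (baseline - latest) / spread > threshold
```

A flag from a check like this is only a prompt for investigation; a drop can have ordinary causes such as a vendor-side model update.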

Defense Strategies and Best Practices

Implementing effective security controls for AI coding tools requires a multi-layered approach that addresses both traditional and emerging threat vectors.

Essential security controls:

Network and Endpoint Monitoring

- Deploy network detection and response (NDR) solutions that can identify AI model downloads; local inference itself produces no network traffic, so it must be detected on the endpoint

- Implement DNS monitoring to track access to AI model repositories and services

- Use endpoint detection and response (EDR) tools to monitor for suspicious AI-related process execution
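The DNS monitoring control above can be sketched as a simple watchlist match over resolver logs. This is a minimal sketch under stated assumptions: the log is taken to be already parsed into `(client, hostname)` pairs, `huggingface.co` is included because this article's FAQ names Hugging Face, and `models.example.com` is a placeholder entry. The function names are hypothetical.

```python
# Hypothetical watchlist; extend it with the repositories relevant to your environment.
MODEL_REPO_DOMAINS = {"huggingface.co", "models.example.com"}


def is_model_repo(hostname: str) -> bool:
    """True if hostname equals a watchlisted domain or is a subdomain of one."""
    host = hostname.lower().rstrip(".")
    return any(host == d or host.endswith("." + d) for d in MODEL_REPO_DOMAINS)


def flag_queries(queries):
    """Given (client, hostname) pairs from a DNS log, return those hitting watchlisted domains."""
    return [(client, host) for client, host in queries if is_model_repo(host)]
```

Matching on the full domain or a dot-prefixed suffix avoids false positives from lookalike names that merely end with the same characters.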

Code Security Integration

- Mandate static application security testing (SAST) for all AI-generated code

- Implement dynamic application security testing (DAST) in CI/CD pipelines

- Deploy software composition analysis (SCA) tools to validate AI-selected dependencies

- Require human security review for all AI-generated code before production deployment
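To make the dependency validation step concrete, here is a minimal sketch of an allowlist check over a requirements.txt-style file, the kind of gate that could run in CI before an agent's dependency changes are merged. It is not a substitute for a real SCA tool: the allowlist contents and the function names `package_name` and `unapproved` are hypothetical, and the parser handles only simple requirement lines.

```python
import re
from typing import Optional

# Hypothetical allowlist a security team might maintain.
APPROVED_PACKAGES = {"requests", "numpy", "flask"}


def package_name(requirement_line: str) -> Optional[str]:
    """Extract the normalized package name from one requirements.txt-style line."""
    line = requirement_line.split("#", 1)[0].strip()
    if not line or line.startswith("-"):
        return None  # blank line, comment, or pip option such as "-r other.txt"
    match = re.match(r"[A-Za-z0-9][A-Za-z0-9._-]*", line)
    if match is None:
        return None
    # Normalize as package indexes do: lowercase, runs of - _ . become one hyphen.
    return re.sub(r"[-_.]+", "-", match.group(0)).lower()


def unapproved(requirements_text: str) -> list[str]:
    """Return the sorted names of packages that are not on the allowlist."""
    names = (package_name(line) for line in requirements_text.splitlines())
    return sorted({n for n in names if n and n not in APPROVED_PACKAGES})
```

An allowlist of this kind would catch a typosquatted name such as `reqeusts`, because it rejects anything not explicitly approved instead of trying to recognize known-bad packages.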

Access Control and Governance

- Establish approved AI coding tool registries with security-vetted options

- Implement zero-trust principles for AI tool access to sensitive repositories

- Create data classification schemes that restrict AI processing of sensitive code

- Deploy privileged access management (PAM) for AI tools with elevated permissions
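The registry and data classification controls above can be combined into a single deny-by-default policy check. The sketch below is one possible shape for that check, assuming a four-level classification scheme and two made-up registry entries; the level names, tool names, and the function `may_process` are all hypothetical.

```python
from dataclasses import dataclass

# Ordered from least to most sensitive; the labels are hypothetical.
LEVELS = ["public", "internal", "confidential", "restricted"]


@dataclass(frozen=True)
class ToolPolicy:
    name: str
    max_classification: str  # most sensitive level the tool may process


# Hypothetical registry of approved tools.
REGISTRY = {
    "cloud-assistant": ToolPolicy("cloud-assistant", "internal"),
    "self-hosted-assistant": ToolPolicy("self-hosted-assistant", "confidential"),
}


def may_process(tool: str, repo_classification: str) -> bool:
    """Deny by default: unregistered tools and unknown labels are rejected."""
    policy = REGISTRY.get(tool)
    if policy is None or repo_classification not in LEVELS:
        return False
    return LEVELS.index(repo_classification) <= LEVELS.index(policy.max_classification)
```

For example, `may_process("cloud-assistant", "confidential")` returns `False` here, while the same repository would be allowed for the self-hosted entry.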

What This Means

The rapid adoption of AI coding tools represents a fundamental shift in the enterprise attack surface that requires immediate attention from security leaders. Traditional perimeter-based security models are inadequate for addressing the risks posed by local AI inference and autonomous coding agents.

Organizations must develop new security frameworks that can provide visibility into AI-assisted development workflows while maintaining the productivity benefits these tools provide. This includes investing in endpoint security solutions capable of monitoring local AI activities, establishing governance frameworks for AI tool usage, and implementing security-by-design principles for AI-generated code.

The enterprises that successfully navigate this transition will be those that treat AI coding security as a strategic priority rather than an afterthought, implementing comprehensive controls before widespread adoption creates unmanageable risk exposure.

FAQ

How can security teams detect unauthorized local AI model usage?

Deploy endpoint detection and response (EDR) solutions that monitor for AI framework installations, large file downloads indicative of model weights, and GPU utilization patterns consistent with local inference. Network monitoring can also identify downloads from model repositories like Hugging Face.
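As a rough illustration of the "large file downloads indicative of model weights" signal, the sketch below walks a directory tree and reports large files with weight-like extensions. The extension list and the default one-gigabyte threshold are assumptions, the function name `find_model_files` is hypothetical, and a real EDR product would gather this telemetry very differently.

```python
from pathlib import Path

# Extensions often used for model weights; this list is an assumption, not exhaustive.
WEIGHT_EXTENSIONS = {".gguf", ".safetensors", ".pt", ".bin"}


def find_model_files(root: str, min_bytes: int = 1_000_000_000) -> list[Path]:
    """Return files under root with a weight-like extension and size >= min_bytes."""
    hits = []
    for path in Path(root).rglob("*"):
        try:
            if (path.is_file()
                    and path.suffix.lower() in WEIGHT_EXTENSIONS
                    and path.stat().st_size >= min_bytes):
                hits.append(path)
        except OSError:
            continue  # unreadable entry; skip it
    return sorted(hits)
```

Extension and size alone produce false positives (for example, unrelated `.bin` files), so results are best treated as leads for follow-up.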

What are the biggest security risks with AI coding assistants?

The primary risks include code injection vulnerabilities, credential exfiltration, supply chain contamination through malicious dependencies, and loss of visibility into development workflows when AI processing occurs locally rather than through monitored cloud APIs.

Should organizations ban AI coding tools entirely?

Complete bans are typically counterproductive and may drive shadow usage. Instead, organizations should establish approved tool registries, implement security controls for AI-generated code, and create governance frameworks that enable safe usage while maintaining security oversight.
