AI-powered coding assistants like GitHub Copilot and Cursor are transforming software development, but they’re introducing critical security vulnerabilities that most enterprises aren’t prepared to handle. According to VentureBeat, developers are increasingly running AI models locally on devices, creating a “Shadow AI 2.0” scenario where traditional security controls become ineffective. This shift from cloud-based to on-device inference represents a fundamental blind spot for Chief Information Security Officers (CISOs) managing enterprise code security.

The Rise of Unmanaged Local AI Inference

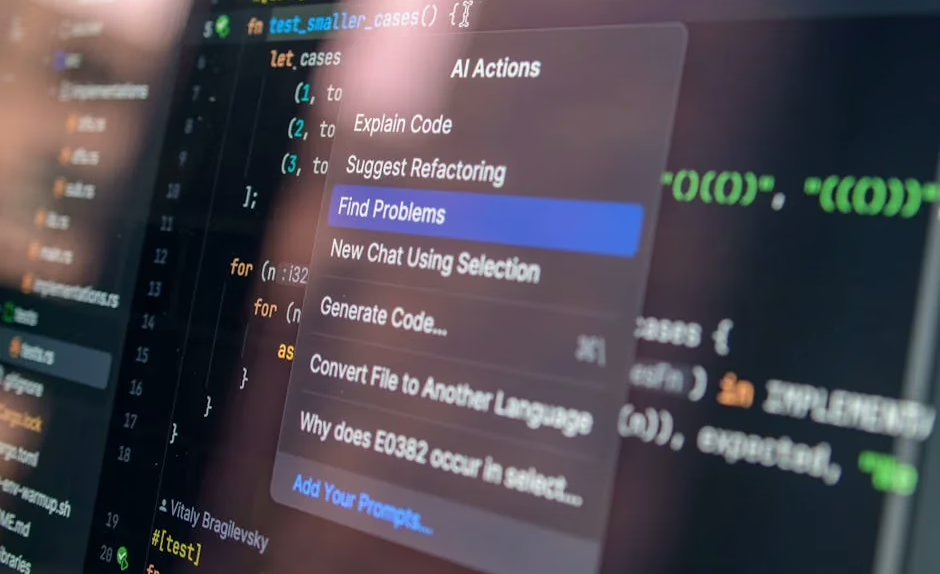

The security landscape for AI coding tools has dramatically shifted as developers move from cloud-based services to local model execution. Consumer-grade accelerators now enable MacBook Pros with 64GB unified memory to run quantized 70B-class models at usable speeds, making local inference practical for technical teams.

This transition creates three critical security gaps:

- Network visibility loss: Traditional data loss prevention (DLP) systems cannot monitor local AI interactions

- Policy enforcement breakdown: Cloud Access Security Broker (CASB) policies become ineffective for offline model usage

- Audit trail elimination: No API calls mean no logging of sensitive code or data processing

According to VentureBeat, security teams that previously controlled AI usage through browser monitoring and network traffic analysis now face “unvetted inference inside the device” as the primary enterprise risk. When inference happens locally, security teams lose the ability to observe, log, and control AI interactions with proprietary code.

Code Supply Chain Contamination Vectors

AI coding assistants introduce multiple attack vectors that can compromise the software supply chain. Spec-driven development, while improving code quality, creates new vulnerability surfaces when AI agents operate with elevated privileges and access to comprehensive system specifications.

Key contamination risks include:

- Training data poisoning: Models trained on compromised open-source repositories can inject malicious patterns

- Prompt injection attacks: Malicious comments or documentation can manipulate AI behavior during code generation

- Dependency confusion and typosquatting: AI tools may suggest vulnerable, malicious, or nonexistent packages whose names closely resemble legitimate ones

- Credential exposure: AI models processing code may inadvertently learn and reproduce API keys, passwords, or certificates
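
Two of the risks above, lookalike package names and exposed credentials, lend themselves to simple pre-merge checks. The following is a minimal sketch rather than a production scanner: the `APPROVED_PACKAGES` allowlist, the similarity cutoff of 0.8, and the three regular expressions are all illustrative assumptions, and real secret scanners ship far larger rule sets.

```python
import difflib
import re

# Hypothetical allowlist of dependencies the organization has already vetted.
APPROVED_PACKAGES = {"requests", "numpy", "pandas", "cryptography"}

# Illustrative patterns only; tune and extend these for real use.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "hardcoded_password": re.compile(r"(?i)\bpassword\s*=\s*['\"][^'\"]{4,}['\"]"),
}


def find_lookalike_packages(suggested, approved=APPROVED_PACKAGES, cutoff=0.8):
    """Map each suggested name that is close to, but not equal to,
    an approved package onto the approved name it resembles."""
    flagged = {}
    for name in suggested:
        normalized = name.lower()
        if normalized in approved:
            continue  # exact match against the allowlist is fine
        close = difflib.get_close_matches(
            normalized, sorted(approved), n=1, cutoff=cutoff
        )
        if close:
            flagged[name] = close[0]
    return flagged


def find_secrets(code):
    """Return sorted (line_number, pattern_name) pairs for every match."""
    hits = []
    for lineno, line in enumerate(code.splitlines(), start=1):
        for label, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                hits.append((lineno, label))
    return sorted(hits)
```

A name that is flagged here is not necessarily malicious; the check only tells a reviewer that an AI-suggested dependency deserves a second look before it is installed.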

The emergence of “hyperagents”, self-improving AI systems that rewrite their own code, amplifies these risks. According to VentureBeat, these systems can “continuously rewrite and optimize their problem-solving logic.” Because the code under review keeps changing, the attack surface is dynamic, and point-in-time security scans cannot adequately assess it.

Enterprise Blind Spots in AI Code Generation

CISOs face unprecedented challenges in maintaining visibility over AI-assisted development workflows. The shift to local inference has created what security experts term “Bring Your Own Model” (BYOM) scenarios, where employees download and run unvetted AI models without IT oversight.

Critical blind spots include:

- Model provenance tracking: No visibility into which AI models developers are using locally

- Data classification violations: Sensitive code being processed by unmanaged AI systems

- Compliance gap analysis: Inability to demonstrate regulatory compliance for AI-processed intellectual property

- Incident response limitations: Difficulty investigating security events involving local AI inference
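
Model provenance tracking can start with something as simple as an inventory of weight files on each machine. The sketch below assumes that local models are stored as `.gguf` or `.safetensors` files and that the security team maintains a set of approved SHA-256 hashes; both the extension list and the `approved_hashes` input are assumptions for illustration.

```python
import hashlib
from pathlib import Path

# File extensions commonly used for local model weights; extend as needed.
MODEL_EXTENSIONS = {".gguf", ".safetensors"}


def sha256_of(path, chunk_size=1 << 20):
    """Hash a file in chunks so multi-gigabyte weights do not fill memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def inventory_models(root, approved_hashes):
    """Walk `root` and return one record per model file found."""
    records = []
    for path in sorted(Path(root).rglob("*")):
        if path.is_file() and path.suffix.lower() in MODEL_EXTENSIONS:
            file_hash = sha256_of(path)
            records.append({
                "path": str(path),
                "sha256": file_hash,
                "approved": file_hash in approved_hashes,
            })
    return records
```

Records with `"approved": False` give incident responders a starting list of unvetted models, which addresses the first and last blind spots in the list above.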

VentureBeat reports that enterprise teams using agentic coding environments are completing projects in dramatically compressed timelines – from 18 months to 76 days in one AWS case study. While this productivity gain is significant, it often occurs without corresponding security control adaptations.

Threat Assessment Framework for AI Coding Tools

Security teams need structured approaches to evaluate AI coding tool risks. Adapting the STRIDE threat modeling framework to AI coding yields six threat categories:

Spoofing: AI models impersonating legitimate code patterns while introducing backdoors

Tampering: Malicious modification of AI-generated code during the development process

Repudiation: Difficulty attributing code authorship when AI assists in generation

Information Disclosure: Unintentional exposure of proprietary algorithms or business logic

Denial of Service: AI models consuming excessive computational resources or generating code that loops indefinitely

Elevation of Privilege: AI agents operating with unnecessary system permissions

Implementing continuous security assessment requires monitoring AI model behavior, validating generated code through automated security scanning, and maintaining audit trails for all AI-assisted development activities.
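
One way to make such an audit trail tamper-evident is to chain entries together by hash, so that editing or deleting an earlier record invalidates everything after it. The following is a minimal in-memory sketch; the event fields (`tool`, `file`, `action`) are hypothetical, and a real deployment would also need durable, access-controlled storage.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash, event):
    """Hash the previous entry's hash together with this event."""
    payload = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_event(log, event):
    """Append an event to the log, linking it to the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    entry = {
        "prev": prev_hash,
        "event": event,
        "hash": _entry_hash(prev_hash, event),
    }
    log.append(entry)
    return entry


def verify_log(log):
    """Return True only if every entry still matches its recorded hash."""
    prev_hash = GENESIS_HASH
    for entry in log:
        expected = _entry_hash(prev_hash, entry["event"])
        if entry["prev"] != prev_hash or entry["hash"] != expected:
            return False
        prev_hash = entry["hash"]
    return True
```

For example, after two calls to `append_event(log, {...})`, `verify_log(log)` returns `True`, and it returns `False` if any recorded event is later modified in place.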

Defense Strategies and Security Controls

Effective protection against AI coding tool threats requires multi-layered security controls adapted for the unique challenges of AI-assisted development.

Endpoint Detection and Response (EDR) systems must be enhanced to monitor local AI model execution. This includes tracking resource consumption patterns, monitoring file system access by AI processes, and detecting unusual network behavior from development environments.
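
As a rough illustration of what an endpoint agent might check, the sketch below probes the loopback interface for ports that popular local runtimes are commonly reported to use by default. The port-to-runtime mapping is an assumption to verify against your own fleet, and an open port is only a hint: port 8080 in particular is used by many unrelated services.

```python
import socket

# Assumed default ports for some local inference runtimes; verify before use.
CANDIDATE_PORTS = {
    11434: "ollama",
    1234: "lm-studio",
    8080: "llama.cpp server",
}


def is_port_open(port, host="127.0.0.1", timeout=0.25):
    """Return True if something accepts TCP connections on host:port."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
        sock.settimeout(timeout)
        return sock.connect_ex((host, port)) == 0


def probe_local_inference(candidates=CANDIDATE_PORTS):
    """Return the subset of candidate ports that are currently listening."""
    return {
        port: label
        for port, label in candidates.items()
        if is_port_open(port)
    }
```

A probe like this complements, rather than replaces, process and file-system monitoring, since a model can run without exposing any network listener at all.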

Code security scanning must be integrated directly into AI-assisted workflows:

- Static Application Security Testing (SAST) on all AI-generated code

- Software Composition Analysis (SCA) to validate AI-suggested dependencies

- Interactive Application Security Testing (IAST) during AI-assisted development cycles

- Container scanning for AI models and associated runtime environments
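
To make the SAST step concrete, here is a deliberately small static check for AI-generated Python, built on the standard library's `ast` module. The `RISKY_CALLS` set and the `shell=True` rule are illustrative assumptions, not a complete rule set; dedicated SAST tools cover far more patterns and languages.

```python
import ast

# Illustrative deny-list of calls that usually deserve human review.
RISKY_CALLS = {"eval", "exec", "os.system", "pickle.loads"}


def _dotted_name(node):
    """Rebuild a dotted name such as 'os.system' from an AST node."""
    if isinstance(node, ast.Name):
        return node.id
    if isinstance(node, ast.Attribute):
        base = _dotted_name(node.value)
        return f"{base}.{node.attr}" if base else None
    return None


def scan_generated_code(source):
    """Return sorted (line_number, finding) pairs for risky calls."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.Call):
            continue
        name = _dotted_name(node.func)
        if name in RISKY_CALLS:
            findings.append((node.lineno, name))
        elif name and name.startswith("subprocess."):
            # Flag subprocess calls that pass a literal shell=True.
            for keyword in node.keywords:
                if (
                    keyword.arg == "shell"
                    and isinstance(keyword.value, ast.Constant)
                    and keyword.value.value is True
                ):
                    findings.append((node.lineno, f"{name}(shell=True)"))
    return sorted(findings)
```

Because the check parses the code instead of matching text, it ignores occurrences of `eval` inside comments or strings, but it will also miss aliased imports such as `from os import system`.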

Organizations should also apply zero-trust principles to AI coding environments, requiring explicit verification before any AI model or agent accesses code repositories, documentation, or system specifications.

Privacy and Data Protection Implications

AI coding tools create significant privacy risks for enterprise intellectual property. Data residency concerns arise when proprietary code is processed by AI models, regardless of whether processing occurs locally or in the cloud.

Key privacy protection measures include:

- Data classification policies for code processed by AI systems

- Encryption at rest and in transit for all AI model interactions

- Access logging and monitoring for AI tool usage across development teams

- Data retention policies governing AI model training data and inference logs
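
A data classification policy only helps if tooling can enforce it. The sketch below shows one possible shape for such a gate: paths are classified by glob pattern (first match wins), and each kind of AI tool is allowed to process only certain labels. Every pattern, label, and tool name here is hypothetical.

```python
from fnmatch import fnmatchcase

# Hypothetical rules, evaluated in order: (glob pattern, classification label).
CLASSIFICATION_RULES = [
    ("*.pem", "restricted"),
    ("secrets/*", "restricted"),
    ("src/billing/*", "confidential"),
    ("*", "internal"),  # fallback for everything else
]

# Hypothetical policy: labels each kind of AI tool is allowed to process.
ALLOWED_LABELS = {
    "cloud_assistant": {"internal"},
    "approved_local_model": {"internal", "confidential"},
}


def classify(path):
    """Return the label of the first rule whose pattern matches the path."""
    for pattern, label in CLASSIFICATION_RULES:
        if fnmatchcase(path, pattern):
            return label
    return "restricted"  # fail closed if no rule matches


def may_process(path, tool):
    """Return True if `tool` is allowed to read the file at `path`."""
    return classify(path) in ALLOWED_LABELS.get(tool, set())
```

Unknown tools get an empty allowance, so the gate denies by default, which matches the unvetted-model concern raised earlier in the article.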

Organizations must also consider regulatory compliance implications under frameworks like GDPR, CCPA, and industry-specific regulations. AI-generated code may inadvertently include personal data or create compliance violations if not properly governed.

What This Means

The rapid adoption of AI coding tools represents a fundamental shift in enterprise security risk profiles. Traditional perimeter-based security models are insufficient for managing AI-assisted development environments where inference increasingly occurs on local devices beyond network visibility.

Security teams must evolve their strategies to address the unique challenges of AI coding tools: implementing endpoint-focused monitoring, adapting code security scanning for AI-generated content, and developing governance frameworks for local AI model usage. The productivity benefits of AI coding assistants are undeniable, but they require corresponding investments in security infrastructure and processes.

The emergence of self-improving AI agents and hyperagents will further complicate this landscape, requiring continuous adaptation of security controls and threat assessment methodologies. Organizations that proactively address these challenges will be better positioned to safely harness the productivity benefits of AI-assisted development.

FAQ

Q: How can security teams monitor AI coding tools running locally on developer machines?

A: Implement enhanced EDR solutions that track AI model execution, monitor resource consumption patterns, and log file system access. Deploy endpoint agents that can identify and report on local AI model usage while maintaining developer productivity.

Q: What are the main security risks of AI-generated code in enterprise environments?

A: Primary risks include supply chain contamination through malicious training data, credential exposure in generated code, dependency confusion attacks, and loss of audit trails for code authorship. AI models may also inadvertently reproduce vulnerable code patterns from training data.

Q: How should organizations govern the use of AI coding assistants to maintain security compliance?

A: Establish clear policies for approved AI models, implement mandatory security scanning for all AI-generated code, maintain audit trails for AI tool usage, and ensure data classification policies cover AI-processed intellectual property. Regular security assessments of AI coding workflows are essential.
