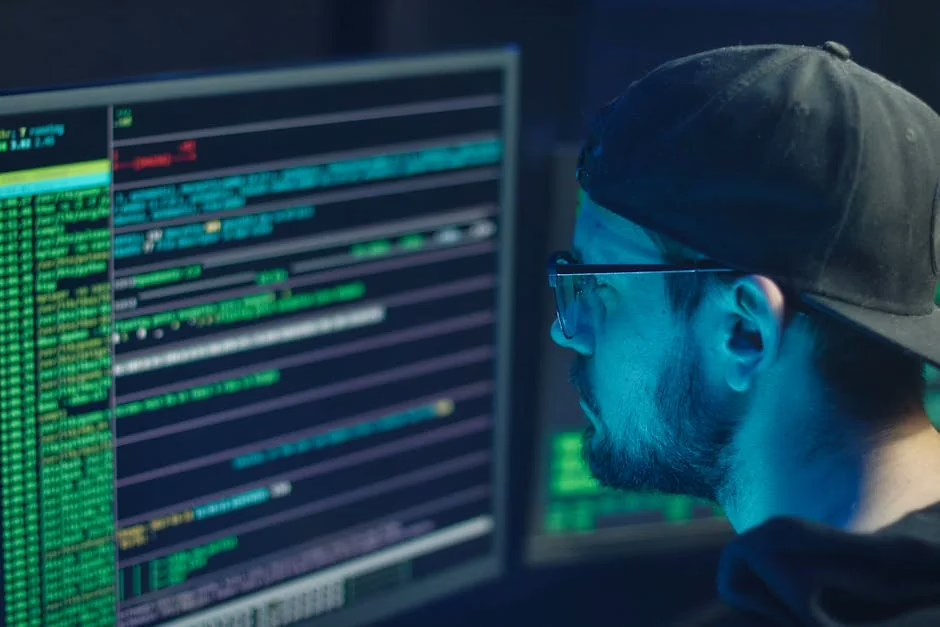

AI-powered coding assistants such as GitHub Copilot, Cursor, and other IDE-integrated developer tools are transforming programming workflows, but they also introduce security risks that organizations must address. These tools process sensitive source code, intellectual property, and proprietary algorithms, and many send that material to external servers for processing, creating attack vectors that traditional security frameworks were not designed to cover.

Code Injection and Poisoning Attacks

AI coding tools are an attractive target for supply chain attacks that rely on training data manipulation and prompt injection. Attackers can exploit them in several ways:

Training Data Poisoning: Malicious actors can contribute vulnerable code patterns to public repositories that AI models use for training. When developers use tools like Copilot, these poisoned patterns may be suggested as “best practices,” introducing backdoors and security flaws into production systems.

Prompt Injection Attacks: Attackers can plant instructions in code comments or documentation that an AI coding assistant later reads as context, manipulating it into generating vulnerable code. Because tools like Cursor treat natural-language text as input, these instructions can slip past security controls that only inspect executable code.

Model Extraction: Adversaries can reverse-engineer proprietary AI models by analyzing code suggestions, potentially exposing intellectual property and training methodologies. This represents a significant threat to organizations investing in custom AI development tools.

Defensive measures include implementing code review protocols specifically designed for AI-generated content, establishing baseline security scanning for all AI-suggested code, and maintaining strict version control with attribution tracking for AI-assisted commits.
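As one way to make attribution tracking concrete, the sketch below reads a commit message and reports whether it carries an AI-assistance trailer. The trailer name `AI-Assisted` and the function `ai_attribution` are assumptions made for illustration; git does not define a standard trailer for this, so a team would need to agree on its own convention.

```python
import re
from typing import Optional

# Hypothetical trailer name; pick whatever convention your team agrees on.
TRAILER = "AI-Assisted"
_TRAILER_RE = re.compile(
    rf"^{re.escape(TRAILER)}:[ \t]*(.+)$", re.IGNORECASE | re.MULTILINE
)


def ai_attribution(commit_message: str) -> Optional[str]:
    """Return the value of the AI-Assisted trailer, or None if it is absent."""
    # Trailers are expected in the final paragraph of a commit message.
    last_paragraph = commit_message.strip().split("\n\n")[-1]
    match = _TRAILER_RE.search(last_paragraph)
    return match.group(1).strip() if match else None
```

A CI job or commit hook could call this on each commit message and route commits that carry the trailer to the AI-specific review protocol.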

Data Exfiltration and Privacy Vulnerabilities

AI coding tools create unprecedented data exposure risks by transmitting source code to external servers for processing. This data flow introduces multiple threat vectors that security teams must address:

Intellectual Property Leakage: When developers use cloud-based AI assistants, proprietary algorithms, business logic, and sensitive configurations are transmitted to third-party servers. Even with encryption in transit, this data may be stored, analyzed, or inadvertently exposed through data breaches.

Credential Harvesting: AI tools often process configuration files, environment variables, and embedded credentials. Malicious actors with access to AI service logs could extract API keys, database passwords, and authentication tokens.

Cross-Tenant Data Bleeding: Multi-tenant AI services risk exposing one organization’s code patterns to another through model contamination or inadequate data isolation. This creates potential for competitive intelligence gathering and trade secret theft.

Compliance Violations: Organizations in regulated industries face additional risks when AI tools process code containing personal data, financial information, or healthcare records, potentially violating GDPR, HIPAA, or PCI DSS requirements.

Mitigation strategies include implementing data loss prevention (DLP) policies for AI tool usage, establishing air-gapped development environments for sensitive projects, and conducting regular security assessments of AI service providers’ data handling practices.
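A DLP policy for AI tools could include a redaction step that runs before any file content leaves the developer’s machine. The following is a minimal sketch under that assumption: the two patterns and the `redact_secrets` helper are hypothetical and far from exhaustive, and a production rule set would cover many more credential formats.

```python
import re
from typing import Tuple

# Illustrative patterns only (assumptions, not a complete rule set).
# Long-term AWS access key IDs: "AKIA" followed by 16 uppercase letters or digits.
AWS_KEY_ID = re.compile(r"\bAKIA[0-9A-Z]{16}\b")
# NAME=value or NAME: value where NAME suggests a credential.
ASSIGNMENT = re.compile(
    r"\b([A-Z0-9_]*(?:PASSWORD|SECRET|TOKEN|API_KEY)[A-Z0-9_]*)(\s*[=:]\s*)(\S+)",
    re.IGNORECASE,
)


def redact_secrets(text: str) -> Tuple[str, int]:
    """Replace likely secrets with a placeholder.

    Returns the redacted text and the number of substitutions made.
    """
    text, key_hits = AWS_KEY_ID.subn("[REDACTED]", text)
    # Keep the variable name and separator, replace only the value.
    text, assign_hits = ASSIGNMENT.subn(r"\1\2[REDACTED]", text)
    return text, key_hits + assign_hits
```

The substitution count gives security teams a simple signal to log or alert on when developers repeatedly expose credentials to an assistant.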

IDE Integration Security Gaps

Integrated Development Environment (IDE) plugins and extensions for AI coding tools introduce privilege escalation risks and expand the attack surface of development environments:

Extension Vulnerabilities: AI coding extensions often require broad permissions to access file systems, network connections, and system resources. Compromised extensions can serve as persistence mechanisms for advanced persistent threats (APTs).

Man-in-the-Middle Attacks: IDE integrations that communicate with AI services over insecure channels are vulnerable to interception and manipulation. Attackers can modify code suggestions in real time, introducing malicious functionality.
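On the client side, the basic protection against interception is to verify the server certificate and hostname and to refuse old protocol versions. A minimal sketch using Python’s standard `ssl` module follows; the function name `strict_tls_context` is an assumption, and real extensions may use a different language and HTTP stack.

```python
import ssl


def strict_tls_context() -> ssl.SSLContext:
    """Build a client TLS context that verifies the server and rejects TLS < 1.2."""
    # create_default_context() enables certificate and hostname verification
    # and loads the system's trusted CA certificates.
    ctx = ssl.create_default_context()
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx
```

The context can then be passed to a client call such as `urllib.request.urlopen(url, context=strict_tls_context())`. The anti-pattern to look for in reviews is code that sets `check_hostname = False` or `verify_mode = ssl.CERT_NONE` to silence certificate errors.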

Local Storage Exploitation: AI tools cache code snippets, conversation histories, and model outputs locally. Inadequate encryption of these caches creates opportunities for data theft through endpoint compromise.

Authentication Bypass: Weak authentication mechanisms in AI tool integrations can allow unauthorized access to development environments and source code repositories.

Security controls should include endpoint detection and response (EDR) monitoring for AI tool activities, mandatory code signing for all IDE extensions, and implementation of zero-trust network architectures for development environments.

Vulnerability Introduction Through AI Suggestions

AI coding assistants can inadvertently introduce common vulnerability patterns into codebases, requiring specialized detection and remediation approaches:

SQL Injection Patterns: AI models trained on legacy code may suggest outdated database interaction patterns that are vulnerable to SQL injection attacks. These suggestions often appear syntactically correct but build queries by concatenating user input into the SQL string instead of using parameterized statements.
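To illustrate the difference, here is a small sketch using Python’s built-in `sqlite3` module. The `users` table and both function names are made up for the example; the point is only the contrast between string-built SQL and a parameterized query.

```python
import sqlite3


def find_user_unsafe(conn: sqlite3.Connection, name: str):
    # Vulnerable: user input is spliced directly into the SQL text.
    query = f"SELECT id, name FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str):
    # Parameterized: the driver passes name as data, never as SQL.
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (name,)
    ).fetchall()


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])

# A classic injection payload: it turns the WHERE clause into a tautology.
payload = "x' OR '1'='1"
print(len(find_user_unsafe(conn, payload)))  # every row is returned
print(len(find_user_safe(conn, payload)))    # no rows match the literal string
```

Both versions look equally plausible in an autocomplete popup, which is why reviewers should check how the query string is assembled rather than whether the code runs.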

Cross-Site Scripting (XSS) Vulnerabilities: Web development suggestions from AI tools frequently omit crucial output encoding and input validation, creating pathways for XSS exploitation.
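A minimal sketch of output encoding, using the standard library’s `html.escape`; the `render_comment` function is a hypothetical stand-in for whatever templating code an assistant might generate.

```python
import html


def render_comment(comment: str) -> str:
    """Wrap user-supplied text in a paragraph, encoding HTML special characters."""
    # html.escape converts &, <, > and (by default) both quote characters.
    return f"<p>{html.escape(comment)}</p>"


print(render_comment("<script>alert(1)</script>"))
# <p>&lt;script&gt;alert(1)&lt;/script&gt;</p>
```

This encoding suits HTML element content and quoted attribute values. Other contexts, such as inline JavaScript or URLs, need their own encoding rules, so a template engine with automatic contextual escaping is usually the safer choice.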

Insecure Cryptographic Implementations: AI assistants may suggest deprecated encryption algorithms, weak key generation methods, or improper certificate validation, compromising application security.
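As an example of what a safer suggestion might look like, the sketch below stores a password using salted PBKDF2 with a random salt from the `secrets` module, rather than a single unsalted MD5 or SHA-1 hash and the non-cryptographic `random` module. The function names and the iteration count are assumptions for illustration; the count should be tuned to your own hardware and policy.

```python
import hashlib
import hmac
import secrets
from typing import Tuple

# Assumed work factor; adjust to your own latency budget and policy.
ITERATIONS = 600_000


def hash_password(password: str) -> Tuple[bytes, bytes]:
    """Return (salt, derived_key) for storage."""
    # secrets draws from the OS CSPRNG, unlike the random module.
    salt = secrets.token_bytes(16)
    key = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return salt, key


def verify_password(password: str, salt: bytes, expected: bytes) -> bool:
    """Recompute the key and compare in constant time."""
    key = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return hmac.compare_digest(key, expected)
```

In review, red flags include `hashlib.md5` or `hashlib.sha1` applied directly to passwords, keys generated with `random`, and hard-coded salts.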

Race Condition Vulnerabilities: Complex concurrency patterns suggested by AI tools may lack proper synchronization, introducing race conditions such as time-of-check to time-of-use (TOCTOU) vulnerabilities.
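A common TOCTOU shape is "check whether a file exists, then create it". The sketch below contrasts that pattern with an atomic create using `os.open` and `O_EXCL`. Both function names are hypothetical, and the example covers only this file-creation case, not race conditions in general.

```python
import os


def create_racy(path: str, data: bytes) -> bool:
    if os.path.exists(path):          # time of check
        return False
    # Another process can create or replace path between the check and the open.
    with open(path, "wb") as f:       # time of use
        f.write(data)
    return True


def create_exclusive(path: str, data: bytes) -> bool:
    """Create path only if it does not already exist, as one atomic step."""
    try:
        # O_CREAT | O_EXCL makes the OS fail if the file already exists.
        fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_EXCL, 0o600)
    except FileExistsError:
        return False
    with os.fdopen(fd, "wb") as f:
        f.write(data)
    return True
```

The second version removes the gap between check and use by letting the operating system perform both in a single call.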

Organizations should run static application security testing (SAST) tools configured to flag these vulnerability classes in all code, including AI-suggested changes, establish security-focused code review processes, and train developers on secure coding practices in AI-assisted environments.

Defense Strategies and Best Practices

Implementing comprehensive security measures for AI coding tools requires a multi-layered approach addressing both technical and organizational vulnerabilities:

Network Segmentation: Isolate development environments using AI tools from production networks and sensitive data repositories. Implement strict firewall rules and network access controls.

Code Provenance Tracking: Maintain detailed logs of AI-generated code suggestions, including timestamps, model versions, and prompt contexts. This enables rapid identification and remediation of compromised code.
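One possible shape for such a log entry is sketched below. The `SuggestionRecord` fields and helper names are assumptions, not a format defined by any tool. The sketch stores SHA-256 hashes of the prompt and suggestion rather than the raw text, a design choice that keeps the log itself from becoming another place where source code leaks; teams that need the full prompt context would store it in an access-controlled location instead.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class SuggestionRecord:
    timestamp: str          # UTC, ISO 8601
    model_version: str      # as reported by the tool (hypothetical field)
    prompt_sha256: str      # hash of the prompt context
    suggestion_sha256: str  # hash of the accepted suggestion
    file_path: str


def _sha256(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def make_record(model_version: str, prompt: str, suggestion: str,
                file_path: str) -> SuggestionRecord:
    """Build a provenance record for one accepted suggestion."""
    return SuggestionRecord(
        timestamp=datetime.now(timezone.utc).isoformat(),
        model_version=model_version,
        prompt_sha256=_sha256(prompt),
        suggestion_sha256=_sha256(suggestion),
        file_path=file_path,
    )


def to_log_line(record: SuggestionRecord) -> str:
    """Serialize a record as one JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)
```

If a model version is later found to be compromised, filtering the log by `model_version` gives the list of files to re-review.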

Security Training Programs: Develop specialized training curricula addressing AI-specific security risks, including prompt injection recognition, secure prompt engineering, and AI-generated code validation techniques.

Incident Response Planning: Establish specific procedures for AI-related security incidents, including model compromise detection, data breach notification protocols, and contaminated code remediation workflows.

Vendor Risk Assessment: Conduct thorough security evaluations of AI coding tool providers, including penetration testing, compliance audits, and data handling assessments.

What This Means

The rapid adoption of AI coding tools like Copilot and Cursor represents both a significant productivity opportunity and a substantial security challenge for organizations. While these tools can accelerate development cycles and reduce coding errors, they also introduce novel attack vectors that traditional security measures aren’t designed to address.

Security teams must update their threat models to account for AI-specific vulnerabilities, add monitoring and detection for AI tool activity, and establish governance frameworks for AI tool usage. The goal is a risk-based approach that maintains robust protection against emerging threats without stifling developer productivity.

Organizations that proactively address these security challenges will be better positioned to leverage AI coding tools safely and maintain competitive advantages in software development while protecting their intellectual property and sensitive data.

FAQ

Q: Are AI coding tools like Copilot safe for enterprise use?

A: AI coding tools can be used safely in enterprise environments when proper security controls are in place, including data loss prevention policies, code review processes, and network segmentation, along with measures that address the AI-specific risks described above.

Q: How can developers identify potentially vulnerable AI-generated code?

A: Developers should use static application security testing (SAST) tools, implement mandatory code reviews for AI-suggested code, and maintain awareness of common vulnerability patterns like SQL injection and XSS that AI tools may inadvertently suggest.

Q: What data privacy concerns exist with cloud-based AI coding assistants?

A: Cloud-based AI tools transmit source code to external servers, potentially exposing intellectual property, embedded credentials, and sensitive business logic. Organizations should evaluate data handling practices, implement DLP policies, and consider on-premises alternatives for sensitive projects.
