Microsoft has launched MAI-Image-2-Efficient, a production-ready text-to-image model that is 41% cheaper and 22% faster than the flagship MAI-Image-2, according to VentureBeat. The model is available immediately through Microsoft Foundry and MAI Playground. It is priced at $5 per million text tokens and $19.50 per million image tokens, and it achieves 4x greater throughput efficiency per GPU on NVIDIA H100 hardware.

This development reflects broader enterprise adoption trends where organizations are integrating vision-language models (VLMs) and multimodal capabilities to solve complex business problems. Recent research from Databricks demonstrates that multi-step agentic approaches outperform single-turn retrieval systems by 21% on hybrid data queries, highlighting the enterprise value of sophisticated multimodal architectures.

Enterprise Cost Optimization Through Efficient Multimodal Models

Microsoft’s pricing strategy with MAI-Image-2-Efficient addresses a critical enterprise concern: balancing AI capability with operational costs. The 41% price reduction from the flagship MAI-Image-2 model makes production deployments more economically viable for large-scale enterprise applications.

Key cost advantages include:

- Reduced token costs: Text processing at $5 per million tokens and image generation at $19.50 per million tokens, 41% below the flagship model

- Improved GPU utilization: 4x greater throughput efficiency on H100 hardware

- Faster processing: 22% speed improvement reduces compute time requirements

- Lower latency: 40% lower p50 latency than Google’s Gemini models

Enterprise IT leaders can now justify multimodal AI investments with clearer ROI calculations. The model’s integration across Microsoft’s ecosystem, including Copilot and Bing, provides immediate deployment pathways for organizations already invested in Microsoft infrastructure.
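As a rough illustration of such an ROI calculation, the sketch below turns the quoted per-token prices into a per-request and monthly cost. The token counts per request and the monthly request volume are hypothetical placeholders, not figures from Microsoft, and the flagship estimate assumes the 41% reduction applies uniformly to both price components.

```python
# Prices quoted in the article, in USD per million tokens.
TEXT_PRICE_PER_M = 5.00
IMAGE_PRICE_PER_M = 19.50


def request_cost(text_tokens: int, image_tokens: int) -> float:
    """Return the USD cost of one generation request."""
    total = text_tokens * TEXT_PRICE_PER_M + image_tokens * IMAGE_PRICE_PER_M
    return total / 1_000_000


def monthly_cost(requests: int, text_tokens: int, image_tokens: int) -> float:
    """Return the USD cost of a month of identical requests."""
    return requests * request_cost(text_tokens, image_tokens)


def implied_flagship_cost(efficient_cost: float, reduction: float = 0.41) -> float:
    """Back out the flagship cost, ASSUMING the reduction applies uniformly."""
    return efficient_cost / (1 - reduction)


if __name__ == "__main__":
    # Hypothetical workload: 100,000 requests per month,
    # 200 text tokens and 4,000 image tokens per request.
    efficient = monthly_cost(100_000, 200, 4_000)
    print(f"Efficient model: ${efficient:,.2f} per month")
    print(f"Implied flagship: ${implied_flagship_cost(efficient):,.2f} per month")
```

Actual token counts per image depend on resolution and on how the service meters output, so the inputs should be replaced with measured values before the result is used in a budget.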

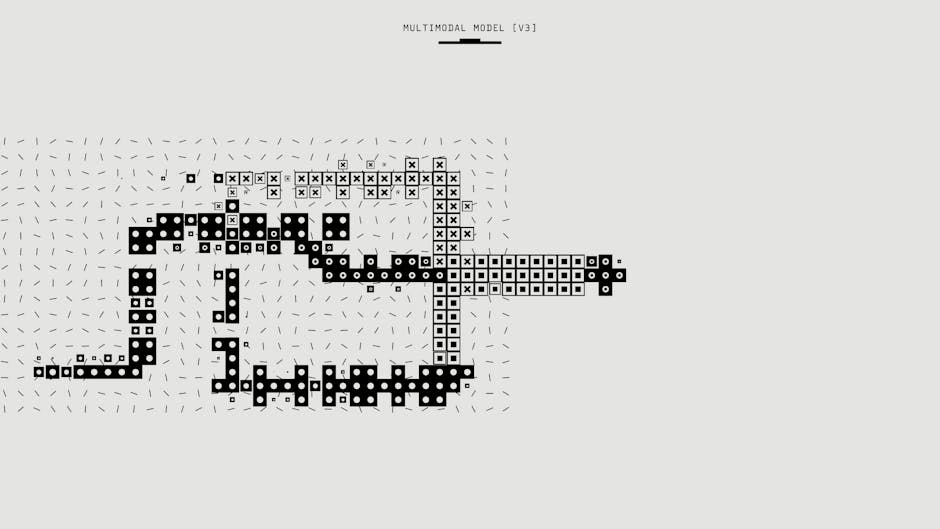

Architectural Considerations for Multimodal AI Integration

Successful enterprise deployment of multimodal AI requires careful architectural planning. Databricks research reveals that traditional retrieval-augmented generation (RAG) systems fail when queries combine structured data with unstructured content, such as sales figures alongside customer reviews.

Critical integration factors:

- Hybrid data handling: Multi-step agents excel at combining SQL databases with document repositories

- Scalability requirements: Single-turn RAG cannot handle complex enterprise knowledge tasks

- Infrastructure dependencies: GPU clusters must support both vision and language processing workloads

- API integration: RESTful endpoints for seamless integration with existing enterprise systems

Michael Bendersky, research director at Databricks, explains: “RAG works, but it doesn’t scale. If you want to make your agent even better, and you want to understand why you have declining sales, now you have to help the agent see the tables and look at the sales data.”
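The difference between single-turn retrieval and a multi-step approach can be shown with a toy example. This is a minimal sketch, not Databricks' system: the `sales` table, the review strings, and every function name are invented for illustration, and a naive keyword match stands in for a real document retriever.

```python
import sqlite3

# Hypothetical unstructured data: customer reviews.
REVIEWS = [
    "The widget battery dies within a week.",
    "Love the gadget, works as advertised.",
    "Widget support never answered my ticket.",
]


def build_demo_db() -> sqlite3.Connection:
    """Create an in-memory table of hypothetical quarterly sales."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE sales (product TEXT, quarter INTEGER, revenue REAL)")
    conn.executemany(
        "INSERT INTO sales VALUES (?, ?, ?)",
        [("widget", 1, 120.0), ("widget", 2, 90.0),
         ("gadget", 1, 80.0), ("gadget", 2, 95.0)],
    )
    return conn


def declining_products(conn: sqlite3.Connection) -> list:
    """Step 1 (structured): products whose revenue fell from Q1 to Q2."""
    rows = conn.execute(
        """
        SELECT q1.product
        FROM sales AS q1
        JOIN sales AS q2 ON q1.product = q2.product
        WHERE q1.quarter = 1 AND q2.quarter = 2 AND q2.revenue < q1.revenue
        ORDER BY q1.product
        """
    ).fetchall()
    return [row[0] for row in rows]


def reviews_for(product: str, reviews: list) -> list:
    """Step 2 (unstructured): keyword match standing in for document retrieval."""
    return [r for r in reviews if product.lower() in r.lower()]


def explain_declining_sales(conn: sqlite3.Connection, reviews: list) -> dict:
    """Step 3: combine the SQL result with the matching reviews."""
    return {p: reviews_for(p, reviews) for p in declining_products(conn)}


if __name__ == "__main__":
    print(explain_declining_sales(build_demo_db(), REVIEWS))
```

A single-turn retriever would see only the reviews and could not tell which product is actually losing revenue. The second step here depends on the output of the first, which is the property the multi-step framing relies on.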

Security and Compliance Framework for Vision-Language Models

Enterprise adoption of multimodal AI introduces new security considerations beyond traditional text-based models. Organizations must address data privacy concerns when processing images, videos, and audio alongside sensitive business documents.

Enterprise security requirements:

- Data encryption: End-to-end encryption for multimodal content in transit and at rest

- Access controls: Role-based permissions for different modality types

- Audit trails: Comprehensive logging of multimodal AI interactions

- Compliance alignment: GDPR, HIPAA, and industry-specific regulations for visual data

Microsoft’s approach through Microsoft Foundry provides enterprise-grade security controls, including private endpoint connectivity and customer-managed encryption keys. This infrastructure foundation enables organizations to deploy multimodal capabilities while maintaining regulatory compliance.
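Two of the requirements listed above, role-based permissions per modality and audit trails, can be sketched at the application layer. The example below is illustrative only: the role names, the modality sets, and the `authorize` helper are assumptions, and it does not represent any Microsoft API. A production system would delegate both concerns to the platform's identity and logging services.

```python
import json
import logging
from datetime import datetime, timezone

# Hypothetical mapping of roles to the modalities they may submit.
ROLE_MODALITIES = {
    "analyst": {"text", "image"},
    "clinician": {"text", "image", "audio"},
    "contractor": {"text"},
}

audit_log = logging.getLogger("multimodal.audit")


def authorize(user: str, role: str, modality: str) -> bool:
    """Check a role against a modality and record the decision.

    Unknown roles are denied by default. Every decision, allowed or
    not, is written to the audit logger as one JSON line.
    """
    allowed = modality in ROLE_MODALITIES.get(role, set())
    audit_log.info(json.dumps({
        "ts": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "role": role,
        "modality": modality,
        "allowed": allowed,
    }))
    return allowed


if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO, format="%(message)s")
    print(authorize("alice", "analyst", "image"))   # permitted by the table above
    print(authorize("bob", "contractor", "image"))  # not in the contractor set
```

Logging denied requests as well as permitted ones keeps the trail useful for compliance reviews, where attempted access often matters as much as granted access.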

Performance Benchmarking and Model Selection

The Stanford AI Index 2026 reports that AI model performance continues to improve despite predictions of a plateau. Enterprise teams need standardized benchmarking approaches to evaluate multimodal AI solutions.

Evaluation criteria for enterprise deployment:

- Latency requirements: Real-time versus batch processing needs

- Accuracy thresholds: Task-specific performance requirements

- Resource consumption: GPU memory and compute requirements

- Integration complexity: API compatibility and deployment overhead

Databricks’ KARLBench evaluation framework provides enterprise-focused benchmarks for hybrid data scenarios. Organizations should establish baseline performance metrics before implementing multimodal AI to measure improvement and ROI effectively.
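For the latency criterion, a baseline can be as simple as timing repeated calls and reporting percentiles such as p50 and p95. The sketch below uses the nearest-rank percentile definition. The `call` argument is a placeholder for whatever client function an organization actually invokes, and the threshold in the usage example is hypothetical.

```python
import math
import time
from typing import Callable, List, Sequence


def percentile(samples: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample such that at least
    pct percent of all samples are less than or equal to it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def measure_latencies(call: Callable[[], object], runs: int) -> List[float]:
    """Time `runs` sequential invocations of `call`, in seconds."""
    latencies = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        latencies.append(time.perf_counter() - start)
    return latencies


def meets_threshold(samples: Sequence[float], pct: float, limit_s: float) -> bool:
    """True if the chosen percentile is within the latency budget."""
    return percentile(samples, pct) <= limit_s


if __name__ == "__main__":
    # Stand-in workload; replace with a real model request.
    samples = measure_latencies(lambda: time.sleep(0.01), runs=20)
    print(f"p50={percentile(samples, 50):.4f}s p95={percentile(samples, 95):.4f}s")
    print("within 50 ms budget:", meets_threshold(samples, 95, 0.05))
```

Sequential timing like this measures per-request latency only. Throughput under concurrent load needs a separate test.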

Infrastructure Scaling for Multimodal Workloads

Global AI data centers now consume 29.6 gigawatts of power, equivalent to New York state’s peak demand, according to the Stanford AI Index. Enterprise organizations must plan infrastructure capacity carefully to support multimodal AI workloads without overwhelming existing systems.

Scaling considerations:

- GPU cluster management: Dedicated resources for vision and language processing

- Storage requirements: High-performance storage for image, video, and audio datasets

- Network bandwidth: Sufficient capacity for multimodal data transfer

- Power consumption: Energy-efficient deployment strategies

Microsoft’s efficiency improvements with MAI-Image-2-Efficient demonstrate how optimized models can reduce infrastructure demands while maintaining performance. Organizations should prioritize efficient models to minimize operational overhead and environmental impact.
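A back-of-the-envelope capacity estimate shows why per-GPU throughput matters for planning. In the sketch below, the baseline of 0.5 images per second per GPU and the target load are hypothetical. It also assumes the reported 4x throughput efficiency translates directly into 4x images per second per GPU, which may not hold for every workload.

```python
import math


def gpus_needed(target_images_per_s: float, per_gpu_images_per_s: float) -> int:
    """Return the minimum number of GPUs that sustains the target load."""
    if per_gpu_images_per_s <= 0:
        raise ValueError("per-GPU throughput must be positive")
    return math.ceil(target_images_per_s / per_gpu_images_per_s)


if __name__ == "__main__":
    target = 40.0                  # hypothetical peak load, images per second
    baseline_per_gpu = 0.5         # hypothetical flagship throughput per GPU
    efficient_per_gpu = baseline_per_gpu * 4   # assumes the 4x figure applies directly

    print("Flagship GPUs: ", gpus_needed(target, baseline_per_gpu))
    print("Efficient GPUs:", gpus_needed(target, efficient_per_gpu))
```

Under these assumptions the GPU count falls by the same factor as the throughput gain, which carries through to power and cooling budgets.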

What This Means

Multimodal AI represents a maturation point for enterprise artificial intelligence, moving beyond experimental implementations to production-ready solutions. Microsoft’s cost-effective image generation model and Databricks’ hybrid data processing research indicate that organizations can now deploy sophisticated multimodal capabilities with predictable costs and measurable outcomes.

Enterprise IT leaders should evaluate multimodal AI opportunities within existing infrastructure constraints while planning for increased compute and storage requirements. The technology’s ability to process diverse data types simultaneously opens new possibilities for business intelligence, customer service automation, and content generation workflows.

Successful implementation requires coordinated planning across security, infrastructure, and application development teams to ensure seamless integration with existing enterprise systems while maintaining compliance and performance standards.

FAQ

What are the primary cost benefits of multimodal AI for enterprises?

More efficient models lower the cost of running multimodal workloads in production. Microsoft’s MAI-Image-2-Efficient, for example, offers a 41% cost reduction and 4x greater throughput efficiency per GPU compared with the flagship MAI-Image-2.

How do multimodal AI systems handle enterprise security requirements?

Enterprise multimodal AI platforms provide end-to-end encryption, role-based access controls, comprehensive audit trails, and compliance frameworks for GDPR, HIPAA, and industry-specific regulations across all data modalities.

What infrastructure changes are needed for multimodal AI deployment?

Organizations need GPU clusters optimized for both vision and language processing, high-performance storage for diverse data types, sufficient network bandwidth for multimodal transfers, and energy-efficient deployment strategies to manage increased power consumption.

