Microsoft launched MAI-Image-2-Efficient this week, delivering production-ready text-to-image generation at 41% lower cost than its flagship model. According to Microsoft’s announcement, the new model processes images 22% faster while achieving 4x greater throughput efficiency per GPU on NVIDIA H100 hardware.

The release marks Microsoft’s fastest AI model turnaround yet, signaling the company’s commitment to building an independent AI stack beyond OpenAI partnerships. Priced at $5 per million text tokens and $19.50 per million image tokens, the model undercuts the flagship MAI-Image-2’s $33 rate, a gap of roughly 41% that matches the headline savings figure.
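
The quoted rates can be checked against the 41% figure with a few lines of arithmetic. The sketch below assumes the $33 figure is the flagship’s per-million image-token rate, which is what the 41% reduction implies. The function names and the sample workload are illustrative and not part of any Microsoft SDK.

```python
# Prices quoted in the article, in USD per one million tokens.
EFFICIENT_TEXT_PER_M = 5.00
EFFICIENT_IMAGE_PER_M = 19.50
FLAGSHIP_IMAGE_PER_M = 33.00  # assumption: this is an image-token rate


def percent_reduction(old: float, new: float) -> float:
    """Return how much cheaper `new` is than `old`, as a percentage."""
    return (old - new) / old * 100.0


def estimate_cost(text_tokens: int, image_tokens: int) -> float:
    """Estimate a bill in USD at the efficient model's quoted rates."""
    total = (text_tokens * EFFICIENT_TEXT_PER_M
             + image_tokens * EFFICIENT_IMAGE_PER_M)
    return total / 1_000_000


if __name__ == "__main__":
    saving = percent_reduction(FLAGSHIP_IMAGE_PER_M, EFFICIENT_IMAGE_PER_M)
    print(f"{saving:.1f}% cheaper per image token")
    # Hypothetical workload: 2M text tokens and 10M image tokens.
    print(f"${estimate_cost(2_000_000, 10_000_000):.2f}")
```

The reduction works out to about 40.9%, which rounds to the 41% quoted in the announcement.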

Technical Architecture and Performance Metrics

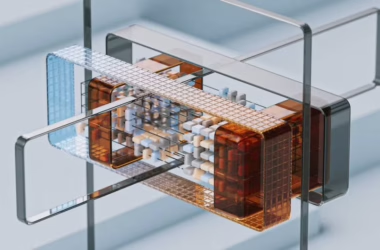

MAI-Image-2-Efficient is tuned to hold output quality while cutting compute cost. The model achieves 4x greater throughput efficiency on NVIDIA H100 GPUs at 1024×1024 resolution, a critical benchmark for enterprise deployment scenarios.

Microsoft’s engineering team implemented several key architectural improvements:

- Optimized inference pipeline reducing latency by 22%

- Enhanced GPU utilization through improved memory management

- Streamlined neural network layers maintaining visual fidelity

- Advanced quantization techniques reducing model size without quality loss
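
The article does not say which quantization scheme the model uses. As a generic illustration of the idea, the sketch below shows symmetric per-tensor int8 quantization, one common textbook approach and not necessarily Microsoft’s. Storing weights as 8-bit integers instead of 32-bit floats cuts storage by 4x, at the price of a rounding error of at most half the scale per weight. The weight values are hypothetical.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    # One shared scale for the whole tensor; fall back to 1.0 for all-zero input.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate float weights from the integer codes."""
    return [q * scale for q in quantized]


# Hypothetical weights, invented for illustration.
weights = [0.12, -0.5, 0.33, 1.27, -1.0]
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

if __name__ == "__main__":
    print(codes, scale)
    print(f"worst-case rounding error: {max_error:.6f}")
```

Production systems typically use per-channel scales, calibration data, or lower-precision float formats, so treat this only as a picture of the size-versus-precision trade-off.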

The model outperforms Google’s competing offerings by an average of 40% on p50 latency benchmarks, specifically beating Gemini 3.1 Flash, Gemini 3.1 Flash Image, and Gemini 3 Pro Image. This performance advantage stems from Microsoft’s focus on inference optimization rather than pure parameter scaling.
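
For readers unfamiliar with the metric, p50 latency is the median request latency: half of requests finish faster, half slower. The sketch below shows how a relative p50 comparison could be computed, assuming the 40% figure means a 40% lower median. The sample latencies are invented for illustration and are not measurements of any real model.

```python
from statistics import median


def p50(samples_ms: list[float]) -> float:
    """p50 latency is the median of the observed request latencies."""
    return median(samples_ms)


def percent_lower(baseline_ms: float, candidate_ms: float) -> float:
    """Relative reduction of the candidate's latency versus the baseline."""
    return (baseline_ms - candidate_ms) / baseline_ms * 100.0


# Hypothetical per-request latencies in milliseconds.
baseline = [5200.0, 4800.0, 5000.0, 9100.0, 5100.0]
candidate = [3100.0, 2900.0, 3000.0, 6400.0, 3050.0]

if __name__ == "__main__":
    b, c = p50(baseline), p50(candidate)
    print(f"baseline p50 = {b} ms, candidate p50 = {c} ms")
    print(f"{percent_lower(b, c):.0f}% lower p50")
```

Because the median ignores outliers such as the slow 9100 ms request, a p50 comparison says little about tail latency; p95 or p99 figures would be needed for that.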

Enterprise Deployment and Integration Strategy

Microsoft’s two-model strategy reflects broader industry trends toward specialized AI offerings. The company now provides enterprises with clear options: flagship quality for premium applications or efficient processing for high-volume workflows.

The model launches immediately across Microsoft Foundry and MAI Playground with no waitlist restrictions. This immediate availability contrasts with typical AI model releases that often include gradual rollouts or access limitations.

Integration extends beyond standalone services. According to VentureBeat’s coverage, the model rolls out across Copilot and Bing, with additional product surfaces following. This comprehensive integration demonstrates Microsoft’s commitment to embedding advanced AI capabilities throughout its ecosystem.

Competitive Landscape and Market Positioning

Microsoft’s pricing strategy directly challenges hyperscaler competitors while establishing clear performance benchmarks. The 41% cost reduction addresses enterprise concerns about AI deployment expenses, particularly for high-volume image generation workflows.

Meanwhile, other major players continue advancing their offerings. Anthropic announced Claude Managed Agents, focusing on enterprise orchestration rather than image generation. This platform embeds orchestration logic directly in the AI model layer, potentially creating vendor lock-in scenarios but simplifying deployment complexity.

The competitive dynamics highlight different strategic approaches:

- Microsoft: Cost optimization and ecosystem integration

- Anthropic: Enterprise orchestration and workflow automation

- Google: Continued focus on multimodal capabilities across Gemini variants

Technical Challenges and Data Drift Considerations

Enterprise AI deployments face ongoing challenges beyond initial model performance. VentureBeat’s analysis of data drift highlights one of the main ones for production AI systems.

Data drift occurs when the statistical properties of input data change over time, potentially degrading model accuracy. For image generation models like MAI-Image-2-Efficient, drift and the related production pressures show up as:

- Evolving visual trends affecting training data relevance

- Changing user prompt patterns requiring model adaptation

- Hardware optimization needs as GPU architectures advance

- Security considerations as adversaries develop new attack vectors

Microsoft’s rapid release cycle suggests proactive approaches to addressing these challenges through continuous model refinement and optimization.
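
The data drift definition above can be made concrete with a small monitoring check. The sketch below computes the Population Stability Index (PSI), one common drift statistic, on a single numeric feature. Using prompt length as that feature, the bin count, and the sample values are all assumptions for illustration; nothing here reflects how Microsoft monitors its models.

```python
import math


def psi(reference: list[float], current: list[float], bins: int = 5) -> float:
    """Population Stability Index between two samples of one numeric feature."""
    lo, hi = min(reference), max(reference)
    # Equal-width bins over the reference range; guard against zero width.
    width = (hi - lo) / bins or 1.0

    def shares(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Clamp so values outside the reference range land in the edge bins.
            idx = int((v - lo) / width)
            counts[max(0, min(bins - 1, idx))] += 1
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    ref, cur = shares(reference), shares(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


# Hypothetical prompt lengths (in tokens) from two time windows.
last_month = [8, 10, 12, 9, 11, 10, 13, 9, 10, 12]
this_week = [20, 25, 22, 30, 28, 24, 26, 21, 27, 23]

if __name__ == "__main__":
    print(f"no change: {psi(last_month, last_month):.3f}")
    print(f"shifted:   {psi(last_month, this_week):.3f}")
```

A widely used rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift, though those cutoffs are conventions and should be tuned per feature.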

Open Source Developments and Alternative Approaches

The broader AI landscape includes significant open-source contributions alongside proprietary releases. LightOn’s LightOnOCR-2-1B, distributed through Hugging Face, demonstrates an alternative approach to specialized AI tasks.

This 1-billion parameter model focuses on optical character recognition (OCR) with end-to-end document processing capabilities. Released under Apache 2.0 licensing, it enables:

- Clean text extraction from PDF documents

- Bounding box detection for embedded figures

- Community fine-tuning for domain-specific applications

- Layout-oriented workflows without multi-stage pipelines

These open-source initiatives provide enterprises with alternatives to proprietary solutions while advancing collective AI research capabilities.

What This Means

Microsoft’s MAI-Image-2-Efficient release signals a maturation phase in enterprise AI deployment. The focus on cost optimization and performance efficiency reflects real-world deployment constraints rather than pure capability advancement.

This trend toward specialized, optimized models suggests the industry is moving beyond the “bigger is better” paradigm toward practical, deployment-ready solutions. Enterprises benefit from clearer cost structures and published performance figures, enabling more predictable AI integration planning.

The competitive landscape increasingly emphasizes ecosystem integration over standalone model capabilities. Microsoft’s comprehensive platform approach, Anthropic’s orchestration focus, and open-source alternatives create diverse options for enterprise AI strategies.

FAQ

What makes MAI-Image-2-Efficient different from the flagship model?

The efficient variant delivers 41% lower costs and 22% faster processing while maintaining production-quality output through optimized neural network architecture and improved GPU utilization.

How does Microsoft’s pricing compare to competitors?

At $19.50 per million image tokens, MAI-Image-2-Efficient undercuts the flagship model’s $33 rate by about 41%. Against Google, the stated advantage is speed rather than price: the model beats the listed Gemini variants by an average of 40% on p50 latency benchmarks.

What enterprise integration options are available?

The model launches immediately in Microsoft Foundry and MAI Playground, with rollouts across Copilot and Bing, plus additional product integrations planned for comprehensive ecosystem coverage.

Further Reading

For a side-by-side look at the flagship models in play, see our full 2026 AI model comparison.