AI models are achieving breakthrough benchmark scores while facing unexpected performance challenges, according to new data from Stanford’s 2026 AI Index and recent industry developments. Microsoft launched MAI-Image-2-Efficient with 41% cost reduction and 22% speed improvements, while researchers introduced LABBench2 with nearly 1,900 scientific tasks that prove significantly more challenging than previous tests.

However, the picture isn’t entirely rosy. Users are increasingly reporting performance degradation in Anthropic’s Claude models, with complaints spreading across GitHub, Reddit, and social media about reduced reasoning capabilities and increased hallucinations.

Record-Breaking Performance Meets Real-World Challenges

The latest benchmark results reveal a technology sector moving at unprecedented speed. According to MIT Technology Review, AI adoption is outpacing the adoption of both the personal computer and the internet, with companies generating revenue faster than in any previous technology boom.

Key performance milestones include:

- Microsoft’s new image model achieving 4x greater throughput efficiency per GPU

- The same model running 40% faster than Google’s Gemini models on latency benchmarks

- US and China achieving near-parity in AI model performance rankings

Yet this rapid progress comes with substantial infrastructure costs. AI data centers now consume 29.6 gigawatts of power—enough to run New York state at peak demand. OpenAI’s GPT-4o alone uses enough water annually to meet the drinking needs of 12 million people.

Scientific Benchmarks Reveal Persistent Gaps

The introduction of LABBench2 represents a significant evolution in measuring AI capabilities for scientific research. According to arXiv research, this new benchmark comprises nearly 1,900 tasks designed to measure real-world scientific capabilities rather than just knowledge retention.

LABBench2 findings show:

- Model accuracy dropped 26-46% across subtasks compared to the original LAB-Bench

- Current frontier models still have substantial room for improvement in scientific tasks

- The benchmark’s shift from rote knowledge to meaningful work reveals capability gaps

This benchmark evolution reflects the industry’s recognition that measuring AI progress requires more sophisticated testing beyond traditional metrics. The focus has shifted from “can an AI system perform a task” to “how well does it perform complex, multi-step scientific work.”
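A reported accuracy drop such as "26-46%" can be read either as percentage points or as a relative change, and the two readings differ noticeably. The minimal Python sketch below shows both calculations. The function names and the accuracy values are hypothetical, chosen only for illustration, and are not taken from the LABBench2 paper.

```python
def absolute_drop(old_acc: float, new_acc: float) -> float:
    """Drop in percentage points; accuracies are fractions in [0, 1]."""
    return (old_acc - new_acc) * 100


def relative_drop(old_acc: float, new_acc: float) -> float:
    """Drop expressed as a percentage of the old accuracy."""
    if old_acc <= 0:
        raise ValueError("old_acc must be positive")
    return (old_acc - new_acc) / old_acc * 100


if __name__ == "__main__":
    # Hypothetical accuracies for one subtask (assumption, not real data).
    old_acc, new_acc = 0.80, 0.50
    print(f"{absolute_drop(old_acc, new_acc):.1f} percentage points")
    print(f"{relative_drop(old_acc, new_acc):.1f}% relative")
```

The same pair of scores yields two different headline numbers, so it is worth checking which convention a benchmark report uses before comparing results.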

User Experience Concerns Challenge Benchmark Success

While benchmark scores climb higher, real-world user experiences tell a more complex story. VentureBeat reports growing complaints about Claude’s performance degradation, with users describing what they term “AI shrinkflation”—paying the same price for seemingly weaker performance.

User complaints include:

- Reduced sustained reasoning capabilities

- Increased tendency to abandon tasks midway

- More frequent hallucinations and contradictions

- Less reliable coding assistance

Anthropic employees have denied intentionally degrading models for capacity management, but the company has acknowledged changes to usage limits and reasoning defaults. This disconnect between benchmark performance and user perception highlights the challenge of translating test scores into consistent real-world experiences.

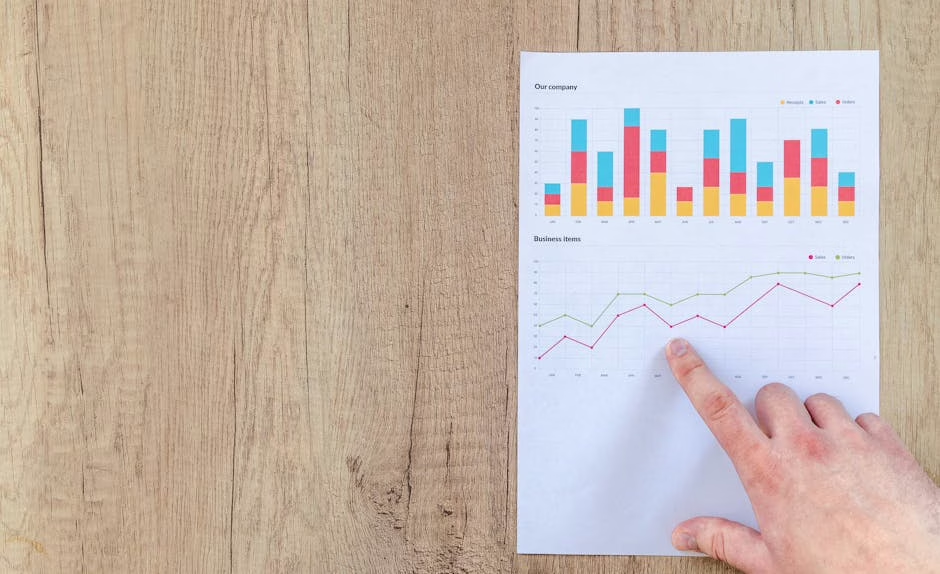

Microsoft’s Efficiency Focus Sets New Standards

Microsoft’s launch of MAI-Image-2-Efficient demonstrates how companies are optimizing for practical deployment rather than just peak performance. According to VentureBeat, the new model delivers flagship-quality results at significantly reduced costs and improved speed.

Efficiency improvements include:

- 41% price reduction compared to MAI-Image-2

- 22% faster processing speed

- 4x greater throughput efficiency per GPU on NVIDIA H100 hardware

- Immediate availability with no waitlist

This two-model strategy—offering both flagship and efficient variants—reflects the industry’s recognition that different use cases require different performance-cost trade-offs. For many everyday applications, the efficient model’s performance proves sufficient while delivering substantial cost savings.
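To make the cost and speed figures concrete, here is a rough Python sketch of how the reported percentages would apply to a workload. The baseline price and processing time are hypothetical placeholders, not Microsoft's published pricing, and the sketch assumes "22% faster" means 22% higher processing speed (time divided by 1.22) rather than 22% less time.

```python
def estimate_efficient_variant(
    base_price: float,
    base_seconds: float,
    price_cut: float = 0.41,   # 41% price reduction, as reported
    speed_gain: float = 0.22,  # 22% faster, read here as 1.22x speed (assumption)
) -> dict:
    """Estimate price and processing time for the efficient variant."""
    return {
        "price": base_price * (1 - price_cut),
        "seconds": base_seconds / (1 + speed_gain),
    }


if __name__ == "__main__":
    # Hypothetical baseline: a batch costing 100.0 units and taking 12.2 seconds.
    estimate = estimate_efficient_variant(base_price=100.0, base_seconds=12.2)
    print(f"price: {estimate['price']:.2f}, seconds: {estimate['seconds']:.2f}")
```

Under these assumptions, the price saving has a much larger effect than the speed gain, which fits the article's point that the efficient variant is aimed at cost-sensitive everyday use.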

Humanization Benchmarks Address Detection Concerns

A fascinating development in benchmark evolution comes from research into agent humanization. The “Turing Test on Screen” benchmark, detailed in arXiv research, addresses a critical but often overlooked aspect of AI performance: how human-like AI agents appear when interacting with digital interfaces.

Key insights include:

- Vanilla language model agents are easily detectable due to unnatural interaction patterns

- Agent Humanization Benchmark (AHB) quantifies the trade-off between imitability and utility

- Successful humanization can be achieved without sacrificing task performance

This research highlights how AI systems must evolve beyond pure capability metrics to consider behavioral authenticity, especially as digital platforms implement anti-bot measures.

What This Means

The current state of AI benchmarks reveals a technology sector experiencing rapid advancement alongside growing complexity. While models achieve impressive scores on standardized tests, the gap between benchmark performance and consistent user experience remains significant.

For everyday users, these developments suggest both promise and caution. Microsoft’s efficiency improvements point toward more accessible AI tools, while new scientific benchmarks indicate continued progress in specialized domains. However, reports of performance degradation in established models highlight the importance of sustained quality alongside innovation.

The emergence of humanization benchmarks signals AI’s evolution toward more sophisticated deployment scenarios. As AI agents become more prevalent in digital environments, their ability to operate seamlessly without detection becomes increasingly important for practical applications.

FAQ

Q: Why are AI benchmark scores improving while users report worse performance?

A: Benchmark tests measure specific capabilities under controlled conditions, while user experiences involve complex, real-world scenarios. Perceived degradation may stem from model updates or changes to usage limits and reasoning defaults rather than from reductions in core capability.

Q: How do efficiency improvements like Microsoft’s MAI-Image-2-Efficient affect regular users?

A: Efficiency improvements translate to faster response times and lower costs for AI-powered applications. This makes AI tools more accessible for everyday use while maintaining quality that meets most practical needs.

Q: What makes LABBench2 more challenging than previous scientific AI benchmarks?

A: LABBench2 focuses on real-world scientific task performance rather than knowledge recall. It requires multi-step reasoning, sustained attention, and practical problem-solving skills that better reflect actual research work.
