- LLMs operate on tokens — subword units produced by chopping text according to a fixed vocabulary. They do not see raw characters or complete words directly.

- Modern tokenizers use byte-pair encoding (BPE), WordPiece, or SentencePiece algorithms to build vocabularies of 30,000 to 200,000 tokens.

- Common English words are typically single tokens; rare words, technical terms, and non-English text are split across multiple tokens.

- Tokenization choices affect API costs, context-window usage, model performance on languages besides English, and even simple tasks like arithmetic and spelling.

- Tools like OpenAI’s tokenizer playground let you see exactly how your text will be split.

Why tokens instead of characters or words?

Two extremes. Character-level models have tiny vocabularies (256 for byte-level) and very long sequences for any real text. Word-level models have huge vocabularies — you need a token for every word, every plural, every tense, every typo — and still fail on unseen words.

Subword tokens split the difference. Common words stay whole, rare words get broken into smaller pieces. “Transformer” might be one token; “antidisestablishmentarianism” gets split across four or five. This gives a finite vocabulary that can represent any text, at reasonable sequence lengths.
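The trade-off can be made concrete with a toy comparison. This is a minimal sketch, not how any production tokenizer works: `TOY_VOCAB` is invented for illustration, and greedy longest-match stands in for a learned subword algorithm.

```python
# Toy comparison of three tokenization granularities.
# TOY_VOCAB is invented for illustration; real vocabularies are learned from data.
TOY_VOCAB = {"the", "un", "believ", "able", "token", "ization", " "}

def byte_level(text):
    """One token per UTF-8 byte: tiny vocabulary (256 values), long sequences."""
    return list(text.encode("utf-8"))

def word_level(text, known_words):
    """One token per whitespace-separated word; unseen words collapse to <unk>."""
    return [w if w in known_words else "<unk>" for w in text.split()]

def subword_level(text, vocab):
    """Greedy longest-match segmentation with a single-character fallback."""
    pieces, i = [], 0
    while i < len(text):
        for j in range(len(text), i, -1):
            if text[i:j] in vocab or j == i + 1:
                pieces.append(text[i:j])
                i = j
                break
    return pieces

text = "the unbelievable tokenization"
byte_tokens = byte_level(text)                    # 29 tokens
word_tokens = word_level(text, {"the"})           # ['the', '<unk>', '<unk>']
subword_tokens = subword_level(text, TOY_VOCAB)   # 8 pieces, nothing lost
```

The byte-level sequence is long, the word-level one loses two of the three words, and the subword one covers everything in eight pieces.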

How byte-pair encoding works

Byte-pair encoding (BPE) is the dominant tokenization algorithm. The training procedure:

- Start with a vocabulary of individual bytes or characters.

- Count every adjacent pair of symbols in the training corpus and find the most frequent one.

- Merge that pair into a single new token. Add it to the vocabulary.

- Repeat until you reach the target vocabulary size.

The result is a vocabulary where common character sequences (“th”, “ing”, “tion”, “ the”, “ a”) become single tokens, while rare sequences remain broken down. GPT-4’s tokenizer (cl100k_base) has about 100,000 tokens; GPT-4o’s (o200k_base) has about 200,000 and handles non-English text more efficiently. Many modern tokenizers, including these two, operate on bytes rather than characters, which lets them handle any Unicode text without special cases.
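The training procedure described above can be sketched in a few lines. This is a minimal character-level version on a four-word toy corpus; the function names are illustrative, and production tokenizers add byte-level handling, pre-tokenization, and much faster data structures.

```python
from collections import Counter

def merge_pair(symbols, pair):
    """Replace every adjacent occurrence of `pair` with one merged symbol."""
    out, i = [], 0
    while i < len(symbols):
        if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
            out.append(symbols[i] + symbols[i + 1])
            i += 2
        else:
            out.append(symbols[i])
            i += 1
    return tuple(out)

def train_bpe(corpus, num_merges):
    """Learn up to `num_merges` merge rules from a list of words."""
    # Each distinct word starts as a tuple of single characters.
    words = Counter(tuple(word) for word in corpus)
    merges = []
    for _ in range(num_merges):
        # Count every adjacent pair, weighted by how often the word occurs.
        pairs = Counter()
        for symbols, freq in words.items():
            for pair in zip(symbols, symbols[1:]):
                pairs[pair] += freq
        if not pairs:
            break
        best = max(pairs, key=pairs.get)  # ties go to the first pair seen
        merges.append(best)
        merged_words = Counter()
        for symbols, freq in words.items():
            merged_words[merge_pair(symbols, best)] += freq
        words = merged_words
    return merges

def encode(word, merges):
    """Tokenize a new word by replaying the learned merges in order."""
    symbols = tuple(word)
    for pair in merges:
        symbols = merge_pair(symbols, pair)
    return list(symbols)

corpus = ["low"] * 5 + ["lower"] * 2 + ["newest"] * 6 + ["widest"] * 3
merges = train_bpe(corpus, num_merges=4)
# merges == [('e', 's'), ('es', 't'), ('l', 'o'), ('lo', 'w')]
tokens = encode("lowest", merges)
# tokens == ['low', 'est']
```

Note that “lowest” never appears in the corpus, yet it encodes cleanly as two learned pieces. That is the property that lets a fixed vocabulary cover unseen words.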

WordPiece and SentencePiece

WordPiece, used by BERT, differs from BPE mainly in the scoring function used to pick merges. SentencePiece, used by T5 and LLaMA, is strictly a toolkit rather than a separate algorithm: it implements both BPE and a unigram language model, treats whitespace as just another character, and trains directly on raw text without a separate word-splitting step. All three approaches produce qualitatively similar vocabularies; differences matter mostly at the margins.
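To see how a different scoring function changes the outcome, the sketch below compares the pair each method would merge first. It assumes the WordPiece score as it is commonly described in public tutorials, pair frequency divided by the product of the two symbols' frequencies; the original training code was not released, so treat the formula as an assumption. The toy word counts are invented, and the `##` continuation prefix that BERT uses is left out for brevity.

```python
from collections import Counter

def count_pairs_and_symbols(words):
    """Frequency-weighted counts of adjacent pairs and of single symbols."""
    pair_counts, symbol_counts = Counter(), Counter()
    for symbols, freq in words.items():
        for symbol in symbols:
            symbol_counts[symbol] += freq
        for pair in zip(symbols, symbols[1:]):
            pair_counts[pair] += freq
    return pair_counts, symbol_counts

def bpe_choice(words):
    """BPE merges the most frequent adjacent pair."""
    pair_counts, _ = count_pairs_and_symbols(words)
    return max(pair_counts, key=pair_counts.get)

def wordpiece_choice(words):
    """Assumed WordPiece rule: maximise freq(pair) / (freq(a) * freq(b))."""
    pair_counts, symbol_counts = count_pairs_and_symbols(words)

    def score(pair):
        a, b = pair
        return pair_counts[pair] / (symbol_counts[a] * symbol_counts[b])

    return max(pair_counts, key=score)

# Invented toy counts: word (as a tuple of characters) -> frequency.
words = {("t", "h", "e"): 10, ("t", "h", "a", "t"): 6, ("q", "u", "i", "z"): 2}

bpe_first = bpe_choice(words)              # ('t', 'h'): simply the most common pair
wordpiece_first = wordpiece_choice(words)  # ('q', 'u'): parts rarely seen apart
```

Under this scoring, a rare pair whose parts almost never appear separately can outrank a common pair made of common letters.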

Seeing tokens in action

Consider the sentence “The transformer architecture changed AI.”

In GPT-4’s tokenizer, this is 6 tokens: “The”, “ transformer”, “ architecture”, “ changed”, “ AI”, “.”. A leading space is part of the token that follows it, which is why “ transformer” in mid-sentence is a different token from “transformer” at the start of a sentence.
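The leading-space behaviour comes from a pre-tokenization step that splits text into chunks before any merges are applied. The pattern below is a simplified, ASCII-only stand-in written for this example; it is not the actual pattern used by GPT-4’s tokenizer, which handles Unicode, contractions, and more.

```python
import re

# Simplified stand-in for a pre-tokenization pattern (an assumption for
# illustration): an optional leading space is attached to the word, number,
# or punctuation run that follows it.
PRETOKENIZE = re.compile(r" ?[A-Za-z]+| ?[0-9]+| ?[^\sA-Za-z0-9]+|\s+")

def pretokenize(text):
    """Split text into chunks; merges are later applied inside each chunk."""
    return PRETOKENIZE.findall(text)

chunks = pretokenize("The transformer architecture changed AI.")
# ['The', ' transformer', ' architecture', ' changed', ' AI', '.']

sentence_start = pretokenize("transformer")      # ['transformer']
mid_sentence = pretokenize("a transformer")[1]   # ' transformer'
```

Because the space travels with the word, the two forms of “transformer” are different strings and end up with different token IDs.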

Now consider “Photosynthesis produces glucose.” — 7 tokens in GPT-4o, but 9 in the older GPT-2 tokenizer, because “photosynthesis” was not common enough to merit a single token in the older vocabulary.

For languages other than English, the disparity grows sharply. The same sentence in Mandarin, Arabic, or Hindi typically requires 2-4 times as many tokens as its English equivalent. Because pricing and context limits are both counted in tokens, non-English users pay more per concept. For more on context limits, see our context windows explainer.
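Part of the gap is visible before any vocabulary is learned. A byte-level tokenizer starts from UTF-8 bytes, and many non-Latin scripts need two or three bytes per character. The sketch below only measures that starting point; the final token counts also depend on how much of each language the merges were trained on, which this does not model.

```python
def bytes_per_char(text):
    """Average number of UTF-8 bytes needed per character of `text`."""
    return len(text.encode("utf-8")) / len(text)

# A short greeting in each language; the samples are illustrative only.
samples = {
    "English": "hello",
    "Arabic": "مرحبا",
    "Hindi": "नमस्ते",
    "Mandarin": "你好",
}

ratios = {language: bytes_per_char(text) for language, text in samples.items()}
# English: 1.0, Arabic: 2.0, Hindi: 3.0, Mandarin: 3.0
```

A script that starts at three bytes per character needs many more learned merges just to reach the compactness that English text has from the outset.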

Why tokenization affects model behaviour

Cost and throughput

API pricing is per-token. A French-language deployment may cost 30-50% more per user than an English one. A system handling a lot of code (heavy on rare identifiers) can need more tokens than natural prose of the same length.

Arithmetic

Numbers are tokenized awkwardly. “12345” might be a single token or three, depending on the tokenizer and on how often each digit sequence appeared in its training data. Splitting numbers into irregular pieces makes arithmetic harder for the model to learn. This is one reason LLMs famously struggle with long multiplication: the token representation obscures the per-digit structure.
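One way to picture the problem is to assume a tokenizer that groups digits left to right in chunks of up to three. That scheme is an assumption for illustration; real tokenizers differ in how they split numbers.

```python
def chunk_digits(number_text, size=3):
    """Split a digit string left to right into chunks of up to `size` digits."""
    return [number_text[i:i + size] for i in range(0, len(number_text), size)]

five_digits = chunk_digits("12345")     # ['123', '45']
seven_digits = chunk_digits("1234567")  # ['123', '456', '7']

# Adding 1 changes how the whole number is carved up.
before = chunk_digits("999")            # ['999']
after = chunk_digits("1000")            # ['100', '0']
```

Place value runs right to left (1,234,567), while the chunks run left to right (123|456|7), so the same chunk can stand for different magnitudes depending on the number's length.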

Spelling and character-level tasks

Because the model never sees individual characters (just tokens), tasks like “reverse this string”, “count the vowels”, or “rhyme with X” are harder than they seem. The model has to reason about character content it cannot directly see.
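A small sketch shows what the model actually receives. The vocabulary and ID numbers here are invented for illustration and do not correspond to any real tokenizer.

```python
# Hypothetical vocabulary: piece -> token ID (values invented for this example).
TOY_VOCAB = {"str": 496, "aw": 675, "berry": 15717}
ID_TO_PIECE = {token_id: piece for piece, token_id in TOY_VOCAB.items()}

def encode(text, vocab):
    """Greedy longest-match encoding into token IDs."""
    ids, i = [], 0
    while i < len(text):
        for j in range(len(text), i, -1):
            if text[i:j] in vocab:
                ids.append(vocab[text[i:j]])
                i = j
                break
        else:
            raise ValueError(f"no vocabulary entry covers {text[i]!r}")
    return ids

def count_letter(token_ids, letter):
    """Counting letters requires mapping each ID back to its characters."""
    return sum(ID_TO_PIECE[token_id].count(letter) for token_id in token_ids)

token_ids = encode("strawberry", TOY_VOCAB)  # [496, 675, 15717]
r_count = count_letter(token_ids, "r")       # 3
```

The program can call `count_letter` because it has the lookup table. The model only gets the three integers and has to have learned, from training data, which letters each one contains.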

Language fairness

Tokenizers are trained predominantly on English web text, so English tokens compactly represent English meanings. A speaker of Swahili or Tamil gets worse compression, higher cost, and slightly degraded model quality due to less efficient representation. Recent models (GPT-4o, Claude Sonnet, Gemini) have invested in more multilingual tokenizers, narrowing but not closing the gap.

Special tokens

Beyond the vocabulary words, tokenizers include special tokens for structural signals — start-of-sequence, end-of-sequence, role turns (system/user/assistant in chat formats), tool-call boundaries, and image or audio placeholders for multimodal models. The chat template that wraps your messages before sending them to the model is made of these special tokens.
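As a rough sketch of what a chat template does, the function below wraps a list of messages in structural markers. The marker strings are hypothetical placeholders chosen for this example; each model family defines its own special tokens and layout, so check the model's documentation for the real ones.

```python
# Hypothetical special tokens (placeholders, not any real model's markers).
BOS = "<|begin|>"
TURN_START = "<|turn_start|>"
TURN_END = "<|turn_end|>"

def render_chat(messages, add_generation_prompt=True):
    """Flatten role-tagged messages into the single string the model sees."""
    parts = [BOS]
    for message in messages:
        parts.append(
            f"{TURN_START}{message['role']}\n{message['content']}{TURN_END}\n"
        )
    if add_generation_prompt:
        # An open assistant turn tells the model it is its turn to write.
        parts.append(f"{TURN_START}assistant\n")
    return "".join(parts)

messages = [
    {"role": "system", "content": "Be brief."},
    {"role": "user", "content": "Hi"},
]
rendered = render_chat(messages)
```

In a real tokenizer each marker maps to a single reserved token ID, so the structure costs only a few tokens per turn.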

Practical implications for engineers

Count tokens, not words

When estimating costs or context usage, use the actual tokenizer for your model. A rough English rule of thumb is 0.75 words per token (about 1.3 tokens per word), or 1 token per 4 characters, but this varies by language and content type. Libraries such as OpenAI’s `tiktoken` and Hugging Face `tokenizers` give exact counts for the models they support.
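For a quick first estimate, the characters-per-token heuristic is easy to code. This is a sketch of the rule of thumb only, with an illustrative function name; it will be off for code, numbers, and non-English text.

```python
import math

def estimate_tokens(text, chars_per_token=4.0):
    """Rough English-only estimate: about one token per four characters."""
    return math.ceil(len(text) / chars_per_token)

prompt = "Summarize the following report in three bullet points."
estimate = estimate_tokens(prompt)  # 54 characters -> 14
```

For exact counts with an OpenAI model, `len(tiktoken.get_encoding("cl100k_base").encode(prompt))` returns the real number, provided the `tiktoken` package is installed and the encoding name matches your model.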

Prompt design within token budgets

Every character in your system prompt or retrieved context consumes tokens. Pruning verbose instructions, compressing retrieved documents, and choosing compact structured formats can materially reduce per-query cost.
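One simple way to enforce a budget is to keep retrieved documents, in ranked order, until the next one would not fit. The sketch below assumes the documents are already sorted by relevance and takes the token counter as a parameter; the `rough_count` stand-in uses the four-characters-per-token heuristic and should be replaced with your model's real tokenizer.

```python
def pack_context(documents, budget, count_tokens):
    """Keep documents in order until adding the next would exceed `budget`."""
    kept, used = [], 0
    for document in documents:
        cost = count_tokens(document)
        if used + cost > budget:
            break
        kept.append(document)
        used += cost
    return kept, used

def rough_count(text):
    """Stand-in counter (assumption): about one token per four characters."""
    return -(-len(text) // 4)  # ceiling division

documents = ["a" * 40, "b" * 80, "c" * 40]  # roughly 10, 20, and 10 tokens
kept, used = pack_context(documents, budget=32, count_tokens=rough_count)
# Keeps the first two documents (30 tokens); the third would exceed 32.
```

Stopping at the first document that does not fit preserves ranking order. Skipping it and trying smaller ones further down is an alternative if filling the budget matters more than order.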

Fine-tuning and tokenizer compatibility

A fine-tuned model inherits its base model’s tokenizer. You cannot swap tokenizers between a pre-trained model and its fine-tune without retraining. This matters if you are moving to a new base model — re-evaluate your prompts and costs under the new tokenizer.

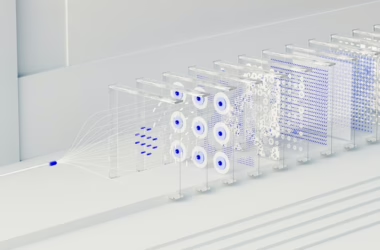

Tokenization and transformers

Once text is tokenized, each token is mapped to an embedding vector — a dense numerical representation. These embeddings are the actual input to the transformer’s attention layers. Everything the model does operates on these vectors, and the original text is only reconstructed at the very end by mapping output probabilities back to tokens. See our transformers explainer for how the architecture consumes these embeddings.
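The lookup at each end of the model is simple, as this sketch shows. It uses a small random table in place of learned embeddings and skips the transformer entirely; the names and sizes are illustrative.

```python
import random

def make_embedding_table(vocab_size, dim, seed=0):
    """Random stand-in for a learned table: one vector per token ID."""
    rng = random.Random(seed)
    return [[rng.uniform(-1.0, 1.0) for _ in range(dim)] for _ in range(vocab_size)]

def embed(token_ids, table):
    """Input side: look up the vector for each token ID."""
    return [table[token_id] for token_id in token_ids]

def pick_next_token(probabilities):
    """Output side (greedy decoding): the ID with the highest probability."""
    return max(range(len(probabilities)), key=probabilities.__getitem__)

table = make_embedding_table(vocab_size=8, dim=4)
vectors = embed([3, 5, 3], table)        # three vectors of length 4
next_id = pick_next_token([0.1, 0.05, 0.6, 0.25])  # 2
```

The same token ID always maps to the same vector, so `vectors[0]` and `vectors[2]` are identical here. Telling the two positions apart is the job of positional information and attention.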

Frequently asked questions

How many tokens is this article?

An English article of about 1,500 words typically tokenizes to 1,900 to 2,100 tokens in modern tokenizers like GPT-4o’s or Claude’s. Our large language models coverage explains how this affects pricing and context window usage. You can count exactly using OpenAI’s tiktoken library or by pasting into the OpenAI tokenizer playground.

Why does the same text cost different amounts across providers?

Each provider trained their own tokenizer, and vocabulary choices differ. The same English paragraph might tokenize to 500 tokens for OpenAI’s tokenizer, 520 for Anthropic’s, and 480 for Google’s. Pricing differs per provider too, so comparing total cost requires comparing (tokens × price) across providers, not just token counts or per-token prices alone.
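The comparison is a single multiplication per provider. In this sketch the token counts are the illustrative figures from the paragraph above, while the provider labels and prices are hypothetical and do not reflect any real price list.

```python
def cost_usd(tokens, price_per_million_tokens):
    """Cost of a request given a token count and a price per million tokens."""
    return tokens * price_per_million_tokens / 1_000_000

# Token counts are illustrative; labels and prices are hypothetical.
providers = {
    "A": {"tokens": 500, "price": 3.00},
    "B": {"tokens": 520, "price": 2.50},
    "C": {"tokens": 480, "price": 3.50},
}

costs = {name: cost_usd(p["tokens"], p["price"]) for name, p in providers.items()}
cheapest = min(costs, key=costs.get)
fewest_tokens = min(providers, key=lambda name: providers[name]["tokens"])
# cheapest is "B" even though "C" uses the fewest tokens.
```

With these made-up numbers, the provider with the highest token count is still the cheapest, which is why neither figure is meaningful on its own.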

Can I use a custom tokenizer for my domain?

Not easily with proprietary APIs — you inherit the provider’s tokenizer. With open-weights models (Llama, Mistral, Qwen), you can in principle retrain the tokenizer, but that requires also retraining the model, which is expensive. More practical is tokenizer-aware prompt engineering: avoid unusual formatting, use standard spellings, and prefer tokenizer-efficient structures when latency and cost matter.