The European Union’s Artificial Intelligence Act officially entered its enforcement phase this year, establishing the world’s first comprehensive AI regulatory framework as governments worldwide grapple with balancing innovation and safety. Meanwhile, the United States faces mounting pressure to address surveillance overreach and prediction market manipulation, while cybersecurity experts warn that autonomous AI agents are creating new attack vectors faster than governance can adapt.

EU AI Act Sets Global Precedent for AI Governance

The EU AI Act represents a watershed moment in technology regulation, introducing risk-based classifications that will fundamentally reshape how AI systems are developed and deployed globally. The legislation sorts AI applications into four risk levels: minimal, limited, high, and unacceptable. Compliance requirements tighten at each level, and uses deemed an unacceptable risk are prohibited outright.

High-risk AI systems now face mandatory conformity assessments, quality management systems, and human oversight requirements. These include AI used in critical infrastructure, employment decisions, law enforcement, and educational settings. Companies must demonstrate transparency, accuracy, and robustness before market entry.
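The tiering described above can be pictured as a simple lookup. The sketch below is illustrative only: it covers just the high-risk domains and obligations named in this article, the names `RiskTier`, `tier_for`, and `obligations_for` are hypothetical, and it is not a legal classification tool.

```python
from enum import Enum
from typing import Optional


class RiskTier(Enum):
    """The four risk levels named in the Act, lowest to highest."""
    MINIMAL = 1
    LIMITED = 2
    HIGH = 3
    UNACCEPTABLE = 4


# Assumption: only the high-risk domains listed in the article are included.
HIGH_RISK_DOMAINS = {
    "critical infrastructure",
    "employment",
    "law enforcement",
    "education",
}

# Obligations the article lists for high-risk systems.
HIGH_RISK_OBLIGATIONS = [
    "conformity assessment",
    "quality management system",
    "human oversight",
]


def tier_for(domain: str) -> Optional[RiskTier]:
    """Return HIGH for a listed domain, or None when this sketch has no answer."""
    if domain.strip().lower() in HIGH_RISK_DOMAINS:
        return RiskTier.HIGH
    return None


def obligations_for(tier: Optional[RiskTier]) -> list[str]:
    """Return the listed obligations for HIGH, and an empty list otherwise."""
    return list(HIGH_RISK_OBLIGATIONS) if tier is RiskTier.HIGH else []


print(obligations_for(tier_for("Employment")))
```

Returning `None` for unlisted domains is deliberate: the sketch declines to guess a tier rather than defaulting to "minimal".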

The Act’s extraterritorial reach means that any AI system affecting EU citizens falls under its jurisdiction, creating a “Brussels Effect” where global tech companies must adopt EU standards worldwide. This regulatory approach prioritizes algorithmic accountability and bias prevention, requiring companies to conduct impact assessments and implement safeguards against discriminatory outcomes.

Non-compliance carries severe penalties: for the most serious violations, fines can reach €35 million or 7% of global annual turnover, whichever is higher, making the EU AI Act one of the most financially consequential technology regulations ever implemented.
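To give a sense of scale, the sketch below computes the fine ceiling for two hypothetical companies. It assumes the Act's top penalty tier of EUR 35 million or 7% of worldwide annual turnover, whichever is higher. The function name and the turnover figures are illustrative and not drawn from any real case.

```python
def max_fine_eur(annual_turnover_eur: int,
                 pct_cap: int = 7,
                 fixed_cap_eur: int = 35_000_000) -> float:
    """Ceiling on a fine: the higher of a fixed amount and a share of turnover."""
    turnover_share = annual_turnover_eur * pct_cap / 100
    return max(fixed_cap_eur, turnover_share)


# Hypothetical companies with EUR 100 million and EUR 50 billion in turnover.
print(max_fine_eur(100_000_000))
print(max_fine_eur(50_000_000_000))
```

For the smaller company the fixed EUR 35 million figure is the ceiling. For the larger one the 7% share, EUR 3.5 billion, is the ceiling.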

US Surveillance Laws Face Bipartisan Reform Push

As the EU advances AI regulation, the United States confronts its own governance challenges around surveillance technology and data privacy. Section 702 of the Foreign Intelligence Surveillance Act (FISA) faces renewed scrutiny as lawmakers debate whether to extend warrantless surveillance powers without reform.

The bipartisan Government Surveillance Reform Act, introduced by Senators Ron Wyden (D-OR) and Mike Lee (R-UT), seeks to curtail government surveillance programs that have collected “unfathomable amounts of information” on Americans communicating with overseas contacts. Constitutional privacy protections are at stake as intelligence agencies sweep up domestic communications without individualized warrants.

This legislative battle reflects broader concerns about government accountability in the digital age. Privacy advocates argue that existing surveillance authorities, originally designed for traditional intelligence gathering, are inadequate for regulating AI-powered surveillance systems that can analyze vast datasets in real time.

The outcome will significantly impact how AI surveillance technologies are deployed by federal agencies, setting precedents for facial recognition, predictive policing, and automated threat detection systems.

Prediction Markets Face Insider Trading Crackdowns

State governments are taking proactive steps to prevent corruption in emerging prediction markets, with New York, California, and Illinois banning government employees from using insider information for trading. Governor Kathy Hochul’s executive order explicitly prohibits state workers from leveraging “nonpublic information obtained in the course of their official duties” on platforms like Kalshi and Polymarket.

Market integrity concerns have intensified as prediction markets gain mainstream adoption for forecasting political events, policy outcomes, and economic indicators. The potential for government insiders to profit from privileged information raises fundamental questions about democratic fairness and public trust.

Congress is considering federal legislation to address these concerns, including bills that would bar elected officials from participating in prediction markets entirely. The White House has warned executive branch staff against trading on these platforms, recognizing the ethical implications of betting on events they may influence.

These regulatory responses highlight the challenge of governing emerging technologies that blur traditional boundaries between gambling, financial markets, and democratic participation.

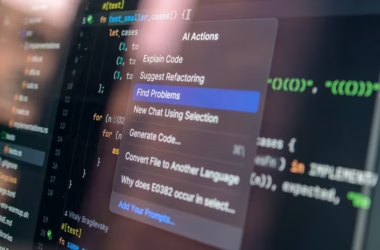

Autonomous AI Agents Create New Security Vulnerabilities

The rapid deployment of autonomous AI agents in cybersecurity operations is outpacing governance frameworks designed to prevent misuse. According to VentureBeat, adversaries have already compromised AI security tools at over 90 organizations in 2025, stealing credentials and cryptocurrency through malicious prompt injection.

The next generation of autonomous SOC (Security Operations Center) agents poses even greater risks because these agents have write access to critical infrastructure, including firewalls and identity management systems. Unlike previously compromised tools that could only read data, these agents can modify security policies and quarantine systems using their own privileged credentials.

Cisco’s AgenticOps for Security and Ivanti’s Continuous Compliance platform represent this new category of autonomous security tools. While these systems promise machine-speed threat response, they also create attack vectors where compromised agents can rewrite infrastructure without adversaries directly touching the network.

The OWASP Agentic Top 10 framework documents emerging risks in AI agent deployment, emphasizing the critical need for robust approval gates, policy enforcement, and data validation controls built into platforms from launch.
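An approval gate of the kind described above can be sketched in a few lines. This is a minimal illustration, not the design of any named product or of the OWASP framework itself: `AgentAction`, the verb lists, and `gate` are hypothetical names, and a real platform would define its own action schema and approval workflow.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class AgentAction:
    """Hypothetical description of something an agent wants to do."""
    verb: str    # e.g. "read_logs" or "update_firewall_rule"
    target: str  # e.g. "firewall" or "identity"


# Assumed policy: reads pass automatically, writes need explicit approval.
READ_ONLY_VERBS = {"read_logs", "list_rules"}
WRITE_VERBS = {"update_firewall_rule", "quarantine_host", "disable_account"}


def gate(action: AgentAction, approve: Callable[[AgentAction], bool]) -> str:
    """Return "allow" or "deny" for a requested action."""
    if action.verb in READ_ONLY_VERBS:
        return "allow"
    if action.verb in WRITE_VERBS:
        # Policy enforcement: a write proceeds only if the approver says yes.
        return "allow" if approve(action) else "deny"
    # Data validation: verbs outside both lists are rejected by default.
    return "deny"


def always_refuse(action: AgentAction) -> bool:
    """Stand-in for a human reviewer who rejects every write."""
    return False


print(gate(AgentAction("read_logs", "siem"), always_refuse))
print(gate(AgentAction("update_firewall_rule", "firewall"), always_refuse))
```

The default-deny branch matters most here: a prompt-injected agent that invents a new verb is refused before any approver is consulted.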

Legal Experts Warn of Constitutional Crisis Acceleration

Legal analysts are raising alarms about the pace of constitutional challenges emerging from rapid technological deployment. YouTube legal commentator Devin Stone, who reaches over 500,000 viewers per episode, describes the current environment as producing “multiple Watergates per week” because of the intersection of technology and governance failures.

The convergence of AI surveillance capabilities, autonomous decision-making systems, and inadequate regulatory frameworks creates unprecedented challenges for democratic accountability. Traditional legal processes struggle to keep pace with algorithmic decision-making that affects millions of citizens in real time.

Transparency requirements embedded in the EU AI Act represent one approach to maintaining human oversight over automated systems. However, critics argue that transparency alone is insufficient without corresponding enforcement mechanisms and technical standards for algorithmic auditing.

The risk of normalizing “unprecedented political behavior” extends beyond individual scandals to encompass systemic challenges in governing AI systems that operate at scales and speeds beyond human comprehension.

What This Means

The global regulatory landscape for AI is fragmenting into distinct approaches that will shape technological development for decades. The EU’s comprehensive framework prioritizes safety and rights protection, potentially slowing innovation but establishing robust safeguards. The US approach remains piecemeal, addressing specific sectors like surveillance and financial markets while lacking overarching AI governance.

This regulatory divergence creates compliance challenges for global technology companies while potentially advantaging regions with clearer frameworks. Organizations deploying AI systems must navigate multiple jurisdictions with conflicting requirements, driving up costs but potentially improving overall system safety and accountability.

The emergence of autonomous AI agents capable of modifying critical infrastructure represents an inflection point where reactive regulation may be insufficient. Proactive governance frameworks that anticipate technological capabilities rather than responding to incidents will become essential for maintaining democratic oversight of AI systems.

FAQ

Q: When does the EU AI Act fully take effect?

A: The EU AI Act entered into force in August 2024, with its provisions applying in stages. Bans on unacceptable-risk practices applied first, from February 2025, followed by obligations for general-purpose AI models from August 2025. Most requirements for high-risk AI systems apply from August 2026, with some extending into 2027.

Q: How do US surveillance laws affect AI development?

A: FISA Section 702 and related surveillance authorities provide legal frameworks for AI-powered intelligence gathering, but proposed reforms could limit warrantless data collection that trains government AI systems, potentially affecting their capabilities and accuracy.

Q: What makes autonomous AI agents more dangerous than previous AI tools?

A: Unlike earlier AI tools that only read data, autonomous agents have write access to critical systems like firewalls and identity management, meaning a compromised agent can modify infrastructure and security policies without human intervention or detection by traditional security tools.