Enterprise adoption of multimodal AI systems has reached 88% as organizations integrate vision-language models, video AI, and speech processing capabilities into production workflows. However, according to Stanford HAI’s ninth annual AI Index report, frontier models are still failing roughly one in three production attempts on structured benchmarks, creating significant operational challenges for IT decision-makers.

This performance inconsistency, dubbed the “jagged frontier” by researchers, represents the defining challenge for enterprise AI deployment in 2026. While models can achieve breakthrough performance on complex tasks like winning gold medals at mathematical competitions, they still struggle with basic functions like reliably telling time.

Vision-Language Models Lead Enterprise Integration

The latest generation of multimodal AI models demonstrates substantial improvements in enterprise-relevant capabilities. Leading models including Claude Opus 4.7, GPT-5.2, and Qwen3.5 achieved scores between 62.9% and 70.2% on τ-bench, which tests real-world agent performance involving user interaction and external API calls.

Key performance metrics for enterprise deployment:

- MMLU-Pro scores: Above 87% on multi-step reasoning across 12,000 human-reviewed questions

- GAIA benchmark: Model accuracy rose from 20% to 74.5% for general AI assistant tasks

- SWE-bench Verified: Agent performance increased from 60% to significantly higher levels in software engineering tasks

- HLE improvement: 30% advancement in one year on Humanity’s Last Exam across specialized domains

According to VentureBeat, Anthropic’s Claude Opus 4.7 currently leads the GDPVal-AA knowledge work evaluation with an Elo score of 1753, surpassing GPT-5.4 (1674) and Gemini 3.1 Pro (1314). However, the competitive landscape remains tight, with different models excelling in specific domains like agentic search and multilingual processing.
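To put the Elo gaps above in perspective, the sketch below converts rating differences into expected head-to-head scores. It assumes the leaderboard uses the conventional logistic Elo formula with a 400-point scale; the article does not confirm how GDPVal-AA computes its ratings, so treat the results as a rough reading rather than the evaluation's own numbers. The function name `elo_expected_score` is illustrative.

```python
def elo_expected_score(rating_a: float, rating_b: float, scale: float = 400.0) -> float:
    """Expected score of A against B under the standard logistic Elo model.

    The 400-point scale is the conventional default and an assumption here.
    """
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / scale))


# Elo scores as quoted in the article.
ratings = {"Claude Opus 4.7": 1753, "GPT-5.4": 1674, "Gemini 3.1 Pro": 1314}

leader = ratings["Claude Opus 4.7"]
expected = {
    name: elo_expected_score(leader, ratings[name])
    for name in ("GPT-5.4", "Gemini 3.1 Pro")
}

for name, score in expected.items():
    print(f"Claude Opus 4.7 vs {name}: expected score {score:.2f}")
```

Under these assumptions, a 79-point lead corresponds to an expected score of about 0.61, a clear but not overwhelming edge, while a 439-point lead corresponds to about 0.93.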

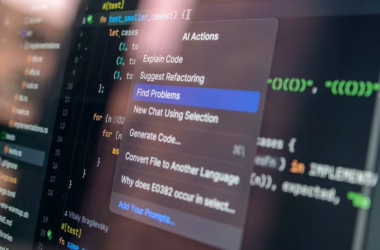

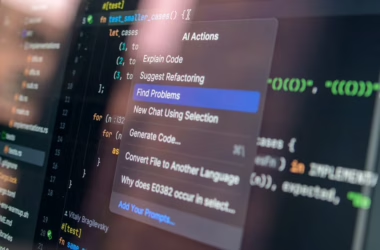

Video and Image AI Transform Creative Workflows

Adobe’s launch of the Firefly AI Assistant represents a significant advancement in multimodal creative AI, enabling complex, multi-step workflows across the entire Creative Cloud suite from a single conversational interface. The system can orchestrate tasks spanning Photoshop, Premiere Pro, and Illustrator through natural language commands.

“We want creators to tell us the destination and let the Firefly assistant — with its deep understanding of all the Adobe professional tools and generative tools — bring the tools to you right in the conversation,” Alexandru Costin, Vice President of AI & Innovation at Adobe, explained to VentureBeat.

Enterprise video and image AI capabilities:

- Cross-application workflow automation: Single interface controlling multiple creative applications

- Third-party model integration: Support for Kling 3.0 video models and other AI engines

- Cloud-native collaboration: Frame.io Drive virtual filesystem for distributed teams

- Production-ready quality: Maintained across automated multi-step processes

Meanwhile, Microsoft’s MAI-Image-2-Efficient addresses enterprise cost concerns with a 41% price reduction compared to flagship models while delivering 22% faster performance and 4x greater throughput efficiency per GPU on NVIDIA H100 hardware.

Enterprise Architecture and Integration Challenges

The rapid advancement of multimodal AI capabilities creates significant infrastructure and integration challenges for enterprise IT teams. According to the MIT Technology Review analysis of the Stanford AI Index, AI data centers worldwide now consume 29.6 gigawatts of power, roughly what the entire state of New York draws at peak demand.

Critical enterprise considerations:

- Power and infrastructure scaling: Data center power requirements growing exponentially

- Water consumption: The annual water use of OpenAI’s GPT-4o alone may exceed the drinking water needs of 12 million people

- Supply chain risks: TSMC fabricates almost every leading AI chip, creating single points of failure

- Geopolitical factors: US and China nearly tied in AI model performance, affecting strategic planning

The fragmented nature of multimodal AI capabilities requires careful architectural planning. Organizations must balance performance requirements with cost optimization while ensuring reliable integration across existing enterprise systems. The “jagged frontier” phenomenon means that even high-performing models may fail unexpectedly on seemingly simple tasks, requiring robust error handling and fallback mechanisms.
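The error handling and fallback pattern described above can be sketched as follows. This is a minimal illustration, not a production design: the model functions are hypothetical stand-ins for real provider clients, and the validator is a deliberately simple check chosen for the example.

```python
from typing import Callable, Optional, Sequence


def call_with_fallback(
    prompt: str,
    models: Sequence[Callable[[str], str]],
    validate: Callable[[str], bool],
) -> Optional[str]:
    """Try each model in order and return the first output that passes validation."""
    for model in models:
        try:
            output = model(prompt)
        except Exception:
            # Treat any provider error as a failed attempt and try the next model.
            continue
        if validate(output):
            return output
    # No model produced an acceptable answer: the caller should escalate to a human.
    return None


# Hypothetical stand-ins for real model clients (assumptions for this example).
def primary_model(prompt: str) -> str:
    raise TimeoutError("simulated provider timeout")


def secondary_model(prompt: str) -> str:
    return "14:35"


def looks_like_time(text: str) -> bool:
    """Accept only strings shaped like HH:MM."""
    parts = text.split(":")
    return len(parts) == 2 and all(p.isdigit() for p in parts)


result = call_with_fallback(
    "What time does the clock in this image show?",
    [primary_model, secondary_model],
    looks_like_time,
)
print(result)
```

Returning `None` instead of raising keeps the "no acceptable answer" case explicit, so the calling workflow can route it to human review.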

Cost Optimization and Performance Scaling

Enterprise deployment of multimodal AI systems requires careful attention to cost-performance optimization. Microsoft’s two-model strategy with MAI-Image-2-Efficient demonstrates how hyperscale providers are addressing enterprise budget constraints while maintaining production-quality outputs.

Cost optimization strategies:

- Tiered model selection: Choosing appropriate model complexity for specific use cases

- GPU efficiency optimization: 4x throughput improvements on standardized hardware

- Latency improvements: 40% better p50 latency compared to competing models

- Token pricing models: $5 per million text input tokens, $19.50 per million image outputs
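As a rough illustration of how the quoted prices translate into a bill, the sketch below estimates spend from usage volumes. The rates come from the figures above; the workload numbers are hypothetical, and the code assumes the image price applies per million output units exactly as quoted, since the article does not specify the billing unit further.

```python
# Rates quoted in the article, in US dollars per million units.
TEXT_INPUT_USD_PER_MILLION = 5.00
IMAGE_OUTPUT_USD_PER_MILLION = 19.50


def estimate_cost(text_input_tokens: int, image_output_units: int) -> float:
    """Estimate spend in USD for a given usage volume at the quoted rates."""
    text_cost = text_input_tokens / 1_000_000 * TEXT_INPUT_USD_PER_MILLION
    image_cost = image_output_units / 1_000_000 * IMAGE_OUTPUT_USD_PER_MILLION
    return round(text_cost + image_cost, 2)


# Hypothetical monthly workload: 200M text input tokens, 10M image output units.
monthly = estimate_cost(text_input_tokens=200_000_000, image_output_units=10_000_000)
print(f"Estimated monthly spend: ${monthly:,.2f}")
```

An estimate like this covers only usage fees. Infrastructure, training, and operational overhead still have to be added to reach total cost of ownership.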

Competition among the major providers (Microsoft, Google, OpenAI, Anthropic) is driving rapid price reductions and performance improvements. However, enterprises must evaluate total cost of ownership, including infrastructure, training, and operational overhead, rather than focusing solely on per-token pricing.

For video and image processing workflows, the integration of multiple AI engines within platforms like Adobe’s Firefly allows organizations to optimize for specific tasks while maintaining unified management interfaces. This approach reduces the complexity of managing multiple vendor relationships while providing flexibility in model selection.

Security, Compliance, and Risk Management

The enterprise adoption of multimodal AI introduces complex security and compliance considerations that IT leaders must address. Anthropic’s decision to restrict its most powerful model, Mythos, to select enterprise partners for cybersecurity testing highlights the potential security implications of advanced AI capabilities.

Key risk management areas:

- Model reliability: One-third failure rate on structured benchmarks requires robust error handling

- Data governance: Multimodal processing involves sensitive image, video, and audio data

- Audit capabilities: Increasing difficulty in auditing frontier model decisions and outputs

- Vendor dependency: Concentration risk with limited suppliers for advanced capabilities

The “jagged frontier” phenomenon creates particular challenges for compliance-sensitive industries where unpredictable failures could have regulatory implications. Organizations must implement comprehensive testing frameworks and maintain human oversight for critical business processes.
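A back-of-the-envelope calculation shows what a one-in-three failure rate implies for retry budgets. The sketch assumes each attempt fails independently with the same probability, which is optimistic: jagged-frontier failures tend to be systematic, so repeating the same request on the same model may fail the same way. The function name is illustrative.

```python
def attempts_needed(failure_rate: float, target_success: float) -> int:
    """Smallest number of attempts n such that 1 - failure_rate**n >= target_success.

    Assumes attempts are independent, which real model failures often are not.
    """
    if not 0 < failure_rate < 1:
        raise ValueError("failure_rate must be strictly between 0 and 1")
    if not 0 < target_success < 1:
        raise ValueError("target_success must be strictly between 0 and 1")
    attempts = 1
    all_fail_probability = failure_rate
    while 1 - all_fail_probability < target_success:
        all_fail_probability *= failure_rate
        attempts += 1
    return attempts


needed = attempts_needed(failure_rate=1 / 3, target_success=0.99)
print(f"Attempts needed for 99% success: {needed}")
```

Even under the independence assumption, reaching 99% success takes five attempts per task, which is one reason human oversight and validation remain necessary for critical processes.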

Additionally, the rapid pace of model updates and capability improvements requires agile governance frameworks that can adapt to new features while maintaining security standards. The tight competition between providers means that model capabilities and limitations can change significantly within short timeframes.

What This Means

The current state of multimodal AI presents both significant opportunities and operational challenges for enterprise organizations. While adoption rates have reached 88% and capabilities continue advancing rapidly, the persistent reliability gaps require careful implementation strategies.

IT decision-makers should focus on hybrid approaches that leverage multimodal AI for appropriate use cases while maintaining human oversight for critical processes. The competitive dynamics between major providers are driving both innovation and cost reductions, but organizations must balance performance requirements with infrastructure costs and security considerations.

The enterprise multimodal AI market is transitioning from experimental deployments to production-critical systems, requiring mature operational practices and risk management frameworks. Success will depend on organizations’ ability to navigate the “jagged frontier” while capturing the productivity benefits of advanced AI capabilities.

FAQ

What is the current reliability rate for enterprise multimodal AI systems?

According to Stanford HAI’s 2026 AI Index report, frontier AI models fail approximately one in three production attempts on structured benchmarks, which corresponds to a success rate of roughly two in three. This reliability gap is the primary operational challenge for enterprise deployments.

How much do enterprise multimodal AI implementations cost?

Costs vary significantly by provider and model complexity. Microsoft’s MAI-Image-2-Efficient offers enterprise pricing at $5 per million text input tokens and $19.50 per million image outputs, representing a 41% reduction from flagship model pricing.

What are the main infrastructure requirements for multimodal AI deployment?

Enterprise deployments require substantial power and compute resources, with global AI data centers consuming 29.6 gigawatts. Organizations also need robust error handling systems, GPU optimization for throughput efficiency, and careful vendor risk management due to supply chain concentration.