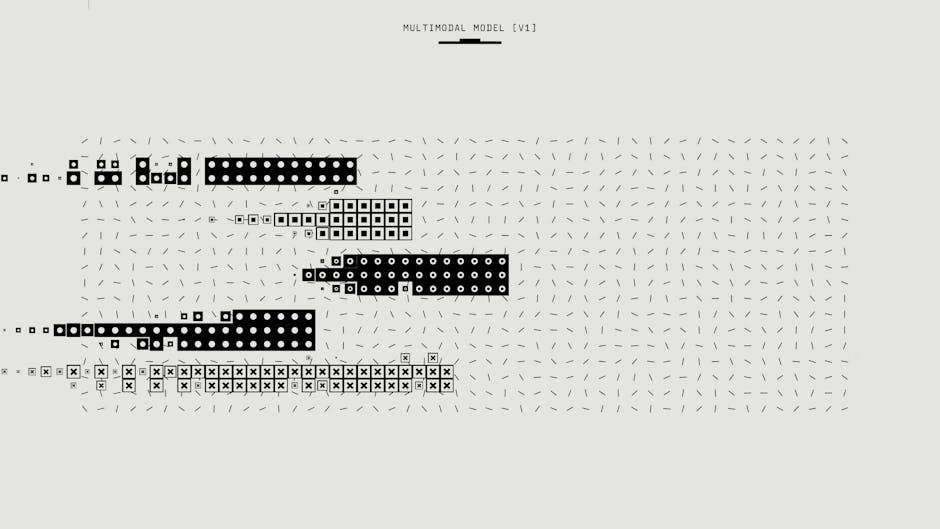

Multimodal AI Transforms Enterprise Operations at Scale

Enterprise organizations are rapidly deploying multimodal artificial intelligence systems that combine vision, language, and audio processing to automate complex workflows across industries. According to Google’s latest enterprise AI report, 1,302 real-world generative AI use cases are now in production across leading organizations, with multimodal applications representing the fastest-growing segment of enterprise AI adoption.

Google’s recent launch of Deep Research and Deep Research Max agents demonstrates the maturation of multimodal enterprise AI, enabling organizations to process both web data and proprietary information through a single API call while generating native charts and infographics. Meanwhile, OpenAI’s ChatGPT Images 2.0 introduces advanced visual generation capabilities that can produce multilingual text, infographics, and complex visual content with enterprise-grade accuracy.

Vision-Language Models Reshape Enterprise Workflows

Vision-language models (VLMs) are becoming critical infrastructure for enterprise operations, particularly in industries requiring document processing, quality control, and visual analysis. These systems can simultaneously process textual instructions and visual inputs, enabling automated workflows that previously required human intervention.

Key enterprise applications include:

- Document automation: Processing invoices, contracts, and compliance documents with combined text and visual analysis

- Quality assurance: Real-time inspection of manufacturing outputs using computer vision integrated with natural language reporting

- Customer service: Automated analysis of product images submitted by customers with natural language problem resolution

- Compliance monitoring: Simultaneous analysis of visual evidence and regulatory text requirements

Integrating vision and language capabilities reduces the need for separate AI systems, simplifying enterprise architecture while improving accuracy. Organizations report a reduction of up to 40% in processing time for document-heavy workflows when they implement multimodal solutions instead of single-modal approaches.

Clinical and Scientific Applications Drive Adoption

Multimodal AI is proving particularly valuable in regulated industries where precision and compliance are paramount. Research from arXiv demonstrates sophisticated applications in clinical trial management, where automated systems can detect dosing errors in unstructured clinical narratives using comprehensive multi-modal feature engineering.

The clinical AI system combines 3,451 features spanning traditional NLP, dense semantic embeddings, and transformer-based medical pattern recognition to achieve a test ROC-AUC of 0.8725. ROC-AUC measures how reliably a model scores positive cases above negative ones, so it describes ranking quality rather than plain accuracy. This level of performance meets enterprise requirements for safety-critical applications while processing over 42,000 clinical trial narratives.
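To make the ROC-AUC figure concrete, the following minimal sketch computes the metric directly from its definition: the fraction of positive/negative pairs in which the positive example receives the higher score, with ties counted as half. The labels and scores are made-up toy data, not values from the cited research, and the function is an illustration rather than the authors' evaluation code.

```python
def roc_auc(labels, scores):
    """ROC-AUC as the probability that a random positive outranks a random negative."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")

    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0   # positive correctly ranked above negative
            elif p == n:
                wins += 0.5   # ties count as half a win
    return wins / (len(pos) * len(neg))


# Toy data (hypothetical): 1 = narrative contains an error, 0 = it does not.
labels = [1, 0, 1, 0, 0, 1]
scores = [0.9, 0.2, 0.4, 0.5, 0.1, 0.8]

auc = roc_auc(labels, scores)
print(f"ROC-AUC: {auc:.4f}")
```

In this toy set, eight of the nine positive/negative pairs are ordered correctly. The pairwise loop is quadratic, so a rank-based implementation would be the usual choice for a dataset of tens of thousands of narratives.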

MIT researchers are extending multimodal capabilities into engineering applications, developing “digital twins” that mirror physical systems through combined visual, textual, and sensor data processing. These systems enable real-time prediction and control of complex industrial processes, from combustion systems to aerospace component optimization.

Enterprise Integration and Scalability Considerations

Successful enterprise deployment of multimodal AI requires careful attention to integration architecture and scalability requirements. Organizations must address several key technical considerations:

Infrastructure Requirements:

- High-bandwidth data pipelines capable of processing multiple data types simultaneously

- GPU clusters optimized for both computer vision and natural language processing workloads

- Storage systems designed for mixed-media datasets with appropriate access patterns

Integration Architecture:

- API-first design enabling seamless connection to existing enterprise systems

- Support for Model Context Protocol (MCP) for third-party data source integration

- Containerized deployment models supporting both cloud and on-premises environments

Performance Optimization:

- Feature selection techniques that balance model performance with computational efficiency

- Ensemble approaches that improve reliability while maintaining acceptable latency

- Caching strategies for frequently accessed multimodal content
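As one way to picture the caching strategy in the list above, here is a minimal sketch of a small least-recently-used cache keyed on a hash of the prompt plus the image bytes, so that identical multimodal requests skip a second model call. The class name `MultimodalCache`, the `fake_model` stand-in, and the eviction size are all hypothetical; a production system would more likely use a shared cache service.

```python
import hashlib
from collections import OrderedDict


class MultimodalCache:
    """Small in-memory LRU cache keyed by the content of a (prompt, image) pair."""

    def __init__(self, max_entries=128):
        self.max_entries = max_entries
        self._store = OrderedDict()
        self.hits = 0
        self.misses = 0

    @staticmethod
    def make_key(prompt, image_bytes):
        h = hashlib.sha256()
        encoded = prompt.encode("utf-8")
        # Length-prefix the prompt so the prompt/image boundary is unambiguous.
        h.update(len(encoded).to_bytes(8, "big"))
        h.update(encoded)
        h.update(image_bytes)
        return h.hexdigest()

    def get_or_compute(self, prompt, image_bytes, compute):
        key = self.make_key(prompt, image_bytes)
        if key in self._store:
            self._store.move_to_end(key)      # mark as most recently used
            self.hits += 1
            return self._store[key]
        self.misses += 1
        result = compute(prompt, image_bytes)
        self._store[key] = result
        if len(self._store) > self.max_entries:
            self._store.popitem(last=False)   # evict the least recently used entry
        return result


calls = []

def fake_model(prompt, image_bytes):
    """Stand-in for an expensive multimodal model call (hypothetical)."""
    calls.append(prompt)
    return f"{prompt}: {len(image_bytes)} bytes"


cache = MultimodalCache(max_entries=2)
first = cache.get_or_compute("describe defect", b"\x89PNG...", fake_model)
second = cache.get_or_compute("describe defect", b"\x89PNG...", fake_model)
```

Hashing the raw bytes only catches exact repeats. Near-duplicate images, such as the same photo re-encoded, would need a perceptual hash or embedding lookup, which this sketch does not attempt.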

Google’s Deep Research agents exemplify enterprise-ready architecture by providing unified API access to both web and proprietary data sources, eliminating the complexity of managing multiple AI services for different data types.

Security and Compliance Framework

Multimodal AI systems present unique security challenges that enterprise IT teams must address through comprehensive governance frameworks. The combination of visual and textual data processing creates additional attack vectors and privacy considerations.

Data Protection Requirements:

- End-to-end encryption for all data types in transit and at rest

- Role-based access controls that account for different sensitivity levels across modalities

- Audit trails that track both input data types and generated outputs

- Compliance with industry-specific regulations (HIPAA, SOX, GDPR)
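The access-control and audit-trail items above can be sketched together. The example below assumes a hypothetical policy table mapping each role to the modalities it may access, and it records every decision, allowed or denied, in an in-memory log. The role names, users, and log fields are illustrative only and do not come from any specific product or regulation.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical policy: which input modalities each role may submit or view.
ROLE_PERMISSIONS = {
    "claims_analyst": {"text", "image"},
    "support_agent": {"text"},
}


@dataclass
class AuditLog:
    entries: list = field(default_factory=list)

    def record(self, user, role, modality, allowed):
        self.entries.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "role": role,
            "modality": modality,
            "allowed": allowed,
        })


def authorize(user, role, modality, log):
    """Check a per-modality permission and always write an audit entry."""
    allowed = modality in ROLE_PERMISSIONS.get(role, set())
    log.record(user, role, modality, allowed)
    return allowed


log = AuditLog()
ok = authorize("alice", "claims_analyst", "image", log)
denied = authorize("bob", "support_agent", "image", log)
```

Logging denied requests as well as granted ones is a deliberate choice here: the audit trail then shows attempted access to a sensitive modality, not only successful access.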

Model Security:

- Adversarial testing across all input modalities to identify potential vulnerabilities

- Regular model validation to detect drift or bias in multimodal outputs

- Secure model deployment pipelines with version control and rollback capabilities

- Monitoring systems that detect anomalous behavior across different data types

Organizations implementing multimodal AI must also consider intellectual property protection, particularly when processing proprietary visual content alongside textual data. Hybrid cloud deployments are becoming common, allowing sensitive visual data to remain on-premises while leveraging cloud-based language processing capabilities.

Cost Optimization and ROI Metrics

Enterprise adoption of multimodal AI requires careful cost analysis and ROI measurement frameworks. Organizations report significant variations in implementation costs depending on use case complexity and data volume requirements.

Cost Factors:

- Computational overhead for processing multiple data types simultaneously

- Data storage and bandwidth requirements for high-resolution visual content

- Training costs for custom models adapted to specific enterprise domains

- Integration and change management expenses

ROI Measurement:

- Reduction in manual processing time for document-intensive workflows

- Improved accuracy rates leading to decreased error correction costs

- Enhanced customer experience metrics through faster, more accurate responses

- Compliance cost reduction through automated monitoring and reporting

Early enterprise adopters report a 20-50% cost reduction in document processing workflows and a 30-60% improvement in quality control accuracy when implementing comprehensive multimodal solutions.
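A short worked example shows how a reported cost-reduction range translates into savings and a payback period. The baseline annual processing cost and the implementation cost below are hypothetical placeholders; only the 20% and 50% bounds come from the range reported above.

```python
def annual_savings(baseline_cost, reduction):
    """Savings per year given a baseline cost and a fractional reduction."""
    return baseline_cost * reduction


def payback_months(implementation_cost, baseline_cost, reduction):
    """Months needed for cumulative savings to cover the implementation cost."""
    monthly_savings = annual_savings(baseline_cost, reduction) / 12
    return implementation_cost / monthly_savings


BASELINE = 1_000_000      # hypothetical annual document-processing cost
IMPLEMENTATION = 150_000  # hypothetical one-time implementation cost

low = annual_savings(BASELINE, 0.20)
high = annual_savings(BASELINE, 0.50)
slow = payback_months(IMPLEMENTATION, BASELINE, 0.20)
fast = payback_months(IMPLEMENTATION, BASELINE, 0.50)

print(f"Annual savings: {low:,.0f} to {high:,.0f}")
print(f"Payback period: {fast:.1f} to {slow:.1f} months")
```

The spread between the two ends of the range is wide enough that the payback period more than doubles, which is why measuring a baseline before implementation matters.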

https://x.com/sundarpichai/status/2046627545333080316

What This Means

The rapid advancement of multimodal AI capabilities represents a fundamental shift in enterprise automation strategy. Organizations can now deploy unified AI systems that process diverse data types through single interfaces, reducing complexity while improving performance. The combination of improved accuracy, reduced integration overhead, and comprehensive enterprise features makes multimodal AI a strategic priority for IT leaders.

However, successful implementation requires careful attention to infrastructure scalability, security frameworks, and change management processes. Organizations should prioritize use cases with clear ROI metrics and established compliance requirements while building internal capabilities for managing multimodal AI systems at scale.

The emergence of enterprise-ready multimodal platforms from major cloud providers signals the technology’s transition from experimental to production-critical status, making strategic planning and pilot program development essential for maintaining competitive advantage.

FAQ

Q: What are the primary infrastructure requirements for deploying multimodal AI in enterprise environments?

A: Enterprise multimodal AI requires high-bandwidth data pipelines, GPU clusters optimized for mixed workloads, unified storage systems for different data types, and API-first integration architecture supporting both cloud and on-premises deployment models.

Q: How do organizations measure ROI for multimodal AI implementations?

A: Key ROI metrics include reduction in manual processing time, improved accuracy rates, enhanced customer experience scores, and compliance cost reduction. Early adopters report 20-50% lower costs in document processing workflows. Organizations should establish baseline measurements before implementation and track improvements across all affected workflows.

Q: What security considerations are unique to multimodal AI systems?

A: Multimodal AI requires end-to-end encryption across all data types, role-based access controls accounting for different sensitivity levels, comprehensive audit trails, adversarial testing across input modalities, and secure model deployment pipelines with version control capabilities.