Key takeaways

- AI customer service has evolved from keyword-matching FAQ bots to LLM-powered virtual agents that can understand complex queries, access company knowledge bases, and execute actions.

- Klarna reported its AI assistant handling the equivalent work of 700 full-time agents within months of launch, though specific productivity claims vary by study.

- The winning deployment pattern pairs AI for routine queries (password resets, order status, simple troubleshooting) with human escalation for complex or emotional cases.

- Quality depends heavily on RAG over accurate, up-to-date knowledge bases, clear escalation logic, and ongoing quality monitoring.

- Poor AI customer service frustrates users more than no AI at all: badly deployed bots drive customers away.

Three generations of customer-service AI

Generation 1: scripted chatbots

Rule-based decision trees. “Did you mean refund or cancellation?” They handled a narrow set of intents with keyword matching. Useful for deflecting simple FAQs but infamous for dead-ends — the chatbot couldn’t handle anything off-script and would loop back to “Please visit our Help Center”.

Generation 2: intent-classification bots

Platforms like Dialogflow, Amazon Lex, and IBM Watson Assistant used NLP to classify queries into intents and extract entities. Better than pure keyword matching but required extensive per-intent training data. Each new topic meant new training data, new utterance variants, new testing.

Generation 3: LLM-powered virtual agents

Post-ChatGPT, customer-service AI has shifted to large language models. LLMs understand intent without per-intent training, handle multi-turn conversation naturally, and can be grounded in a company’s knowledge base via retrieval-augmented generation (RAG). See our large language models and RAG primers for the underlying machinery.

What modern AI agents can do

- Answer product questions using RAG over documentation, FAQs, and policy pages.

- Troubleshoot common issues — reset connections, verify settings, run diagnostics.

- Execute account actions — update shipping addresses, pause subscriptions, issue refunds (within configured limits).

- Handle multi-step conversations — remember context across turns, clarify ambiguities.

- Escalate intelligently — recognize when a case exceeds their capability and hand off with context.

- Work in many languages — a single LLM handles most customer-facing languages; earlier systems needed per-language builds.

Quantified impact

Klarna publicly reported in 2024 that its AI assistant handled 2.3 million conversations, equivalent to the work of 700 full-time agents, with customer-satisfaction scores similar to those of human agents. Intercom, Zendesk, and ServiceNow report comparable productivity gains in enterprise deployments. Specific numbers depend heavily on query mix and measurement methodology. The key metric is the “deflection rate” (the fraction of queries resolved without human involvement), which typically lands in the 40-80% range for well-deployed systems.
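As a rough illustration of the metric, the sketch below computes a deflection rate from a list of conversation records. The `Conversation` fields and the rule that abandoned chats do not count as deflected are assumptions made for this example; real platforms define the metric in different ways, which is one reason reported numbers vary.

```python
from dataclasses import dataclass


@dataclass
class Conversation:
    """Hypothetical record; field names are illustrative, not a vendor schema."""
    conversation_id: str
    resolved: bool        # the customer's issue was marked resolved
    human_involved: bool  # a human agent joined at any point


def deflection_rate(conversations: list[Conversation]) -> float:
    """Fraction of all conversations resolved with no human involvement."""
    if not conversations:
        return 0.0
    deflected = sum(
        1 for c in conversations if c.resolved and not c.human_involved
    )
    return deflected / len(conversations)


sample = [
    Conversation("c1", resolved=True, human_involved=False),
    Conversation("c2", resolved=True, human_involved=True),
    Conversation("c3", resolved=False, human_involved=False),  # abandoned
    Conversation("c4", resolved=True, human_involved=False),
]
print(f"{deflection_rate(sample):.0%}")  # prints 50%
```

Counting abandoned conversations as deflected would raise the figure for this sample from 50% to 75%, so it is worth checking which definition a vendor uses before comparing numbers.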

Customer-satisfaction impact is mixed. Well-built AI agents match or beat human baselines on simple queries. Badly built ones frustrate users: the “press or say 0 to speak to a human” option exists because early automated systems pushed customers to demand it. For the broader industry picture, see our AI industry coverage.

The escalation problem

The hard question in every deployment is: when should the AI give up and hand off to a human? Escalating too readily wastes human time on things the AI could handle. Escalating too reluctantly leaves users stuck with an AI that can’t help.

Common signals for escalation: the model flagging low confidence, an explicit user request (“I want a human”), sentiment analysis detecting frustration, repetition (the user asking the same thing three times), or account-level triggers (high-value customer, sensitive account status). Good deployments monitor escalation rates and tune thresholds continuously.
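The signals above can be combined into a simple rule-based check. This is a minimal sketch, not a production design: the `TurnSignals` fields, the phrase list, and every threshold are hypothetical values chosen for illustration, and a real deployment would tune them against observed escalation rates.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TurnSignals:
    """Hypothetical per-turn inputs; how each is computed is deployment-specific."""
    user_message: str
    model_confidence: float  # 0.0 to 1.0
    sentiment: float         # -1.0 (frustrated) to 1.0 (happy)
    repeat_count: int        # times the user has asked essentially the same thing
    account_flagged: bool = False  # e.g. high-value or sensitive account


# Illustrative phrases only; real systems usually use an intent classifier.
HUMAN_REQUEST_PHRASES = ("human", "real person", "representative")


def escalation_reason(
    signals: TurnSignals,
    *,
    min_confidence: float = 0.6,
    min_sentiment: float = -0.5,
    max_repeats: int = 3,
) -> Optional[str]:
    """Return the first matching escalation reason, or None to keep the AI on."""
    text = signals.user_message.lower()
    if any(phrase in text for phrase in HUMAN_REQUEST_PHRASES):
        return "explicit_request"
    if signals.account_flagged:
        return "account_trigger"
    if signals.repeat_count >= max_repeats:
        return "repetition"
    if signals.sentiment < min_sentiment:
        return "frustration"
    if signals.model_confidence < min_confidence:
        return "low_confidence"
    return None


turn = TurnSignals("I want a human", model_confidence=0.9,
                   sentiment=0.0, repeat_count=1)
print(escalation_reason(turn))  # prints explicit_request
```

Returning a named reason rather than a bare boolean makes it easier to monitor which signal drives escalations and to tune one threshold at a time.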

The quality dimension

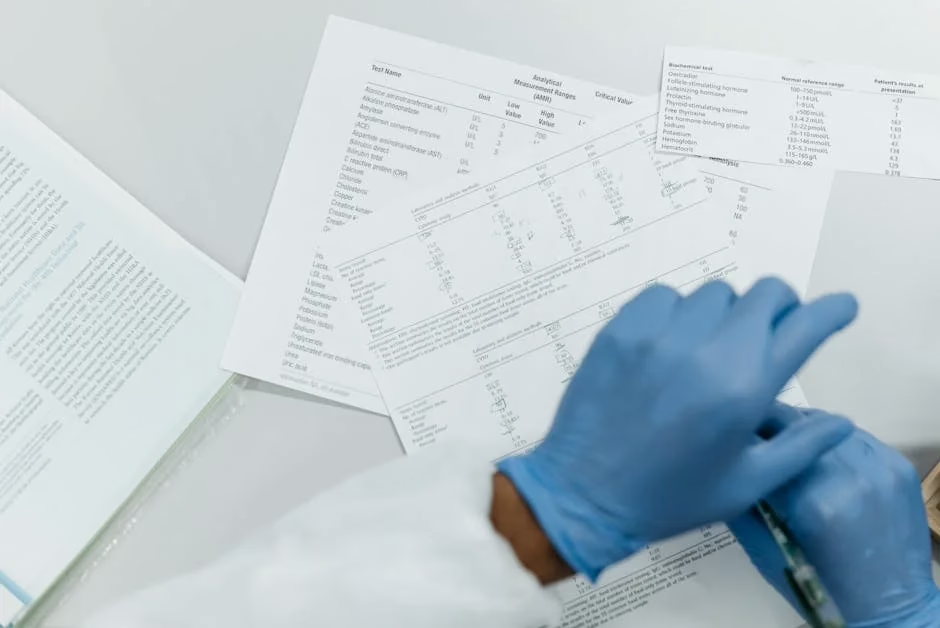

Customer service is where hallucination hurts most. A wrong answer about a refund policy can cause real user harm and lead to chargebacks, social-media complaints, and regulatory issues. Deployed systems apply multiple layers of defense:

- RAG with high-quality, up-to-date knowledge bases.

- Strict instruction templates that tell the model to decline when information isn’t available.

- Policy guardrails — don’t commit to things outside the agent’s authority (large refunds, legal promises).

- Ongoing review of conversation samples to catch quality issues.

- Automated evaluation against test suites.
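Two of these layers, grounding in retrieved passages and a template that instructs the model to decline, can be sketched together. The example below assumes some retriever has already returned scored passages; the `Passage` type, the `min_score` threshold, and the template wording are all illustrative assumptions, and no model call is shown.

```python
from dataclasses import dataclass
from typing import Optional

DECLINE_MESSAGE = (
    "I don't have that information. Let me connect you with a colleague."
)

# Illustrative template; the wording is an assumption, not a vendor default.
PROMPT_TEMPLATE = """You are a customer-support assistant.
Answer ONLY from the passages below. If they do not contain the answer,
reply exactly: "{decline}"

Passages:
{passages}

Customer question: {question}
"""


@dataclass
class Passage:
    source: str   # e.g. a knowledge-base article ID
    text: str
    score: float  # retrieval similarity, higher is better


def build_prompt(
    question: str, passages: list[Passage], min_score: float = 0.5
) -> Optional[str]:
    """Return a grounded prompt, or None when nothing relevant was retrieved."""
    relevant = [p for p in passages if p.score >= min_score]
    if not relevant:
        # Caller should send DECLINE_MESSAGE without calling the model at all.
        return None
    body = "\n".join(f"[{p.source}] {p.text}" for p in relevant)
    return PROMPT_TEMPLATE.format(
        decline=DECLINE_MESSAGE, passages=body, question=question
    )


prompt = build_prompt(
    "Can I return shoes?",
    [
        Passage("returns-policy", "Returns are accepted within 30 days.", 0.8),
        Passage("shipping", "Orders ship in 2 business days.", 0.1),
    ],
)
print(prompt)
```

Skipping the model call when retrieval finds nothing relevant is a deliberate choice in this sketch: it removes the chance of the model improvising an answer in exactly the cases where the knowledge base has no support for one.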

Deployment patterns

Messaging-first

Deploy on web chat, in-app chat, WhatsApp, SMS, Facebook Messenger. Lightweight integration, async, easy to escalate. Intercom, Zendesk, Dixa, Gorgias, and Kustomer all ship chat-first AI features.

Voice channels

Voice is harder: speech recognition, turn-taking, latency, and background noise all add difficulty. Voice AI has matured through 2024-2025 with products from Replicant, PolyAI, Retell AI, Vonage, Twilio, and custom deployments. It is still less mature than text, so confirm that it works reliably in your specific use case before scaling.

Email handling

Parse incoming emails, classify intent, draft responses, route to appropriate queues. Less real-time than chat — errors are easier to catch before sending. Common for B2B customer support where queries are complex and response time less urgent.

Agent assist (copilot mode)

Instead of replacing human agents, AI suggests responses, summarizes conversation history, flags similar past cases, and drafts follow-up emails. This is often more palatable to customer-service orgs than full automation, particularly for complex accounts.

Common failure modes

Outdated knowledge bases

The AI is only as accurate as its sources. Knowledge bases that haven’t been updated after product changes produce confidently wrong answers. Maintaining the knowledge base is often more work than maintaining the AI.
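One inexpensive safeguard is to flag articles that were last reviewed before the most recent change to the product they describe. The sketch below assumes each article records a product name and a review date, and that product change dates are available from somewhere such as release notes; all names and dates here are made up for illustration.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Article:
    """Hypothetical knowledge-base entry."""
    title: str
    product: str
    last_reviewed: date


def stale_articles(
    articles: list[Article], last_changed: dict[str, date]
) -> list[Article]:
    """Articles whose last review predates the product's latest change."""
    return [
        a
        for a in articles
        if a.product in last_changed and a.last_reviewed < last_changed[a.product]
    ]


articles = [
    Article("How refunds work", "billing", date(2024, 1, 10)),
    Article("Resetting your router", "router", date(2024, 6, 1)),
]
# Assumed change log: billing changed after its article was last reviewed.
last_changed = {"billing": date(2024, 3, 5), "router": date(2024, 2, 20)}

for article in stale_articles(articles, last_changed):
    print(article.title)  # prints How refunds work
```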

No handoff context

User explains the problem to the AI, then is transferred to a human who asks them to start over. The user is rightly furious. Good deployments pass conversation history and extracted context to the human agent.
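A handoff can carry this context as a small structured payload. The sketch below is one possible shape, assuming the receiving help-desk tool accepts JSON; the `HandoffPacket` fields are hypothetical and would need to match whatever the actual agent console expects.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class HandoffPacket:
    """Hypothetical payload passed from the AI to the human agent's console."""
    customer_id: str
    escalation_reason: str
    summary: str                                    # short recap for the agent
    extracted: dict = field(default_factory=dict)   # structured facts gathered
    transcript: list = field(default_factory=list)  # full conversation turns

    def to_json(self) -> str:
        return json.dumps(asdict(self), indent=2)


packet = HandoffPacket(
    customer_id="cust-123",
    escalation_reason="explicit_request",
    summary="Customer reports a double charge on order A-1001.",
    extracted={"order_id": "A-1001", "issue": "double_charge"},
    transcript=[
        {"role": "user", "text": "I was charged twice for my order."},
        {"role": "assistant", "text": "Sorry about that. Which order is it?"},
        {"role": "user", "text": "A-1001. I want a human."},
    ],
)
print(packet.to_json())
```

Sending both a summary and the full transcript lets the human agent start from the recap and still check the customer's exact words when needed.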

“Helpful” but off-policy commitments

The AI promises a refund, a credit, or a feature that isn’t actually authorized. Policy guardrails and tool-use restrictions address this: the AI can explain policy, but it cannot commit to a specific action unless a verified tool call has authorized it.
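One way to enforce this is to put the limit in the tool itself rather than in the prompt, so the model cannot talk its way past it. The sketch below is a minimal illustration; the function name, the result type, and the 50.00 auto-approval limit are assumptions for this example, and amounts are held in integer cents to avoid floating-point rounding.

```python
from dataclasses import dataclass


@dataclass
class RefundDecision:
    approved: bool
    needs_human: bool
    message: str


def request_refund(
    order_total_cents: int,
    amount_cents: int,
    *,
    auto_limit_cents: int = 5000,  # hypothetical limit: 50.00
) -> RefundDecision:
    """Approve small refunds automatically; route everything else to a human."""
    if amount_cents <= 0 or amount_cents > order_total_cents:
        return RefundDecision(False, True, "Amount is invalid for this order.")
    if amount_cents > auto_limit_cents:
        return RefundDecision(
            False, True, "Amount exceeds the automatic limit; needs approval."
        )
    return RefundDecision(
        True, False, f"Refund of {amount_cents / 100:.2f} approved."
    )


print(request_refund(8000, 2000).message)      # prints Refund of 20.00 approved.
print(request_refund(8000, 7000).needs_human)  # prints True
```

The assistant would then phrase its reply from the returned decision, so it only tells the customer a refund is coming once the tool has approved it.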

One-size-fits-all responses

A canned AI response to every complaint signals “we don’t care”. Good systems adapt tone to context and route emotional or high-stakes cases to humans fast.

Workforce implications

Customer-service jobs are genuinely being reshaped. Entry-level tier-1 support roles are shrinking at companies that have deployed effective AI. Tier-2 and tier-3 roles (complex cases, escalations, quality review) are stable or growing. New roles have emerged — AI trainers, knowledge-base curators, conversation designers.

Industry analyses vary on net employment impact. Klarna publicly announced hiring pauses in customer service tied to AI productivity gains. Other companies report redeploying rather than reducing headcount. The trajectory is clear: customer-service work is shifting toward supervisory and complex-case roles. The scale of displacement, however, remains an open empirical question.

Frequently asked questions

Do customers actually want to talk to AI?

For simple transactional queries such as “where is my order?” or “reset my password”, customers generally prefer fast AI over slow human support. For emotional, ambiguous, or high-stakes issues, they want humans. Good AI deployments respect that distinction; the failure mode is pushing AI into cases where humans are needed.

How long before AI handles all customer service?

Not in the foreseeable future. Complex cases — complaints about service quality, negotiating with upset customers, unusual account situations, legal or medical contexts — will remain human work. A realistic 5-year projection has AI handling 70-90% of tier-1 volume at well-deployed organizations, with humans focused on the higher-complexity remainder. Fully replacing customer service with AI is neither technically feasible nor desirable for most businesses.

Can I build an AI customer service agent myself?

Yes, and the tools are approachable. SaaS platforms (Intercom Fin, Zendesk AI, Salesforce Einstein, Kustomer) package this for non-technical teams. Developer frameworks (LangChain, LlamaIndex, Inkeep, Rasa with LLMs) support custom builds. Start with a clear scope (what queries should it handle?), a clean knowledge base, and a careful escalation path. Scope creep is the most common source of poor outcomes.