Major AI research laboratories reported significant milestones toward artificial general intelligence (AGI) in 2026, with developments in reasoning, planning, and multimodal capabilities. Anthropic released Claude Design, powered by its most capable vision model, Claude Opus 4.7, while researchers at the University of Wisconsin-Madison and Stanford University introduced Train-to-Test scaling laws that optimize how compute is split between training and inference-time reasoning. These advances show substantial progress toward AI systems with general-purpose capabilities that can operate across diverse domains.

Advanced Reasoning Through Optimized Scaling Laws

The Train-to-Test (T²) scaling framework represents a major shift in how compute is allocated for AGI development. According to VentureBeat, the researchers found that training a smaller model on far more data, then spending the compute this saves on drawing multiple samples at inference time, yields better performance on complex reasoning tasks.

This methodology challenges conventional scaling approaches by jointly optimizing three critical parameters:

- Model parameter size: Smaller than traditional guidelines suggest

- Training data volume: Substantially larger datasets

- Test-time inference samples: Multiple reasoning attempts per query

The implications for AGI research are significant. Rather than pursuing ever-larger models, this approach shows that reasoning performance can be improved by allocating compute strategically between the training and inference phases. The framework is particularly effective on complex problems that require multi-step reasoning, a core component of general intelligence.

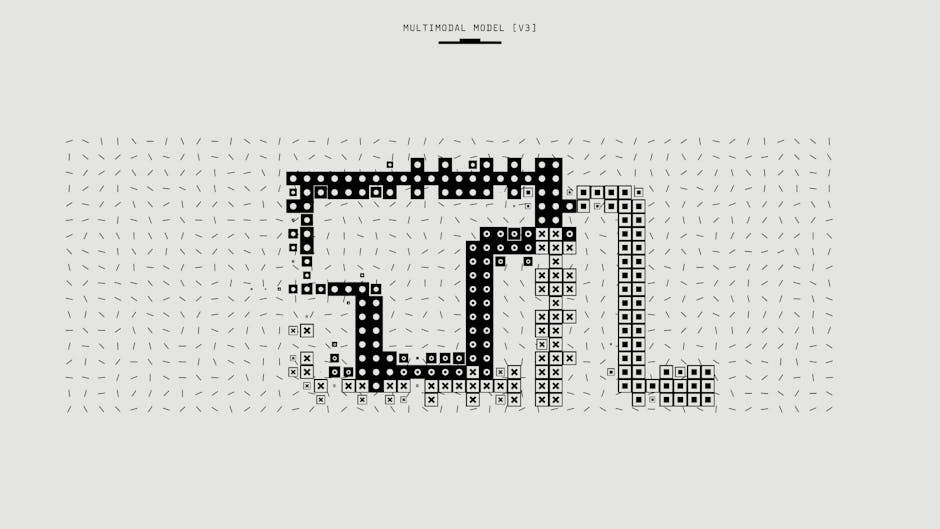

Multimodal AGI Capabilities in Production Systems

Anthropic’s Claude Design launch marks a critical milestone in AGI development by demonstrating sophisticated multimodal reasoning that bridges language understanding and visual creation. Powered by Claude Opus 4.7, the system can generate polished visual work, interactive prototypes, and marketing materials from conversational prompts.

This capability represents several AGI-relevant breakthroughs:

- Cross-modal reasoning: Converting linguistic concepts into visual representations

- Planning and execution: Breaking down complex design tasks into executable steps

- Iterative refinement: Incorporating feedback to improve outputs
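The iterative-refinement step can be pictured as a generate-critique loop. This is a minimal sketch under stated assumptions, not a description of how Claude Design works internally: `generate` and `critique` are hypothetical callables standing in for model calls, and the score threshold and round limit are illustrative values.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

# (prompt, previous draft, feedback) -> new draft
Generate = Callable[[str, Optional[str], Optional[str]], str]
# draft -> (score in [0, 1], feedback for the next revision)
Critique = Callable[[str], tuple[float, str]]


@dataclass
class Result:
    draft: str
    score: float
    history: list[str] = field(default_factory=list)


def refine(prompt: str, generate: Generate, critique: Critique,
           max_rounds: int = 3, target: float = 0.85) -> Result:
    """Produce a draft, then revise it with critic feedback until it scores
    at least `target` or `max_rounds` revisions have been made."""
    draft = generate(prompt, None, None)
    score, feedback = critique(draft)
    history = [draft]
    for _ in range(max_rounds):
        if score >= target:
            break
        draft = generate(prompt, draft, feedback)
        score, feedback = critique(draft)
        history.append(draft)
    return Result(draft, score, history)


# Toy stand-ins for model calls, for demonstration only.
def toy_generate(prompt, previous, feedback):
    return prompt if previous is None else f"{previous} +{feedback}"


def toy_critique(draft):
    return min(1.0, 0.5 + 0.2 * draft.count("+")), "contrast"


result = refine("landing page hero", toy_generate, toy_critique)
print(result.history)
```

Capping the number of rounds keeps the loop bounded even when the critic is never satisfied, which matters when each round is an expensive model call.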

According to VentureBeat, Anthropic reached roughly $20 billion in annualized revenue by early 2026, a sign that advanced AI capabilities can scale commercially. Claude Design's ability to interpret design intent, apply aesthetic principles, and generate production-ready assets illustrates the kind of general problem-solving capability that marks progress toward AGI.

Infrastructure Transformation for Agent-Based Systems

Salesforce’s Headless 360 initiative represents a shift toward AGI-native enterprise infrastructure, designed for AI agents rather than human users. The platform exposes more than 100 tools and capabilities as APIs, MCP (Model Context Protocol) tools, and CLI commands, so AI agents can operate entire business systems without a graphical interface.

This architectural transformation addresses a fundamental AGI requirement: seamless integration with existing systems. Key technical achievements include:

- Complete API exposure: Every platform capability accessible programmatically

- Agent-first design: Built for autonomous operation rather than human interaction

- Tool interoperability: Standardized interfaces for diverse business functions

The initiative shows how general-purpose AI agents can manage complex enterprise workflows. By removing UI dependencies, Salesforce has created an environment where agents can reason about business processes, plan multi-step operations, and execute tasks across integrated platforms, all core capabilities for artificial general intelligence.

Embodied Intelligence and Learning Systems

Robotics attracted $6.1 billion in investment in 2025, roughly four times the 2024 total, according to MIT Technology Review. This funding reflects growing confidence in embodied AGI systems that can interact with physical environments.

Modern robotic learning approaches have evolved from rule-based programming to simulation-based training and real-world adaptation. Key developments include:

- Digital twin environments: High-fidelity simulations for skill acquisition

- Transfer learning: Applying simulated knowledge to real-world scenarios

- Adaptive behavior: Real-time adjustment to environmental variations

These advances represent critical progress toward AGI systems that can generalize across physical and digital domains. The ability to learn complex manipulation tasks, adapt to new environments, and integrate sensory feedback demonstrates the kind of flexible intelligence that characterizes artificial general intelligence.
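One widely used technique for carrying simulated skills over to real hardware is domain randomization: tune a policy across many simulations with randomly perturbed physics, so the real system looks like just another sample. The article does not say which methods the funded companies use, so this is an illustrative sketch only; the point-mass model, PD controller, parameter ranges, and function names are all hypothetical.

```python
import math
import random
from dataclasses import dataclass


@dataclass
class PhysicsParams:
    mass_kg: float
    friction: float      # viscous friction coefficient, N·s/m
    sensor_noise: float  # std-dev of position measurement noise, m


# Hypothetical randomization ranges around a nominal 1 kg mass.
RANGES = {"mass_kg": (0.8, 1.2), "friction": (0.1, 0.5), "sensor_noise": (0.0, 0.01)}


def sample_params(rng: random.Random) -> PhysicsParams:
    """Draw one randomized simulator configuration."""
    return PhysicsParams(**{k: rng.uniform(lo, hi) for k, (lo, hi) in RANGES.items()})


def rollout(kp: float, params: PhysicsParams, rng: random.Random,
            steps: int = 300, dt: float = 0.01, target: float = 1.0) -> float:
    """Drive a 1-D point mass toward `target` with a PD controller and
    return the final position error after steps * dt seconds."""
    kd = 2.0 * math.sqrt(kp)  # critically damped for a nominal 1 kg mass
    x = v = 0.0
    for _ in range(steps):
        measured = x + rng.gauss(0.0, params.sensor_noise)
        force = kp * (target - measured) - kd * v
        accel = (force - params.friction * v) / params.mass_kg
        v += accel * dt  # semi-implicit Euler: velocity first, then position
        x += v * dt
    return abs(target - x)


def pick_gain(candidates: list[float], n_envs: int = 50, seed: int = 0) -> float:
    """Choose the gain with the lowest mean error across randomized simulations."""
    rng = random.Random(seed)
    envs = [sample_params(rng) for _ in range(n_envs)]

    def mean_error(kp: float) -> float:
        return sum(rollout(kp, p, rng) for p in envs) / n_envs

    return min(candidates, key=mean_error)


gain = pick_gain([5.0, 10.0, 20.0, 40.0])
# "Real" system: parameters the tuner never saw directly.
real = PhysicsParams(mass_kg=1.1, friction=0.3, sensor_noise=0.005)
print(gain, rollout(gain, real, random.Random(1)))
```

Tuning against the average over many perturbed simulators, rather than one nominal model, is what lets the chosen controller tolerate a real mass and friction it was never tuned on.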

Integration of Reasoning and Planning Capabilities

The convergence of advanced reasoning, multimodal understanding, and autonomous operation represents a significant milestone in AGI development. Current systems demonstrate sophisticated planning capabilities that break down complex objectives into executable sub-tasks.

Key technical achievements include:

- Hierarchical task decomposition: Breaking complex goals into manageable components

- Context-aware decision making: Adapting strategies based on environmental feedback

- Multi-step reasoning chains: Maintaining logical consistency across extended inference sequences

These capabilities, demonstrated across language models, design systems, and robotic platforms, suggest that general intelligence emerges from the integration of specialized reasoning modules rather than monolithic architectures.
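Hierarchical task decomposition can be pictured as a goal tree that is flattened into an ordered list of primitive steps. In this minimal sketch the tree is written by hand, whereas in the systems described above a model would propose the subtasks; the `Task` type, the `plan` function, and the task names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Task:
    name: str
    subtasks: list["Task"] = field(default_factory=list)


def plan(task: Task, depth: int = 0, max_depth: int = 8) -> list[str]:
    """Flatten a goal tree into ordered primitive steps (depth-first, left to right).

    A task with no subtasks is treated as directly executable.
    """
    if depth > max_depth:
        raise ValueError(f"decomposition deeper than {max_depth} levels at {task.name!r}")
    if not task.subtasks:
        return [task.name]
    steps: list[str] = []
    for sub in task.subtasks:
        steps.extend(plan(sub, depth + 1, max_depth))
    return steps


goal = Task("launch landing page", [
    Task("design", [Task("draft layout"), Task("choose palette")]),
    Task("build", [Task("write HTML")]),
    Task("run accessibility check"),
])
print(plan(goal))
# ['draft layout', 'choose palette', 'write HTML', 'run accessibility check']
```

The depth limit is a simple guard against runaway decomposition, a failure mode that matters once subtasks are generated by a model rather than written by hand.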

What This Means

These developments collectively represent the most significant progress toward AGI in recent years. The combination of optimized scaling laws, multimodal reasoning, agent-native infrastructure, and embodied intelligence creates a foundation for general-purpose AI systems.

The technical results suggest that AGI development benefits from building capabilities across multiple domains in parallel rather than pursuing a single, monolithic solution. Train-to-Test scaling shows that reasoning can be improved through strategic compute allocation, while Claude Design shows that sophisticated multimodal understanding can be deployed at scale.

For the broader AI community, these milestones indicate that AGI timeline estimates may be accelerating. The rapid commercial deployment of advanced capabilities, combined with substantial investment in embodied systems, suggests that general-purpose AI systems could emerge sooner than previously anticipated.

FAQ

What makes Train-to-Test scaling different from traditional approaches?

Train-to-Test jointly optimizes model size, training data volume, and the number of inference-time samples, finding that smaller models trained on more data and given multiple reasoning samples outperform larger models on complex tasks.

How does Claude Design advance AGI capabilities?

Claude Design demonstrates sophisticated multimodal reasoning by converting conversational prompts into polished visual designs, showcasing cross-modal understanding and planning capabilities essential for general intelligence.

Why is Salesforce’s Headless 360 significant for AGI development?

Headless 360 creates AGI-native infrastructure by exposing all platform capabilities as programmatic interfaces, enabling autonomous agents to operate complex business systems without human interface dependencies.