Artificial General Intelligence (AGI) research achieved significant milestones in 2026, with major laboratories demonstrating unprecedented capabilities in reasoning, planning, and autonomous task execution. Anthropic’s Claude Opus 4.7 now leads knowledge work evaluations with an Elo score of 1753, while novel architectures like Object-Oriented World Modeling (OOWM) are restructuring how AI systems approach embodied reasoning and planning tasks.

Frontier Model Performance Reaches New Heights

The competition between leading AGI research laboratories intensified dramatically in 2026. According to VentureBeat, Anthropic’s Claude Opus 4.7 currently outperforms OpenAI’s GPT-5.4 (Elo score: 1674) and Google’s Gemini 3.1 Pro (Elo score: 1314) on the GDPVal-AA knowledge work evaluation benchmark.
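
To put those rating gaps in perspective, the sketch below converts an Elo difference into an expected head-to-head score. It assumes the standard Elo formula with a 400-point scale; the source does not say how GDPVal-AA computes or scales its ratings, so treat the outputs as rough illustrations rather than reported results. The function name `elo_expected_score` is ours.

```python
def elo_expected_score(rating_a: float, rating_b: float, scale: float = 400.0) -> float:
    """Expected score of A against B under the standard Elo model.

    Assumption: the benchmark uses the conventional 400-point logistic scale.
    """
    return 1.0 / (1.0 + 10.0 ** ((rating_b - rating_a) / scale))


# Ratings as quoted in the article.
ratings = {"Claude Opus 4.7": 1753, "GPT-5.4": 1674, "Gemini 3.1 Pro": 1314}
leader = "Claude Opus 4.7"

# Expected score of the leader against each of the other two models.
expected = {
    name: elo_expected_score(ratings[leader], rating)
    for name, rating in ratings.items()
    if name != leader
}

for name, score in expected.items():
    print(f"{leader} vs {name}: expected score {score:.3f}")
```

Under that assumption, a 79-point lead translates to the leader being preferred in roughly six of ten pairwise comparisons, while a 439-point lead implies it is preferred in more than nine of ten.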

However, the performance landscape reveals what researchers call the “jagged frontier” – models excel in some domains while struggling in others. GPT-5.4 maintains superiority in agentic search tasks (89.3% vs 79.3%), while Gemini 3.1 Pro leads in multilingual Q&A and terminal-based coding.

The Stanford HAI AI Index report documents remarkable progress across multiple benchmarks:

- 30% improvement on Humanity’s Last Exam (HLE) in just one year

- Above 87% accuracy on MMLU-Pro multi-step reasoning tasks

- 62.9% to 70.2% performance range on τ-bench real-world agent tasks

- GAIA benchmark scores rising from 20% to 74.5%

Object-Oriented World Modeling: Structured AGI Reasoning

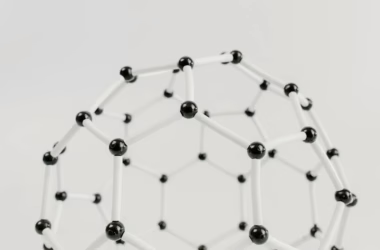

One notable architectural advance is Object-Oriented World Modeling (OOWM), a framework that addresses fundamental limitations in current reasoning approaches. According to the arXiv paper describing it, traditional Chain-of-Thought prompting relies on linear natural language that “fails to explicitly represent the state-space, object hierarchies, and causal dependencies required for robust robotic planning.”

Technical Implementation

OOWM redefines world models as explicit symbolic tuples: W = ⟨S, T⟩, where S represents the environmental state and T represents the transition logic. The architecture leverages:

- Unified Modeling Language (UML) for structured representation

- Class Diagrams for grounding visual perception into object hierarchies

- Activity Diagrams for operationalizing planning into executable control flows

- Three-stage training pipeline combining Supervised Fine-Tuning with Group Relative Policy Optimization
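
The W = ⟨S, T⟩ idea can be illustrated with a toy sketch. This is not the OOWM authors' implementation: it involves no UML parsing and no training, and every class name, attribute, and the cup-and-drawer scene are hypothetical. It only shows what it means to keep state as an explicit object hierarchy (the role of the class diagram) and transitions as guarded steps (the role of the activity diagram).

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class WorldObject:
    """One node in the object hierarchy (hypothetical stand-in for a UML class)."""
    name: str
    kind: str
    attributes: Dict[str, object] = field(default_factory=dict)
    children: List["WorldObject"] = field(default_factory=list)


# S: the environmental state, indexed by object name.
State = Dict[str, WorldObject]


@dataclass
class Action:
    """One element of T: a guard on the state plus an effect that mutates it."""
    name: str
    precondition: Callable[[State], bool]
    effect: Callable[[State], None]


@dataclass
class WorldModel:
    """W = <S, T>: explicit state plus the transitions defined over it."""
    state: State
    transitions: List[Action]

    def run(self, plan: List[str]) -> List[str]:
        """Execute named actions in order, stopping at the first failed guard."""
        by_name = {action.name: action for action in self.transitions}
        executed: List[str] = []
        for step in plan:
            action = by_name[step]
            if not action.precondition(self.state):
                break
            action.effect(self.state)
            executed.append(step)
        return executed


def build_model() -> WorldModel:
    """Build a fresh toy scene: a room containing a cup and a closed drawer."""
    cup = WorldObject("cup", "Container", {"held": False, "location": "table"})
    drawer = WorldObject("drawer", "Storage", {"open": False})
    room = WorldObject("room", "Room", children=[cup, drawer])
    state = {obj.name: obj for obj in (room, cup, drawer)}
    transitions = [
        Action("open_drawer",
               lambda s: not s["drawer"].attributes["open"],
               lambda s: s["drawer"].attributes.update(open=True)),
        Action("pick_cup",
               lambda s: not s["cup"].attributes["held"],
               lambda s: s["cup"].attributes.update(held=True)),
        Action("place_cup_in_drawer",
               lambda s: bool(s["cup"].attributes["held"] and s["drawer"].attributes["open"]),
               lambda s: s["cup"].attributes.update(held=False, location="drawer")),
    ]
    return WorldModel(state, transitions)


# A plan that forgets to open the drawer halts at the failed precondition.
incomplete = build_model().run(["pick_cup", "place_cup_in_drawer"])
# A plan that respects the causal dependency runs to completion.
complete = build_model().run(["open_drawer", "pick_cup", "place_cup_in_drawer"])
print(incomplete)
print(complete)
```

Because the dependency between the drawer being open and the cup being placed is encoded in a precondition, an invalid plan fails at a specific, inspectable step instead of producing a plausible-sounding but unexecutable narrative.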

Evaluations on the MRoom-30k benchmark demonstrate that OOWM “significantly outperforms unstructured textual baselines in planning coherence, execution success, and structural fidelity,” establishing a new paradigm for structured embodied reasoning.

Enterprise AGI Deployment Challenges Persist

Despite impressive benchmark performance, real-world AGI deployment faces significant reliability challenges. Stanford HAI research reveals that frontier models fail roughly one in three production attempts on structured benchmarks.

This inconsistency is the “jagged frontier” in practice: the boundary where AI systems excel, then suddenly fail. As Stanford HAI researchers note: “AI models can win a gold medal at the International Mathematical Olympiad but still can’t reliably tell time.”

Enterprise adoption statistics for 2026:

- 88% adoption rate across enterprise organizations

- One-third failure rate on structured production tasks

- Increasing difficulty in model auditing and reliability assessment

The gap between capability demonstrations and production reliability remains the defining operational challenge for IT leaders implementing AGI systems.
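
A short calculation shows why a one-in-three failure rate matters so much operationally. The sketch below treats the reported figure as a per-step failure probability and assumes steps and retries are statistically independent. Neither assumption comes from the Stanford HAI data, so the numbers are illustrative only, and the function names are ours.

```python
def workflow_success(per_step_success: float, steps: int) -> float:
    """Probability that every step of a workflow succeeds.

    Assumption: steps fail independently with the same probability.
    """
    return per_step_success ** steps


def success_with_retries(per_attempt_success: float, attempts: int) -> float:
    """Probability that at least one of several independent attempts succeeds."""
    return 1.0 - (1.0 - per_attempt_success) ** attempts


# Illustrative reading of the "one in three" failure rate as per-step failure.
p = 2.0 / 3.0

five_step = workflow_success(p, steps=5)
three_tries = success_with_retries(p, attempts=3)

print(f"5-step workflow, no retries: {five_step:.3f}")
print(f"single step, up to 3 attempts: {three_tries:.3f}")
```

Under these assumptions, chaining five such steps succeeds only about 13% of the time, while allowing three attempts at a single step raises its success rate to about 96%. That asymmetry is one reason verification and retry logic tend to matter as much as raw model capability in multi-step deployments.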

Autonomous Agent Capabilities Expand

AGI research increasingly focuses on autonomous agent development, with systems now executing complex multi-step workflows without continuous human supervision. Companies like Traza demonstrate practical applications, deploying AI agents that autonomously handle vendor outreach, request-for-quote generation, order tracking, and invoice processing.

According to VentureBeat, Traza’s approach represents a shift from recommendation-based AI to fully autonomous execution: “AI agents that don’t just recommend procurement actions — they execute them autonomously.”

Key autonomous capabilities emerging in 2026:

- Multi-step workflow execution without human intervention

- Tool-use integration across enterprise software systems

- Long-horizon planning for complex business processes

- Adaptive decision-making based on environmental feedback

Regulatory Challenges Shape AGI Development

AGI development increasingly intersects with regulatory frameworks, particularly around safety protocols and deployment restrictions. Wired reports that political figures like Alex Bores, who cosponsored New York’s RAISE Act, advocate for “rigorous AI regulation” requiring major AI firms to implement and publish safety protocols.

The RAISE Act, which became law in 2025, mandates:

- Published safety protocols for major AI models

- Implementation guardrails for deployment

- Transparency requirements for model capabilities

This regulatory landscape influences how AGI research laboratories approach model development and release strategies, with some organizations restricting access to their most powerful systems pending safety evaluations.

What This Means

The AGI research milestones of 2026 represent a critical inflection point where theoretical capabilities increasingly translate into practical applications. The emergence of structured reasoning frameworks like OOWM addresses fundamental limitations in current approaches, while benchmark performance continues advancing across multiple domains.

However, the persistent reliability gap between demonstration and production deployment highlights the technical challenges that remain before true AGI deployment. The “jagged frontier” phenomenon suggests that consistent, reliable general intelligence may require fundamental architectural innovations rather than incremental scaling.

The intersection of rapid capability advancement with regulatory frameworks indicates that AGI development will increasingly balance technical innovation with safety considerations and societal impact assessments.

FAQ

What makes Object-Oriented World Modeling different from traditional AI reasoning?

OOWM structures reasoning through explicit symbolic representations rather than linear natural language, using UML diagrams to represent object hierarchies and causal dependencies for more robust planning.

Why are frontier models failing in production despite high benchmark scores?

Models exhibit “jagged frontier” performance – excelling in some domains while failing unpredictably in others, creating reliability challenges when deployed in real-world enterprise workflows.

How do current AGI capabilities compare to human-level performance?

While models reach gold-medal level on specific benchmarks such as mathematical competitions, they still struggle with basic tasks like telling time, which indicates that significant gaps remain before true general intelligence.
