Sakana AI on Tuesday released research detailing its “RL Conductor,” a 7-billion-parameter language model trained with reinforcement learning to automatically orchestrate multiple frontier LLMs, including GPT-5, Claude Sonnet 4, and Gemini 2.5 Pro. According to VentureBeat, the system achieves state-of-the-art results on reasoning and coding benchmarks while using fewer API calls than manually designed multi-agent pipelines.

The RL Conductor serves as the backbone of Fugu, Sakana AI’s commercial multi-agent orchestration service. The model dynamically analyzes inputs, distributes tasks among worker LLMs, and coordinates responses without requiring hardcoded workflows that break when query patterns shift.

Moving Beyond Manual Agent Frameworks

Many agentic AI systems today rely on manually designed workflows, such as LangChain pipelines, which become bottlenecks in production. Yujin Tang, a co-author of the research paper published on arXiv, told VentureBeat that these frameworks “fall short because they are inherently rigid and constrained.”

According to Tang, the problem surfaces at scale. “While using frameworks with hard-coded pipelines like LangChain and Mixture-of-Agents can work well for specific use cases,” Tang explained, “in production, an inherent bottleneck arises when targeting domains with large user bases with very heterogeneous demands.”

Tang noted that achieving “real-world generalization in such heterogeneous applications inherently necessitates going beyond human-hardcoded designs.” The RL Conductor addresses this by learning coordination strategies through reinforcement learning instead of following predetermined rules.

How RL Conductor Works

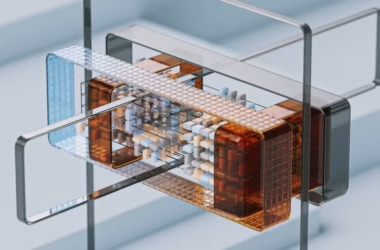

The RL Conductor operates as a meta-model that sits above a pool of worker LLMs. When it receives a query, it analyzes what the input requires and decides which models are best suited to each part of the task. It then distributes the work among the selected models and combines their outputs into a final response.

This approach allows the system to leverage the specific strengths of different models. For complex reasoning tasks, it might route portions to GPT-5, while directing coding-specific queries to models optimized for programming tasks. The coordination happens dynamically based on the input characteristics rather than following preset rules.
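The plan, dispatch, and aggregate loop described above can be sketched in Python. This is a minimal sketch under loud assumptions: the worker names (`reasoning-model`, `coding-model`, `general-model`) are hypothetical labels, each worker is a local stub rather than a real API client, and `plan_query` uses a keyword heuristic purely as a placeholder for the learned 7B policy. Sakana AI's conductor learns this mapping instead of hard-coding it, so only the overall shape of the loop carries over.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical worker pool. Each "model" is a local stub that tags its input;
# a real deployment would wrap provider API clients here instead.
Worker = Callable[[str], str]


def make_stub(name: str) -> Worker:
    """Return a fake worker that echoes the subtask prefixed with its name."""
    return lambda task: f"[{name}] {task}"


WORKERS: Dict[str, Worker] = {
    name: make_stub(name)
    for name in ("reasoning-model", "coding-model", "general-model")
}


def plan_query(query: str) -> List[Tuple[str, str]]:
    """Placeholder for the learned conductor policy (assumption).

    The RL Conductor learns which workers to use; this keyword heuristic is
    hard-coded only to show what a routing decision looks like."""
    q = query.lower()
    steps: List[Tuple[str, str]] = []
    if any(k in q for k in ("code", "function", "bug")):
        steps.append(("coding-model", query))
    if any(k in q for k in ("prove", "why", "reason")):
        steps.append(("reasoning-model", query))
    if not steps:
        steps.append(("general-model", query))  # fallback worker
    return steps


def conduct(query: str) -> dict:
    """Plan, dispatch each subtask to its worker, then aggregate the outputs."""
    steps = plan_query(query)
    outputs = [WORKERS[name](task) for name, task in steps]
    # Naive aggregation: concatenate. A real conductor could instead ask a
    # worker to synthesize the partial answers.
    return {"answer": "\n".join(outputs), "api_calls": len(outputs)}


if __name__ == "__main__":
    print(conduct("Explain why this code is slow"))
```

The `api_calls` count in the result is the quantity an efficiency-aware conductor would try to keep low: in this sketch, a query that matches both keyword sets costs two calls, while a plain summarization request falls through to a single general-purpose worker.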

Reinforcement learning trained the conductor to balance performance against efficiency: it learns to minimize API calls while maximizing output quality, which cuts costs compared with traditional multi-agent approaches that may query several models unnecessarily.
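One way to picture this trade-off is a reward that subtracts a penalty per API call from answer quality. The sketch below assumes a linear penalty (`cost_weight`) and invented quality scores and call counts for three hypothetical routing plans; neither the reward shape nor the numbers come from the paper. It also swaps in a toy epsilon-greedy bandit for the paper's reinforcement learning setup, solely to show how a cost term changes which plan gets learned.

```python
import random
from typing import Dict, Tuple

# Hypothetical routing plans: (answer quality in [0, 1], API calls used).
# These numbers are invented for illustration, not results from the paper.
PLANS: Dict[str, Tuple[float, int]] = {
    "single-strong": (0.80, 1),
    "ensemble-all": (0.85, 3),
    "cheap-single": (0.60, 1),
}


def reward(quality: float, api_calls: int, cost_weight: float) -> float:
    """Quality minus a linear penalty per API call (an assumed reward shape)."""
    return quality - cost_weight * api_calls


def train_bandit(cost_weight: float, steps: int = 500,
                 epsilon: float = 0.1, seed: int = 0) -> str:
    """Toy epsilon-greedy bandit over whole routing plans.

    Far simpler than the paper's RL training; it only shows how a per-call
    cost term steers which plan the learner ends up preferring."""
    rng = random.Random(seed)
    names = list(PLANS)
    totals = {n: 0.0 for n in names}
    counts = {n: 0 for n in names}
    for step in range(steps):
        if step < len(names):
            choice = names[step]  # try every plan once before exploiting
        elif rng.random() < epsilon:
            choice = rng.choice(names)  # explore a random plan
        else:
            # Exploit: pick the plan with the best average reward so far.
            choice = max(names, key=lambda n: totals[n] / counts[n])
        quality, calls = PLANS[choice]
        totals[choice] += reward(quality, calls, cost_weight)
        counts[choice] += 1
    return max(names, key=lambda n: totals[n] / counts[n])


if __name__ == "__main__":
    print(train_bandit(cost_weight=0.0))
    print(train_bandit(cost_weight=0.05))
```

With `cost_weight=0.0` the learner settles on the three-call ensemble, which scores highest on quality alone; with `cost_weight=0.05` the single-call plan wins (a reward of 0.75 versus 0.70), mirroring the idea that an efficiency-aware conductor avoids redundant queries when the quality gain is small.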

Performance Benchmarks and Results

According to Sakana AI’s research, the RL Conductor outperforms individual frontier models including GPT-5 and Claude Sonnet 4 on difficult reasoning and coding benchmarks. The system also surpasses expensive human-designed multi-agent pipelines while requiring fewer API calls.

The performance gains come from the conductor’s ability to route queries to the most appropriate models and coordinate their responses effectively. Rather than using a single model for all tasks or querying multiple models redundantly, the system optimizes resource allocation based on task requirements.

The cost efficiency represents a significant advantage for production deployments. Traditional multi-agent systems often make unnecessary API calls or use expensive models for simple tasks. The RL Conductor’s learned coordination reduces these inefficiencies while maintaining or improving output quality.

Commercial Implementation Through Fugu

Sakana AI has commercialized the RL Conductor technology through Fugu, its multi-agent orchestration service. This represents a shift from research prototype to production-ready system capable of handling real-world workloads.

The commercial implementation addresses the scalability challenges that plague manual agentic frameworks. Instead of requiring teams to design and maintain complex coordination logic, Fugu provides an automated solution that adapts to changing query patterns.

For enterprises dealing with diverse AI workloads, this approach offers both performance and operational benefits. Teams can leverage multiple frontier models without building custom orchestration infrastructure or managing the complexity of multi-model coordination.

Industry Context and Implications

The RL Conductor research addresses a growing challenge in enterprise AI deployment. As organizations adopt multiple LLMs for different use cases, the complexity of coordinating these models becomes a significant operational burden.

Current solutions require substantial engineering effort to build and maintain custom pipelines. These systems often break when user behavior changes or new models become available. The RL Conductor’s learned coordination offers a more adaptive approach that can evolve with changing requirements.

The research also highlights the potential for smaller, specialized models to add significant value in AI systems. The 7B conductor shows that targeted training, rather than ever-larger models, can deliver sophisticated coordination capabilities.

What This Means

Sakana AI’s RL Conductor represents a practical approach to multi-model coordination that addresses real production challenges. The system’s ability to outperform individual frontier models while reducing API costs suggests that learned orchestration could become a standard component of enterprise AI infrastructure.

The research suggests that specialized coordination models can extract more value from existing LLMs than manual frameworks do. This approach could accelerate enterprise AI adoption by reducing the engineering complexity required to deploy sophisticated multi-model systems.

For the broader AI industry, the work demonstrates how reinforcement learning can solve coordination problems that resist traditional engineering approaches. As model ecosystems become more diverse, automated orchestration may become essential for practical deployment.

FAQ

What makes RL Conductor different from existing multi-agent frameworks?

RL Conductor uses reinforcement learning to automatically coordinate multiple LLMs, while existing frameworks like LangChain require manual pipeline design. This allows it to adapt to changing query patterns without breaking, unlike hardcoded systems.

How does a 7B model outperform much larger frontier models?

The RL Conductor doesn’t compete directly with frontier models but orchestrates them effectively. It analyzes tasks and routes work to the most appropriate models, then coordinates their outputs, achieving better results than any single model working alone.

Is Fugu available for commercial use now?

Yes, Sakana AI has commercialized the RL Conductor technology through Fugu, its multi-agent orchestration service. The system is designed to handle production workloads and enterprise AI coordination requirements.