Enterprises face new security challenges as AI coding assistants such as GitHub Copilot and Cursor spread across development teams. According to VentureBeat, a quiet hardware shift is pushing large language model usage off corporate networks and onto individual endpoints, creating what security experts call “Shadow AI 2.0”: a threat vector that traditional data loss prevention (DLP) systems cannot detect or control.

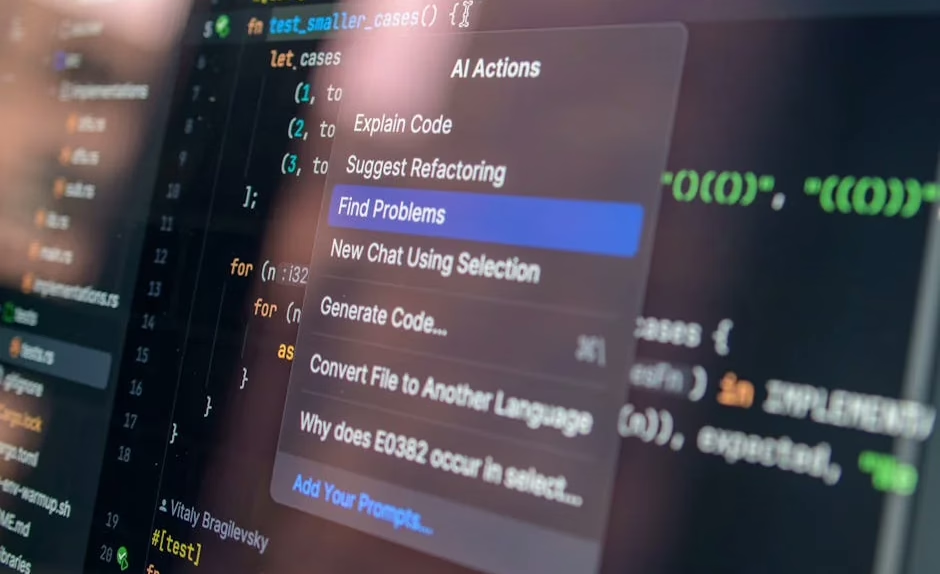

The security implications extend far beyond simple code generation. Modern AI coding tools now operate as autonomous agents that can make architectural decisions, carry out spec-driven development, and improve themselves, all of which alter the enterprise attack surface.

Local Inference Creates New Attack Vectors

The most immediate security risk stems from developers running AI models locally on their devices. Consumer-grade hardware can now execute 70B-parameter models offline, bypassing the network-based monitoring that security teams rely on for threat detection.

Three critical factors have made local AI inference a mainstream security concern:

- Advanced hardware acceleration: MacBook Pros with 64 GB of unified memory can run quantized models at usable speeds

- Mainstream quantization: Model compression techniques now make enterprise-grade AI accessible on standard laptops

- Ecosystem maturity: Tools like Ollama, LM Studio, and GPT4All have simplified local model deployment
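The hardware and quantization points above come down to simple arithmetic. The sketch below estimates the memory needed for model weights alone at different precisions. The function name is illustrative, and the estimate deliberately ignores the KV cache, activations, and runtime overhead. Real quantized formats also often average somewhat more than 4 bits per weight, so treat the result as a lower bound.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights only, in decimal gigabytes."""
    total_bytes = num_params * bits_per_weight / 8
    return total_bytes / 1e9


# A 70B-parameter model at 16-bit precision versus 4-bit quantization.
fp16_gb = weight_memory_gb(70e9, 16)
int4_gb = weight_memory_gb(70e9, 4)

print(f"16-bit: {fp16_gb:.0f} GB, 4-bit: {int4_gb:.0f} GB")
# prints "16-bit: 140 GB, 4-bit: 35 GB"
```

At 16 bits the weights alone exceed a 64 GB laptop, while at 4 bits they fit with room to spare, which is why quantization is the step that moves these models onto endpoints.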

When inference happens locally, traditional DLP solutions become blind to potentially sensitive code interactions. Security teams lose visibility into what proprietary algorithms, credentials, or intellectual property developers may be exposing to unvetted AI models.

The risk grows because local models often lack the safety guardrails and content filtering present in commercial API services. Malicious actors could also craft specialized models designed to extract or manipulate code in ways that bypass standard security controls.

Autonomous Coding Agents Amplify Risk Exposure

Spec-driven development frameworks are enabling AI agents to operate with far greater autonomy in enterprise environments. According to VentureBeat, teams are now completing 18-month architecture projects in 76 days using agentic coding approaches, but this acceleration comes with significant security trade-offs.

Autonomous agents introduce several critical vulnerabilities:

- Privilege escalation risks: Agents operating with developer credentials can potentially access and modify systems beyond their intended scope

- Code injection vectors: Self-modifying agents may introduce malicious code patterns that traditional static analysis tools miss

- Supply chain contamination: Agents pulling from external repositories could inadvertently incorporate compromised dependencies

The “hyperagents” concept introduced by Meta researchers represents an even more concerning development. These systems can rewrite their own problem-solving logic and underlying code, creating a dynamic threat surface that evolves faster than security controls can adapt.

For enterprise security teams, this means traditional vulnerability scanning and code review processes may be insufficient against agents that continuously rewrite their own code.

API-First Architectures Create Expanded Attack Surface

Salesforce’s Headless 360 initiative exemplifies a broader industry shift toward API-first architectures designed for AI agent interaction. While this approach enables powerful automation capabilities, it also exposes every platform capability as a potential attack vector.

The security implications of API-centric development include:

- Authentication bypass opportunities: AI agents may inherit excessive permissions or operate with inadequate access controls

- Data exfiltration risks: Programmatic access to all platform functions increases the potential for unauthorized data extraction

- Lateral movement facilitation: Compromised AI agents could leverage API access to pivot across enterprise systems

Security teams must now consider not just human-driven attacks, but also scenarios where compromised or malicious AI agents systematically exploit API endpoints at machine speed and scale.

Enterprise Defense Strategies

Mitigating AI coding tool risks requires a multi-layered security approach that addresses both traditional and emerging threat vectors.

Network and Endpoint Controls

Implement AI-aware monitoring that can detect local model inference even when traditional DLP fails. Useful signals include sustained, unusual CPU/GPU utilization on endpoints, large file downloads that may indicate model installations, and network traffic to AI model repositories.
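One of the signals above, large model files landing on an endpoint, can be approximated with a simple file scan. The following is a minimal sketch rather than a production detector: the suffix list and the 1 GB threshold are assumptions, the function name is hypothetical, and some tools store weights under other names or extensions that this check would miss.

```python
from pathlib import Path

# Assumed list of common model-weight file extensions; extend as needed.
MODEL_SUFFIXES = frozenset({".gguf", ".safetensors"})


def find_model_files(root, min_bytes=1_000_000_000, suffixes=MODEL_SUFFIXES):
    """Return (path, size) pairs for large files that look like model weights.

    Results are sorted with the largest file first.
    """
    hits = []
    for path in Path(root).rglob("*"):
        try:
            if path.is_file() and path.suffix.lower() in suffixes:
                size = path.stat().st_size
                if size >= min_bytes:
                    hits.append((path, size))
        except OSError:
            # Skip files that vanish or cannot be read during the scan.
            continue
    return sorted(hits, key=lambda hit: hit[1], reverse=True)
```

Calling `find_model_files(Path.home())` would list candidate files in a user's home directory. In practice this kind of check would more likely run as an EDR query or a scheduled inventory job than as a standalone script.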

Deploy endpoint detection and response (EDR) solutions configured to identify AI tool installations and usage patterns. Security teams should maintain inventories of approved AI development tools and establish policies for local model usage.
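The inventory idea above can be sketched as a comparison between what is installed and what is approved. The approved list below is purely hypothetical, and in a real deployment the installed list would come from an EDR or software-inventory export rather than a hard-coded example.

```python
# Hypothetical approved list; a real one would come from the security team.
APPROVED_TOOLS = frozenset({"github copilot", "cursor"})


def unapproved_tools(installed, approved=APPROVED_TOOLS):
    """Return installed tool names not on the approved list.

    Matching ignores case and surrounding whitespace; output is sorted
    case-insensitively.
    """
    approved_normalized = {name.strip().lower() for name in approved}
    flagged = {
        name.strip()
        for name in installed
        if name.strip().lower() not in approved_normalized
    }
    return sorted(flagged, key=str.lower)


# Example inventory for one laptop (illustrative data).
installed_on_laptop = ["Cursor", "Ollama", "LM Studio", "GitHub Copilot"]
print(unapproved_tools(installed_on_laptop))  # ['LM Studio', 'Ollama']
```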

Code Security Frameworks

Establish AI-generated code review protocols that go beyond traditional static analysis. This includes implementing semantic analysis tools capable of detecting AI-generated code patterns and ensuring human oversight for all autonomous agent outputs.

Implement zero-trust principles for AI agent authentication and authorization. Agents should operate with minimal necessary privileges and undergo continuous verification of their actions and outputs.
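A deny-by-default check with an audit trail is one way to picture least privilege for agents. The sketch below is illustrative only: the class names, agent identifier, and action strings are all hypothetical, and a real system would back this with an identity provider and tamper-resistant logging.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AgentGrant:
    """The explicit set of actions one agent is allowed to perform."""
    agent_id: str
    allowed_actions: frozenset


@dataclass
class Authorizer:
    """Deny-by-default authorization that records every decision."""
    grants: dict
    audit_log: list = field(default_factory=list)

    def authorize(self, agent_id: str, action: str) -> bool:
        grant = self.grants.get(agent_id)
        # Unknown agents and unlisted actions are both denied.
        allowed = grant is not None and action in grant.allowed_actions
        self.audit_log.append((agent_id, action, allowed))
        return allowed


# Hypothetical agent that may read code and comment on pull requests only.
authorizer = Authorizer(
    grants={
        "review-bot": AgentGrant(
            "review-bot", frozenset({"repo:read", "pr:comment"})
        )
    }
)

print(authorizer.authorize("review-bot", "repo:read"))   # True
print(authorizer.authorize("review-bot", "repo:write"))  # False
print(authorizer.authorize("unknown-agent", "repo:read"))  # False
```

Because every call is logged, whether allowed or denied, the audit log gives reviewers a record of what each agent attempted, which supports the continuous verification described above.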

Data Protection Measures

Create AI-specific data classification policies that identify which code repositories, algorithms, and intellectual property should never be exposed to AI tools, whether local or cloud-based.
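Such a policy can be made enforceable by expressing it as path patterns that tooling checks before any file is shared with an AI assistant. This is a minimal sketch: the patterns and function name are hypothetical, and note that `*` in `fnmatch` patterns also matches path separators, so `secrets/*` covers nested directories.

```python
from fnmatch import fnmatchcase

# Hypothetical restricted patterns; a real policy would be centrally managed.
RESTRICTED_PATTERNS = ("secrets/*", "*.pem", "crypto/core/*")


def ai_exposure_allowed(path: str, restricted=RESTRICTED_PATTERNS) -> bool:
    """Return False if the repository-relative path matches a restricted pattern."""
    normalized = path.replace("\\", "/")  # treat Windows separators the same way
    return not any(fnmatchcase(normalized, pattern) for pattern in restricted)


print(ai_exposure_allowed("src/app/main.py"))      # True
print(ai_exposure_allowed("secrets/prod/db.env"))  # False
print(ai_exposure_allowed("keys/server.pem"))      # False
```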

Establish secure development environments where AI tools can operate safely without access to production systems or sensitive data.

What This Means

The integration of AI coding tools into enterprise development workflows represents a fundamental shift in the cybersecurity threat landscape. Traditional perimeter-based security models are inadequate against locally running AI models that operate outside network visibility.

Security teams must evolve their strategies to address autonomous agents that can modify code, access APIs, and make architectural decisions at machine speed. The key is implementing layered defenses that provide visibility into AI tool usage while maintaining the productivity benefits that drive their adoption.

Organizations that proactively address these risks through comprehensive AI governance frameworks, enhanced monitoring capabilities, and updated security policies will be better positioned to safely leverage AI coding tools at scale.

FAQ

Q: How can security teams detect local AI model usage on corporate devices?

A: Monitor for unusual resource consumption patterns and large file downloads from AI repositories, and configure EDR solutions to identify AI tool installations and execution signatures.

Q: What are the biggest risks of autonomous coding agents in enterprise environments?

A: Privilege escalation through inherited developer credentials, code injection via self-modifying capabilities, and supply chain contamination from unvetted external dependencies.

Q: Should enterprises ban local AI coding tools entirely?

A: Complete bans are often impractical and may drive shadow usage. Instead, implement controlled environments with approved tools, clear usage policies, and comprehensive monitoring of AI-generated code outputs.
