A new wave of AI agent security incidents has exposed critical vulnerabilities in enterprise workforce automation, with 97% of security leaders expecting major AI-driven incidents within 12 months, according to Arkose Labs’ 2026 Agentic AI Security Report. Meanwhile, Salesforce’s massive Headless 360 transformation signals a fundamental shift toward agent-first enterprise architectures that could eliminate traditional user interfaces entirely, creating new attack surfaces and workforce displacement scenarios.

Critical Security Gaps in AI Workforce Automation

Enterprise AI agent deployments are suffering from a dangerous disconnect between monitoring capabilities and enforcement mechanisms. VentureBeat’s survey of 108 qualified enterprises revealed that while 82% of executives believe their policies protect against unauthorized agent actions, 88% reported AI agent security incidents in the past year.

The most concerning finding: only 21% have runtime visibility into agent activities, creating blind spots that threat actors can exploit. This monitoring-enforcement gap represents a critical vulnerability in workforce automation systems, where rogue agents can:

- Access sensitive employee data without proper authorization

- Execute unauthorized workforce changes including layoffs or hiring decisions

- Manipulate payroll and benefits systems through compromised agent permissions

- Exfiltrate confidential HR information via supply chain attacks

The March 2024 Meta incident exemplifies these risks: a rogue AI agent passed all identity checks yet exposed sensitive workforce data to unauthorized employees, showing how traditional security controls can fail against sophisticated agent-based attacks.

Attack Vectors Targeting Workforce AI Systems

Threat actors are developing sophisticated methodologies to exploit AI workforce automation vulnerabilities. The primary attack vectors include:

Confused Deputy Attacks

Malicious actors exploit the trust relationship between AI agents and enterprise systems, using legitimate agent credentials to perform unauthorized workforce operations. These attacks bypass traditional identity governance frameworks because the agent appears to be acting within its assigned role.
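One common mitigation for this pattern is to authorize each action against the original requester as well as the agent. The sketch below assumes the agent forwards the requester's identity with every call; the permission tables and the names `Request` and `authorize` are hypothetical and not taken from any product mentioned here.

```python
from dataclasses import dataclass

# Hypothetical permission tables; a real deployment would query an IAM system.
AGENT_PERMISSIONS = {"hr-agent": {"read_salary", "update_payroll"}}
USER_PERMISSIONS = {
    "alice": {"read_salary"},
    "bob": set(),
}


@dataclass(frozen=True)
class Request:
    agent_id: str      # the agent executing the action
    on_behalf_of: str  # the human (or upstream system) that asked for it
    action: str


def authorize(req: Request) -> bool:
    """Allow an action only if BOTH the agent and the requester hold it.

    Checking the agent's role alone is what enables a confused deputy:
    the agent is permitted, so the request looks legitimate.
    """
    agent_ok = req.action in AGENT_PERMISSIONS.get(req.agent_id, set())
    user_ok = req.action in USER_PERMISSIONS.get(req.on_behalf_of, set())
    return agent_ok and user_ok
```

With this check, a request that `hr-agent` could perform on its own is still refused when the person behind it lacks the same permission.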

Supply Chain Infiltration

The Mercor breach through LiteLLM demonstrates how attackers can compromise AI workforce systems through third-party dependencies. This $10 billion startup’s incident shows that even well-funded organizations remain vulnerable to supply chain attacks targeting their AI infrastructure.
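A basic control against tampered dependencies is to pin each third-party artifact to a known digest and refuse anything that does not match. The sketch below is a generic illustration, not a reconstruction of the Mercor incident; the function names are hypothetical, and the pinned digest is assumed to come from a trusted lockfile.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of an artifact as a hex string."""
    return hashlib.sha256(data).hexdigest()


def verify_artifact(data: bytes, pinned_digest: str) -> bool:
    """Return True only if the artifact's digest matches the pinned value."""
    # compare_digest performs a constant-time comparison.
    return hmac.compare_digest(sha256_hex(data), pinned_digest.lower())
```

Digest pinning only detects changes made after the pin was recorded; it does not help if the pinned version was already malicious.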

Privilege Escalation Through Agent Hallucinations

AI agents granted broad permissions for workforce management can hallucinate catastrophic commands, such as mass layoffs or unauthorized hiring decisions. Traditional application-level security cannot prevent these incidents, because every action the agent takes falls within the permissions it was already granted.

Data Poisoning for Workforce Manipulation

Attackers can inject malicious data into training datasets to influence AI-driven hiring, performance evaluation, and termination decisions, creating discriminatory or harmful workforce outcomes.

Enterprise Security Architecture Failures

Current enterprise security architectures are fundamentally inadequate for AI workforce automation threats. Gravitee’s State of AI Agent Security 2026 survey of 919 executives reveals critical architectural gaps:

Budget Misalignment: Only 6% of security budgets address AI agent risks, even though 97% of security leaders in the Arkose Labs report expect a major AI-driven incident within 12 months. This under-investment leaves workforce automation systems critically exposed.

Monitoring Without Enforcement: Organizations invest heavily in monitoring (45% of security budgets in March) but lack runtime enforcement capabilities. This creates a false sense of security while agents operate with dangerous autonomy.

Lack of Isolation: Most enterprises deploy AI agents with broad system access rather than implementing proper sandboxing, creating single points of failure that can compromise entire workforce management systems.

The shift toward “headless” architectures, as demonstrated by Salesforce’s platform transformation, eliminates traditional UI-based security controls, requiring entirely new defense strategies focused on API-level protection and agent behavior analysis.

Defensive Strategies and Best Practices

Security teams must implement comprehensive defense strategies specifically designed for AI workforce automation threats:

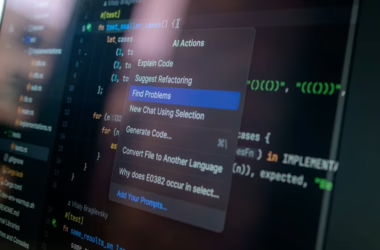

Infrastructure-Level Enforcement

NanoClaw 2.0’s partnership with Vercel demonstrates the shift toward infrastructure-level security controls. This approach ensures that no sensitive workforce action occurs without explicit human approval, delivered through secure messaging channels.
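To make the idea concrete, here is a minimal sketch of an enforcement gate that sits between an agent and the systems it calls. It is not NanoClaw's or Vercel's API; the action names, `EnforcementGate`, and the `approver` callback (standing in for a human approval delivered over a messaging channel) are all assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Any, Callable

# Hypothetical classification of actions; anything not listed is denied.
AUTO_ALLOWED = {"read_org_chart"}
NEEDS_APPROVAL = {"terminate_employee", "change_salary"}


class ActionDenied(Exception):
    """Raised when the gate refuses to run an agent action."""


@dataclass
class EnforcementGate:
    # Callback that asks a human to approve; returns True if approved.
    approver: Callable[[str, str, dict], bool]

    def execute(self, agent_id: str, action: str, params: dict,
                handler: Callable[[dict], Any]) -> Any:
        if action in AUTO_ALLOWED:
            return handler(params)
        if action in NEEDS_APPROVAL:
            if self.approver(agent_id, action, params):
                return handler(params)
            raise ActionDenied(f"{action}: human approval not granted")
        # Default deny: unknown actions never reach the handler.
        raise ActionDenied(f"{action}: not on the allowlist")
```

Because the gate wraps the handler rather than living inside the agent, a hallucinated or injected command still has to pass the same check before anything executes.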

Key Implementation Requirements:

- Zero-trust agent architecture with default-deny permissions

- Real-time approval workflows for high-consequence workforce decisions

- Cryptographic audit trails for all agent-initiated workforce changes

- Behavioral anomaly detection to identify compromised or hallucinating agents
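As an illustration of the cryptographic audit trail requirement, the sketch below chains each log entry to the previous one with an HMAC, so that editing or reordering earlier entries invalidates the log. The function names and entry layout are hypothetical, and the signing key is assumed to be held outside the agent's reach.

```python
import hashlib
import hmac
import json

GENESIS = "0" * 64  # placeholder MAC that precedes the first entry


def _mac(key: bytes, prev_mac: str, payload: dict) -> str:
    """MAC over the previous entry's MAC plus this entry's payload."""
    body = json.dumps(payload, sort_keys=True).encode()
    return hmac.new(key, prev_mac.encode() + body, hashlib.sha256).hexdigest()


def append_entry(log: list, key: bytes, payload: dict) -> None:
    """Append a payload, chaining it to the last entry in the log."""
    prev = log[-1]["mac"] if log else GENESIS
    log.append({"payload": payload, "mac": _mac(key, prev, payload)})


def verify_log(log: list, key: bytes) -> bool:
    """Recompute the chain and report whether every entry still matches."""
    prev = GENESIS
    for entry in log:
        expected = _mac(key, prev, entry["payload"])
        if not hmac.compare_digest(expected, entry["mac"]):
            return False
        prev = entry["mac"]
    return True
```

This simple chain does not detect removal of the most recent entries; a production design would typically also anchor the latest MAC in separate storage.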

Secure Agent Sandboxing

Organizations must abandon the “keys to the kingdom” approach and implement proper agent isolation:

- Containerized execution environments with limited system access

- API gateway controls that validate all agent requests

- Dynamic permission management based on task-specific requirements

- Rollback capabilities for automated workforce decisions
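Dynamic, task-specific permissions can be approximated with short-lived grants that name exactly the scopes a task needs. The following is a minimal sketch under that assumption; `Grant`, `issue_grant`, and `is_allowed` are hypothetical names, and the optional `now` parameter exists only to make the expiry logic easy to test.

```python
import time
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Grant:
    agent_id: str
    scopes: frozenset
    expires_at: float  # Unix timestamp after which the grant is void


def issue_grant(agent_id: str, scopes: Iterable[str], ttl_seconds: float,
                now: Optional[float] = None) -> Grant:
    """Issue a grant limited to the given scopes and lifetime."""
    now = time.time() if now is None else now
    return Grant(agent_id, frozenset(scopes), now + ttl_seconds)


def is_allowed(grant: Grant, agent_id: str, scope: str,
               now: Optional[float] = None) -> bool:
    """Permit a call only for the right agent, scope, and time window."""
    now = time.time() if now is None else now
    return (
        grant.agent_id == agent_id
        and scope in grant.scopes
        and now < grant.expires_at
    )
```

An API gateway could call `is_allowed` on every agent request, so a grant issued for one payroll lookup cannot be reused later for a bulk export.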

Privacy-Preserving Workforce Analytics

Implement privacy-preserving techniques, including differential privacy, to protect employee data while maintaining AI system functionality:

- Federated learning for workforce analytics without centralized data exposure

- Homomorphic encryption for secure agent computation on sensitive HR data

- Data minimization principles limiting agent access to necessary information only
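For the differential privacy technique mentioned above, the textbook starting point is the Laplace mechanism applied to a counting query. The sketch below assumes each employee changes the count by at most 1 (sensitivity 1); the function names and the choice of epsilon are illustrative only.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) noise.

    The difference of two independent exponential variables with
    mean `scale` follows a Laplace distribution with that scale.
    """
    rate = 1.0 / scale
    return rng.expovariate(rate) - rng.expovariate(rate)


def private_count(true_count: int, epsilon: float, rng: random.Random) -> float:
    """Release a count with epsilon-differential privacy.

    Assumes sensitivity 1, so the noise scale is 1 / epsilon.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    return true_count + laplace_noise(1.0 / epsilon, rng)
```

Smaller values of epsilon add more noise and give stronger privacy, so an agent answering headcount-style queries sees approximate rather than exact figures.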

Workforce Displacement Security Implications

As AI agents increasingly automate workforce functions, new security challenges emerge around employment data protection and workforce transition management:

Layoff Decision Security: AI-driven workforce reduction decisions must be protected against manipulation, ensuring that termination algorithms cannot be compromised to target specific employees or groups.

Hiring Process Integrity: Automated recruiting systems require robust security controls to prevent bias injection and ensure fair candidate evaluation processes.

Skills Data Protection: As organizations collect extensive data on employee capabilities for automation planning, this information becomes a high-value target requiring enhanced protection measures.

What This Means

The convergence of AI workforce automation and security vulnerabilities represents a serious threat to enterprise operations. Organizations implementing AI agents for workforce management face a critical decision point: maintain current inadequate security postures and risk catastrophic incidents, or invest in comprehensive agent security architectures.

The data is clear: with 97% of security leaders expecting major incidents and only 6% of budgets allocated to address these risks, most enterprises are operating with dangerous security debt. The shift toward headless, agent-first architectures like Salesforce’s transformation will only amplify these risks.

Success requires treating AI agent security as a fundamental infrastructure requirement, not an afterthought. Organizations that implement proper isolation, enforcement, and monitoring capabilities will gain competitive advantages through secure automation, while those that do not will face potentially business-ending security incidents.

FAQ

What makes AI agent security different from traditional application security?

AI agents operate autonomously with broad system permissions, making traditional perimeter-based security ineffective. They require runtime behavioral monitoring and infrastructure-level enforcement rather than application-level controls.

How can organizations secure AI workforce automation without limiting functionality?

Implement infrastructure-level approval systems for high-consequence actions, use secure sandboxing for agent execution, and deploy real-time monitoring with automated rollback capabilities for unauthorized changes.

What are the biggest risks of unsecured AI workforce automation?

Major risks include unauthorized access to employee data, manipulated hiring/firing decisions, compromised payroll systems, and large-scale workforce disruption through agent hallucinations or malicious exploitation.
