AI-powered coding assistants like GitHub Copilot, Cursor, and Claude Code are fundamentally reshaping how developers write software, but new research reveals that traditional productivity metrics fail to capture their true impact. According to TechCrunch, while these tools achieve 80-90% initial code acceptance rates, real-world productivity gains are far more complex than surface-level measurements suggest.

Developer productivity analytics firm Waydev, which tracks over 10,000 software engineers across 50 companies, found that AI-generated code goes through significantly more revision cycles than human-written code. CEO Alex Circei reports that although initial acceptance rates look impressive, only 10-30% of generated code survives over the long term once necessary revisions and refactoring are counted.

The Architecture Behind Modern AI Coding Assistants

Modern AI coding tools are built on transformer models trained on large code corpora, which lets them pick up programming patterns and use surrounding context. GitHub Copilot launched on OpenAI’s Codex model, a descendant of GPT-3 fine-tuned on billions of lines of code from public repositories, and has since moved to newer models.

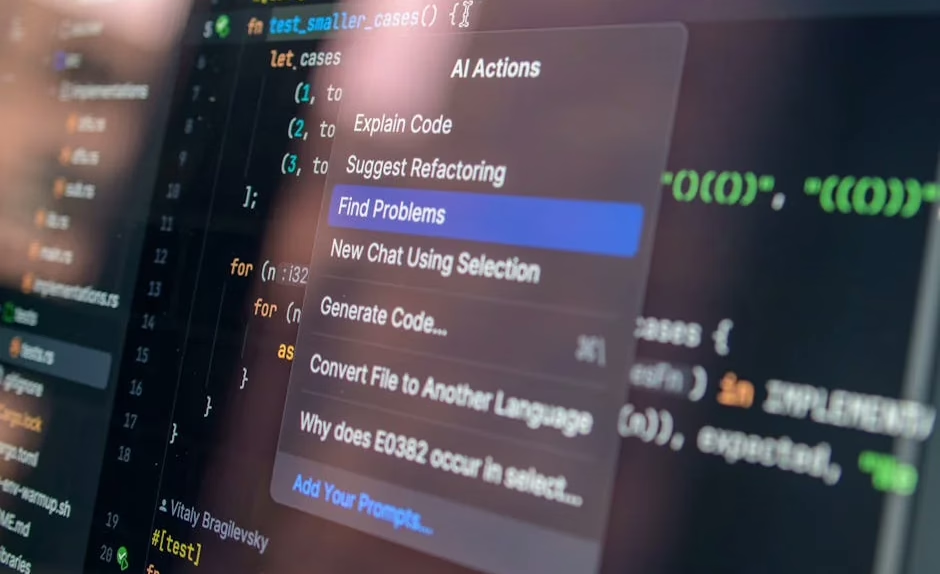

Cursor IDE represents a new generation of AI-native development environments, integrating large language models directly into the coding workflow rather than operating as simple autocomplete tools. The platform employs context-aware reasoning to understand entire codebases, enabling more sophisticated code generation and refactoring capabilities.

Many of these systems use retrieval-augmented generation (RAG): at inference time they look up relevant code snippets and documentation and place them in the model’s prompt, which improves the contextual accuracy of generated code. The underlying neural networks process code as sequences of tokens and apply attention mechanisms to relate variables, functions, and architectural patterns to one another.
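To make the retrieval step concrete, here is a minimal sketch of the idea, not how Copilot or Cursor actually implement it. It ranks a handful of stored snippets against a request using bag-of-words cosine similarity and prepends the best matches to the prompt. Production tools typically use learned embeddings and a vector index instead; the names `tokenize`, `retrieve`, `build_prompt`, and `SNIPPETS` are all illustrative assumptions.

```python
import math
import re
from collections import Counter

# Hypothetical snippet store; a real tool would index the whole repository.
SNIPPETS = [
    "def parse_config(path): return json.load(open(path))",
    "def send_email(to, subject, body): smtp.sendmail(to, subject, body)",
    "def load_config_from_env(): return dict(os.environ)",
]

def tokenize(text):
    """Lowercase the text and split it into alphanumeric words (snake_case is split too)."""
    return re.findall(r"[a-z][a-z0-9]*", text.lower())

def cosine(a, b):
    """Cosine similarity between two token-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(query, snippets, k=2):
    """Return the k snippets most similar to the query."""
    q = Counter(tokenize(query))
    ranked = sorted(
        snippets,
        key=lambda s: cosine(q, Counter(tokenize(s))),
        reverse=True,
    )
    return ranked[:k]

def build_prompt(query, snippets, k=2):
    """Assemble a prompt with retrieved context placed ahead of the task."""
    context = "\n\n".join(retrieve(query, snippets, k))
    return f"# Relevant context\n{context}\n\n# Task\n{query}"

if __name__ == "__main__":
    print(build_prompt("send email with subject", SNIPPETS, k=1))
```

The model never sees the full codebase in this scheme, only the task plus whatever the retriever judged relevant, which is why retrieval quality bounds the quality of the generated code.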

Beyond Token Consumption: Measuring Real Developer Impact

The emergence of “tokenmaxxing” culture in Silicon Valley, where developers compete for larger AI processing budgets, shows how easily spending gets mistaken for productivity. Token consumption is an input metric, not an output measure of software quality or developer efficiency.

Waydev’s analytics platform reveals critical insights about AI coding tool effectiveness:

- Initial acceptance rates: 80-90% of AI-generated code is initially approved

- Revision frequency: AI-assisted code requires 2-3x more subsequent modifications

- Long-term retention: Only 10-30% of generated code remains unchanged after weeks

- Debug cycles: Increased time spent identifying and fixing AI-introduced bugs

These findings suggest that while AI tools accelerate initial code production, they may introduce technical debt that requires additional engineering effort to resolve.
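The gap between the two headline numbers is easier to see with a worked calculation. The sketch below is an assumption about how such metrics could be defined, not Waydev's actual methodology: acceptance is counted per suggestion, and retention is the share of suggested lines still unchanged at the end of an observation window. The `Suggestion` record and the sample data are made up for illustration.

```python
from dataclasses import dataclass

@dataclass
class Suggestion:
    lines_suggested: int   # size of the AI-generated suggestion
    accepted: bool         # did the developer accept it initially?
    lines_surviving: int   # lines still unchanged after the observation window

def initial_acceptance_rate(suggestions):
    """Fraction of suggestions the developer accepted when they were offered."""
    if not suggestions:
        return 0.0
    return sum(s.accepted for s in suggestions) / len(suggestions)

def long_term_retention(suggestions):
    """Fraction of all suggested lines that were accepted and later left unchanged."""
    suggested = sum(s.lines_suggested for s in suggestions)
    if suggested == 0:
        return 0.0
    surviving = sum(s.lines_surviving for s in suggestions if s.accepted)
    return surviving / suggested

# Illustrative data only: five 10-line suggestions, four accepted.
SAMPLE = [
    Suggestion(10, True, 5),
    Suggestion(10, True, 3),
    Suggestion(10, True, 2),
    Suggestion(10, True, 0),
    Suggestion(10, False, 0),
]

if __name__ == "__main__":
    print(f"initial acceptance: {initial_acceptance_rate(SAMPLE):.0%}")
    print(f"long-term retention: {long_term_retention(SAMPLE):.0%}")
```

With this sample, four of five suggestions are accepted (80%), yet only 10 of the 50 suggested lines survive (20%), so a dashboard that reports the first number alone would overstate the useful output.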

Enterprise Integration and Platform Evolution

Major technology companies are rapidly integrating AI capabilities into their development platforms. Salesforce’s Headless 360 initiative represents a paradigm shift toward AI-first software architecture, exposing every platform capability as APIs and CLI commands that AI agents can operate programmatically.

This architectural transformation enables AI coding assistants to:

- Directly manipulate enterprise software without graphical interfaces

- Generate integration code for complex business logic

- Automate deployment pipelines through natural language commands

- Orchestrate multi-service workflows across distributed systems

The initiative ships over 100 new tools and skills immediately available to developers, marking a decisive shift from human-operated interfaces to agent-accessible infrastructure.
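What "agent-accessible" means in practice can be sketched generically. The example below is not Salesforce's API; every name in it (`tool`, `create_record`, `deploy`, `dispatch`) is hypothetical. It only illustrates the pattern: capabilities are registered with a name and description so an agent can list them, then invoked through a structured call rather than through a graphical interface.

```python
import json

TOOLS = {}  # hypothetical in-process registry: tool name -> metadata and callable

def tool(name, description):
    """Register a function so an agent can discover and call it by name."""
    def register(fn):
        TOOLS[name] = {"description": description, "fn": fn}
        return fn
    return register

@tool("create_record", "Create a record of the given type")
def create_record(record_type, fields):
    # Stand-in for a real platform call.
    return {"status": "created", "type": record_type, "fields": fields}

@tool("deploy", "Deploy a named build to an environment")
def deploy(build, environment):
    # Stand-in for a real deployment pipeline trigger.
    return {"status": "deployed", "build": build, "environment": environment}

def list_tools():
    """What an agent would read to learn which capabilities exist."""
    return {name: meta["description"] for name, meta in TOOLS.items()}

def dispatch(call_json):
    """Execute a structured tool call such as a model might emit."""
    call = json.loads(call_json)
    entry = TOOLS.get(call["tool"])
    if entry is None:
        return {"status": "error", "message": f"unknown tool: {call['tool']}"}
    return entry["fn"](**call.get("arguments", {}))

if __name__ == "__main__":
    print(list_tools())
    print(dispatch('{"tool": "deploy", "arguments": {"build": "v1", "environment": "staging"}}'))
```

Because the agent only ever sees names, descriptions, and structured results, the same registry could sit behind an HTTP API or a CLI without the agent-facing contract changing.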

Advanced AI Models Expanding Beyond Code Generation

Anthropic’s Claude Design demonstrates how AI capabilities are expanding beyond traditional coding into visual design and prototyping. Powered by Claude Opus 4.7, the tool transforms conversational prompts into polished visual work, including interactive prototypes and marketing collateral.

This expansion into the application layer represents a significant technical advancement:

- Multi-modal understanding: Processing both textual requirements and visual design principles

- Context-aware generation: Understanding brand guidelines and design systems

- Interactive prototyping: Creating functional user interfaces from natural language descriptions

- Real-time iteration: Enabling fine-grained editing through conversational feedback

The underlying vision model demonstrates sophisticated understanding of design principles, layout composition, and user experience patterns, extending far beyond traditional code completion capabilities.

Performance Optimization and Model Efficiency

As AI coding tools scale across enterprise environments, computational efficiency becomes increasingly critical. Modern implementations employ several optimization techniques:

Model Quantization: Reducing model precision while maintaining performance enables deployment on local development machines rather than requiring cloud inference for every code suggestion.
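As a rough illustration of the quantization idea, the following sketch applies symmetric 8-bit quantization to a short list of weights using one shared scale factor. It is a simplified assumption about the general technique, not the scheme any particular coding assistant uses; real deployments often quantize per channel or per block and may go below 8 bits.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using a single shared scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127 if max_abs else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale

def dequantize(quantized, scale):
    """Recover approximate float weights from the integers and the scale."""
    return [q * scale for q in quantized]

if __name__ == "__main__":
    weights = [0.5, -1.27, 0.01, 1.0]
    quantized, scale = quantize_int8(weights)
    restored = dequantize(quantized, scale)
    for original, approx in zip(weights, restored):
        print(f"{original:+.4f} -> {approx:+.4f}")
```

Each weight now fits in one byte instead of the four used by 32-bit floats, at the cost of a rounding error of at most half the scale per weight.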

Caching Mechanisms: Storing frequently accessed code patterns and API documentation reduces inference latency and improves response times for common programming tasks.
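A caching layer of this kind can be sketched as a small least-recently-used (LRU) cache keyed by the prompt text. This is an illustrative assumption rather than a description of any vendor's implementation; `CompletionCache` and `fake_model` are hypothetical names, and `fake_model` stands in for an expensive inference call.

```python
from collections import OrderedDict

class CompletionCache:
    """Tiny LRU cache that maps prompt text to a previously computed completion."""

    def __init__(self, max_entries=128):
        self.max_entries = max_entries
        self._entries = OrderedDict()
        self.hits = 0
        self.misses = 0

    def get_or_compute(self, prompt, compute):
        """Return the cached completion, or call compute(prompt) and store the result."""
        if prompt in self._entries:
            self._entries.move_to_end(prompt)  # mark as most recently used
            self.hits += 1
            return self._entries[prompt]
        self.misses += 1
        result = compute(prompt)
        self._entries[prompt] = result
        if len(self._entries) > self.max_entries:
            self._entries.popitem(last=False)  # evict the least recently used entry
        return result

def fake_model(prompt):
    """Stand-in for an expensive model call (hypothetical)."""
    return f"# completion for: {prompt}"

if __name__ == "__main__":
    cache = CompletionCache(max_entries=2)
    for prompt in ["sort a list", "read a file", "sort a list"]:
        cache.get_or_compute(prompt, fake_model)
    print(f"hits={cache.hits} misses={cache.misses}")
```

Exact-match keys are the simplest choice; a real tool would also need to invalidate entries when the surrounding code changes, since the same prompt can warrant a different completion in a different context.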

Incremental Learning: Some platforms implement continuous learning from developer feedback, adapting to specific coding styles and organizational patterns over time.

These optimizations are essential for maintaining developer workflow efficiency, as even minor latency increases can significantly impact the coding experience.

What This Means

The evolution of AI coding tools represents a fundamental shift in software development methodology, but success requires moving beyond simple adoption metrics toward comprehensive productivity analysis. While these tools demonstrate impressive capabilities in code generation and design automation, their true value lies in augmenting human creativity rather than replacing developer judgment.

Organizations implementing AI coding assistants should focus on measuring long-term code quality, maintainability, and developer satisfaction rather than token consumption or initial acceptance rates. The most effective implementations will likely combine AI-generated code with robust code review processes and architectural oversight.

As these tools continue advancing toward more sophisticated reasoning capabilities, the developer role will evolve toward higher-level system design and AI orchestration, requiring new skills in prompt engineering and AI tool management.

FAQ

How accurate are AI coding tools like Copilot and Cursor?

The reported figures measure acceptance rather than accuracy: initial acceptance rates reach 80-90%, but long-term code retention drops to 10-30% after accounting for necessary revisions and debugging cycles.

What makes modern AI coding assistants different from simple autocomplete?

They use transformer architectures with context-aware reasoning, understanding entire codebases and employing retrieval-augmented generation for more sophisticated code creation.

Should organizations measure developer productivity by AI token usage?

No. Token consumption is an input metric that doesn’t reflect code quality or real productivity. Focus on long-term code maintainability and developer satisfaction instead.