The rapid proliferation of open-source artificial intelligence models, such as Meta’s Llama family and the models published by Mistral AI, has shifted the landscape of AI accessibility, creating new opportunities for innovation while raising critical ethical questions about democratization, accountability, and societal impact. As these models become increasingly available for local deployment and fine-tuning through platforms like Hugging Face, organizations and individuals worldwide are grappling with the implications of largely unrestricted AI access.

This transformation is more than a technical advancement. It signals a shift toward decentralized AI development that challenges traditional governance models and forces society to confront fundamental questions about who should control artificial intelligence and how to ensure its responsible use.

The Democratization Dilemma: Access Versus Control

The open-source AI movement embodies a tension between democratization and control. When Meta released Llama and other organizations such as Mistral AI followed suit with their own models, they effectively transferred powerful AI capabilities from centralized corporate entities to a distributed global community. This shift has enabled researchers, developers, and organizations worldwide to access cutting-edge AI technology without the financial barriers or corporate gatekeeping that previously limited such access.

However, this democratization comes with significant ethical trade-offs. Unlike proprietary models that can be updated, monitored, or restricted by their creators, open-source models exist independently once released. Creators can publish revised versions, but they cannot recall or patch the copies already downloaded. This permanence means that even if creators later discover harmful capabilities or unintended consequences, they cannot retroactively control how the models are used or modified.

The implications extend beyond technical considerations to fundamental questions of digital equity and power distribution. While open-source models can level the playing field for smaller organizations and developing nations, they also risk amplifying existing inequalities if access to computational resources, technical expertise, or fine-tuning capabilities remains concentrated among privileged groups.

Local Inference and the Governance Gap

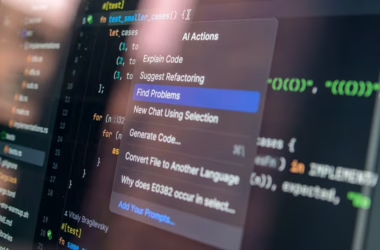

The emergence of local AI inference capabilities has created what security experts are calling “Shadow AI 2.0,” fundamentally challenging traditional approaches to AI governance and oversight. According to VentureBeat, employees are increasingly running capable models locally on laptops, offline, with no API calls and no obvious network signature, making it nearly impossible for organizations to monitor or control AI usage.

This shift represents a critical blind spot for both corporate governance and broader societal oversight mechanisms. Traditional data loss prevention systems and monitoring tools become ineffective when AI processing occurs entirely within local devices. The result is a growing disconnect between policy frameworks designed around centralized AI services and the reality of distributed, unmonitorable AI deployment.
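Because local inference produces no API traffic, any remaining visibility has to come from the endpoint itself. The following is a minimal sketch of one possible signal, assuming that locally run models are stored as large weight files in common formats such as `.gguf` or `.safetensors`. The function name `find_model_files`, the extension list, and the size threshold are illustrative assumptions, not part of any monitoring product or of the VentureBeat report.

```python
from pathlib import Path

# Assumption: weight files for locally run models commonly use these extensions.
MODEL_EXTENSIONS = {".gguf", ".safetensors"}


def find_model_files(root, min_bytes=100 * 1024 * 1024):
    """Return (path, size) pairs for likely model weight files under root.

    min_bytes is a hypothetical threshold used to skip small files that
    happen to share an extension with model weights.
    """
    hits = []
    for path in Path(root).rglob("*"):
        try:
            if path.is_file() and path.suffix.lower() in MODEL_EXTENSIONS:
                size = path.stat().st_size
                if size >= min_bytes:
                    hits.append((str(path), size))
        except OSError:
            # Skip files that cannot be read or that vanish mid-scan.
            continue
    return sorted(hits)
```

A scan like this only shows that weights are present on a device. It says nothing about how, or whether, they are used, so it narrows the blind spot rather than closing it.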

The governance gap extends beyond corporate environments to broader questions of regulatory oversight. How can societies ensure responsible AI use when the technology operates invisibly within personal devices? What accountability mechanisms can function in an environment where AI processing leaves little or no network footprint? These questions highlight the need for new approaches to AI governance that account for the decentralized nature of open-source deployment.

Bias, Fairness, and Representation Challenges

Open-source AI models inherit and potentially amplify the biases present in their training data, but the distributed nature of their deployment creates unique challenges for addressing these issues. Unlike centralized AI services where bias mitigation can be implemented at the platform level, open-source models place the responsibility for bias detection and correction on individual users and organizations.

This distributed responsibility model raises critical questions about capacity and incentives. Do all users have the technical expertise to identify and address bias in their AI implementations? Are there sufficient incentives for organizations to invest in bias mitigation when using freely available models? Without such expertise and incentives, many deployments are likely to proceed without adequate consideration of fairness implications.

Furthermore, the fine-tuning capabilities that make open-source models so powerful also create new vectors for introducing bias. When organizations customize models for specific use cases, they may inadvertently encode discriminatory patterns or reinforce existing inequalities. The lack of standardized bias assessment tools and practices for fine-tuned models compounds these challenges.
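To make the idea of a bias check concrete, here is a minimal sketch of one widely used fairness measure, the demographic parity gap, applied to the outputs of a base model and a fine-tuned one. The function names, the binary predictions, and the group labels are all hypothetical and chosen for illustration. A real assessment would use more than one metric and far more data.

```python
from collections import defaultdict


def selection_rates(predictions, groups):
    """Return the share of positive predictions (value 1) for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += int(pred == 1)
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_gap(predictions, groups):
    """Return the largest difference in selection rate between any two groups."""
    rates = selection_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())


# Hypothetical binary decisions on the same eight inputs.
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
base_preds = [1, 0, 1, 0, 1, 0, 1, 0]
tuned_preds = [1, 1, 1, 0, 0, 0, 1, 0]

print("base gap: ", demographic_parity_gap(base_preds, groups))
print("tuned gap:", demographic_parity_gap(tuned_preds, groups))
```

In this toy data the base model selects both groups at the same rate, while the fine-tuned model favors group "a". Comparing the gap before and after customization is one simple way an organization could notice that fine-tuning has shifted outcomes between groups.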

Transparency Paradox in Open Development

Open-source AI models are often celebrated for their transparency: users can inspect model weights, architectures, and, where they are published, training methodologies. Yet this technical transparency doesn’t automatically translate into meaningful accountability or understanding of societal impact. The complexity of large language models means that even with full access to model parameters, predicting behavior or identifying potential harms remains extremely challenging.

This creates what is sometimes called the “transparency paradox”: maximum technical openness may not lead to maximum societal understanding or control. The ability to examine model weights doesn’t necessarily provide insight into how the model will behave in specific contexts or what unintended consequences might emerge from widespread deployment.

Moreover, the rapid pace of open-source AI development often outstrips the capacity for thorough safety and impact assessment. While proprietary AI companies face commercial incentives to conduct extensive testing before release, open-source projects may prioritize rapid iteration and community feedback over comprehensive pre-deployment evaluation.

Economic and Labor Market Implications

The widespread availability of open-source AI models is reshaping economic relationships and labor market dynamics in ways that demand careful ethical consideration. By removing cost barriers to AI access, these models enable smaller organizations and individual developers to compete with larger corporations, potentially democratizing innovation and economic opportunity.

However, this same accessibility may accelerate job displacement and economic disruption, particularly for workers who perform routine cognitive tasks. The distributed nature of open-source AI deployment makes it difficult to track or mitigate these impacts through traditional policy mechanisms. Unlike centralized AI services that can be regulated or taxed to fund transition programs, open-source models operate largely beyond the reach of such interventions.

The economic implications extend to global power dynamics, as open-source models can reduce dependence on AI services from dominant technology companies. This shift may benefit smaller nations and organizations but also raises questions about the sustainability of AI research and development if traditional revenue models are disrupted.

Regulatory and Policy Considerations

Current regulatory frameworks struggle to address the unique challenges posed by open-source AI models. Traditional approaches to technology regulation often assume centralized control points where compliance can be monitored and enforced. Open-source models challenge this assumption by distributing control across countless individual deployments.

Policymakers face difficult questions about how to balance innovation benefits with risk mitigation. Should there be restrictions on releasing certain types of AI models? How can societies ensure accountability when AI capabilities are distributed beyond traditional regulatory reach? What new governance mechanisms are needed for the age of decentralized AI?

The international dimension adds further complexity, as open-source models can cross borders instantly and operate beyond any single jurisdiction’s control. This reality necessitates new forms of international cooperation and coordination that may be difficult to achieve given varying national interests and regulatory philosophies.

What This Means

The rise of open-source AI models represents a fundamental shift in how society relates to artificial intelligence, moving from a model of centralized control to distributed responsibility. This transition offers significant benefits in terms of innovation, accessibility, and democratic participation in AI development, but it also creates new ethical challenges that existing governance frameworks are ill-equipped to address.

The path forward requires developing new approaches to AI ethics that account for the realities of decentralized deployment while preserving the benefits of open development. This may involve creating new forms of community-driven governance, developing better tools for bias detection and mitigation in distributed environments, and fostering international cooperation on AI safety standards.

Ultimately, the success of open-source AI will depend not just on technical capabilities but on our collective ability to develop ethical frameworks and governance mechanisms that can operate effectively in a decentralized world.

FAQ

What makes open-source AI models different from proprietary ones in terms of ethical considerations?

Once released, copies of an open-source AI model cannot be recalled or remotely updated by its creators. Creators can publish improved versions, but they cannot retroactively address discovered harms or apply safety improvements across all existing deployments. This creates unique challenges for accountability and governance.

How do local AI deployments affect traditional approaches to AI oversight?

Local AI inference operates without obvious network signatures or centralized monitoring points, making it largely invisible to traditional data loss prevention systems and regulatory oversight mechanisms designed for cloud-based AI services.

What are the main bias and fairness challenges with open-source AI models?

Open-source models place responsibility for bias detection and mitigation on individual users and organizations, many of whom may lack the expertise or incentives to adequately address these issues, potentially leading to widespread deployment of biased AI systems.
