Open-source AI models have reshaped the enterprise AI landscape, with Meta’s Llama series and Mistral’s models leading a shift toward local deployment and customizable inference. According to Hugging Face’s documentation, organizations are increasingly fine-tuning these models for specific use cases, while VentureBeat reports that local inference is creating new security challenges for enterprise IT teams.

The proliferation of open-source alternatives to proprietary models like GPT-4 and Claude has democratized access to large language model capabilities, enabling organizations to deploy AI without the ongoing API costs and data privacy concerns associated with cloud-based solutions.

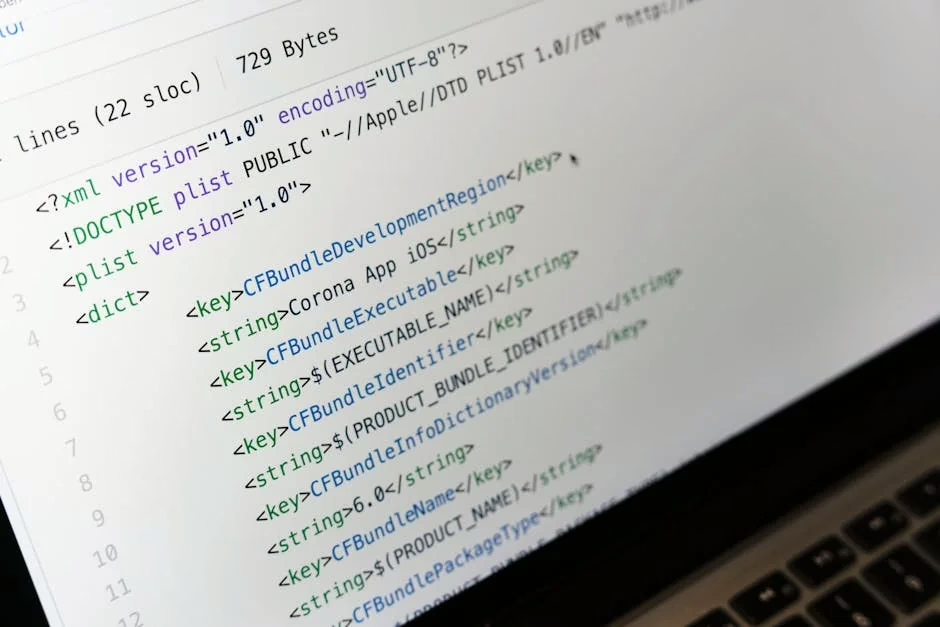

Technical Architecture Driving Local Deployment

The technical feasibility of local AI deployment has reached a critical inflection point. Consumer-grade hardware acceleration now supports quantized 70B-parameter models on high-end laptops, particularly MacBook Pros with 64GB unified memory. This represents a dramatic shift from the multi-GPU server requirements of just two years ago.
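
The 64GB figure follows from simple arithmetic on weight storage. The sketch below is a back-of-the-envelope estimate only: the function name is illustrative, and it counts model weights alone, ignoring the KV cache, activations, and runtime overhead that a real deployment also needs.

```python
def estimate_weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights alone, in gigabytes (10^9 bytes)."""
    total_bytes = num_params * bits_per_weight / 8
    return total_bytes / 1e9


if __name__ == "__main__":
    # Compare a 70B-parameter model at common precisions.
    for bits in (16, 8, 4):
        gb = estimate_weight_memory_gb(70e9, bits)
        print(f"70B parameters at {bits}-bit: ~{gb:.0f} GB for weights")
```

Under these assumptions, 16-bit weights need roughly 140 GB, while 4-bit quantization brings the same model down to roughly 35 GB, which is why it can fit in 64GB of unified memory with room left for the cache.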

Quantization techniques have become mainstream, compressing models by storing weights at lower numeric precision. Done well, this preserves most of a model’s accuracy while cutting memory and compute requirements. These optimizations allow organizations to run sophisticated language models entirely offline, eliminating the network signatures that traditional security monitoring relies upon.
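
To make the idea concrete, here is a minimal sketch of symmetric absmax quantization to 8-bit integers. It is one simple scheme chosen for illustration, not the method any particular runtime uses; production formats typically quantize in small blocks with per-block scales, and the function names here are hypothetical.

```python
def quantize_absmax_int8(weights):
    """Map floats to integers in [-127, 127] using a single shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Clamp defensively in case of floating-point rounding at the extremes.
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the integers and the scale."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    original = [0.5, -1.27, 0.03, 1.0]
    q, scale = quantize_absmax_int8(original)
    restored = dequantize(q, scale)
    worst = max(abs(a - b) for a, b in zip(original, restored))
    print("int8 values:", q)
    print("worst-case round-trip error:", worst)
```

The round-trip error per weight is bounded by half the scale, which is the sense in which quantization trades a small, controlled loss of precision for a smaller footprint.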

The emergence of what industry analysts term “bring your own model” (BYOM) deployments creates new operational paradigms. Unlike cloud-based API calls that generate observable network traffic, local inference operates entirely within the endpoint device, challenging existing data loss prevention (DLP) frameworks.

Meta’s Llama Series: Open Source Leadership

Meta’s Llama architecture has established itself as the de facto standard for open-source large language models. The transformer-based architecture incorporates several key innovations:

- RMSNorm normalization replacing traditional LayerNorm for improved training stability

- SwiGLU activation functions enhancing model expressiveness

- Rotary positional embeddings (RoPE) enabling better handling of sequence positions
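
The three components listed above can be sketched in a few lines of plain Python. This is an illustrative, single-vector version under stated assumptions: real implementations operate on batched tensors, the SwiGLU function here covers only the gating step (the surrounding linear projections are omitted), and the RoPE pairing of adjacent dimensions is one convention among several used in practice.

```python
import math


def rms_norm(x, gain, eps=1e-6):
    """Scale x by the reciprocal of its root mean square, then apply a learned gain."""
    rms = math.sqrt(sum(v * v for v in x) / len(x) + eps)
    return [g * v / rms for v, g in zip(x, gain)]


def silu(v):
    """SiLU (swish) activation: v * sigmoid(v)."""
    return v / (1.0 + math.exp(-v))


def swiglu_gate(gate, up):
    """Gating step of SwiGLU: SiLU of one projection times another projection."""
    return [silu(g) * u for g, u in zip(gate, up)]


def rope(x, position, base=10000.0):
    """Rotate each adjacent pair of dimensions by a position-dependent angle."""
    d = len(x)  # assumed even
    out = []
    for i in range(0, d, 2):
        theta = position * base ** (-i / d)
        c, s = math.cos(theta), math.sin(theta)
        out += [x[i] * c - x[i + 1] * s, x[i] * s + x[i + 1] * c]
    return out


if __name__ == "__main__":
    vec = [1.0, 2.0, 3.0, 4.0]
    print(rms_norm(vec, gain=[1.0] * 4))
    print(swiglu_gate(vec, vec))
    print(rope(vec, position=3))
```

Because RoPE applies pure rotations, it changes a vector's direction as a function of position without changing its length.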

The Llama 2 family spans 7B to 70B parameters, and later releases extend the range in both directions. Each size suits a different deployment scenario: the smaller models target edge deployment and mobile applications, while the 70B variants are positioned as near-GPT-4 alternatives for enterprise workloads.

Fine-tuning methodologies for Llama models have been extensively documented through Hugging Face’s comprehensive guides, enabling organizations to adapt pre-trained weights for domain-specific applications without requiring massive computational resources.

Mistral’s Technical Innovation

Mistral AI has introduced several architectural innovations that distinguish their models from traditional transformer implementations. Their sliding window attention mechanism reduces computational complexity while maintaining long-range dependencies, enabling more efficient inference on resource-constrained hardware.
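
A sliding window is easiest to see as an attention mask. The following is a minimal sketch, assuming a causal model in which each token attends to itself and at most `window - 1` preceding tokens; the function name and the boolean-matrix representation are illustrative choices, not Mistral's implementation.

```python
def sliding_window_mask(seq_len, window):
    """mask[i][j] is True when query position i may attend to key position j."""
    return [
        [0 <= i - j < window for j in range(seq_len)]
        for i in range(seq_len)
    ]


def count_allowed(mask):
    """Number of query/key pairs that the mask permits."""
    return sum(sum(row) for row in mask)


if __name__ == "__main__":
    mask = sliding_window_mask(seq_len=6, window=3)
    for row in mask:
        print("".join("x" if allowed else "." for allowed in row))
    print("allowed pairs:", count_allowed(mask))
```

With a fixed window, the number of permitted pairs grows linearly with sequence length rather than quadratically. Information from older tokens can still propagate indirectly, because each layer lets a token see a window of positions that themselves saw earlier windows.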

The mixture of experts (MoE) architecture in Mistral’s Mixtral models uses a router that sends each token to a small subset of expert feed-forward networks, so only a fraction of the total parameters is active for any given token. This approach allows models to maintain high capability while reducing per-token computational costs.
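
The routing step can be sketched as follows. This is a toy version under stated assumptions: experts are plain Python callables, the router logits are given rather than computed by a learned layer, and the top-2 selection with a softmax over the selected logits follows the scheme Mistral has described for Mixtral 8x7B.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_logits, k=2):
    """Pick the k highest-scoring experts and normalize their weights."""
    order = sorted(range(len(router_logits)),
                   key=lambda i: router_logits[i], reverse=True)
    top = order[:k]
    weights = softmax([router_logits[i] for i in top])
    return list(zip(top, weights))


def moe_forward(x, router_logits, experts, k=2):
    """Weighted sum of the outputs of only the selected experts."""
    out = [0.0] * len(x)
    for idx, weight in route_top_k(router_logits, k):
        y = experts[idx](x)  # experts not selected are never evaluated
        out = [o + weight * v for o, v in zip(out, y)]
    return out


if __name__ == "__main__":
    # Four toy experts that each scale the input by a different factor.
    experts = [lambda x, s=s: [s * v for v in x] for s in (1.0, 2.0, 3.0, 4.0)]
    print(moe_forward([1.0, 1.0], [0.1, 2.0, -1.0, 2.0], experts))
```

The efficiency gain comes from the loop body: with k=2 and eight experts, only a quarter of the expert parameters participate in each token's computation.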

Mistral releases several of its open-weight models, including Mistral 7B and Mixtral 8x7B, under the permissive Apache 2.0 license, which allows commercial use without the additional restrictions in Meta’s custom Llama license. This licensing approach has accelerated enterprise adoption, particularly in sectors with strict intellectual property requirements.

Security Implications of Distributed AI

The shift toward local AI inference creates unprecedented security challenges for enterprise environments. Traditional cloud access security broker (CASB) policies become ineffective when model inference occurs entirely within endpoint devices.

Shadow AI 2.0 represents the evolution of unauthorized AI usage beyond simple web interface access. Employees can now run sophisticated language models without generating detectable network signatures, creating blind spots in traditional security monitoring.
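
Since the network offers no signal, one endpoint-side approach is to inventory model weight files on disk. The sketch below is an assumption-laden illustration, not a detection product: it looks only for two common weight formats (`.gguf` and `.safetensors`), the size threshold is arbitrary, and renamed or encrypted files would evade it.

```python
from pathlib import Path

# File extensions commonly used for locally stored model weights (not exhaustive).
MODEL_EXTENSIONS = {".gguf", ".safetensors"}


def find_model_files(root, min_bytes=0):
    """Return sorted (path, size) pairs for likely model weight files under root."""
    hits = []
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix.lower() in MODEL_EXTENSIONS:
            size = path.stat().st_size
            if size >= min_bytes:
                hits.append((str(path), size))
    return sorted(hits)


if __name__ == "__main__":
    # Report weight files larger than roughly 1 GB under the home directory.
    for file_path, size in find_model_files(Path.home(), min_bytes=10**9):
        print(f"{size / 1e9:.1f} GB  {file_path}")
```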

Key security considerations include:

- Data exfiltration through model outputs without network-based detection

- Unvetted model weights that may carry malicious code (for example, in pickle-based checkpoint formats that execute code on load) or reflect biased training data

- Compliance violations when regulated data is processed through unmanaged AI systems

Organizations must develop new governance frameworks that account for endpoint-based AI processing rather than relying solely on network perimeter security.
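
One building block for such a framework is verifying model weights against a known-good checksum before they are loaded. The following is a minimal sketch using Python's standard library; it assumes the organization maintains its own list of approved SHA-256 digests, and the function names are illustrative.

```python
import hashlib
import hmac


def sha256_of_file(path, chunk_size=1 << 20):
    """Hash a file in chunks so multi-gigabyte weights never sit fully in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_weights(path, expected_sha256):
    """Return True only when the file's digest matches the approved digest."""
    actual = sha256_of_file(path)
    return hmac.compare_digest(actual, expected_sha256.strip().lower())
```

A checksum match shows that the file is the one that was vetted. It says nothing about whether the vetted model itself is safe, so it complements rather than replaces a review of the weights' source and format.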

Performance Optimization and Fine-Tuning

Fine-tuning open-source models requires understanding several technical parameters that significantly affect performance. Learning rate scheduling must be carefully calibrated to prevent catastrophic forgetting, in which the model loses general capabilities learned during pre-training, while still enabling task-specific adaptation.
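
A common way to schedule the learning rate is a linear warmup followed by cosine decay. The sketch below shows that shape in plain Python; the specific values (peak rate, warmup length) are placeholder assumptions rather than recommendations, and the right settings depend on the model and dataset.

```python
import math


def lr_at_step(step, total_steps, peak_lr=2e-5, warmup_steps=100, min_lr=0.0):
    """Linear warmup to peak_lr, then cosine decay down to min_lr."""
    if step < warmup_steps:
        return peak_lr * (step + 1) / warmup_steps
    decay_steps = max(1, total_steps - warmup_steps)
    progress = min(1.0, (step - warmup_steps) / decay_steps)
    return min_lr + 0.5 * (peak_lr - min_lr) * (1 + math.cos(math.pi * progress))


if __name__ == "__main__":
    for step in (0, 50, 99, 100, 550, 1000):
        print(step, lr_at_step(step, total_steps=1000))
```

The warmup avoids large, destabilizing updates while optimizer statistics are still noisy, and the small, decaying rate afterwards limits how far the weights drift from their pre-trained values.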

Parameter-efficient fine-tuning (PEFT) techniques like LoRA (Low-Rank Adaptation) enable organizations to customize models by training small low-rank adapter matrices while the original weights stay frozen. This approach reduces computational requirements while maintaining model performance across diverse tasks.
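
The core of LoRA fits in a few lines: the frozen weight matrix W is augmented with a scaled product of two small matrices, B and A. The sketch below uses plain Python lists for clarity; the helper names are hypothetical, and real training would use a tensor library such as the Hugging Face PEFT package.

```python
def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(a * b for a, b in zip(row, vector)) for row in matrix]


def lora_forward(W, A, B, x, alpha, r):
    """Compute W x + (alpha / r) * B (A x), with W frozen and A, B trainable.

    W is d_out x d_in, A is r x d_in, B is d_out x r.
    """
    base = matvec(W, x)
    update = matvec(B, matvec(A, x))
    scale = alpha / r
    return [b + scale * u for b, u in zip(base, update)]


def lora_trainable_fraction(d_out, d_in, r):
    """Trainable LoRA parameters as a fraction of the full matrix."""
    return r * (d_in + d_out) / (d_out * d_in)


if __name__ == "__main__":
    fraction = lora_trainable_fraction(4096, 4096, r=8)
    print(f"rank-8 adapter on a 4096x4096 layer: {fraction:.2%} of the parameters")
```

For a 4096 x 4096 layer at rank 8, the adapter holds 65,536 trainable values against roughly 16.8 million in the full matrix. Initializing B to zeros, as the LoRA authors do, means the adapted model starts out identical to the base model.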

The choice of optimization algorithms significantly impacts training efficiency. AdamW remains the standard for most applications, while newer optimizers like Lion show promise for specific architectural configurations.
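
For reference, a single AdamW update on one scalar parameter looks like this. It is a teaching sketch following the standard published update rule with decoupled weight decay; the default hyperparameters are placeholders, and in practice one would use a framework's built-in optimizer.

```python
import math


def adamw_step(param, grad, m, v, t, lr=1e-3, beta1=0.9, beta2=0.999,
               eps=1e-8, weight_decay=0.01):
    """One AdamW update. t is the 1-based step count; returns (param, m, v)."""
    # Exponential moving averages of the gradient and squared gradient.
    m = beta1 * m + (1 - beta1) * grad
    v = beta2 * v + (1 - beta2) * grad * grad
    # Bias correction compensates for the zero initialization of m and v.
    m_hat = m / (1 - beta1 ** t)
    v_hat = v / (1 - beta2 ** t)
    # Decoupled weight decay: applied to the parameter, not mixed into the gradient.
    param = param - lr * weight_decay * param
    param = param - lr * m_hat / (math.sqrt(v_hat) + eps)
    return param, m, v


if __name__ == "__main__":
    p, m, v = 1.0, 0.0, 0.0
    for step in range(1, 4):
        p, m, v = adamw_step(p, grad=0.5, m=m, v=v, t=step)
        print(step, p)
```

Keeping the decay term separate from the adaptive gradient step is what distinguishes AdamW from Adam with L2 regularization.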

Evaluation methodologies must account for domain-specific performance metrics beyond traditional benchmarks. Organizations should develop custom evaluation suites that reflect their specific use cases and performance requirements.
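
A custom evaluation suite can start very small. The sketch below scores a model on exact-match accuracy over a list of prompt/answer pairs; `generate` is a hypothetical stand-in for whatever function calls the deployed model, and exact match is only one of many possible metrics.

```python
def evaluate_exact_match(generate, cases):
    """Fraction of cases where the model's answer equals the expected answer.

    generate: callable taking a prompt string and returning a response string.
    cases: iterable of (prompt, expected_answer) pairs.
    """
    results = []
    for prompt, expected in cases:
        answer = generate(prompt).strip().lower()
        results.append(answer == expected.strip().lower())
    return sum(results) / len(results) if results else 0.0


if __name__ == "__main__":
    # A stub model for demonstration; replace with a real inference call.
    def stub_model(prompt):
        return {"Capital of France?": "Paris"}.get(prompt, "unknown")

    domain_cases = [
        ("Capital of France?", "paris"),
        ("Capital of Peru?", "Lima"),
    ]
    print("exact-match accuracy:", evaluate_exact_match(stub_model, domain_cases))
```

Swapping in domain-specific cases and metrics, such as checks on format, citations, or refusal behavior, turns this skeleton into a suite that reflects actual use rather than generic benchmark performance.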

What This Means

The maturation of open-source AI models represents a fundamental shift in enterprise AI strategy. Organizations can now deploy sophisticated language model capabilities without the ongoing costs and privacy concerns associated with cloud-based solutions. However, this transition requires new technical expertise in model deployment, security frameworks, and performance optimization.

The democratization of AI capabilities through open-source models accelerates innovation while creating new operational challenges. Enterprise IT teams must develop competencies in local model deployment, quantization techniques, and endpoint security monitoring to use these technologies effectively.

Furthermore, the technical accessibility of advanced AI capabilities enables smaller organizations to compete with larger enterprises that previously held advantages through superior cloud AI budgets. This leveling effect will likely accelerate AI adoption across diverse industry sectors.

FAQ

What hardware requirements are needed for local LLM deployment?

Modern laptops with 32-64GB RAM can run quantized 7B-13B parameter models effectively, while 70B models require 64GB+ unified memory or distributed GPU setups for optimal performance.

How do open-source models compare to proprietary alternatives in performance?

The latest Llama and Mistral models reportedly reach 85-95% of GPT-4 performance on most benchmarks, while offering full control over customization and eliminating ongoing API costs.

What are the key security considerations for enterprise open-source AI deployment?

Primary concerns include undetectable data processing, model weight integrity verification, compliance with data governance policies, and endpoint monitoring for unauthorized AI usage.


Readers new to the underlying architecture can start with how large language models actually work.