Open-source AI models have fundamentally transformed how enterprises approach artificial intelligence deployment in 2026, with platforms like Meta’s Llama and Mistral leading a shift toward customizable, cost-effective solutions. According to recent Hugging Face research, organizations are increasingly fine-tuning smaller models rather than relying solely on massive proprietary systems, while Stanford and University of Wisconsin studies demonstrate that train-to-test scaling can deliver superior performance at dramatically lower costs.

This transformation comes as enterprise security challenges mount, with survey data cited by VentureBeat showing that 88% of organizations experienced AI agent security incidents in the past year, driving demand for transparent, auditable open-source alternatives.

The Rise of Practical Fine-Tuning

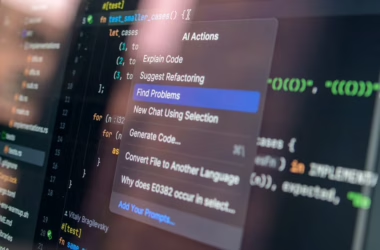

The democratization of AI model customization has reached a tipping point. What once required massive computational resources and specialized expertise is now accessible through platforms like Hugging Face, which has streamlined the fine-tuning process for large language models using PyTorch.

Key advantages of fine-tuning include:

- Cost efficiency: Training smaller, specialized models costs significantly less than licensing large proprietary systems

- Performance optimization: Models tailored to specific tasks often outperform general-purpose alternatives

- Data privacy: Organizations maintain complete control over their training data and model weights

- Customization flexibility: Teams can adjust model behavior for specific industry requirements or use cases

For everyday users, this means AI tools that understand industry-specific terminology, comply with organizational policies, and integrate seamlessly with existing workflows. A customer service team, for example, can fine-tune a model to understand their product catalog and company policies without exposing sensitive data to external providers.
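
As a rough illustration of the customer service example, the sketch below turns support tickets into prompt/response records that could be written to a local JSONL file, a format many fine-tuning pipelines accept. The field names (`question`, `answer`, `prompt`, `response`), the sample tickets, and the `redact_emails` helper are assumptions made for illustration, not part of any specific platform's API.

```python
import json
import re
from pathlib import Path

# Hypothetical ticket records; a real team would export these from its own help desk.
TICKETS = [
    {"question": "How do I reset my router? Contact me at jo@example.com",
     "answer": "Hold the reset button for 10 seconds, then wait for the green light."},
    {"question": "   ", "answer": "No question recorded."},
]

EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def redact_emails(text: str) -> str:
    """Replace anything that looks like an email address with a placeholder."""
    return EMAIL_PATTERN.sub("[EMAIL]", text)


def build_records(tickets):
    """Convert raw tickets into prompt/response pairs, skipping empty entries."""
    records = []
    for ticket in tickets:
        question = ticket["question"].strip()
        answer = ticket["answer"].strip()
        if not question or not answer:
            continue
        records.append({"prompt": redact_emails(question),
                        "response": redact_emails(answer)})
    return records


def write_jsonl(records, path):
    """Write one JSON object per line to local storage."""
    with Path(path).open("w", encoding="utf-8") as handle:
        for record in records:
            handle.write(json.dumps(record, ensure_ascii=False) + "\n")
```

Keeping the redaction and file writing in-house is what lets the data stay under the organization's control; the email pattern here is deliberately simple and would not catch every kind of sensitive field.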

Train-to-Test Scaling Revolutionizes Budget Allocation

Researchers have challenged conventional wisdom about AI model development with train-to-test scaling laws that account for both training and inference costs. Their results suggest that smaller models trained on larger datasets and deployed with multiple inference samples often outperform much larger models on complex reasoning tasks.
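
To make the trade-off concrete, the sketch below compares total compute for a large model that answers once against a smaller model that answers several times. It relies on two widely used rule-of-thumb approximations: about 6 FLOPs per parameter per training token, and about 2 FLOPs per parameter per generated token. The model sizes, token counts, and query volume are illustrative assumptions, not figures from the research described above.

```python
def training_flops(params: float, train_tokens: float) -> float:
    """Approximate training compute: ~6 FLOPs per parameter per token."""
    return 6.0 * params * train_tokens


def inference_flops(params: float, tokens_per_answer: float,
                    samples: int, queries: float) -> float:
    """Approximate inference compute: ~2 FLOPs per parameter per generated token."""
    return 2.0 * params * tokens_per_answer * samples * queries


def total_flops(params, train_tokens, tokens_per_answer, samples, queries):
    """Training plus lifetime inference compute for one deployment plan."""
    return (training_flops(params, train_tokens)
            + inference_flops(params, tokens_per_answer, samples, queries))


# Illustrative assumptions only.
QUERIES = 1e9            # lifetime queries served
TOKENS_PER_ANSWER = 500  # generated tokens per sample

large = total_flops(params=70e9, train_tokens=1.4e12,
                    tokens_per_answer=TOKENS_PER_ANSWER, samples=1, queries=QUERIES)
small = total_flops(params=7e9, train_tokens=4e12,
                    tokens_per_answer=TOKENS_PER_ANSWER, samples=4, queries=QUERIES)

print(f"large model, 1 sample : {large:.2e} FLOPs")
print(f"small model, 4 samples: {small:.2e} FLOPs")
print(f"ratio (large / small) : {large / small:.1f}x")
```

Under these assumed numbers the smaller model uses roughly 30% of the larger model's total compute even though it is trained on more tokens and sampled four times per query. The estimate ignores attention and context-length costs, and compute alone says nothing about accuracy, which has to be measured on the target task.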

The implications for enterprise budgets are substantial. Instead of spending millions on the largest available models, organizations can:

- Train compact models on comprehensive, domain-specific datasets

- Allocate saved resources to generate multiple reasoning samples during deployment

- Achieve better performance on complex tasks while maintaining manageable per-query costs

- Scale inference dynamically based on query complexity and accuracy requirements

This strategy particularly benefits organizations with specific use cases where general-purpose models struggle. A legal firm, for instance, might train a smaller model extensively on legal documents and case law, then use multiple inference samples to ensure accurate contract analysis.
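
One simple way to use multiple inference samples is majority voting, sometimes called self-consistency: sample several answers and keep the most common one. The sketch below assumes a caller-supplied `generate` function that returns one answer per call; it stands in for whatever model client a team uses, and the research described above may aggregate samples differently. The `samples_for` policy is likewise a hypothetical example of scaling inference with query complexity.

```python
from collections import Counter
from typing import Callable, Tuple


def majority_vote(generate: Callable[[str], str], prompt: str,
                  samples: int = 5) -> Tuple[str, float]:
    """Sample `samples` answers and return the most common one with its vote share."""
    if samples < 1:
        raise ValueError("samples must be at least 1")
    # Normalize whitespace and case so trivially different answers count together.
    answers = [generate(prompt).strip().lower() for _ in range(samples)]
    winner, votes = Counter(answers).most_common(1)[0]
    return winner, votes / samples


def samples_for(complexity: str) -> int:
    """Hypothetical policy: spend more samples on harder queries."""
    return {"low": 1, "medium": 3, "high": 7}.get(complexity, 3)


if __name__ == "__main__":
    # Canned answers stand in for a real model so the example runs offline.
    canned = iter(["Clause 4 applies", "clause 4 applies", "Clause 7 applies"])
    answer, share = majority_vote(lambda _prompt: next(canned),
                                  "Which clause governs termination?", samples=3)
    print(answer, round(share, 2))
```

A low vote share is a useful signal in its own right: a deployment might route such queries to a human reviewer instead of returning the winning answer.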

Security Challenges Drive Open Source Adoption

Enterprise security incidents involving AI agents have accelerated the shift toward open-source models. Gravitee’s State of AI Agent Security 2026 survey found that while 82% of executives believe their policies protect against unauthorized agent actions, 88% of organizations experienced security incidents in the past year.

Critical security gaps include:

- Limited runtime visibility: Only 21% of organizations can monitor agent behavior in real time

- Inadequate budget allocation: Just 6% of security budgets address AI agent risks

- Monitoring without enforcement: Organizations observe threats but lack isolation capabilities

- Supply chain vulnerabilities: Third-party model dependencies create new attack vectors

Open-source models can address these concerns by providing transparency into model architecture and weights and, where the publisher releases them, training data and methodology. Organizations can audit code, implement custom security measures, and maintain air-gapped deployments when necessary.
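
The gap between monitoring and enforcement can be illustrated with a small policy gate that sits in front of an agent's tool calls: every call is logged, and calls outside an allowlist are blocked rather than merely observed. The `ToolCall` structure, the `PolicyGate` class, and the tool names are hypothetical; a production system would also need authentication, sandboxing, and durable audit storage.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Set


@dataclass
class ToolCall:
    agent_id: str
    tool: str
    args: Dict[str, Any]


class PolicyViolation(Exception):
    """Raised when an agent requests a tool it is not allowed to use."""


@dataclass
class PolicyGate:
    allowed_tools: Dict[str, Set[str]]        # agent_id -> permitted tool names
    tools: Dict[str, Callable[..., Any]]      # tool name -> implementation
    audit_log: List[dict] = field(default_factory=list)

    def execute(self, call: ToolCall) -> Any:
        permitted = call.tool in self.allowed_tools.get(call.agent_id, set())
        # Monitoring: record every attempt, allowed or not.
        self.audit_log.append(
            {"agent": call.agent_id, "tool": call.tool, "allowed": permitted})
        # Enforcement: refuse before the tool runs.
        if not permitted:
            raise PolicyViolation(f"{call.agent_id} may not call {call.tool}")
        return self.tools[call.tool](**call.args)


if __name__ == "__main__":
    gate = PolicyGate(
        allowed_tools={"support-bot": {"lookup_order"}},
        tools={"lookup_order": lambda order_id: f"order {order_id}: shipped",
               "issue_refund": lambda order_id: f"order {order_id}: refunded"},
    )
    print(gate.execute(ToolCall("support-bot", "lookup_order", {"order_id": "A1"})))
    try:
        gate.execute(ToolCall("support-bot", "issue_refund", {"order_id": "A1"}))
    except PolicyViolation as err:
        print("blocked:", err)
```

Defaulting to an empty allowlist for unknown agents means a misconfigured agent is denied rather than silently permitted.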

Platform Integration and Accessibility

The user experience around open-source AI models has improved dramatically. Hugging Face has become the de facto platform for model discovery, with intuitive interfaces that allow non-technical users to:

- Browse model collections with clear performance metrics and use case descriptions

- Download pre-trained weights with simple command-line tools or web interfaces

- Access fine-tuning tutorials that guide users through customization processes

- Share and collaborate on model improvements within organizational teams
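
Downloaded weights are also a supply-chain input, so a team may want to confirm that a file matches a digest it has pinned before loading it. The sketch below uses only Python's standard `hashlib` and `hmac` modules; the file path and the expected digest are placeholders that a team would supply from its own records.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of(path: str, chunk_size: int = 1 << 20) -> str:
    """Stream the file in chunks so large weight files need not fit in memory."""
    digest = hashlib.sha256()
    with Path(path).open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_weights(path: str, expected_sha256: str) -> bool:
    """Return True only if the file's digest matches the pinned value."""
    return hmac.compare_digest(sha256_of(path), expected_sha256.strip().lower())
```

A deployment script might call `verify_weights` and refuse to load the model when it returns `False`. A matching digest shows only that the file is the one that was pinned, not that the model itself is safe.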

Meanwhile, enterprise platforms are adapting to this new reality. Salesforce’s recent Headless 360 initiative exemplifies how traditional software companies are exposing their entire platforms as APIs and tools that AI agents can operate programmatically, eliminating the need for traditional graphical interfaces.

Real-World Implementation Success Stories

Organizations across industries are finding practical applications for open-source AI models. Healthcare providers use fine-tuned models for medical record analysis while maintaining HIPAA compliance. Financial institutions deploy custom models for fraud detection that understand their specific transaction patterns and risk profiles.

Common implementation patterns include:

- Hybrid approaches: Combining multiple smaller models for different tasks rather than relying on single large models

- Incremental deployment: Starting with low-risk use cases and gradually expanding as confidence grows

- Community collaboration: Sharing non-sensitive model improvements with industry peers

- Continuous refinement: Regularly updating models with new data and feedback

The key to success lies in treating open-source AI models as building blocks rather than complete solutions. Organizations that invest in understanding their specific needs and customizing models accordingly see the greatest returns.
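
The hybrid pattern listed above can be sketched as a small router that sends each request to the model registered for its task type. The `ModelRouter` class and the task names are hypothetical, and each handler is a plain function standing in for a call to a separately fine-tuned model.

```python
from typing import Callable, Dict

Handler = Callable[[str], str]


class ModelRouter:
    """Dispatch each request to the handler registered for its task type."""

    def __init__(self, fallback: Handler):
        self._handlers: Dict[str, Handler] = {}
        self._fallback = fallback

    def register(self, task: str, handler: Handler) -> None:
        self._handlers[task] = handler

    def run(self, task: str, text: str) -> str:
        # Unknown task types go to the fallback instead of failing.
        return self._handlers.get(task, self._fallback)(text)


if __name__ == "__main__":
    router = ModelRouter(fallback=lambda text: f"[general] {text}")
    router.register("fraud", lambda text: f"[fraud-model] {text}")
    router.register("records", lambda text: f"[records-model] {text}")
    print(router.run("fraud", "wire transfer of $9,900"))
    print(router.run("translation", "bonjour"))
```

Registering handlers one at a time also suits incremental deployment: a team can start with a single low-risk task and add others as confidence grows.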

What This Means

The open-source AI model ecosystem represents a fundamental shift from vendor dependence to organizational autonomy. Enterprises gain unprecedented control over their AI capabilities while reducing costs and improving security posture. However, this transition requires new skills and processes.

Organizations must develop internal expertise in model evaluation, fine-tuning, and deployment. The democratization of AI doesn’t eliminate the need for technical knowledge; it redistributes that knowledge from external vendors to internal teams.

For consumers, this trend promises more personalized, privacy-respecting AI applications. As organizations gain confidence with open-source models, we’ll see more AI-powered services that understand specific contexts and maintain user data locally rather than sending it to external providers.

The winners in this new landscape will be organizations that view open-source AI models not as cost-cutting measures, but as strategic assets that enable unique competitive advantages through customization and control.

FAQ

What’s the difference between open-source and proprietary AI models?

Open-source models provide access to model weights and, in many cases, code and training methodologies, allowing organizations to modify, audit, and deploy them independently. Proprietary models typically require ongoing licensing or usage fees and offer limited customization options.

How much does it cost to fine-tune an open-source AI model?

Costs vary significantly based on model size and dataset complexity, but fine-tuning typically costs thousands rather than millions of dollars. Many organizations spend $10,000-$50,000 to customize models for specific use cases.

Are open-source AI models as capable as proprietary alternatives?

For specific use cases, fine-tuned open-source models often outperform general-purpose proprietary models. While they may require more initial setup, the ability to customize for exact requirements frequently delivers superior results.