Enterprise development teams are experiencing a fundamental shift in software delivery, with AI coding tools like Copilot and Cursor compressing project timelines from weeks to days. According to VentureBeat, an AWS engineering team completed an 18-month rearchitecture project, originally scoped for 30 developers, with just six people in 76 days, while the Kiro IDE team reduced feature builds from two weeks to two days using spec-driven development.

This transformation represents more than incremental productivity gains. It signals the emergence of autonomous coding agents that work from structured specifications rather than ad-hoc prompting. Underlying these advances is spec-driven development, a methodology that gives enterprises a basis for trusting AI-generated code at scale.

Spec-Driven Development Architecture for AI Agents

The core technical innovation enabling trustworthy AI coding tools lies in spec-driven development, which fundamentally differs from traditional documentation-after-the-fact approaches. This methodology requires AI agents to work from structured, context-rich specifications that define system requirements, properties, and correctness criteria before generating any code.

Unlike pre-agentic approaches, in which each prompt stands on its own, spec-driven development produces a persistent artifact that agents reason against throughout development. This addresses the central enterprise question of whether AI-generated code can be trusted: every change can be checked against criteria that were written down before the code existed.

The specification serves as both a constraint mechanism and a quality assurance framework. Enterprise teams implementing this approach report:

- Reduced debugging cycles through pre-defined correctness criteria

- Improved code consistency across large development teams

- Enhanced maintainability through structured documentation

- Measurable quality improvements in generated code

This technical foundation enables what VentureBeat describes as “trustworthy autonomous coding agents” that can operate at enterprise scale while maintaining code quality standards.
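A minimal Python sketch can make the idea concrete. It assumes a specification is a list of plain-language requirements plus executable correctness criteria, written before any implementation exists, and it checks candidate implementations against those criteria. The `Spec` class, its `verify` method, and the `slugify` example are hypothetical illustrations, not Kiro's spec format or any vendor's API.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Spec:
    """Hypothetical spec: plain-language requirements plus executable checks."""
    name: str
    requirements: list[str]
    # Each correctness criterion pairs a description with a predicate on the implementation.
    criteria: list[tuple[str, Callable[[Callable], bool]]] = field(default_factory=list)

    def verify(self, impl: Callable) -> list[str]:
        """Return the descriptions of every criterion the implementation fails."""
        failures = []
        for description, check in self.criteria:
            try:
                ok = check(impl)
            except Exception:
                ok = False  # a crash counts as a failed criterion
            if not ok:
                failures.append(description)
        return failures


# The spec is written first, before any implementation exists.
slug_spec = Spec(
    name="slugify",
    requirements=["Lowercase the input", "Replace runs of spaces with a single hyphen"],
    criteria=[
        ("lowercases input", lambda f: f("Hello") == "hello"),
        ("collapses spaces", lambda f: f("a  b") == "a-b"),
        ("is idempotent", lambda f: f(f("Some Title")) == f("Some Title")),
    ],
)


# Two candidate implementations, e.g. produced by an agent.
def slugify_v1(text: str) -> str:
    return text.lower().replace(" ", "-")  # bug: "a  b" becomes "a--b"


def slugify_v2(text: str) -> str:
    return "-".join(text.lower().split())


print(slug_spec.verify(slugify_v1))  # ['collapses spaces']
print(slug_spec.verify(slugify_v2))  # []
```

Because the spec stays fixed while implementations are disposable, an agent can regenerate code until `verify` returns an empty list, and a reviewer can audit the spec instead of every generated line.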

Local Inference Revolution in Developer Tools

A significant architectural shift is occurring in AI coding tools as inference capabilities move from cloud-based APIs to local execution environments. This transition, dubbed “Shadow AI 2.0” by security researchers, fundamentally changes how developers interact with AI coding assistants.

Three technical convergences enable practical local inference:

Hardware Acceleration Advances

Consumer-grade accelerators now support serious development workloads. A MacBook Pro with 64GB unified memory can run quantized 70B-class models at usable speeds, bringing capabilities that previously required multi-GPU server configurations to individual developer workstations.
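A back-of-the-envelope calculation shows why 64GB is roughly the threshold. The sketch below counts weight storage as parameters × bits ÷ 8 and adds a flat 20% for the KV cache and runtime buffers; that overhead figure is an illustrative assumption, and real usage varies with context length and the inference runtime.

```python
def model_memory_gb(params_billion: float, bits_per_weight: float, overhead: float = 0.20) -> float:
    """Approximate memory to run a model, in GB (10**9 bytes).

    `overhead` is an assumed fraction added for KV cache and runtime buffers.
    """
    weight_bytes = params_billion * 1e9 * bits_per_weight / 8
    return weight_bytes * (1 + overhead) / 1e9


for bits in (16, 8, 4):
    need = model_memory_gb(70, bits)
    print(f"70B @ {bits}-bit: ~{need:.0f} GB, fits in 64 GB: {need <= 64}")
```

At 16-bit the weights alone need about 140 GB; at 4-bit they need about 35 GB, which is what puts a 70B-class model within reach of a 64GB machine while leaving room for the context cache, the operating system, and other applications.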

Mainstream Quantization Techniques

Model compression techniques, quantization above all, have advanced to the point where large language models run efficiently on laptop hardware. Storing weights at 8 or 4 bits instead of 16 cuts memory and computational requirements several-fold while largely preserving output quality.
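The basic mechanism can be illustrated with the simplest scheme, symmetric per-tensor int8 quantization: each weight is divided by a shared scale, rounded to an integer in [-127, 127], and multiplied back by the scale when used. The pure-Python sketch below is illustrative only; the Gaussian toy weights are made up, and the 4-bit, per-group schemes that local runtimes typically rely on for 70B-class models are more elaborate.

```python
import random


def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor int8 quantization: w ~= q * scale, with q in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    """Map stored integers back to approximate float weights."""
    return [v * scale for v in q]


random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(10_000)]  # toy layer weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

max_err = max(abs(a - b) for a, b in zip(weights, restored))
# Storage if packed: 4 bytes per float32 weight vs. 1 byte per int8 value (plus one scale).
print(f"packed size: {len(weights) * 4} bytes (float32) -> {len(q)} bytes (int8)")
print(f"max abs error: {max_err:.6f} (bound: scale/2 = {scale / 2:.6f})")
```

Rounding keeps every restored weight within half a scale step of the original, so the error bound grows with the largest weight in the tensor; finer-grained scales (per channel or per group of weights) are used in practice to tighten it.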

Optimized Model Architectures

Purpose-built coding models with architectural optimizations for development tasks enable faster inference on local hardware compared to general-purpose large language models.

This shift creates new challenges for enterprise security teams. Traditional data loss prevention (DLP) systems typically watch for sensitive data leaving the organization, for example in requests to cloud AI APIs, but local inference generates no such traffic for them to inspect. When AI coding assistance happens entirely offline, security visibility decreases even as developer productivity increases.

Hyperagents: Self-Improving AI Development Systems

Meta researchers have introduced an approach called “hyperagents” that marks a significant advance in self-improving AI systems for coding and beyond. Unlike traditional self-improving systems that rely on a fixed, handcrafted meta-agent, hyperagents continuously rewrite and optimize both their problem-solving logic and the mechanism that improves it.

Key technical innovations include:

- Dynamic meta-agent architecture that evolves improvement mechanisms rather than using handcrafted approaches

- Cross-domain capability transfer enabling self-improvement in non-coding domains like robotics and document analysis

- Autonomous capability invention where agents independently develop features like persistent memory and performance tracking

- Compound learning cycles that accelerate progress by improving the self-improvement process itself

Jenny Zhang, co-author of the research, explains that “the core limitation of handcrafted meta-agents is that they can only improve as fast as humans can design and maintain them.” Hyperagents overcome this bottleneck by making the improvement mechanism itself learnable and adaptable.

This architecture enables AI coding tools to develop structured, reusable decision-making capabilities that compound over time, reducing the need for constant manual prompt engineering and domain-specific customization.
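The sketch below is not Meta's method. It uses a classical and much simpler technique, a (1+1) evolution strategy with Rechenberg's 1/5th success rule, as a loose analogy for a system that adjusts its own improvement mechanism: the object level mutates a candidate solution, while a meta level rewrites the step size that governs those mutations based on how often they succeed. Hyperagents go much further by editing their improvement logic as code; the objective function and all constants here are illustrative choices.

```python
import random


def sphere(x: list[float]) -> float:
    """Toy objective to minimize: sum of squares, optimum 0 at the origin."""
    return sum(v * v for v in x)


def one_plus_one_es(dim: int = 5, iterations: int = 2000, seed: int = 1) -> tuple[float, float]:
    rng = random.Random(seed)
    x = [rng.uniform(-5, 5) for _ in range(dim)]
    fx = sphere(x)
    sigma = 1.0   # step size: the "improvement mechanism" that is itself adapted
    successes = 0
    window = 20   # re-tune sigma every `window` mutations

    for t in range(1, iterations + 1):
        # Object level: propose a mutated candidate and keep it only if it is better.
        child = [v + rng.gauss(0.0, sigma) for v in x]
        fc = sphere(child)
        if fc < fx:
            x, fx = child, fc
            successes += 1

        # Meta level: the 1/5th success rule rewrites the step size itself.
        if t % window == 0:
            rate = successes / window
            sigma *= 1.5 if rate > 0.2 else 0.82
            successes = 0

    return fx, sigma


best, final_sigma = one_plus_one_es()
print(f"best objective: {best:.2e}, final step size: {final_sigma:.2e}")
```

The step size grows while mutations succeed often and shrinks when they rarely do, so it tracks the scale of the problem without hand-tuning; the hyperagent work generalizes that idea from one numeric parameter to the whole improvement procedure.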

IDE Integration and Enterprise Deployment Patterns

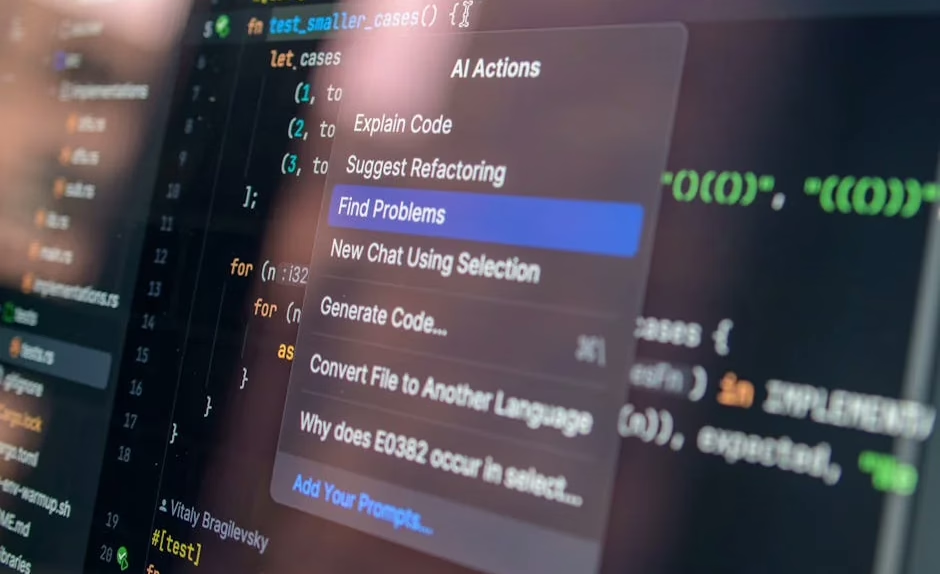

Modern AI coding tools are implementing IDE integration patterns that go well beyond simple code completion, combining several techniques to assist across the development process:

Context-Aware Code Generation: Advanced coding assistants analyze entire codebases, understanding project structure, coding patterns, and architectural decisions to generate contextually appropriate code.

Multi-Modal Development Support: Tools like Cursor integrate natural language processing with code analysis, enabling developers to describe functionality in plain English while the AI generates corresponding implementation code.

Real-Time Code Analysis: Continuous analysis of code quality, security vulnerabilities, and performance implications during the development process, with AI-powered suggestions for improvements.

Collaborative Agent Networks: Some platforms deploy multiple specialized AI agents that collaborate on different aspects of development, from initial design through testing and deployment.
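One simple way an assistant could choose which files to place in a model's context is to score each file by the identifier words it shares with the developer's request and keep the top matches. The sketch below is a hypothetical illustration using plain token overlap; it does not describe how Cursor or any specific tool selects context, and real tools may use richer signals such as embeddings or symbol graphs. The file paths and contents are invented.

```python
import re
from collections import Counter


def tokens(text: str) -> Counter:
    """Split identifiers like refresh_session or parseConfig into lowercase words."""
    text = re.sub(r"([a-z0-9])([A-Z])", r"\1 \2", text)  # break camelCase boundaries
    return Counter(w.lower() for w in re.split(r"[^A-Za-z0-9]+", text) if len(w) > 2)


def rank_files(request: str, files: dict[str, str], k: int = 2) -> list[str]:
    """Return up to k file paths whose contents share the most words with the request."""
    q = tokens(request)
    # Counter & Counter keeps the minimum count of each shared word.
    scores = {path: sum((q & tokens(src)).values()) for path, src in files.items()}
    ranked = sorted(scores, key=lambda p: (-scores[p], p))
    return [p for p in ranked[:k] if scores[p] > 0]


codebase = {
    "auth/session.py": "def refresh_session(token): ...  # session expiry handling",
    "billing/invoice.py": "class InvoiceRenderer: def render_pdf(self): ...",
    "auth/login.py": "def login(user, password): return create_session(user)",
}

print(rank_files("Fix session expiry when refreshing the token", codebase))
# ['auth/session.py', 'auth/login.py']
```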

Enterprise deployment requires careful consideration of model selection, security policies, and integration with existing development workflows. Organizations are implementing hybrid approaches that combine cloud-based capabilities for complex reasoning with local inference for sensitive code handling.
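A hybrid deployment needs a policy that decides, per request, whether a prompt may leave the developer's machine. The sketch below is a hypothetical policy: the path prefixes, the secret patterns, and the `local`/`cloud` labels are illustrative assumptions, not settings of any particular product.

```python
import re
from dataclasses import dataclass

# Hypothetical policy inputs; each organization would define its own.
SENSITIVE_PATH_PREFIXES = ("payments/", "secrets/", "internal/crypto/")
SECRET_PATTERNS = [
    re.compile(r"AKIA[0-9A-Z]{16}"),                    # shape of an AWS access key ID
    re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),  # PEM private key header
]


@dataclass
class Request:
    file_path: str
    prompt: str
    needs_deep_reasoning: bool = False


def route(req: Request) -> str:
    """Return 'local' or 'cloud' for a single assistant request."""
    if req.file_path.startswith(SENSITIVE_PATH_PREFIXES):
        return "local"  # sensitive code never leaves the workstation
    if any(p.search(req.prompt) for p in SECRET_PATTERNS):
        return "local"  # prompt appears to contain credentials
    # Only requests that need heavier reasoning are sent to the cloud model.
    return "cloud" if req.needs_deep_reasoning else "local"


print(route(Request("payments/charge.py", "refactor this", needs_deep_reasoning=True)))  # local
print(route(Request("web/ui.py", "design a caching layer", needs_deep_reasoning=True)))  # cloud
```

Checking sensitivity before capability means a misjudged "needs deep reasoning" flag can never push protected code off the machine; the cost is that some hard questions about sensitive modules get a weaker local answer.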

Performance Metrics and Technical Benchmarks

Evaluating AI coding tool effectiveness requires sophisticated metrics beyond simple code generation speed. Technical benchmarks focus on several key areas:

Code Quality Metrics:

- Cyclomatic complexity reduction in generated code

- Security vulnerability detection and prevention rates

- Adherence to established coding standards and patterns

- Maintainability scores based on code structure analysis

Development Velocity Improvements:

- Time-to-first-working-prototype measurements

- Debugging cycle reduction percentages

- Feature completion timeline compression ratios

- Developer cognitive load reduction through automation

Enterprise Integration Success:

- Adoption rates across development teams

- Integration success with existing CI/CD pipelines

- Compliance with security and governance requirements

- ROI measurements based on development cost reductions
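Of these, cyclomatic complexity is the easiest to compute directly. The sketch below uses Python's standard `ast` module and a simplified version of McCabe's count (1 plus one per branching construct, plus one per extra `and`/`or` operand); dedicated tools such as radon or mccabe apply more detailed rules, so treat the numbers as approximate.

```python
import ast

# Node types that add one decision point in this simplified count.
BRANCH_NODES = (ast.If, ast.For, ast.AsyncFor, ast.While, ast.IfExp,
                ast.ExceptHandler, ast.comprehension)


def cyclomatic_complexity(source: str) -> dict[str, int]:
    """Approximate McCabe complexity per function.

    Simplification: branches inside a nested function also count toward
    the enclosing function.
    """
    results = {}
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            score = 1
            for child in ast.walk(node):
                if isinstance(child, BRANCH_NODES):
                    score += 1
                elif isinstance(child, ast.BoolOp):
                    score += len(child.values) - 1  # each extra and/or operand
            results[node.name] = score
    return results


sample = '''
def classify(n):
    if n < 0:
        return "negative"
    elif n == 0:
        return "zero"
    for d in (2, 3, 5):
        if n % d == 0 and n != d:
            return "composite"
    return "other"

def double(n):
    return n * 2
'''

print(cyclomatic_complexity(sample))  # {'classify': 6, 'double': 1}
```

Running the same function over human-written and AI-generated versions of a module gives a like-for-like comparison for the first code-quality metric listed above.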

Early reports suggest that organizations implementing spec-driven AI coding approaches see 5-10x improvements in certain development tasks, with the largest gains in routine implementation work and system integration projects.
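For scale, the figures reported by VentureBeat (Kiro feature builds shrinking from two weeks to two days; an AWS rearchitecture scoped at 18 months for 30 developers and delivered by six people in 76 days) can be turned into compression ratios. The arithmetic below assumes "two weeks" means either 14 calendar days or 10 working days, converts months to days at 365.25 / 12, and takes the original 18-month estimate at face value; all three are assumptions, since the source figures are not defined that precisely.

```python
DAYS_PER_MONTH = 365.25 / 12  # average calendar month (assumed conversion)


def compression_ratio(before: float, after: float) -> float:
    """How many times shorter (or smaller) the 'after' quantity is than 'before'."""
    return before / after


# Kiro feature builds: two weeks -> two days.
kiro_calendar = compression_ratio(14, 2)  # calendar days
kiro_working = compression_ratio(10, 2)   # working days

# AWS rearchitecture: 18 months x 30 developers (estimate) vs. 76 days x 6 people (actual).
aws_calendar = compression_ratio(18 * DAYS_PER_MONTH, 76)
aws_effort = compression_ratio(18 * DAYS_PER_MONTH * 30, 76 * 6)

print(f"Kiro: {kiro_working:.1f}x-{kiro_calendar:.1f}x")
print(f"AWS calendar time: {aws_calendar:.1f}x, person-days: {aws_effort:.1f}x")
```

Under these assumptions the Kiro figure works out to 5x-7x and the AWS project to about 7.2x in calendar time, while measured in person-days the AWS reduction is closer to 36x.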

What This Means

The convergence of spec-driven development, local inference capabilities, and self-improving AI agents represents a fundamental architectural shift in software development tooling. Organizations that implement these technologies well report dramatic productivity improvements while maintaining code quality and security standards.

The technical implications extend beyond individual developer productivity to encompass entire software delivery pipelines. As AI coding tools become more sophisticated and autonomous, the role of human developers is evolving toward higher-level system design and specification creation rather than low-level implementation.

Enterprise adoption requires careful attention to security implications, particularly as local inference capabilities reduce visibility into AI interactions. Organizations must balance the productivity benefits of advanced AI coding tools with governance requirements and risk management considerations.

FAQ

What makes spec-driven development different from traditional AI coding approaches?

Spec-driven development requires AI agents to work from structured specifications that define system requirements and correctness criteria before generating code, providing a trust model for autonomous development rather than relying on post-hoc documentation.

Why are developers moving to local AI inference for coding tasks?

Local inference removes network round-trips to cloud APIs, works offline, keeps source code on the developer's machine, and takes advantage of consumer hardware that can now run quantized large language models effectively on laptops.

How do hyperagents improve upon existing self-improving AI systems?

Hyperagents continuously rewrite their own improvement mechanisms rather than relying on fixed meta-agents, enabling self-improvement across non-coding domains and creating compound learning cycles that accelerate progress over time.