AI-powered coding assistants like GitHub Copilot and Cursor are transforming software development across enterprises, with Microsoft and Google reporting that approximately 25% of their code is now AI-generated. However, a comprehensive survey by Lightrun reveals that 43% of AI-generated code changes require manual debugging in production environments, highlighting critical gaps between AI coding capabilities and production reliability.

The findings emerge as the AIOps market reaches $18.95 billion in 2026, with projections of $37.79 billion by 2031. Despite widespread adoption, none of the engineering leaders surveyed reported being able to verify an AI-suggested fix in a single redeploy cycle: 88% needed two to three cycles, and 11% needed four to six.

Enterprise-Scale AI Coding Architectures Drive Adoption

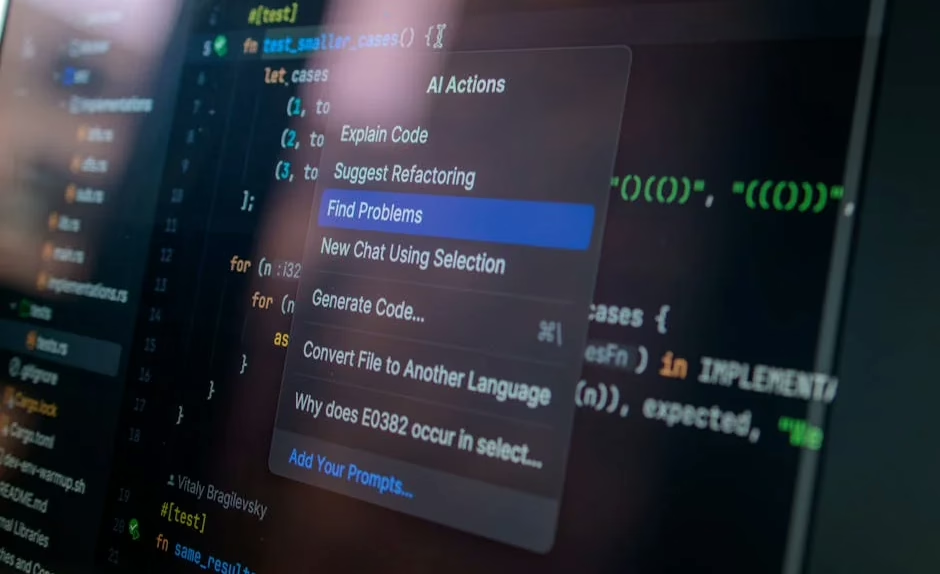

The technical foundation of modern AI coding tools is large language models trained on code repositories. GitHub Copilot, originally built on OpenAI’s Codex model, uses context from the integrated development environment (IDE) to generate code suggestions in real time. Cursor likewise relies on transformer-based models tuned for code completion and generation.

Enterprise implementations are scaling rapidly through spec-driven development methodologies. According to VentureBeat, this approach requires AI agents to work from structured, context-rich specifications that define system requirements before generating code. The Kiro IDE team demonstrated this methodology’s effectiveness by reducing feature development cycles from two weeks to two days using their own agentic coding environment.

AWS engineering teams have achieved even more dramatic results, completing in 76 days, with just six people, an 18-month rearchitecture project originally scoped for 30 developers. These implementations rely on autonomous coding agents that reason against specifications throughout the entire development process, an approach fundamentally different from traditional post-hoc documentation.

Local Inference Creates New Security Paradigms

A significant shift toward on-device AI inference is reshaping enterprise security models for coding tools. VentureBeat reports that employees are increasingly running capable models locally on laptops, creating “Shadow AI 2.0” scenarios that bypass traditional cloud access security broker (CASB) policies.

Three technical convergences enable this transition:

- Consumer-grade accelerators: MacBook Pros with 64GB unified memory can run quantized 70B-class models at practical speeds for real workflows

- Mainstream quantization: Model compression techniques now make large language models accessible on standard hardware

- Improved model architectures: Optimized transformer variants require significantly less computational overhead
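
As a rough illustration of why quantization matters for the hardware described above, the weight footprint of a model can be approximated as parameter count times bits per weight. The sketch below is a back-of-the-envelope estimate, not a benchmark. The function name `weight_memory_gb` is hypothetical, and the calculation covers weights only: the KV cache, activations, and operating system overhead all need additional memory.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory needed for model weights alone, in decimal gigabytes.

    Assumption: every weight is stored at the same precision, and nothing
    else (KV cache, activations, runtime overhead) is counted.
    """
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


# Compare a 70B-parameter model at common precisions.
for bits in (16, 8, 4):
    print(f"70B at {bits}-bit: ~{weight_memory_gb(70, bits):.0f} GB")
```

Under these assumptions, a 70B model needs about 140 GB for weights at 16-bit precision but about 35 GB at 4-bit, which is why it can fit within 64GB of unified memory only after quantization.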

This shift poses challenges for traditional data loss prevention (DLP) systems, which cannot monitor local inference interactions. When security teams cannot observe AI-code interactions, governance frameworks built around “data exfiltration to the cloud” become insufficient for managing “unvetted inference inside the device.”

Technical Architecture Challenges in Production Environments

The production reliability gap stems from fundamental architectural limitations in current AI coding systems. The Lightrun survey of 200 senior site-reliability and DevOps leaders reveals systematic issues with AI-generated code quality assurance.

Key technical challenges include:

- Context window limitations: Current transformer architectures struggle with long-range dependencies across large codebases

- Training data bias: Models trained on public repositories may not reflect enterprise-specific coding standards and architectural patterns

- Hallucination in code generation: AI systems can generate syntactically correct but semantically flawed code that passes initial testing

The debugging cycle inefficiency, with no respondent achieving single-cycle verification, indicates that current quality assurance methodologies are inadequate for AI-generated code. Traditional testing frameworks designed for human-written code may miss edge cases and logical errors characteristic of AI-generated implementations.

Spec-Driven Development as Trust Architecture

Spec-driven development emerges as a critical trust model for autonomous coding systems. This methodology establishes structured specifications as artifacts that AI agents reason against throughout development, creating verifiable checkpoints for code correctness.

The technical implementation involves:

- Formal specification languages: Machine-readable requirements that define system behavior and properties

- Continuous verification: Real-time checking of generated code against specifications during development

- Property-based testing: Automated verification that code maintains specified invariants and behaviors
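
To make the last item concrete, here is a minimal sketch of property-based checking against a small specification, using only the Python standard library. Everything in it is hypothetical and chosen for illustration: the pagination task, the function names, and the deliberately flawed `paginate_candidate`, which stands in for generated code that looks plausible and passes a simple example test. Dedicated libraries such as Hypothesis offer far richer input generation; this version only shows the idea.

```python
import random
from typing import Callable, List, Optional, Tuple

Paginator = Callable[[List[int], int], List[List[int]]]


def paginate_candidate(items: List[int], page_size: int) -> List[List[int]]:
    """Hypothetical generated code: off-by-one bound can drop the last item."""
    return [items[i:i + page_size] for i in range(0, len(items) - 1, page_size)]


def paginate_reference(items: List[int], page_size: int) -> List[List[int]]:
    """Straightforward implementation used for comparison."""
    return [items[i:i + page_size] for i in range(0, len(items), page_size)]


def satisfies_spec(items: List[int], page_size: int, pages: List[List[int]]) -> bool:
    """Spec invariants: no item lost or reordered, no empty or oversized page."""
    flattened = [x for page in pages for x in page]
    sizes_ok = all(1 <= len(page) <= page_size for page in pages)
    return flattened == items and sizes_ok


def find_counterexample(
    fn: Paginator, trials: int = 500, seed: int = 0
) -> Optional[Tuple[List[int], int]]:
    """Try random inputs; return the first one that violates the spec, if any."""
    rng = random.Random(seed)  # seeded so runs are reproducible
    for _ in range(trials):
        items = [rng.randint(0, 9) for _ in range(rng.randint(0, 20))]
        page_size = rng.randint(1, 5)
        if not satisfies_spec(items, page_size, fn(items, page_size)):
            return items, page_size
    return None


# A single hand-written example passes, which is how such a bug slips through.
assert paginate_candidate([1, 2, 3, 4], 2) == [[1, 2], [3, 4]]

print("candidate counterexample:", find_counterexample(paginate_candidate))
print("reference counterexample:", find_counterexample(paginate_reference))
```

The candidate passes the hand-picked example but fails the invariants on randomly generated inputs, while the reference implementation does not. That contrast is the point of checking generated code against stated properties instead of a handful of examples.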

This approach addresses the fundamental trust question in AI-generated code by shifting focus from “can AI write code” to “can we verify AI-generated code meets requirements.” Enterprise teams implementing spec-driven methodologies report significant improvements in code quality and deployment confidence.

Performance Metrics and Model Capabilities

Current AI coding tools demonstrate varying performance across different programming tasks and languages. Quantized 70B-parameter models running locally can achieve competitive code completion rates while maintaining acceptable latency for interactive development workflows.

Performance characteristics include:

- Code completion accuracy: 60-80% for common programming patterns in popular languages

- Context understanding: Effective within 4,000-8,000 token windows for most coding tasks

- Language coverage: Strong performance in Python, JavaScript, and Java; variable quality in domain-specific languages
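
The context-window figure above has a practical consequence: only part of a codebase can be shown to the model at once. The sketch below illustrates one simple way to budget for that. It rests on loud assumptions: the ratio of roughly four characters per token is a common rule of thumb, not a property of any particular tokenizer, and the function names, file names, and greedy selection strategy are all hypothetical.

```python
import math
from typing import List, Tuple


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Rough token estimate. Assumption: about four characters per token."""
    return math.ceil(len(text) / chars_per_token)


def pack_context(
    files: List[Tuple[str, str]], budget_tokens: int
) -> Tuple[List[str], int]:
    """Greedily include files, in the given priority order, that still fit."""
    included: List[str] = []
    used = 0
    for name, text in files:
        cost = estimate_tokens(text)
        if used + cost <= budget_tokens:
            included.append(name)
            used += cost
    return included, used


# Placeholder contents sized to about 3,000, 4,000, and 1,500 estimated tokens.
files = [
    ("handler.py", "x" * 12000),
    ("models.py", "x" * 16000),
    ("utils.py", "x" * 6000),
]

print(pack_context(files, 8000))
print(pack_context(files, 4000))
```

With an 8,000-token budget the first two files fit and the third is left out; with 4,000 tokens only the first fits. Whatever is left out is invisible to the model, which is one way long-range dependencies across a large codebase get missed.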

However, the production debugging requirements indicate that accuracy metrics measured during development do not correlate strongly with production reliability. This suggests that current evaluation methodologies may inadequately assess real-world code quality.

What This Means

The enterprise adoption of AI coding tools represents a fundamental shift in software development methodologies, but current implementations reveal significant gaps between development-time capabilities and production reliability. The 43% production debugging rate indicates that while AI can accelerate initial code generation, the verification and quality assurance infrastructure has not kept pace with AI capabilities.

Spec-driven development methodologies offer a promising technical approach to address trust and verification challenges, but require significant architectural changes to existing development workflows. Organizations must balance the productivity gains from AI coding tools against the increased operational overhead of debugging and verification cycles.

The shift toward local inference models creates both opportunities for improved privacy and challenges for enterprise governance. Security frameworks must evolve beyond cloud-centric monitoring to address on-device AI inference scenarios while maintaining visibility into code generation activities.

FAQ

What percentage of enterprise code is now AI-generated?

Microsoft and Google report that approximately 25% of their code is now AI-generated, representing rapid adoption across major technology companies.

Why do 43% of AI-generated code changes need production debugging?

Current AI models struggle with context limitations, training data bias, and hallucination issues that create syntactically correct but semantically flawed code that passes initial testing but fails in production environments.

What is spec-driven development for AI coding?

Spec-driven development requires AI agents to work from structured, machine-readable specifications that define system requirements before generating code, creating verifiable checkpoints for code correctness throughout the development process.

Readers new to the underlying architecture can start with how large language models actually work.