A fundamental shift is occurring in how artificial intelligence systems acquire complex procedural knowledge. Rather than relying on traditional documentation or rule-based programming, researchers are developing visual imitation learning techniques that enable AI agents to master enterprise workflows by observing human expert demonstrations.

Technical Architecture of Visual Learning Systems

The core methodology behind visual imitation learning involves training neural networks on screen recordings and video demonstrations of human experts performing specific tasks. This approach represents a significant departure from conventional training paradigms that depend on structured data or explicit programming instructions.

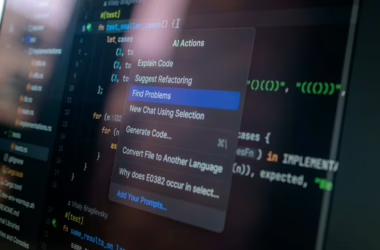

Companies like Guidde are pioneering this approach by capturing video walkthroughs of enterprise processes—from creating support tickets to processing invoices—and using these recordings as training data for AI agents. The technical challenge lies in developing computer vision models capable of understanding user interface elements, interpreting sequential actions, and generalizing these patterns to new contexts.

Multi-Modal AI Assistants in Production

The practical implementation of these techniques is already visible in production systems. Read AI’s recently launched “Ada” represents a sophisticated example of an AI-powered digital assistant that operates through email interfaces. The system demonstrates advanced natural language processing capabilities combined with calendar integration and knowledge base access.

Ada’s architecture enables it to parse email requests, access calendar APIs, and generate contextually appropriate responses—all while maintaining conversational coherence across extended email threads. The technical implementation likely involves transformer-based language models fine-tuned on scheduling and communication tasks, integrated with enterprise APIs through secure authentication protocols.
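As a rough illustration of the calendar side of such an assistant, the sketch below finds the first open slot once a meeting request has been parsed from an email. It is not Read AI's implementation: the function name `first_free_slot` and the representation of busy time as `(start, end)` pairs are assumptions made for this example, and a real system would fetch those intervals from a calendar API.

```python
from datetime import datetime, timedelta
from typing import Optional


def first_free_slot(
    busy: list[tuple[datetime, datetime]],
    window_start: datetime,
    window_end: datetime,
    duration: timedelta,
) -> Optional[datetime]:
    """Return the earliest start time in the window where `duration` fits.

    `busy` holds (start, end) pairs; in this sketch they are passed in
    directly rather than fetched from a calendar service.
    """
    cursor = window_start
    for start, end in sorted(busy):
        if start - cursor >= duration:
            break  # the gap before this meeting is long enough
        cursor = max(cursor, end)  # otherwise skip past the meeting
    if cursor + duration <= window_end:
        return cursor
    return None  # no gap of the requested length inside the window


if __name__ == "__main__":
    day = datetime(2026, 1, 5)
    meetings = [
        (day.replace(hour=9), day.replace(hour=10)),
        (day.replace(hour=10, minute=15), day.replace(hour=11)),
    ]
    slot = first_free_slot(
        meetings, day.replace(hour=9), day.replace(hour=12), timedelta(minutes=30)
    )
    print(slot)
```

The language-model portion of the pipeline (extracting the requested duration and date range from free-form email text) is deliberately left out; this only shows the deterministic step that would follow it.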

Neural Network Training Methodologies

The visual imitation learning approach addresses a critical bottleneck in AI deployment: the “last mile” problem where sophisticated software remains underutilized due to user interface complexity. Traditional training methods require extensive documentation and structured datasets, but visual learning systems can extract procedural knowledge directly from demonstration videos.

This methodology employs computer vision techniques to segment video frames, identify UI elements, and map user actions to interface changes. The resulting pairs of observed screen state and user action serve as training data for imitation learning methods such as behavioral cloning, optionally refined with reinforcement learning, to produce policies that can navigate complex enterprise software interfaces autonomously.
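The step of mapping user actions to interface elements can be sketched as follows. This is a simplified illustration, not any vendor's pipeline: it assumes a separate (hypothetical) detector has already produced labeled bounding boxes for each frame, and the names `UIElement`, `ClickEvent`, `Step`, and `build_steps` are invented for this example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class UIElement:
    label: str   # e.g. "New Ticket" (assumed output of a UI detector)
    x: int       # left edge in pixels
    y: int       # top edge in pixels
    width: int
    height: int

    def contains(self, px: int, py: int) -> bool:
        return (self.x <= px < self.x + self.width
                and self.y <= py < self.y + self.height)

    def area(self) -> int:
        return self.width * self.height


@dataclass(frozen=True)
class ClickEvent:
    frame_index: int
    x: int
    y: int


@dataclass(frozen=True)
class Step:
    frame_index: int
    visible_labels: tuple[str, ...]  # simplified stand-in for the "state"
    target_label: Optional[str]      # the "action": which element was clicked


def build_steps(frames: dict[int, list[UIElement]],
                clicks: list[ClickEvent]) -> list[Step]:
    """Turn detected elements and recorded clicks into ordered training steps."""
    steps = []
    for click in sorted(clicks, key=lambda c: c.frame_index):
        elements = frames.get(click.frame_index, [])
        hits = [e for e in elements if e.contains(click.x, click.y)]
        # Prefer the smallest enclosing element, so a button inside a
        # panel is chosen over the panel itself.
        target = min(hits, key=UIElement.area) if hits else None
        steps.append(Step(
            frame_index=click.frame_index,
            visible_labels=tuple(e.label for e in elements),
            target_label=target.label if target else None,
        ))
    return steps
```

Each `Step` is one supervised example: given what was visible, which element did the expert act on. A production system would use richer state (pixels or embeddings) and more action types (typing, scrolling, dragging), but the pairing logic has the same shape.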

Security Implications and Model Robustness

Recent incidents highlight the critical importance of robust security measures in AI productivity systems. The reported use of Anthropic’s Claude model in an attack on Mexican government systems illustrates how AI models can be manipulated through crafted prompts and turned toward unauthorized data access. The incident reportedly involved approximately 150 GB of sensitive data across multiple government agencies, emphasizing the need for enhanced security architectures.

These security challenges underscore the importance of implementing proper access controls, input validation, and monitoring systems when deploying AI assistants in enterprise environments. The technical requirements include robust authentication mechanisms, encrypted communication channels, and comprehensive audit logging.
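A minimal sketch of how access control, input validation, and audit logging might be combined in front of an agent's actions is shown below. The role names, action names, and the `authorize_action` function are hypothetical, and the checks are intentionally basic; this does not by itself defend against prompt injection, it only limits what a manipulated agent is permitted to do.

```python
import json
import logging
from datetime import datetime, timezone

audit_log = logging.getLogger("agent.audit")

# Hypothetical allowlist: which actions each agent role may perform.
ALLOWED_ACTIONS = {
    "support_agent": {"create_ticket", "read_ticket"},
    "finance_agent": {"read_invoice", "process_invoice"},
}

MAX_ARG_LENGTH = 2000  # arbitrary illustrative limit


def authorize_action(role: str, action: str, args: dict) -> bool:
    """Check an agent's proposed action and record the decision."""
    # Access control: unknown roles get an empty allowlist.
    allowed = action in ALLOWED_ACTIONS.get(role, set())

    # Input validation: string values only, bounded length, no NUL bytes.
    valid = all(
        isinstance(value, str)
        and len(value) <= MAX_ARG_LENGTH
        and "\x00" not in value
        for value in args.values()
    )

    decision = allowed and valid

    # Audit logging: record argument names, not values, so the log
    # does not itself become a store of sensitive data.
    audit_log.info(json.dumps({
        "time": datetime.now(timezone.utc).isoformat(),
        "role": role,
        "action": action,
        "arg_names": sorted(args),
        "allowed": allowed,
        "valid": valid,
        "decision": decision,
    }))
    return decision
```

The design choice worth noting is deny-by-default: anything not explicitly listed for a role is refused, and every decision, whether granted or refused, leaves an audit record.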

Performance Metrics and Evaluation

Evaluating visual imitation learning systems requires novel metrics beyond traditional accuracy measures. Key performance indicators include task completion rates, interface navigation efficiency, and generalization capability across different software environments. These systems must demonstrate consistent performance across varying UI layouts, software versions, and user contexts.

The technical evaluation process involves creating standardized benchmark tasks, measuring execution time compared to human experts, and assessing error recovery capabilities when encountering unexpected interface changes or system responses.
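The metrics described above could be aggregated along the following lines. This is an illustrative sketch rather than an established benchmark: the `EpisodeResult` fields and the `summarize` function are assumptions for this example, and the time ratio is computed only over completed tasks so that abandoned runs do not distort it.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass(frozen=True)
class EpisodeResult:
    task_id: str
    completed: bool
    agent_seconds: float
    human_seconds: float       # expert baseline for the same task
    errors_encountered: int    # e.g. unexpected dialogs or layout changes
    errors_recovered: int


def summarize(results: list[EpisodeResult]) -> dict[str, Optional[float]]:
    """Aggregate benchmark episodes into three headline metrics."""
    if not results:
        raise ValueError("at least one episode is required")

    completed = [r for r in results if r.completed]
    total_errors = sum(r.errors_encountered for r in results)
    total_recovered = sum(r.errors_recovered for r in results)

    return {
        # Fraction of tasks the agent finished.
        "completion_rate": len(completed) / len(results),
        # Agent time divided by expert time; values above 1.0 mean slower
        # than the human. None when no task was completed.
        "mean_time_ratio": (
            mean(r.agent_seconds / r.human_seconds for r in completed)
            if completed else None
        ),
        # Share of encountered errors the agent recovered from.
        # None when no errors occurred.
        "error_recovery_rate": (
            total_recovered / total_errors if total_errors else None
        ),
    }
```

Running the same task set against different UI layouts or software versions and comparing the resulting summaries gives a simple view of generalization.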

Future Research Directions

The convergence of visual learning, natural language processing, and enterprise integration represents a significant advancement in AI productivity applications. Future developments will likely focus on improving model interpretability, enhancing security frameworks, and developing more sophisticated multi-modal architectures that can seamlessly integrate visual, textual, and contextual information.

As these systems mature, we can expect to see more sophisticated reasoning capabilities, improved handling of edge cases, and better integration with existing enterprise security and compliance frameworks. The technical challenge remains balancing system autonomy with human oversight while maintaining the security and reliability requirements of enterprise environments.
