Enterprise software development is shifting as AI coding tools evolve from simple code completion into autonomous agents capable of handling complex architectural tasks. According to VentureBeat, AWS engineering teams have completed rearchitecture projects originally scoped at 18 months for 30 developers using just six people in 76 days, while the Kiro IDE team cut feature development cycles from two weeks to two days using spec-driven AI development.

This marks an evolution from early “vibe coding” tools, which primarily assisted junior developers, to systems that enhance expert-level development workflows. The underlying approach is spec-driven development, which gives AI agents structured, context-rich specifications to reason against throughout the development process.

Spec-Driven Development Architecture Enables Trustworthy AI Agents

The core technical innovation driving enterprise AI coding adoption is spec-driven development – a methodology in which AI agents work from structured specifications that define system requirements, properties, and correctness criteria before generating any code. Where traditional documentation is written after the fact, here the specification serves as a reasoning artifact throughout development.

Unlike earlier AI coding tools that focused primarily on code generation capabilities, spec-driven systems create a trust model for autonomous development. The specification serves as a continuous validation framework, allowing AI agents to maintain consistency with intended system behavior while reducing the hallucination risks commonly associated with large language model outputs.

This architectural approach addresses the critical enterprise question of AI code trustworthiness. Rather than simply evaluating whether AI can write code, spec-driven development establishes measurable criteria for code correctness and system compliance. The methodology has demonstrated significant productivity gains in enterprise environments, with development teams reporting 10x acceleration in feature delivery timelines.
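As a rough illustration of a specification acting as measurable correctness criteria, the Python sketch below encodes a few properties and checks candidate implementations against them. The feature (order-preserving deduplication) and the names `Property`, `SPEC`, `SAMPLES`, and `validate` are hypothetical, chosen only for this example; the sketch does not reflect how Kiro or any AWS tooling represents specifications.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

Impl = Callable[[List[int]], List[int]]


@dataclass(frozen=True)
class Property:
    """One named correctness criterion taken from the (hypothetical) spec."""
    name: str
    check: Callable[[Impl], bool]


# Hypothetical sample inputs the properties are evaluated on.
SAMPLES: List[List[int]] = [[], [1, 1, 2], [3, 1, 3, 2, 1]]

# Hypothetical spec: "remove duplicates while keeping first-seen order".
SPEC: List[Property] = [
    Property("no duplicates",
             lambda f: all(len(f(s)) == len(set(f(s))) for s in SAMPLES)),
    Property("same elements",
             lambda f: all(set(f(s)) == set(s) for s in SAMPLES)),
    Property("first-seen order kept",
             lambda f: all(f(s) == list(dict.fromkeys(s)) for s in SAMPLES)),
]


def validate(candidate: Impl) -> Dict[str, bool]:
    """Check a candidate implementation against every property in the spec."""
    return {prop.name: prop.check(candidate) for prop in SPEC}


def candidate_sorted(xs: List[int]) -> List[int]:
    # Plausible-looking generated code that silently breaks the ordering rule.
    return sorted(set(xs))


def candidate_ordered(xs: List[int]) -> List[int]:
    seen = set()
    out = []
    for x in xs:
        if x not in seen:
            seen.add(x)
            out.append(x)
    return out


if __name__ == "__main__":
    print(validate(candidate_sorted))
    print(validate(candidate_ordered))
```

The first candidate removes duplicates correctly but fails the ordering property, which is the kind of mismatch a specification is meant to surface before code is accepted.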

Local Inference Capabilities Challenge Traditional Security Models

A significant technical shift is occurring as AI coding tools migrate from cloud-based APIs to local inference capabilities. VentureBeat reports that consumer-grade accelerators now enable practical local execution of quantized 70B-class models on high-end laptops, fundamentally changing enterprise security considerations for AI development tools.

Three converging technical factors enable this transition:

- Advanced hardware acceleration: MacBook Pro systems with 64GB unified memory can execute quantized large language models at usable speeds for real development workflows

- Mainstream quantization techniques: Model compression methods now routinely reduce memory requirements while maintaining inference quality

- Optimized inference frameworks: Purpose-built software stacks maximize hardware utilization for local model execution
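The arithmetic behind the first two factors can be sketched in a few lines of Python. The helper `weight_memory_gb` is a hypothetical name, and the estimate covers model weights only: it ignores the KV cache, activations, and operating system overhead, and real quantization formats typically store somewhat more than the nominal bits per weight, so treat the figures as lower bounds.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights alone, in GB (10^9 bytes)."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


if __name__ == "__main__":
    unified_memory_gb = 64  # the laptop configuration mentioned above
    for bits in (16, 8, 4):
        gb = weight_memory_gb(70, bits)
        fits = gb < unified_memory_gb
        print(f"{bits}-bit: {gb:.0f} GB, fits in {unified_memory_gb} GB: {fits}")
    # 16-bit: 140 GB, fits in 64 GB: False
    # 8-bit: 70 GB, fits in 64 GB: False
    # 4-bit: 35 GB, fits in 64 GB: True
```

Under these assumptions, a 70B-parameter model only fits in 64GB of unified memory once it is quantized to roughly 4 bits per weight.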

This shift creates what security professionals term “Shadow AI 2.0” – a scenario where traditional data loss prevention systems cannot observe AI interactions occurring entirely within local devices. The implications extend beyond simple monitoring challenges to fundamental questions about bring-your-own-model (BYOM) governance in enterprise environments.

For development teams, local inference offers significant advantages including reduced latency, enhanced privacy, and independence from network connectivity. However, it also introduces new technical requirements for model management, version control, and performance optimization across diverse hardware configurations.

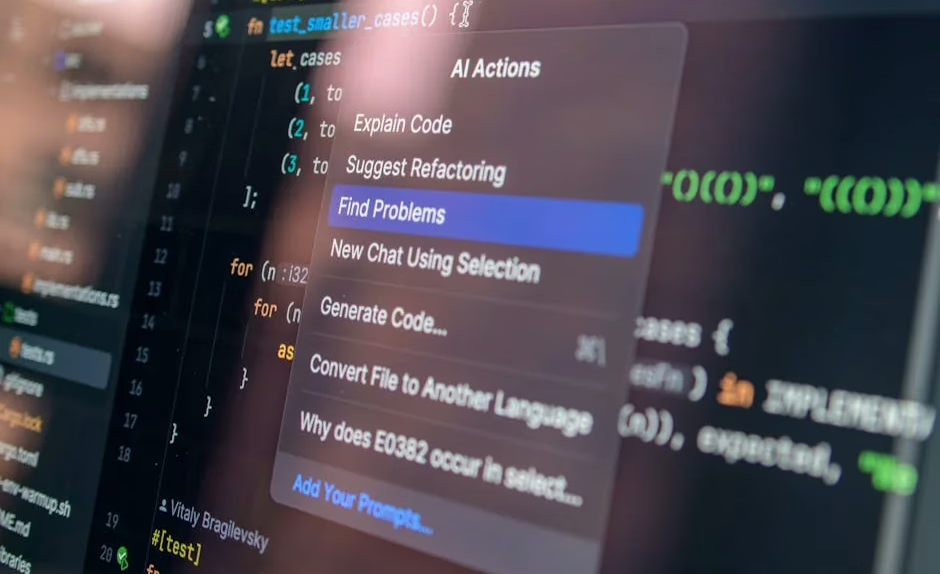

IDE Integration Patterns and Developer Experience Evolution

Modern AI coding tools demonstrate sophisticated integration patterns with development environments, moving beyond simple autocomplete functionality to comprehensive workflow enhancement. The technical architecture of these integrations involves multiple components working in concert:

Context-aware code analysis systems maintain understanding of entire codebases, including dependency graphs, architectural patterns, and coding conventions. These systems utilize transformer-based architectures fine-tuned on large code corpora to understand semantic relationships across files and modules.

Real-time collaboration interfaces enable seamless interaction between developers and AI agents within familiar IDE environments. Tools like Cursor and GitHub Copilot implement sophisticated context management systems that preserve conversation history while adapting to changing code contexts.

Multi-modal reasoning capabilities allow AI coding assistants to process various input types including natural language descriptions, existing code snippets, documentation, and even visual mockups. This technical capability stems from recent advances in vision-language models and cross-modal attention mechanisms.

The developer experience implications are substantial. Teams report that AI coding tools are most effective when integrated into existing workflows rather than requiring adoption of entirely new development paradigms. This finding has influenced the design of next-generation coding assistants toward seamless IDE integration rather than standalone applications.

Performance Metrics and Technical Benchmarks

Quantifying AI coding tool effectiveness requires sophisticated evaluation methodologies that go beyond simple code generation accuracy. Enterprise teams are developing new metrics focused on end-to-end development velocity, code quality maintenance, and technical debt reduction.

Key performance indicators include:

- Feature delivery acceleration: Measured as reduction in time from specification to production deployment

- Code review efficiency: Quantified through decreased review cycles and faster approval processes

- Defect reduction rates: Tracked through post-deployment bug reports and security vulnerability assessments

- Developer satisfaction metrics: Measured through productivity surveys and tool adoption rates
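The first indicator reduces to simple arithmetic, sketched below in Python. The function names `speedup` and `reduction_pct` are hypothetical, and the example treats "two weeks" as 14 calendar days, which is an assumption; counting 10 working days would give a different ratio.

```python
def speedup(baseline_days: float, observed_days: float) -> float:
    """How many times faster the observed cycle is than the baseline."""
    if baseline_days <= 0 or observed_days <= 0:
        raise ValueError("durations must be positive")
    return baseline_days / observed_days


def reduction_pct(baseline_days: float, observed_days: float) -> float:
    """Percentage reduction in cycle time relative to the baseline."""
    if baseline_days <= 0:
        raise ValueError("baseline must be positive")
    return 100.0 * (baseline_days - observed_days) / baseline_days


if __name__ == "__main__":
    # Feature cycle going from two weeks (assumed 14 days) to two days.
    print(f"{speedup(14, 2):.1f}x faster, {reduction_pct(14, 2):.1f}% shorter")
    # 7.0x faster, 85.7% shorter
```

Reporting both the ratio and the percentage helps avoid ambiguity, since the same improvement can sound quite different depending on which form is quoted.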

Technical benchmarks specifically evaluate AI coding tools on code correctness, security compliance, and architectural consistency. These assessments often involve domain-specific test suites that reflect real enterprise development challenges rather than generic programming tasks.

Early enterprise deployments report 60-80% reduction in routine coding tasks, allowing developers to focus on higher-level architectural decisions and complex problem-solving activities. However, teams emphasize that success depends heavily on proper tool configuration and developer training programs.

Enterprise Security and Governance Frameworks

The enterprise adoption of AI coding tools necessitates comprehensive security and governance frameworks addressing both traditional and emerging risks. Technical security considerations span multiple domains:

Code integrity verification systems ensure AI-generated code meets security standards through automated scanning and validation processes. These systems integrate with existing static analysis security testing (SAST) and dynamic application security testing (DAST) pipelines.

Data privacy protection mechanisms prevent sensitive information exposure during AI training or inference processes. Enterprise implementations often require on-premises deployment or private cloud configurations to maintain data sovereignty.

Access control frameworks manage AI tool permissions and usage patterns across development teams. These systems implement role-based access control (RBAC) and attribute-based access control (ABAC) models tailored to AI coding workflows.
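One way to picture how role-based and attribute-based checks combine is the minimal Python sketch below. Every role, action, attribute, and policy rule in it is hypothetical and chosen for illustration; it is not drawn from any particular product's permission model.

```python
from dataclasses import dataclass

# RBAC: hypothetical mapping from role to the AI-tool actions it may perform.
ROLE_PERMISSIONS = {
    "developer": {"suggest", "edit_files"},
    "lead": {"suggest", "edit_files", "run_agent"},
}


@dataclass(frozen=True)
class Request:
    role: str
    action: str
    repo_sensitivity: str  # assumed values: "public", "internal", "restricted"
    model_location: str    # assumed values: "local", "cloud"


def is_allowed(req: Request) -> bool:
    """Allow a request only if both the role check and the attribute check pass."""
    # RBAC step: the role must grant the requested action.
    if req.action not in ROLE_PERMISSIONS.get(req.role, set()):
        return False
    # ABAC step (hypothetical policy): restricted repositories may only be
    # processed by models running locally.
    if req.repo_sensitivity == "restricted" and req.model_location != "local":
        return False
    return True


if __name__ == "__main__":
    print(is_allowed(Request("developer", "run_agent", "internal", "cloud")))  # False
    print(is_allowed(Request("lead", "run_agent", "restricted", "cloud")))     # False
    print(is_allowed(Request("lead", "run_agent", "restricted", "local")))     # True
```

The role check answers who may do what, while the attribute check adds context such as data sensitivity and where the model runs.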

Governance frameworks must also address model provenance tracking, ensuring teams understand which AI models generate specific code segments. This capability becomes critical for audit compliance and intellectual property management in enterprise environments.
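A provenance record for a generated code segment could look something like the Python sketch below. The `ProvenanceRecord` fields, the helper names, and the model identifier are hypothetical; the only library call used is `hashlib.sha256` from the standard library, which fingerprints the segment so later edits can be detected.

```python
import hashlib
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ProvenanceRecord:
    file_path: str
    start_line: int      # 1-indexed, inclusive
    end_line: int        # 1-indexed, inclusive
    model_id: str        # hypothetical identifier of the generating model
    content_sha256: str  # fingerprint of the segment as generated


def _digest(lines: List[str]) -> str:
    return hashlib.sha256("\n".join(lines).encode("utf-8")).hexdigest()


def record_segment(file_path: str, start_line: int,
                   lines: List[str], model_id: str) -> ProvenanceRecord:
    """Create a record tying a generated segment to the model that produced it."""
    if not lines:
        raise ValueError("segment must contain at least one line")
    end_line = start_line + len(lines) - 1
    return ProvenanceRecord(file_path, start_line, end_line, model_id, _digest(lines))


def is_unmodified(record: ProvenanceRecord, current_lines: List[str]) -> bool:
    """True if the segment still matches what the model originally generated."""
    return _digest(current_lines) == record.content_sha256


if __name__ == "__main__":
    generated = ["def add(a, b):", "    return a + b"]
    rec = record_segment("src/math_utils.py", 10, generated, "example-model-v1")
    print(rec.end_line)                                           # 11
    print(is_unmodified(rec, generated))                          # True
    print(is_unmodified(rec, ["def add(a, b):", "    return a - b"]))  # False
```

Storing a content hash alongside the model identifier lets an audit distinguish code that is still as generated from code a developer has since changed.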

What This Means

The evolution of AI coding tools toward spec-driven autonomous agents represents a fundamental shift in software development methodologies. Enterprise teams that successfully adopt these technologies are establishing new competitive advantages through dramatically accelerated development cycles and improved code quality.

The technical implications extend beyond productivity gains to encompass new paradigms for human-AI collaboration in complex software systems. As local inference capabilities mature, organizations must balance the benefits of enhanced privacy and performance against the challenges of distributed AI governance.

For development teams, the transition requires investment in new skills and processes. Success depends on understanding both the technical capabilities and limitations of AI coding tools, while establishing governance frameworks that enable safe scaling across enterprise environments.

FAQ

What is spec-driven development in AI coding tools?

Spec-driven development requires AI agents to work from structured, context-rich specifications that define system requirements and correctness criteria before generating code, creating a trust model for autonomous development.

How do local AI coding tools differ from cloud-based solutions?

Local inference tools run AI models directly on developer devices, offering enhanced privacy and reduced latency but requiring more powerful hardware and creating new security governance challenges for enterprises.

What performance improvements do enterprise teams see with AI coding tools?

Enterprise teams report 60-80% reduction in routine coding tasks and 10x acceleration in feature delivery timelines when using spec-driven AI development approaches with proper implementation.
