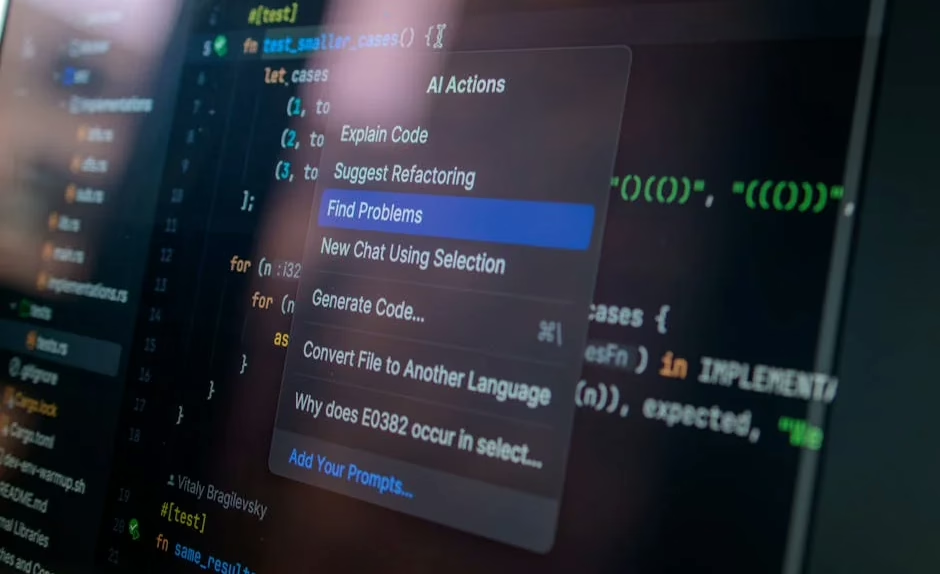

Artificial intelligence is transforming how companies write software, but new research reveals significant quality issues plaguing AI-generated code in production environments. According to Lightrun’s 2026 State of AI-Powered Engineering Report, 43% of AI-generated code changes require manual debugging in production even after passing quality assurance and staging tests.

The survey of 200 senior site-reliability and DevOps leaders across large enterprises in the US, UK, and EU exposes the hidden costs of the AI coding boom. Perhaps most striking: zero percent of engineering leaders reported their organizations could verify AI-suggested fixes with just one redeploy cycle. Instead, 88% needed two to three cycles, while 11% required four to six attempts.

These findings arrive as major tech companies embrace AI-generated code at unprecedented scale. Both Microsoft CEO Satya Nadella and Google CEO Sundar Pichai claim around 25% of their companies’ code now comes from AI systems.

Enterprise Adoption Outpaces Quality Controls

The rapid adoption of AI coding tools has created a significant gap between deployment speed and quality assurance capabilities. Companies are implementing AI-powered development workflows faster than they can build robust testing and validation systems.

Key adoption challenges include:

- Insufficient validation frameworks for AI-generated code

- Limited understanding of AI model limitations among development teams

- Pressure to accelerate development cycles using AI tools

- Inadequate debugging protocols for AI-assisted development

The AIOps market, encompassing platforms and services for managing AI-driven operations, reached $18.95 billion in 2026 and projects growth to $37.79 billion by 2031. However, the infrastructure designed to catch AI-generated mistakes significantly lags behind AI’s capacity to produce code.

“The 0% figure signals that engineering is hitting a trust wall with AI adoption,” said Or Maimon, Lightrun’s chief business officer, highlighting the survey’s finding about redeploy cycles.

Political Battles Over AI Regulation Heat Up

Meanwhile, the political landscape around AI governance is intensifying, with significant implications for workforce policies. New York Assembly member Alex Bores, a former Palantir employee turned politician, faces opposition from Silicon Valley’s most powerful figures due to his support for AI regulation.

A super PAC called Leading the Future, bankrolled by OpenAI’s Greg Brockman, Palantir cofounder Joe Lonsdale, and VC firm Andreessen Horowitz, launched an aggressive campaign against Bores’ congressional primary run. The group opposes his regulatory approach, describing it as “ideological and politically motivated legislation that would handcuff not only New York’s, but the entire country’s, ability to lead on AI jobs and innovation.”

Bores cosponsored New York’s RAISE Act, which became law in 2025 and requires major AI firms to implement and publish safety protocols for their models. This represents a growing trend of former tech workers advocating for stricter industry oversight.

Security Vulnerabilities Emerge in AI Platforms

Security concerns compound the workforce impact as new vulnerability classes emerge in AI systems. Microsoft recently patched CVE-2026-21520, a CVSS 7.5 indirect prompt injection vulnerability in Copilot Studio, discovered by Capsule Security.

The vulnerability, dubbed “ShareLeak,” exploited gaps between SharePoint form submissions and Copilot Studio’s context window. Attackers could inject malicious payloads through public comment fields, overriding the agent’s original instructions without input sanitization.

Critical security implications:

- New vulnerability classes specific to AI agent platforms

- Patches cannot fully eliminate prompt injection risks

- Enterprise AI deployments inherit additional security overhead

- Similar vulnerabilities discovered in Salesforce Agentforce

Capsule’s research calls Microsoft’s decision to assign a CVE to a prompt injection vulnerability “highly unusual,” potentially setting precedent for treating AI-specific security flaws as formal vulnerabilities requiring tracking and remediation.

Global AI Development Race Intensifies

The competitive landscape shows the US and China nearly tied in AI model performance, according to the 2026 AI Index from Stanford University. This tight race has significant implications for workforce development and job market dynamics globally.

Key competitive factors:

- Arena platform rankings show US-China parity in model performance

- OpenAI’s early ChatGPT lead narrowed significantly in 2024

- Geopolitical stakes drive massive infrastructure investments

- Supply chain vulnerabilities concentrate in Taiwan’s TSMC

AI data centers worldwide now consume 29.6 gigawatts of power—enough to run New York state at peak demand. Water usage from running OpenAI’s GPT-4o alone may exceed the drinking water needs of 12 million people annually.

Skills Gap Widens as Technology Advances

The rapid pace of AI development creates significant challenges for workforce adaptation and skills training. Traditional software development roles are evolving to require new competencies in AI system management, prompt engineering, and AI-human collaboration workflows.

Emerging skill requirements:

- AI model validation and testing methodologies

- Prompt injection vulnerability assessment

- AI-assisted debugging and quality assurance

- Hybrid human-AI development workflows

Companies struggle to train existing staff while recruiting talent with AI-specific expertise. The disconnect between AI capabilities and human understanding of these systems creates operational risks and quality control challenges.

What This Means

The AI workforce transformation reveals a technology advancing faster than supporting infrastructure, training programs, and governance frameworks can adapt. While companies rush to implement AI coding tools for competitive advantage, quality control systems lag significantly behind.

The 43% debugging rate for AI-generated code suggests enterprises need more robust validation frameworks before fully embracing AI-assisted development. Security vulnerabilities like ShareLeak demonstrate that AI platforms introduce new risk categories requiring specialized expertise.

Political battles over regulation reflect deeper tensions between innovation speed and responsible development practices. The involvement of major tech leaders in opposing regulatory advocates signals high stakes for industry autonomy versus public oversight.

For workers, this period demands proactive skill development in AI collaboration, quality assurance, and security assessment. Traditional roles aren’t disappearing but are evolving to require new competencies in managing AI systems effectively.

FAQ

How reliable is AI-generated code in production environments?

According to recent enterprise surveys, 43% of AI-generated code changes require manual debugging in production, even after passing initial testing phases. No organizations reported single-cycle verification success.

What security risks do AI coding platforms introduce?

AI platforms face new vulnerability classes like prompt injection attacks, where malicious inputs can override system instructions and potentially expose sensitive data. Microsoft recently patched such vulnerabilities in Copilot Studio.

How are companies addressing AI workforce transformation challenges?

Enterprises are investing in AIOps platforms (an $18.95 billion market) and developing new validation frameworks, though infrastructure for managing AI-generated code quality significantly lags behind adoption rates.

For the broader 2026 landscape across research, industry, and policy, see our State of AI 2026 reference.