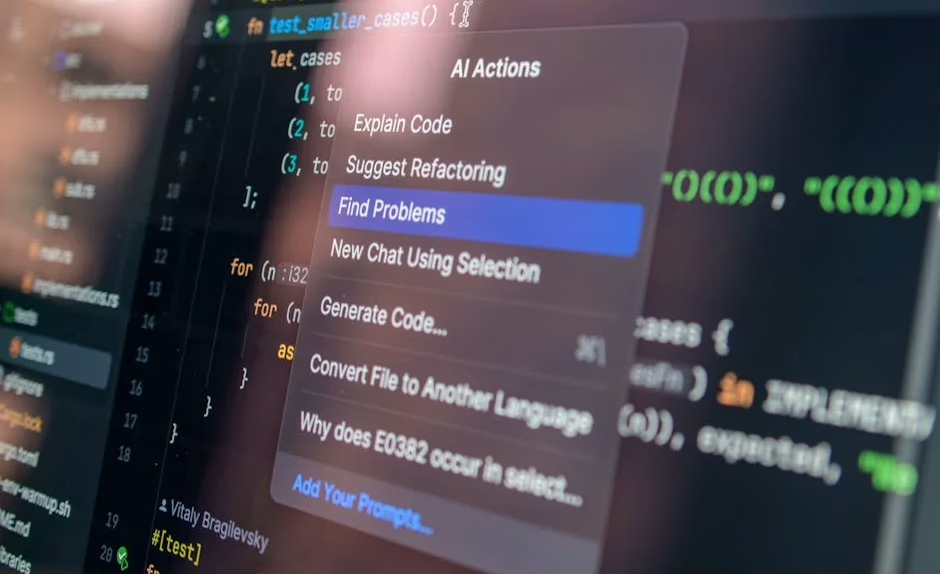

Google CEO Sundar Pichai recently revealed that over 25% of Google’s new code is now AI-generated, mirroring broader enterprise trends where artificial intelligence is rapidly transforming software development workflows. However, new research reveals significant operational challenges as organizations scale AI-powered development, with 43% of AI-generated code changes requiring manual debugging in production environments even after passing quality assurance testing.

The findings emerge as Google continues expanding its AI portfolio through DeepMind research initiatives and enterprise-focused Gemini deployments, while organizations grapple with the operational realities of AI-driven development at scale.

Enterprise AI Code Quality Challenges Surface

According to Lightrun’s 2026 State of AI-Powered Engineering Report, which surveyed 200 senior site-reliability and DevOps leaders across large enterprises in the United States, United Kingdom, and European Union, the infrastructure designed to catch AI-generated mistakes is significantly lagging behind AI’s capacity to produce code.

The survey revealed that zero percent of engineering leaders could verify an AI-suggested fix with just one redeploy cycle. Instead, 88% of organizations reported needing two to three deployment cycles, while 11% required four to six cycles to successfully implement AI-generated code changes.
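
As a rough illustration of what those shares imply, the sketch below bounds the average number of redeploy cycles per AI-suggested fix. It uses only the two percentages quoted above. The bucket edges are taken at face value, and the 1% of respondents not accounted for in the quoted figures is excluded, which is an assumption of this sketch rather than something the report states.

```python
# Shares quoted from the survey, keyed by (min_cycles, max_cycles).
# The two buckets sum to 99%; the remaining 1% is not broken out in the text,
# so it is left out here (an assumption of this sketch).
reported = {(2, 3): 0.88, (4, 6): 0.11}


def mean_cycle_bounds(buckets):
    """Return (low, high) bounds on mean redeploy cycles among covered respondents."""
    covered = sum(buckets.values())
    low = sum(lo * share for (lo, _), share in buckets.items()) / covered
    high = sum(hi * share for (_, hi), share in buckets.items()) / covered
    return low, high


low, high = mean_cycle_bounds(reported)
print(f"Mean redeploy cycles per AI-suggested fix: between {low:.2f} and {high:.2f}")
```

Under these assumptions the average lands between roughly two and a bit over three cycles, so each AI-suggested fix costs at least one extra deployment beyond the single-cycle ideal.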

“The 0% figure signals that engineering is hitting a trust wall with AI adoption,” said Or Maimon, Lightrun’s chief business officer. This trust deficit has significant implications for enterprise IT leaders evaluating AI development tools and establishing governance frameworks for AI-generated code.

The AIOps market, encompassing platforms and services designed to manage AI-driven operations, currently stands at $18.95 billion in 2026 and is projected to reach $37.79 billion by 2031, indicating substantial enterprise investment despite operational challenges.
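
The two market figures imply a compound annual growth rate, which can be checked with a few lines of arithmetic. This is a minimal sketch: the function name `implied_cagr` is illustrative, and the five-year span assumes the 2026 figure is the starting point and 2031 the end point.

```python
def implied_cagr(start_value, end_value, years):
    """Compound annual growth rate implied by a start value, an end value, and a span in years."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures from the text, in billions of US dollars.
rate = implied_cagr(18.95, 37.79, 2031 - 2026)
print(f"Implied CAGR: {rate:.1%}")
```

In other words, the projection amounts to the market roughly doubling over five years.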

Google’s Internal AI Adoption Patterns Questioned

Despite Google’s public emphasis on AI integration, its internal adoption patterns may mirror broader industry trends, according to veteran programmer Steve Yegge’s recent observations. A viral post on X suggested that Google’s engineering teams follow a typical 20%-60%-20% adoption split: 20% of engineers avoid AI tools entirely, 60% use basic chat and coding assistants, and only 20% use advanced agentic AI workflows.

Google DeepMind CEO Demis Hassabis and other company leaders have pushed back against these characterizations, though the debate highlights ongoing challenges in enterprise AI adoption even within organizations developing the technology.

For enterprise IT decision-makers, this internal dynamic reflects common organizational patterns where AI tool adoption varies significantly across teams and skill levels. Successful enterprise AI strategies require addressing this adoption curve through training, governance frameworks, and gradual implementation approaches.

DeepMind’s Strategic Enterprise Focus

Google DeepMind continues to advance research initiatives with potential enterprise applications, including the recent hiring of philosophers to study machine consciousness and artificial general intelligence (AGI). While these research directions may seem abstract, they address fundamental questions about AI reliability and decision-making that bear directly on enterprise deployment strategies.

The lab’s definition of AGI as “AI that’s at least as capable as humans at most cognitive tasks” differs from that of competitors such as OpenAI, which focuses on economic productivity metrics. For enterprise leaders, these definitional differences matter when evaluating long-term AI investment strategies and vendor relationships.

Key enterprise considerations for DeepMind technologies include:

- Scalability frameworks for deploying advanced AI models across enterprise infrastructure

- Integration capabilities with existing enterprise software stacks

- Compliance and governance features for regulated industries

- Cost modeling for compute-intensive AI workloads

Gemini and Bard Enterprise Integration Challenges

Google’s Gemini platform represents the company’s primary enterprise AI offering, building on earlier Bard capabilities and PaLM language models. However, enterprise adoption faces technical and organizational hurdles beyond basic functionality.

Industry analysis suggests that AI agents—autonomous systems performing multi-step tasks—remain in early development stages despite significant marketing emphasis. Enterprise IT leaders must distinguish between current capabilities and future promises when evaluating Google’s AI portfolio.

Critical enterprise evaluation criteria include:

- Security and data privacy controls for sensitive enterprise data

- API reliability and uptime guarantees for business-critical applications

- Integration complexity with existing identity management and security systems

- Total cost of ownership including compute, licensing, and operational overhead

Waymo’s Enterprise Lessons for AI Deployment

Google’s Waymo autonomous vehicle initiative offers valuable lessons for enterprise AI deployment strategies. The project’s decade-long development timeline and emphasis on safety-critical validation processes provide a framework for enterprise AI governance.

Waymo’s approach emphasizes extensive testing, gradual rollouts, and robust monitoring systems—principles directly applicable to enterprise AI implementations. Organizations can apply similar methodologies when deploying AI-generated code or autonomous business processes.

Enterprise AI deployment best practices from Waymo include:

- Staged rollout processes with clear success metrics

- Comprehensive monitoring and rollback capabilities

- Cross-functional governance teams including legal, security, and operations

- Continuous validation against business requirements and compliance standards
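
The first two practices in the list above, staged rollouts with clear success metrics and monitoring with rollback, can be sketched in a few lines. This is a hypothetical illustration, not Waymo's or Google's actual process: the `Stage` class, the `run_rollout` function, the stage names, and the error-rate thresholds are all assumptions made for the example, and the measurements are simulated rather than read from a real monitoring system.

```python
from dataclasses import dataclass


@dataclass
class Stage:
    name: str
    traffic_share: float   # fraction of traffic exposed at this stage
    max_error_rate: float  # success metric: highest tolerated error rate


def run_rollout(stages, observe_error_rate):
    """Advance stage by stage; roll back at the first stage that misses its metric.

    observe_error_rate is a caller-supplied callable (hypothetical here) that
    returns the error rate measured while the given stage was live.
    """
    completed = []
    for stage in stages:
        error_rate = observe_error_rate(stage)
        if error_rate > stage.max_error_rate:
            return {"status": "rolled_back", "failed_stage": stage.name, "completed": completed}
        completed.append(stage.name)
    return {"status": "fully_deployed", "failed_stage": None, "completed": completed}


# Illustrative stages and thresholds, not values from the article.
stages = [
    Stage("canary", 0.01, 0.02),
    Stage("partial", 0.25, 0.01),
    Stage("full", 1.00, 0.01),
]

# Simulated measurements standing in for a real monitoring system.
simulated = {"canary": 0.005, "partial": 0.03, "full": 0.004}
result = run_rollout(stages, lambda stage: simulated[stage.name])
print(result)
```

In this simulated run the canary stage passes, the partial stage exceeds its threshold, and the rollout stops there instead of reaching full deployment. The point of the design is that each stage has an explicit, checkable success metric before exposure widens.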

What This Means

The current state of Google’s AI ecosystem reflects broader enterprise challenges in scaling artificial intelligence beyond pilot projects. While companies like Google and Microsoft report significant percentages of AI-generated code, operational realities reveal substantial debugging and validation overhead that organizations must factor into their AI strategies.

Enterprise IT leaders should approach AI adoption with realistic expectations about current limitations while building infrastructure for long-term success. The 43% debugging rate for AI-generated code suggests that organizations need robust testing, monitoring, and rollback capabilities before deploying AI tools at scale.

Google’s multi-faceted AI approach through DeepMind research, Gemini enterprise tools, and real-world applications like Waymo provides a comprehensive platform for enterprise evaluation. However, success requires careful assessment of organizational readiness, technical infrastructure, and governance frameworks rather than rushing to adopt the latest AI capabilities.

FAQ

Q: What percentage of Google’s code is AI-generated?

A: Google CEO Sundar Pichai stated that over 25% of Google’s new code is now AI-generated, similar to Microsoft’s reported 30% figure.

Q: How reliable is AI-generated code in production environments?

A: Lightrun’s 2026 State of AI-Powered Engineering Report indicates that 43% of AI-generated code changes require manual debugging in production, even after passing quality assurance testing.

Q: What should enterprises consider when evaluating Google’s AI tools?

A: Key factors include security controls, API reliability, integration complexity, total cost of ownership, and organizational readiness for AI adoption at scale.
