DeepSeek released its V4 model on Tuesday, delivering near state-of-the-art performance at approximately one-sixth the cost of OpenAI’s GPT-5.5 and Anthropic’s Claude Opus 4.7. The 1.6-trillion-parameter Mixture-of-Experts (MoE) model is available free under the MIT License, marking what industry observers call the “second DeepSeek moment.”

According to VentureBeat, DeepSeek-V4 matches or exceeds the performance of leading closed-source systems while offering dramatic cost advantages through its API pricing structure.

Architecture Breakthrough: Mixture-of-Experts at Scale

DeepSeek-V4 uses a Mixture-of-Experts architecture with 1.6 trillion total parameters, of which only a subset activates for any given input. This gives the model the capacity of a very large network while keeping the compute spent per request closer to that of a much smaller one.

DeepSeek AI researcher Deli Chen described the release on X as a “labor of love” that arrived 484 days after the V3 launch, emphasizing that “AGI belongs to everyone.” The model represents a significant leap from DeepSeek’s earlier R1 model, which gained international attention in January 2025.

The MoE design allows V4 to achieve frontier-level capabilities while using computational resources more efficiently than dense transformer models of equivalent performance. This architectural choice directly enables the dramatic cost reductions compared to proprietary alternatives.
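To make the routing idea concrete, here is a minimal sketch of generic top-k expert gating in plain Python. It is not DeepSeek's actual router, whose details are not described here; the function names (`route_top_k`, `moe_forward`), the toy experts, and the gate scores are all assumptions chosen for illustration.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(gate_logits, k):
    """Pick the k experts with the highest gate scores and normalize their weights."""
    ranked = sorted(range(len(gate_logits)), key=lambda i: gate_logits[i], reverse=True)
    chosen = ranked[:k]
    weights = softmax([gate_logits[i] for i in chosen])
    return list(zip(chosen, weights))

def moe_forward(x, experts, gate_logits, k=2):
    """Weighted sum of the selected experts' outputs; unselected experts never run."""
    out = [0.0] * len(x)
    for idx, weight in route_top_k(gate_logits, k):
        y = experts[idx](x)
        out = [o + weight * yi for o, yi in zip(out, y)]
    return out

# Hypothetical toy setup: four "experts" that simply scale the input.
calls = []  # records which experts were actually evaluated

def make_expert(name, scale):
    def expert(x):
        calls.append(name)
        return [scale * v for v in x]
    return expert

experts = [make_expert(i, s) for i, s in enumerate((1.0, 2.0, 3.0, 4.0))]
gate_logits = [0.1, 2.0, -1.0, 1.5]  # made-up gate scores for one input

output = moe_forward([1.0, -2.0], experts, gate_logits, k=2)
print(output, calls)
```

Only two of the four toy experts run for this input. That is where the efficiency comes from: the total parameter count grows with the number of experts, while the work done per input grows only with `k`.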

Hardware Innovation Drives Efficiency Gains

Google unveiled its eighth-generation Tensor Processing Units (TPUs) designed specifically for the “agentic era” of AI development. According to Google’s blog, the TPU 8t focuses on massive model training while the TPU 8i specializes in high-speed inference.

These custom chips represent a decade of development aimed at handling the complex, iterative demands of AI agents. The TPU 8t delivers significant improvements in training efficiency for large language models, while the TPU 8i provides low-latency inference capabilities essential for real-time AI applications.

NVIDIA’s parallel development of Blackwell and Vera Rubin systems supports this hardware evolution. Forbes reported that NVIDIA CEO Jensen Huang projects at least $1 trillion in demand for these systems through 2027, doubling the company’s previous $500 billion estimate from 2025.

Training Efficiency Improvements

The new hardware architectures target improvements in four areas:

- Power efficiency: Custom silicon optimized for transformer operations

- Memory bandwidth: Enhanced for large parameter models like DeepSeek-V4

- Parallel processing: Designed for distributed training across thousands of chips

- Inference speed: Specialized chips for real-time AI agent interactions

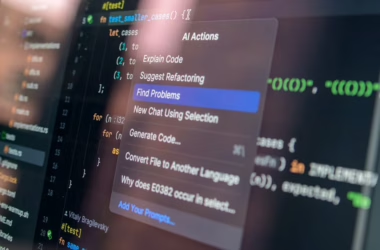

Privacy-First Architecture with OpenAI’s Privacy Filter

OpenAI launched Privacy Filter, a 1.5-billion-parameter open-source model that removes personally identifiable information from datasets before cloud processing. Released on Hugging Face under the Apache 2.0 license, the tool addresses enterprise concerns about data exposure during AI training and inference.

Privacy Filter is derived from OpenAI’s gpt-oss family but works as a bidirectional token classifier: it labels each token using context from both the text before it and the text after it. This can make PII detection more accurate than with a conventional autoregressive model, which sees only the preceding text when it processes each token.
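The difference between the two approaches comes down to which tokens are visible when a given token is processed. The sketch below illustrates that with attention-style visibility masks. It is a generic illustration, not a description of Privacy Filter's internals; the helper names and the sample sentence are assumptions.

```python
def causal_mask(n):
    """mask[i][j] is True when token i may see token j (itself and earlier tokens only)."""
    return [[j <= i for j in range(n)] for i in range(n)]

def bidirectional_mask(n):
    """Every token may see every other token, to its left and to its right."""
    return [[True] * n for _ in range(n)]

def visible_context(tokens, mask, i):
    """Tokens that position i is allowed to use when it is classified."""
    return [tok for j, tok in enumerate(tokens) if mask[i][j]]

# Hypothetical sample sentence, split on whitespace for simplicity.
tokens = ["Call", "Jordan", "at", "555-0100"]
n = len(tokens)

left_only = visible_context(tokens, causal_mask(n), 1)
both_sides = visible_context(tokens, bidirectional_mask(n), 1)
print(left_only)
print(both_sides)
```

When deciding whether "Jordan" is a name, the left-to-right view has only "Call" and "Jordan" to work with, while the bidirectional view also sees "at 555-0100", which is useful evidence that a person is being referred to.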

The model runs on standard laptops or directly in web browsers, providing enterprises with local-first privacy infrastructure. This approach eliminates the need to send sensitive data to cloud servers for sanitization, addressing a critical bottleneck in enterprise AI adoption.
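A token classifier produces one label per token, and a local sanitization step then has to turn those labels into redacted text before anything leaves the machine. The following is a minimal sketch of that last step only. The label names (`O`, `NAME`, `PHONE`), the `redact` helper, and the hard-coded labels are hypothetical stand-ins, not Privacy Filter's real output format.

```python
def redact(tokens, labels, keep="O"):
    """Replace each run of same-labeled PII tokens with a single [LABEL] placeholder."""
    out = []
    prev = keep
    for tok, lab in zip(tokens, labels):
        if lab == keep:
            out.append(tok)          # not PII: pass through unchanged
        elif lab != prev:
            out.append(f"[{lab}]")   # first token of a new PII span
        # later tokens of the same span are dropped
        prev = lab
    return " ".join(out)

# Hypothetical classifier output for one sentence.
tokens = ["Call", "Jordan", "Lee", "at", "555-0100"]
labels = ["O", "NAME", "NAME", "O", "PHONE"]

sanitized = redact(tokens, labels)
print(sanitized)
```

Because the function works on plain lists, it runs anywhere the classifier does, which fits the local-first workflow described above.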

Enterprise Adoption Accelerates Across Industries

Google’s blog documented 1,302 real-world generative AI use cases from leading organizations, demonstrating widespread production deployment of agentic AI systems. The majority showcase applications built with tools like Gemini Enterprise, Gemini CLI, and Google’s AI Hypercomputer infrastructure.

This adoption surge reflects what Google describes as “the fastest technological transformation we’ve seen,” driven primarily by customer demand rather than vendor push. Organizations across virtually every industry vertical now deploy meaningful AI applications, from healthcare diagnostics to financial risk assessment.

The enterprise adoption data reveals several key trends:

- Agentic systems: AI that can reason and act autonomously

- Multi-modal applications: Combining text, image, and voice processing

- Real-time inference: Low-latency applications requiring specialized hardware

- Privacy-preserving workflows: On-device processing for sensitive data

Cost Economics Transform Competitive Landscape

DeepSeek-V4’s pricing model fundamentally alters the economics of frontier AI deployment. At one-sixth the cost of comparable proprietary models, organizations can now access state-of-the-art capabilities without the premium pricing that previously limited adoption to well-funded enterprises.
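A quick worked example shows what a one-sixth price ratio means for a budget. The figures below are hypothetical placeholders, not published prices for any provider; only the one-sixth ratio comes from the reporting above.

```python
def monthly_cost(tokens_millions, price_per_million):
    """Cost in USD for a month's token volume at a flat per-million-token price."""
    return tokens_millions * price_per_million

# Hypothetical inputs, for illustration only.
proprietary_price = 30.0               # USD per million tokens (made up)
open_price = proprietary_price / 6     # the one-sixth ratio cited above
volume = 500                           # million tokens per month (made up)

proprietary_bill = monthly_cost(volume, proprietary_price)
open_bill = monthly_cost(volume, open_price)
savings = proprietary_bill - open_bill

print(f"proprietary: ${proprietary_bill:,.0f}")
print(f"open model:  ${open_bill:,.0f}")
print(f"savings:     ${savings:,.0f}")
```

Whatever the real prices are, the same ratio means roughly five-sixths of the API bill disappears at equal volume, before accounting for any self-hosting or integration costs.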

This cost advantage stems from several factors: the efficient MoE design, open-source distribution that eliminates licensing fees, and optimization for standard hardware rather than specialized cloud infrastructure.

The pricing pressure falls squarely on proprietary model providers such as OpenAI and Anthropic, which must now justify premium pricing when open alternatives deliver comparable performance at dramatically lower cost.

What This Means

DeepSeek-V4’s release represents a fundamental shift in AI economics, proving that frontier-level performance no longer requires proprietary models or premium pricing. The combination of architectural innovation, specialized hardware, and open-source distribution creates a new baseline for AI capabilities.

This development accelerates enterprise adoption by removing cost barriers that previously limited advanced AI to well-funded organizations. The availability of privacy-preserving tools like OpenAI’s Privacy Filter further addresses enterprise concerns about data security.

The hardware innovations from Google and NVIDIA indicate that the industry is preparing for sustained growth in AI deployment, with specialized chips optimized for both training and inference workloads. This infrastructure investment supports the continued development of more capable and efficient AI systems.

FAQ

How does DeepSeek-V4 achieve comparable performance at lower cost?

DeepSeek-V4 uses a Mixture-of-Experts architecture that activates only a subset of its 1.6 trillion parameters for each request, reducing computational requirements while maintaining performance. The open-source distribution eliminates licensing fees, and optimization for standard hardware reduces infrastructure costs.

What makes Google’s TPU 8t and 8i different from previous generations?

The eighth-generation TPUs are purpose-built for agentic AI workloads, with the 8t specialized for massive model training and the 8i optimized for low-latency inference. They represent a decade of development specifically targeting the iterative, complex demands of AI agents rather than general-purpose computing.

Can OpenAI’s Privacy Filter handle enterprise-scale data processing?

Privacy Filter is designed to run on standard laptops or in web browsers, so sensitive data can be sanitized locally without being sent to the cloud. At 1.5 billion parameters, the model is small enough to deploy on ordinary machines, though throughput for high-volume sanitization workflows will depend on the hardware it runs on.