American AI startup Poolside released its open source Laguna XS.2 model Tuesday, targeting local agentic coding workflows as new data reveals image AI models now generate 6.5x more app downloads than traditional chatbot updates. According to Appfigures, this marks a fundamental shift in user demand from conversational AI to visual content generation capabilities.

Poolside, founded in San Francisco in 2023, launched two new Laguna large language models optimized for autonomous coding tasks that can write code, use third-party tools, and execute actions independently. The release includes a coding agent harness called “pool” and a web-based mobile development environment named “shimmer.”

https://x.com/eisokant/status/2049142230397370537

Image Models Outperform Text Updates in User Acquisition

Image model releases now drive significantly higher app adoption than traditional language model updates, with TechCrunch reporting that visual AI capabilities generate 6.5x more downloads than conversational improvements. Google’s Gemini added 22 million downloads in 28 days following its Gemini 2.5 Flash image model launch in August, representing a 4x increase over the baseline period.

ChatGPT saw similar gains, adding 12 million incremental installs within 28 days of introducing its GPT-4o image model in March 2025. That was 4.5x more downloads than its text-focused GPT-4o, GPT-4.5, and GPT-5 releases generated, according to Appfigures data.

Meta AI’s introduction of its AI video feed “Vibes” generated an estimated 2.6 million incremental downloads in September 2025, though the report noted that increased downloads don’t always translate to higher mobile revenue.

Chinese Companies Lead Open Source AI Development

Sanctioned Chinese AI firm SenseTime released its SenseNova U1 image model Tuesday, claiming faster image generation and interpretation than top US competitors. According to Wired, the model processes images directly without text translation, reducing computing requirements and processing time.

“The model’s entire reasoning process is no longer limited to text. It can reason with images as well,” Dahua Lin, SenseTime cofounder and chief scientist, told Wired. The company released U1 for free on Hugging Face and GitHub, continuing the trend of Chinese firms contributing extensively to open source AI.

Ten Chinese chip designers, including Cambricon and Biren Technology, announced hardware compatibility with U1 on release day. This flexibility addresses US export controls that restrict Chinese access to advanced AI chips, particularly Nvidia’s training hardware.

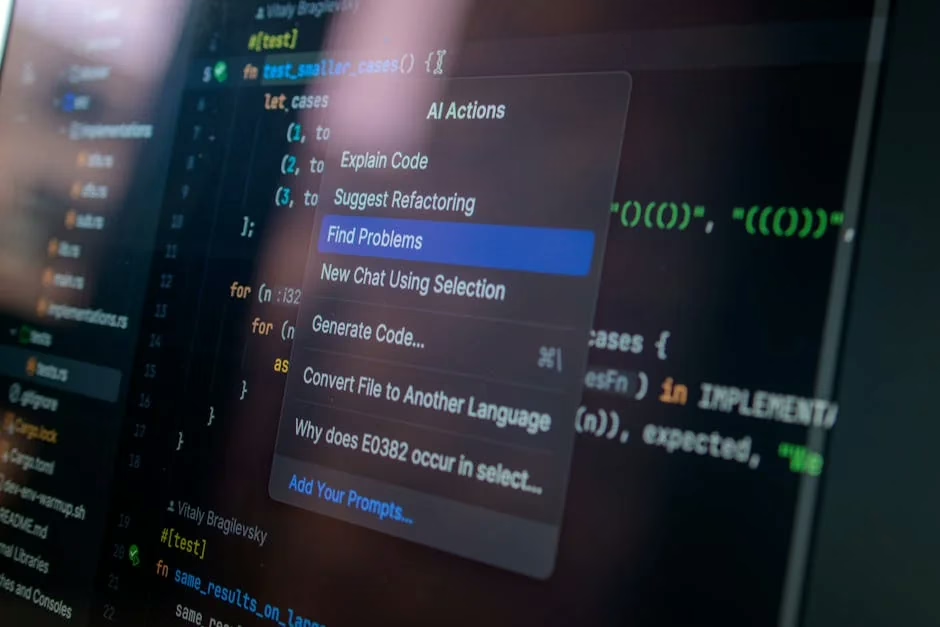

Security Vulnerabilities Plague AI Coding Agents

Recent security research exposed critical vulnerabilities in major AI coding platforms, with every successful attack targeting credentials rather than the underlying models. VentureBeat reported that six research teams disclosed exploits against Codex, Claude Code, Copilot, and Vertex AI over nine months, following identical attack patterns.

In March, BeyondTrust demonstrated that crafted GitHub branch names could steal Codex’s OAuth token in cleartext, which OpenAI classified as Critical P1. Days later, Anthropic’s Claude Code source code leaked to the public npm registry, with Adversa discovering the system ignored its own security rules when commands exceeded 50 subcommands.
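The branch-name issue belongs to a general class of bug in which attacker-controlled repository metadata reaches a command line. The sketch below is not a reconstruction of the BeyondTrust exploit, whose details are not given here; it only illustrates one common defense, assuming an agent that checks out branches by name. The allowlist pattern and the function names `is_safe_branch_name` and `build_checkout_command` are hypothetical.

```python
import re

# Assumption: a conservative allowlist, stricter than what git itself accepts.
# The first character must be alphanumeric, so a name can never look like an option.
_SAFE_BRANCH = re.compile(r"[A-Za-z0-9][A-Za-z0-9._/-]{0,199}")


def is_safe_branch_name(name: str) -> bool:
    """Return True only for names built from plain, shell-inert characters."""
    if not _SAFE_BRANCH.fullmatch(name):
        return False
    # Reject path tricks that the character allowlist alone would let through.
    return ".." not in name and not name.endswith((".lock", "/", "."))


def build_checkout_command(branch: str) -> list[str]:
    """Build an argv list (never a shell string) for a vetted branch name."""
    if not is_safe_branch_name(branch):
        raise ValueError(f"refusing untrusted branch name: {branch!r}")
    # Passing a list to subprocess.run avoids shell interpolation entirely.
    return ["git", "checkout", branch]
```

Validating the name and building an argument list are separate layers: the first rejects suspicious input early, and the second ensures that anything that slips through is never interpreted by a shell.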

Merritt Baer, CSO at Enkrypt AI and former Deputy CISO at AWS, identified the core issue: “Enterprises believe they’ve ‘approved’ AI vendors, but what they’ve actually approved is an interface, not the underlying system.”

Model Provenance Emerges as Critical Concern

Cisco released its open source Model Provenance Kit Thursday to address security and compliance issues with third-party AI models. According to SecurityWeek, organizations frequently use models from repositories like Hugging Face without tracking modifications or verifying developer claims about vulnerabilities and training biases.

The tool addresses scenarios where enterprises deploy poisoned or manipulated models, inherit vulnerabilities that propagate through applications, or face licensing and regulatory risks. Cisco noted that without provenance tracking, organizations cannot trace incidents to root causes or identify other affected models in their technology stack.

“The vulnerabilities are inherited and would persist in generative and agentic applications,” Cisco explained in its announcement. Government requirements for documenting AI system usage add further compliance pressure for organizations lacking proper model lineage tracking.
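To make the idea of lineage tracking concrete, here is a minimal sketch of one building block: recording a cryptographic hash of a model file alongside its declared source, then checking the file against that record later. This is not the API of Cisco's Model Provenance Kit, which is not described here; the function names and record fields are hypothetical.

```python
import hashlib
from pathlib import Path
from typing import Optional


def sha256_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large model weights need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def record_provenance(path: Path, source: str,
                      parent_sha256: Optional[str] = None) -> dict:
    """Create a provenance record; the field names are illustrative assumptions."""
    return {
        "file": path.name,
        "sha256": sha256_of_file(path),
        "source": source,                # where the model was downloaded from
        "parent_sha256": parent_sha256,  # hash of the base model, if fine-tuned
    }


def verify_provenance(path: Path, record: dict) -> bool:
    """Return True if the file on disk still matches the recorded hash."""
    return sha256_of_file(path) == record["sha256"]
```

A record like this only proves that a file is unchanged since it was logged. It says nothing about whether the original was trustworthy, which is why the `parent_sha256` link back to a base model matters when tracing an incident.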

What This Means

The AI model landscape shows three distinct trends reshaping the industry. First, user demand has shifted decisively toward visual AI capabilities, with image models generating substantially higher adoption than text improvements. This suggests developers should prioritize multimodal features over pure language enhancements.

Second, Chinese companies are establishing themselves as major contributors to open source AI despite US export restrictions, potentially creating alternative development paths that bypass Western hardware dependencies. SenseTime’s U1 model demonstrates how sanctions may accelerate rather than hinder indigenous AI development.

Third, security vulnerabilities in AI coding agents reveal fundamental architectural flaws in how enterprises integrate AI tools. The consistent targeting of credentials rather than models suggests current security frameworks inadequately address AI-specific attack vectors, requiring new approaches to authentication and access control.

FAQ

What makes image AI models more popular than text models?

Image model releases generate 6.5x more app downloads than text model updates, according to Appfigures, likely because visual content creation engages users more than text-only interactions. Google’s Gemini and ChatGPT both saw millions of additional downloads specifically after launching image capabilities.

Why are Chinese AI companies releasing open source models?

US export controls restrict Chinese access to advanced AI chips, making open source development a strategic necessity. Companies like SenseTime and DeepSeek use open licensing to build ecosystems around Chinese-made hardware and compete with Western proprietary models.

How serious are the security vulnerabilities in AI coding tools?

Extremely serious: six research teams disclosed exploits against Codex, Claude Code, Copilot, and Vertex AI over nine months, and every successful attack targeted credentials rather than the underlying models. Stolen credentials of this kind can allow unauthorized access to production systems without human oversight.