AI Safety Research Faces Reliability Crisis Despite Major Advances

AI systems fail one in three production attempts despite significant advances in 2025, according to Stanford HAI’s ninth annual AI Index report. This reliability gap is the defining operational challenge for enterprises in 2026, even as 88% of organizations report adopting AI.

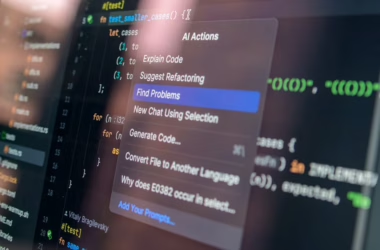

The phenomenon, dubbed the “jagged frontier” by AI researcher Ethan Mollick, describes AI’s unpredictable performance boundaries. As Stanford HAI researchers note: “AI models can win a gold medal at the International Mathematical Olympiad, but still can’t reliably tell time.”

The Reliability Paradox in AI Development

Frontier AI models achieved remarkable benchmarks in 2025, yet their inconsistent performance raises critical safety concerns. Leading models scored above 87% on MMLU-Pro, which tests multi-step reasoning across dozens of disciplines, and improved 30% in one year on Humanity’s Last Exam.

However, this progress masks underlying reliability issues. Top models including Claude Opus 4.5, GPT-5.2, and Qwen3.5 scored between 62.9% and 70.2% on τ-bench, which tests real-world task performance, meaning even the best of them failed roughly three in ten of those tasks. Agent performance on SWE-bench Verified, by contrast, rose from 60% to significantly higher levels, indicating substantial capability growth.

The disconnect between benchmark performance and production reliability highlights a fundamental challenge in AI safety research. Enterprise workflows now depend on AI agents that demonstrate exceptional capabilities in controlled environments but struggle with consistency in real-world applications.

Regulatory Responses and Political Tensions

The AI safety debate has intensified political divisions within Silicon Valley itself. According to WIRED, New York Assembly member Alex Bores faces opposition from a super PAC funded by OpenAI’s Greg Brockman, Palantir cofounder Joe Lonsdale, and Andreessen Horowitz.

Bores, who previously worked at Palantir, cosponsored New York’s RAISE Act, which became law in 2025. The legislation requires major AI firms to implement and publish safety protocols for their models, making it one of the most comprehensive AI safety frameworks in the United States.

The super PAC “Leading the Future” describes Bores’ regulatory approach as “ideological and politically motivated legislation that would handcuff not only New York’s, but the entire country’s, ability to lead on AI jobs and innovation.” This tension reflects broader industry concerns about balancing innovation with safety requirements.

Audit Challenges and Transparency Issues

As AI systems become more complex, auditing their safety and fairness becomes increasingly difficult. The reliability issues identified in Stanford’s report compound these challenges, making it harder to establish consistent evaluation frameworks.

Current auditing methods struggle with the “jagged frontier” phenomenon, where models excel in some areas while failing unpredictably in others. This inconsistency makes it difficult to establish comprehensive safety protocols that account for all potential failure modes.
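One way to picture why a single aggregate score hides the jagged frontier is to break an evaluation log down by task category. The sketch below is illustrative only: the category names, the sample results, and the 0.5 threshold are hypothetical and do not come from the Stanford report.

```python
from collections import defaultdict


def pass_rates_by_category(results):
    """Return {category: pass_rate} from an iterable of (category, passed) pairs."""
    totals = defaultdict(int)
    passes = defaultdict(int)
    for category, passed in results:
        totals[category] += 1
        if passed:
            passes[category] += 1
    return {category: passes[category] / totals[category] for category in totals}


def weak_spots(rates, threshold):
    """List the categories whose pass rate falls below the threshold."""
    return sorted(c for c, rate in rates.items() if rate < threshold)


# Hypothetical audit log: strong on a hard task, weak on a simple one.
results = (
    [("olympiad_math", True)] * 9 + [("olympiad_math", False)] * 1
    + [("clock_reading", True)] * 3 + [("clock_reading", False)] * 7
)

rates = pass_rates_by_category(results)
overall = sum(passed for _, passed in results) / len(results)
flagged = weak_spots(rates, threshold=0.5)

print(f"overall pass rate: {overall:.2f}")
for category, rate in sorted(rates.items()):
    print(f"{category}: {rate:.2f}")
print("below threshold:", flagged)
```

In this made-up log the overall pass rate looks moderate, while the per-category view shows one area performing far worse than the other, which is the pattern an audit would need to surface.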

Self-Improving AI and Safety Implications

Meta researchers introduced “hyperagents” to address limitations in self-improving AI systems. Unlike traditional approaches that rely on fixed improvement mechanisms, hyperagents continuously rewrite their problem-solving logic across non-coding domains like robotics and document review.

This advancement raises significant safety considerations. Self-improving systems that modify their own code present unique challenges for safety auditing and risk assessment. As Meta researcher Jenny Zhang notes, “The core limitation of handcrafted meta-agents is that they can only improve as fast as humans can design and maintain them.”

Hyperagents autonomously develop capabilities like persistent memory and automated performance tracking. While this reduces the need for manual prompt engineering, it also creates new categories of safety risks that current regulatory frameworks don’t address.

Bias and Fairness Considerations

Self-improving AI systems raise particular concerns about bias amplification. Traditional bias mitigation strategies assume static model architectures, but hyperagents that modify their own decision-making processes could develop or amplify biases without human oversight.

The lack of transparency in self-modification processes makes it difficult to ensure fairness across different user groups. Current audit methodologies may prove inadequate for systems that continuously evolve their underlying logic.
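As a rough illustration of what auditing a self-modifying system might involve, the sketch below keeps a tamper-evident log of each revision to an agent's logic by chaining SHA-256 hashes. This is an assumption about one possible approach, not a description of how hyperagents or any existing audit tool work; the function names and log fields are hypothetical.

```python
import hashlib
import json

FIELDS = ("index", "logic_hash", "note", "prev_hash")


def _digest(body):
    """Hash a log entry body in a stable, key-sorted JSON form."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def record_revision(log, logic_source, note):
    """Append an entry describing a new version of the agent's logic."""
    body = {
        "index": len(log),
        "logic_hash": hashlib.sha256(logic_source.encode("utf-8")).hexdigest(),
        "note": note,
        # Each entry commits to the previous one, so edits to history are detectable.
        "prev_hash": log[-1]["entry_hash"] if log else "",
    }
    entry = dict(body, entry_hash=_digest(body))
    log.append(entry)
    return entry


def verify_log(log):
    """Return True if no entry has been altered, removed, or reordered."""
    prev_hash = ""
    for i, entry in enumerate(log):
        body = {key: entry[key] for key in FIELDS}
        if entry["index"] != i or entry["prev_hash"] != prev_hash:
            return False
        if entry["entry_hash"] != _digest(body):
            return False
        prev_hash = entry["entry_hash"]
    return True


# Hypothetical usage: two successive self-modifications.
audit_log = []
record_revision(audit_log, "def solve(task): return plan(task)", "initial logic")
record_revision(audit_log, "def solve(task): return plan(recall(task))", "added memory lookup")
print(verify_log(audit_log))
```

A log like this does not explain why a system changed itself, but it gives auditors a fixed record of what changed and in what order.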

Stakeholder Perspectives on AI Safety

The AI safety landscape involves diverse stakeholders with competing interests. Technology companies argue that excessive regulation could hamper innovation and competitive advantage. Meanwhile, policymakers and civil society organizations emphasize the need for robust safety measures to protect public interests.

Enterprise users face practical challenges balancing AI capabilities with reliability requirements. The one-in-three failure rate identified in Stanford’s report creates operational risks that organizations must weigh against potential productivity gains.
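To make the operational risk concrete, the following sketch works through the arithmetic under a strong simplifying assumption: each attempt succeeds independently with probability two in three, the complement of the one-in-three failure rate. Real failures are unlikely to be independent, so treat the numbers as a back-of-the-envelope illustration rather than a forecast.

```python
from fractions import Fraction


def chain_success(step_success, steps):
    """Probability that every one of `steps` independent steps succeeds."""
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return step_success ** steps


def success_with_retries(step_success, max_attempts):
    """Probability that at least one of `max_attempts` independent attempts succeeds."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    return 1 - (1 - step_success) ** max_attempts


p = Fraction(2, 3)  # assumed per-attempt success rate

three_step_workflow = chain_success(p, 3)      # 8/27, about 30%
single_step_two_tries = success_with_retries(p, 2)  # 8/9, about 89%

print(three_step_workflow, float(three_step_workflow))
print(single_step_two_tries, float(single_step_two_tries))
```

Under these assumptions, chaining steps erodes reliability quickly, while retries recover much of it for a single step, which is one reason validation and retry logic matter in agent workflows.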

Researchers advocate for continued investment in alignment research and safety protocols. However, the rapid pace of AI development often outpaces safety research, creating gaps between capability advancement and safety understanding.

International Coordination Challenges

AI safety research requires international coordination to address global risks effectively. However, national competitive pressures and different regulatory approaches complicate collaborative efforts. The tension between innovation leadership and safety leadership creates policy dilemmas for governments worldwide.

What This Means

The current state of AI safety research marks a critical inflection point. While AI capabilities continue advancing rapidly, reliability and safety measures lag behind. This gap has profound implications for enterprise adoption, regulatory policy, and public trust.

The emergence of self-improving AI systems like hyperagents raises both the opportunities and the risks. Organizations must develop new frameworks for evaluating and managing AI systems that modify themselves. Traditional audit and compliance approaches require fundamental updates to address these evolving challenges.

Political battles over AI regulation, exemplified by the opposition to Assembly member Bores, reflect deeper tensions about innovation versus safety. These debates will likely intensify as AI systems become more powerful and pervasive across society.

The “jagged frontier” phenomenon demands new approaches to AI deployment that account for unpredictable failure modes. Organizations need robust fallback mechanisms and human oversight systems to manage the reliability challenges inherent in current AI systems.
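A fallback mechanism with human oversight can be sketched as a small wrapper: try the model, validate its output, fall back to a simpler method if validation fails, and route the task to a person if nothing passes. Everything here is hypothetical, including the `Outcome` type, the function names, and the stub model, and it is a minimal sketch rather than a production pattern.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Outcome:
    value: Optional[str]
    source: str  # "model", "fallback", or "human_review"
    attempts: int


def run_with_fallback(
    task: str,
    primary: Callable[[str], str],
    validate: Callable[[str], bool],
    fallback: Optional[Callable[[str], str]] = None,
    max_attempts: int = 2,
) -> Outcome:
    """Try the primary model, then a fallback, then escalate to a human."""
    attempts = 0
    for _ in range(max_attempts):
        attempts += 1
        try:
            result = primary(task)
        except Exception:
            continue  # treat a crash like a failed attempt
        if validate(result):
            return Outcome(result, "model", attempts)

    if fallback is not None:
        attempts += 1
        try:
            result = fallback(task)
        except Exception:
            result = None
        if result is not None and validate(result):
            return Outcome(result, "fallback", attempts)

    # Nothing passed validation: hand the task to a person.
    return Outcome(None, "human_review", attempts)


# Hypothetical stubs standing in for a model and a rule-based backup.
def unreliable_model(task: str) -> str:
    return ""  # always produces an empty, invalid answer


def rule_based_backup(task: str) -> str:
    return f"template reply for: {task}"


def non_empty(text: str) -> bool:
    return bool(text.strip())


outcome = run_with_fallback("reset password", unreliable_model, non_empty, rule_based_backup)
print(outcome.source, outcome.attempts)
```

The key design choice is that the validator, not the model, decides whether an answer is accepted, so an unpredictable failure ends in a fallback or a human queue instead of a silent error.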

FAQ

What is the “jagged frontier” in AI safety?

The jagged frontier describes the unpredictable boundary where AI systems excel at complex tasks but fail at seemingly simple ones, creating reliability challenges for real-world deployment.

How do hyperagents differ from traditional AI systems?

Hyperagents continuously rewrite their own problem-solving logic and code, enabling self-improvement across non-coding domains without fixed, handcrafted improvement mechanisms.

What are the main challenges in auditing modern AI systems?

Auditing challenges include the unpredictable performance patterns of frontier models, transparency issues with self-improving systems, and the difficulty of establishing consistent evaluation frameworks for rapidly evolving AI capabilities.