Frontier AI models are failing one in three production attempts despite significant performance improvements, creating a critical reliability gap that threatens enterprise adoption and highlights urgent safety concerns. According to Stanford HAI’s ninth annual AI Index report, this “jagged frontier” phenomenon represents the defining operational challenge for IT leaders in 2026, even as enterprise AI adoption reaches 88%.

The reliability crisis comes at a time when AI systems are increasingly embedded in real-world workflows, making safety research and responsible deployment practices more crucial than ever. Meanwhile, political tensions around AI regulation are intensifying, with Silicon Valley executives and investors spending millions to oppose regulatory advocates like New York Assembly member Alex Bores, who champions rigorous AI safety protocols.

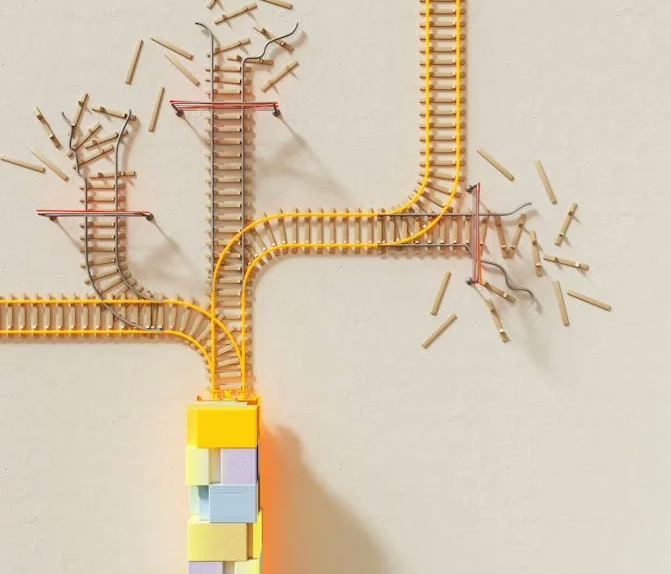

The Jagged Frontier Problem

The term “jagged frontier,” coined by Wharton professor Ethan Mollick and his coauthors, describes the uneven, hard-to-predict boundary between tasks where AI excels and tasks where it suddenly fails. Stanford HAI researchers illustrate the paradox starkly: “AI models can win a gold medal at the International Mathematical Olympiad, but still can’t reliably tell time.”

This inconsistency poses significant safety and accountability challenges for organizations deploying AI systems. When models perform exceptionally on complex benchmarks but fail on seemingly simple tasks, it becomes difficult to predict where critical failures might occur in production environments.

The reliability gap is particularly concerning for high-stakes applications where consistent performance is essential. Enterprise leaders must now grapple with systems that demonstrate superhuman capabilities in some areas while exhibiting unpredictable failures in others, creating new categories of operational risk that traditional safety frameworks struggle to address.
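To make the idea concrete, here is a minimal Python sketch of how a single headline score can hide a jagged frontier. It assumes a hypothetical evaluation log of (task category, passed) records; the category names, counts, and the 0.9 reliability threshold are invented for illustration and are not AI Index data. Breaking results out by category exposes a weak spot that the aggregate pass rate smooths over.

```python
from collections import defaultdict

# Hypothetical evaluation log: (task_category, passed) pairs.
# Categories and outcomes are invented for illustration only.
results = [
    ("olympiad_math", True), ("olympiad_math", True), ("olympiad_math", True),
    ("olympiad_math", True), ("olympiad_math", True), ("olympiad_math", True),
    ("read_clock", False), ("read_clock", True), ("read_clock", False),
]


def pass_rates(records):
    """Return the overall pass rate and a dict of per-category pass rates."""
    totals = defaultdict(int)
    passes = defaultdict(int)
    for category, passed in records:
        totals[category] += 1
        passes[category] += int(passed)
    overall = sum(passes.values()) / sum(totals.values())
    per_category = {c: passes[c] / totals[c] for c in totals}
    return overall, per_category


def weak_spots(per_category, threshold=0.9):
    """List categories whose pass rate falls below a hypothetical threshold."""
    return sorted(c for c, rate in per_category.items() if rate < threshold)


overall, per_category = pass_rates(results)
print(f"overall: {overall:.2f}")
for category, rate in sorted(per_category.items()):
    print(f"{category}: {rate:.2f}")
print("below threshold:", weak_spots(per_category))
```

Run as-is, the sketch reports an overall pass rate of 0.78 while the clock-reading category sits at 0.33. A per-category breakdown is only one possible check, and the threshold is a placeholder rather than a recommended standard.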

Performance Gains Mask Fundamental Safety Issues

Despite the reliability concerns, frontier models achieved remarkable improvements in 2025. Leading models scored above 87% on MMLU-Pro, which tests multi-step reasoning across more than a dozen disciplines. Top models, including Claude Opus 4.5, GPT-5.2, and Qwen3.5, scored between 62.9% and 70.2% on τ-bench, which evaluates how well agents complete realistic tasks that require tool use and interaction with a simulated user.

However, these impressive benchmark scores obscure deeper bias and fairness concerns. The focus on performance metrics often overshadows critical questions about how these systems make decisions, whether they serve all users equitably, and what happens when they fail in sensitive contexts.

Key performance improvements include:

- 30% improvement on Humanity’s Last Exam across specialized fields

- Agent performance on SWE-bench Verified rising substantially from 60%

- Model accuracy on the GAIA benchmark increasing from 20% to 74.5%

Yet these advances come with increased opacity, making it harder to audit decision-making processes and ensure algorithmic accountability.

Regulatory Battles and Industry Resistance

The AI safety debate has moved beyond technical circles into heated political territory. Alex Bores, a former Palantir employee turned New York Assembly member, has become a lightning rod for industry opposition due to his support for rigorous AI regulation.

Bores cosponsored New York’s RAISE Act, which became law in 2025 and requires major AI firms to implement and publish safety protocols for their models. This legislation represents a significant step toward transparency and accountability in AI deployment.

However, a super PAC called Leading the Future—funded by OpenAI’s Greg Brockman, Palantir cofounder Joe Lonsdale, and Andreessen Horowitz—has launched an aggressive campaign against Bores’ congressional run. The group argues that his regulatory approach constitutes “ideological and politically motivated legislation that would handcuff the country’s ability to lead on AI jobs and innovation.”

This conflict illustrates the fundamental tension between rapid AI advancement and responsible deployment practices. Industry leaders often frame safety regulations as innovation barriers, while advocates argue that proper oversight is essential for long-term societal benefit.

Self-Improving AI and Amplified Risks

Meta researchers have introduced “hyperagents,” self-improving AI systems that continuously rewrite and optimize their problem-solving logic. While this technology promises more adaptable AI agents, it also raises profound safety and control questions.

Unlike previous self-improving systems limited to coding tasks, hyperagents can enhance themselves across domains like robotics and document review. They independently develop capabilities like persistent memory and automated performance tracking, which could let them improve faster than humans can meaningfully oversee.

Critical safety implications include:

- Reduced human control over AI behavior modification

- Difficulty predicting emergent capabilities

- Challenges in maintaining alignment with human values

- Potential for rapid capability escalation

The development of self-modifying AI systems without robust safety frameworks could exacerbate existing reliability issues while creating entirely new categories of risk that current regulatory approaches are unprepared to address.

Auditing Challenges and Transparency Gaps

As AI models become more sophisticated, they also become harder to audit and understand. A VentureBeat report highlights that frontier models are not only failing more frequently but becoming increasingly opaque in their decision-making processes.

This transparency crisis has several dimensions:

Technical opacity: Advanced models use complex architectures that resist traditional interpretability methods, making it difficult to understand why specific decisions are made.

Data bias concerns: Training data sources often remain proprietary, obscuring potential biases that could lead to unfair outcomes for different demographic groups.

Performance inconsistency: The jagged frontier phenomenon makes it challenging to predict when and where failures will occur, complicating risk assessment efforts.

Regulatory compliance: As models become harder to audit, ensuring compliance with emerging AI safety regulations becomes increasingly difficult and resource-intensive.

These challenges underscore the need for new auditing methodologies and transparency standards that can keep pace with rapidly evolving AI capabilities.

What This Means

The convergence of impressive AI capabilities with significant reliability issues creates a critical inflection point for the technology industry. Organizations must balance the competitive advantages of advanced AI systems against the substantial risks of unpredictable failures in production environments.

The political battle over AI regulation reflects deeper questions about technological governance in democratic societies. As AI systems become more powerful and autonomous, the stakes of regulatory decisions increase dramatically. The industry’s resistance to oversight, exemplified by the campaign against Alex Bores, suggests that voluntary self-regulation may be insufficient to address emerging risks.

For enterprise leaders, the reliability crisis demands new approaches to risk management that account for the jagged frontier phenomenon. Traditional quality assurance methods may be inadequate for systems that can excel at complex tasks while failing unpredictably on simpler ones.

The development of self-improving AI systems like hyperagents adds urgency to these concerns. Without robust safety frameworks and oversight mechanisms, the gap between AI capabilities and human understanding could widen rapidly, potentially leading to systems that operate beyond meaningful human control.

FAQ

Q: What is the “jagged frontier” in AI safety?

A: The jagged frontier describes the unpredictable boundary where AI systems excel at complex tasks but fail unexpectedly on simpler ones, creating reliability challenges that are difficult to predict or prevent.

Q: Why are tech companies opposing AI safety regulations?

A: Industry leaders argue that safety regulations could slow innovation and reduce competitive advantages, though critics contend that proper oversight is essential for preventing harmful outcomes and maintaining public trust.

Q: How do self-improving AI systems like hyperagents increase safety risks?

A: Self-modifying AI systems can develop capabilities beyond their original programming, potentially evolving faster than human oversight can track and creating new risks that current safety frameworks aren’t designed to handle.
