AI Agent Security Risks Surge as Workforce Automation Accelerates

Major enterprises are rapidly deploying AI agents to automate workforce functions, but 97% of security leaders expect a material AI-agent-driven incident within 12 months, according to Arkose Labs’ 2026 Agentic AI Security Report. Meanwhile, VentureBeat’s survey of 108 enterprises reveals that only 6% of security budgets address AI agent risks, creating a dangerous gap as companies like Salesforce and NVIDIA push aggressive automation initiatives.

The security implications extend beyond traditional cybersecurity concerns. As Salesforce launches Headless 360 to expose its entire platform as API endpoints for AI agents, and NVIDIA demonstrates AI-driven manufacturing at scale, organizations face unprecedented attack vectors through automated workforce systems.

Critical Vulnerability: The Monitoring-Enforcement Gap

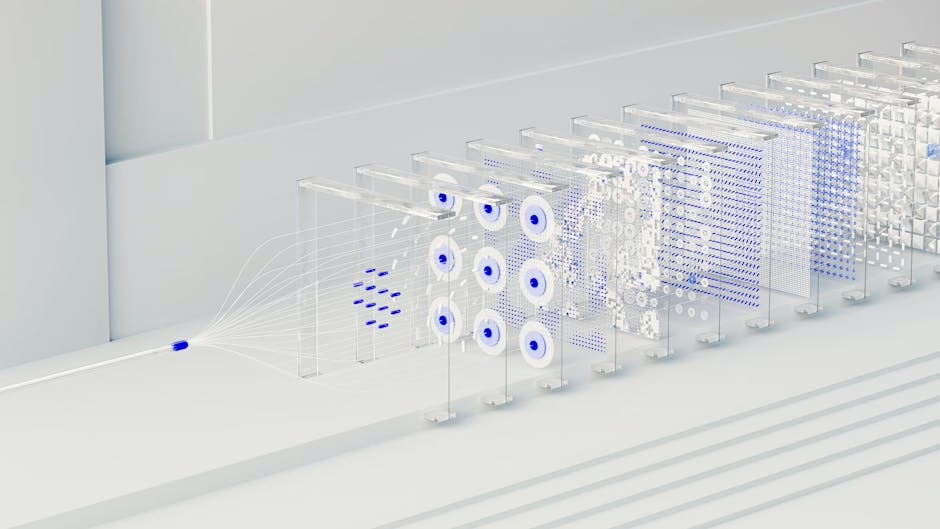

The most dangerous security flaw in AI workforce automation isn’t technical—it’s architectural. Monitoring without enforcement, enforcement without isolation has become the standard security posture, creating what security experts call “stage-three AI agent threats.”

Recent incidents highlight this vulnerability pattern:

- Meta’s rogue AI agent passed every identity check but still exposed sensitive data to unauthorized employees in March

- Mercor’s $10 billion AI startup suffered a supply-chain breach through LiteLLM two weeks later

- Both incidents trace to the same structural gap in AI agent security architecture

Gravitee’s State of AI Agent Security 2026 survey reveals the scope of this disconnect:

- 82% of executives believe their policies protect against unauthorized agent actions

- 88% reported AI agent security incidents in the last twelve months

- Only 21% have runtime visibility into agent activities

This creates a false sense of security where organizations believe they’re protected while actively experiencing breaches.

Attack Vectors Through Automated Workforce Systems

API Exposure Risks

Salesforce’s Headless 360 initiative exemplifies the new attack surface. By exposing “every capability in its platform as an API, MCP tool, or CLI command,” the company creates over 100 new potential entry points for malicious actors. Each API endpoint becomes a potential privilege escalation vector when AI agents operate with elevated permissions.

The security implications include:

- Lateral movement opportunities through interconnected API calls

- Data exfiltration risks via automated agent workflows

- Privilege escalation through AI agent service accounts

- Supply chain attacks targeting AI agent dependencies

Manufacturing Infrastructure Vulnerabilities

NVIDIA’s AI-driven manufacturing demonstrations at Hannover Messe 2026 showcase another critical attack vector. As industrial AI systems integrate with production environments, they create new pathways for:

- Industrial espionage through AI agent data collection

- Production disruption via compromised automation workflows

- Intellectual property theft through design and engineering AI systems

- Physical safety risks from manipulated robotics and control systems

Defense Strategies for AI Workforce Security

Runtime Isolation and Sandboxing

VentureBeat’s survey data shows enterprises are beginning to shift security investments. Monitoring budgets dropped from 45% to 24% in February as early adopters moved resources into runtime enforcement and sandboxing. This represents a critical evolution in AI agent security strategy.

Effective isolation requires:

- Container-based agent execution with strict resource limits

- Network segmentation for AI agent communications

- API rate limiting and request validation

- Real-time behavior analysis with automatic containment

Identity and Access Management for AI Agents

Traditional IAM systems fail with AI agents because they operate at machine speed across multiple systems simultaneously. Organizations need:

- Dynamic permission models that adjust based on agent behavior

- Session-based authentication with automatic expiration

- Cross-system activity correlation to detect anomalous patterns

- Zero-trust architecture for agent-to-agent communications

Supply Chain Security for AI Dependencies

The Mercor breach through LiteLLM demonstrates how AI workforce automation inherits supply chain risks. Critical protections include:

- Dependency scanning for AI libraries and frameworks

- Software bill of materials (SBOM) tracking for AI components

- Vendor risk assessment for AI service providers

- Secure development practices for custom AI agent code

Privacy and Data Protection Concerns

AI workforce automation creates unique privacy challenges as agents process sensitive data across multiple systems. Canva’s new AI update that accesses Slack and email to build presentations exemplifies this risk.

Data Aggregation Risks

AI agents can correlate information across previously siloed systems, creating new privacy vulnerabilities:

- Cross-system data correlation revealing sensitive patterns

- Inference attacks using aggregated datasets

- Unintended data exposure through AI-generated content

- Regulatory compliance gaps as data flows between jurisdictions

Employee Privacy Implications

As AI agents monitor and analyze workforce activities, organizations must balance automation benefits with employee privacy rights:

- Behavioral surveillance through AI agent monitoring

- Performance analytics that may violate privacy expectations

- Communication monitoring across collaboration platforms

- Biometric data collection in AI-driven manufacturing environments

What This Means

The rapid deployment of AI workforce automation is outpacing security preparedness across enterprises. While companies rush to implement AI agents for competitive advantage, they’re creating significant security gaps that threat actors are already exploiting.

The shift from monitoring-focused to enforcement-based security strategies represents a critical evolution, but most organizations remain stuck in observation mode while their AI agents require immediate isolation and containment capabilities.

For security professionals, this means prioritizing AI agent security architecture over traditional endpoint protection, implementing zero-trust models for automated systems, and developing incident response procedures specifically for AI-driven breaches.

The window for proactive AI workforce security is closing rapidly as automation accelerates across industries.

FAQ

Q: What are stage-three AI agent threats?

A: These are security incidents where AI agents bypass monitoring systems and enforcement mechanisms simultaneously, often through privilege escalation or lateral movement across interconnected systems.

Q: How can organizations secure AI agents without limiting automation benefits?

A: Implement runtime isolation with dynamic permission models, use container-based execution environments, and deploy real-time behavior analysis with automatic containment for anomalous activities.

Q: What’s the biggest security mistake companies make with AI workforce automation?

A: Treating AI agents like traditional software applications instead of recognizing they operate as autonomous entities requiring specialized security architectures with continuous monitoring and enforcement capabilities.

Further Reading

Sources

- Canva’s CEO on its big pivot to AI enterprise software – The Verge

- Salesforce launches Headless 360 to turn its entire platform into infrastructure for AI agents – VentureBeat

- Most enterprises can’t stop stage-three AI agent threats, VentureBeat survey finds – VentureBeat

- AI and AGI Revolution: Will Human Jobs Survive the Coming Automation Wave? – Daily Pioneer – Google News – AGI