AI coding assistants like GitHub Copilot, Cursor, and Claude Code are generating massive amounts of code for developers, but reporting from TechCrunch indicates that 70-90% of AI-generated code requires significant revision within weeks of implementation. This productivity illusion masks critical security vulnerabilities that threaten enterprise codebases and developer workflows.

While engineering managers report initial code acceptance rates of 80-90%, Alex Circei, CEO of developer analytics firm Waydev, found that real-world acceptance drops to just 10-30% after security reviews and bug fixes. This disconnect between perceived and actual productivity creates dangerous blind spots in software security.

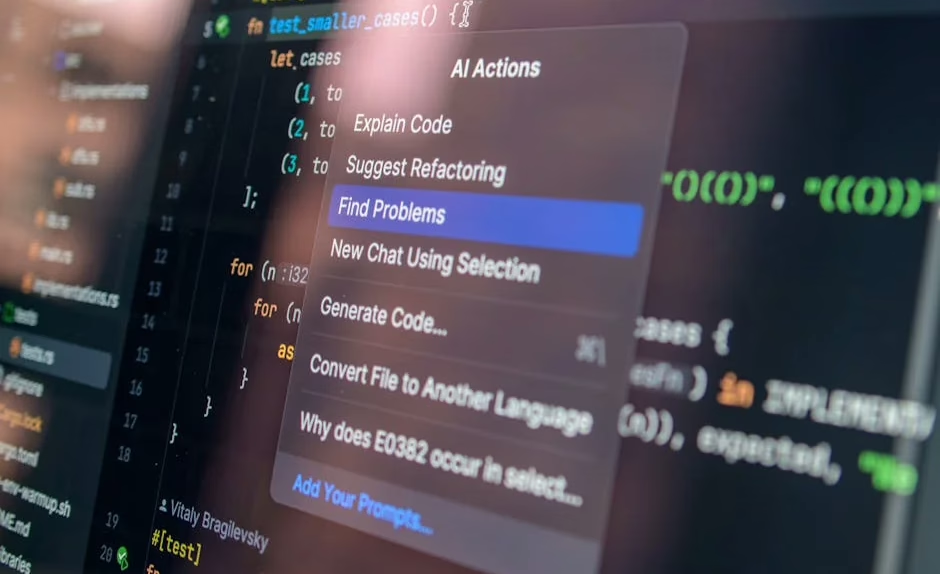

Critical Security Vulnerabilities in AI Code Generation

AI coding tools introduce several attack vectors that traditional security frameworks struggle to address. The most significant threat stems from code injection vulnerabilities embedded within AI-generated suggestions.

Primary threat vectors include:

- Training data poisoning: Malicious code in training datasets can propagate through AI suggestions

- Prompt injection attacks: Crafted comments or variable names can manipulate AI output

- Dependency confusion: AI tools may suggest vulnerable or malicious packages, including public packages that impersonate internal package names

- Credential exposure: AI models trained on public repositories may leak API keys or secrets
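The dependency risk in the list above lends itself to a simple automated check. The following is a minimal sketch, assuming the AI suggestion is Python source and that the organization keeps an allowlist of approved packages; `APPROVED_PACKAGES` and `unapproved_imports` are hypothetical names chosen for illustration, not part of any tool mentioned here.

```python
import ast

# Hypothetical allowlist; a real one would come from an internal registry or lockfile.
APPROVED_PACKAGES = {"json", "os", "requests"}


def unapproved_imports(source: str, approved: set[str] = APPROVED_PACKAGES) -> set[str]:
    """Return top-level module names imported by `source` that are not approved."""
    found = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            found.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # level == 0 means an absolute import; relative imports are skipped
            found.add(node.module.split(".")[0])
    return found - approved


# A suggestion that imports a misspelled, look-alike package name.
suggestion = "import requests\nfrom reqeusts_toolkit import get\n"
print(unapproved_imports(suggestion))  # {'reqeusts_toolkit'}
```

A check like this only flags names for human review. It does not establish that a flagged package is malicious or that an approved one is safe.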

According to Waydev’s analysis, the high revision rate of AI-generated code often results from security teams identifying vulnerabilities that automated tools missed during initial acceptance. This creates a false sense of productivity while accumulating technical debt and security risks.

Token budget maximization, dubbed “tokenmaxxing” by developers, exacerbates these issues by incentivizing quantity over quality. Engineers consuming large token budgets generate more code but spend significantly more time on security remediation.

IDE Integration Vulnerabilities and Attack Surfaces

Modern IDE integrations for AI coding tools create expanded attack surfaces that security teams must monitor. These integrations typically require extensive permissions to access:

- Source code repositories with full read/write access

- Environment variables containing sensitive configuration data

- Network connections to external AI services

- File system access for code analysis and generation

Enterprise security implications:

Data Exfiltration Risks

AI coding assistants transmit code snippets to external servers for processing. Encryption protects that traffic in transit, but the provider still receives the content, which creates potential data exfiltration pathways for proprietary algorithms, business logic, and sensitive customer data embedded in code comments.

Supply Chain Attacks

Compromised AI coding services could inject malicious code directly into development workflows. Unlike traditional supply chain attacks, which target specific packages, code injected this way is harder to detect and trace.

Privilege Escalation

IDE integrations often run with developer privileges, creating opportunities for privilege escalation if the AI service or integration is compromised.

Privacy Implications and Data Protection Concerns

AI coding tools pose significant privacy risks that extend beyond individual developers to entire organizations. Recent enterprise adoptions highlight the tension between productivity gains and data protection requirements.

Key privacy vulnerabilities:

- Code fingerprinting: AI models may identify proprietary coding patterns and architectural decisions

- Intellectual property leakage: Training data contamination could expose trade secrets through generated suggestions

- Compliance violations: GDPR, HIPAA, and other regulations may be violated when sensitive data appears in code processed by AI services

Recommended data protection measures:

- Implement code sanitization before AI processing

- Deploy on-premises AI models for sensitive codebases

- Establish data classification policies for AI tool usage

- Conduct regular privacy impact assessments for AI coding workflows
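Code sanitization before AI processing can be as simple as redacting likely secrets from a snippet before it leaves the developer's machine. The sketch below is illustrative only: the two patterns and the `sanitize` function are assumptions made for this example, and production setups typically rely on a dedicated secret scanner with far broader coverage.

```python
import re

# Illustrative patterns only, not an exhaustive secret-detection rule set.
AWS_KEY_ID = re.compile(r"AKIA[0-9A-Z]{16}")  # shape of an AWS access key ID
QUOTED_SECRET = re.compile(
    r"(api_?key|secret|password|token)(\s*[:=]\s*)(['\"])[^'\"]*\3",
    re.IGNORECASE,
)


def sanitize(snippet: str) -> str:
    """Replace likely secrets in `snippet` with a placeholder."""
    snippet = AWS_KEY_ID.sub("[REDACTED]", snippet)
    # Keep the variable name, separator, and quotes; drop only the value.
    return QUOTED_SECRET.sub(r"\1\2\3[REDACTED]\3", snippet)


print(sanitize('db_password = "hunter2"'))  # db_password = "[REDACTED]"
```

Pattern-based redaction misses secrets that do not match a known shape, so it complements data classification policies rather than replacing them.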

Defense Strategies and Security Best Practices

Organizations must implement comprehensive security frameworks to safely leverage AI coding tools while mitigating associated risks.

Multi-Layer Security Architecture

Code Review Enhancement:

- Implement mandatory security reviews for all AI-generated code

- Deploy static analysis tools specifically configured for AI-generated patterns

- Establish approval workflows that require human verification of AI suggestions

Network Security Controls:

- Use network segmentation to isolate AI coding tool traffic

- Implement SSL/TLS inspection for AI service communications

- Deploy data loss prevention (DLP) tools to monitor code transmissions

Access Management:

- Apply the principle of least privilege to AI tool integrations

- Implement role-based access controls for different AI coding capabilities

- Audit AI tool permissions and usage patterns regularly
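One concrete way to apply least privilege is to limit which environment variables an AI tool process can see. The following is a minimal sketch under the assumption that the tool runs as a child process; `ALLOWED_ENV` and `minimal_env` are hypothetical names, and the allowlist contents are placeholders.

```python
# Hypothetical allowlist of variables an AI tool integration actually needs.
ALLOWED_ENV = {"PATH", "HOME", "LANG"}


def minimal_env(env: dict[str, str], allowed: set[str] = ALLOWED_ENV) -> dict[str, str]:
    """Return a copy of `env` containing only allowlisted variable names."""
    return {name: value for name, value in env.items() if name in allowed}


# Example environment containing a credential the tool has no need for.
full_env = {"PATH": "/usr/bin", "HOME": "/home/dev", "AWS_SECRET_ACCESS_KEY": "example"}
print(sorted(minimal_env(full_env)))  # ['HOME', 'PATH']
```

The filtered dictionary can be passed as the `env` argument of `subprocess.run` when launching a tool process, so that credentials in the parent environment are not inherited.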

Threat Detection and Monitoring

Establish continuous monitoring for AI coding tool security:

- Behavioral analysis to detect unusual AI usage patterns

- Code quality metrics that track how often AI-generated code introduces security vulnerabilities

- Dependency monitoring for AI-suggested package installations

- Audit logging of all AI-generated code suggestions and acceptances
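Audit logging of suggestions and acceptances could take the form of one structured record per suggestion. The sketch below assumes a JSON-lines log and stores a hash of the suggestion rather than its text, so the log itself does not become another copy of proprietary code. The field names and the `audit_record` function are illustrative assumptions, not a format used by any particular tool.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(developer: str, tool: str, suggestion: str, accepted: bool) -> str:
    """Build one JSON line describing a suggestion without storing its full text."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "developer": developer,
        "tool": tool,
        # The hash lets reviewers match a record to code without logging the code.
        "suggestion_sha256": hashlib.sha256(suggestion.encode("utf-8")).hexdigest(),
        "suggestion_lines": len(suggestion.splitlines()),
        "accepted": accepted,
    }
    return json.dumps(record, sort_keys=True)


# Placeholder developer and tool identifiers for illustration.
line = audit_record("dev-01", "example-assistant", "import os\nprint(os.getcwd())\n", accepted=True)
print(line)
```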

Enterprise Risk Assessment Framework

Security teams should implement structured risk assessment processes for AI coding tool adoption:

Risk Categories:

- Technical Risks: Vulnerability introduction, code quality degradation

- Operational Risks: Developer dependency, skill atrophy

- Compliance Risks: Regulatory violations, audit failures

- Strategic Risks: Intellectual property exposure, competitive disadvantage

Assessment Metrics:

- Time-to-remediation for AI-generated vulnerabilities

- False positive rates in security scanning of AI code

- Developer productivity adjusted for security revision cycles

- Compliance audit findings related to AI tool usage
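The gap between initial and real-world acceptance can be made explicit with a small calculation. The sketch below is one possible way to adjust acceptance for later revisions and is not Waydev's methodology. The `AcceptanceStats` class is hypothetical, and the sample numbers are invented to fall within the ranges cited earlier in this article.

```python
from dataclasses import dataclass


@dataclass
class AcceptanceStats:
    suggested_lines: int  # lines proposed by the AI tool
    accepted_lines: int   # lines accepted at suggestion time
    revised_lines: int    # accepted lines later rewritten or removed

    @property
    def initial_rate(self) -> float:
        """Share of suggested lines accepted when first offered."""
        return self.accepted_lines / self.suggested_lines

    @property
    def effective_rate(self) -> float:
        """Share of suggested lines that survive later review unrevised."""
        return (self.accepted_lines - self.revised_lines) / self.suggested_lines


# Invented sample numbers, not measured data.
stats = AcceptanceStats(suggested_lines=1000, accepted_lines=850, revised_lines=650)
print(f"initial: {stats.initial_rate:.0%}, effective: {stats.effective_rate:.0%}")
# initial: 85%, effective: 20%
```

Tracking both rates side by side shows how much of the reported productivity survives security review.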

What This Means

The rapid adoption of AI coding tools represents a fundamental shift in software development that requires equally sophisticated security adaptations. While these tools offer legitimate productivity benefits, organizations must acknowledge that current acceptance rate metrics mask significant security risks.

The “tokenmaxxing” trend reveals a dangerous disconnect between measured productivity and actual security outcomes. Security teams must develop new frameworks for evaluating AI-generated code that account for long-term revision cycles and hidden vulnerability accumulation.

Successful AI coding tool adoption requires treating these systems as high-risk infrastructure components rather than simple productivity enhancers. Organizations that implement comprehensive security frameworks now will gain competitive advantages while avoiding the security debt that threatens early adopters.

FAQ

Q: How can organizations adopt AI coding tools without compromising security?

A: Deploy AI coding tools with mandatory security reviews, network segmentation, and continuous monitoring. Implement on-premises AI models for sensitive codebases and establish clear data classification policies.

Q: What are the most critical security risks associated with AI code generation?

A: The primary risks include training data poisoning, prompt injection attacks, dependency confusion, and credential exposure. These vulnerabilities are often hidden by high initial acceptance rates but emerge during security reviews.

Q: How should security teams measure the real impact of AI coding tools on organizational security posture?

A: Track metrics beyond initial acceptance rates, including time-to-remediation for AI-generated vulnerabilities, false positive rates in security scanning, and compliance audit findings. Focus on long-term security outcomes rather than short-term productivity gains.