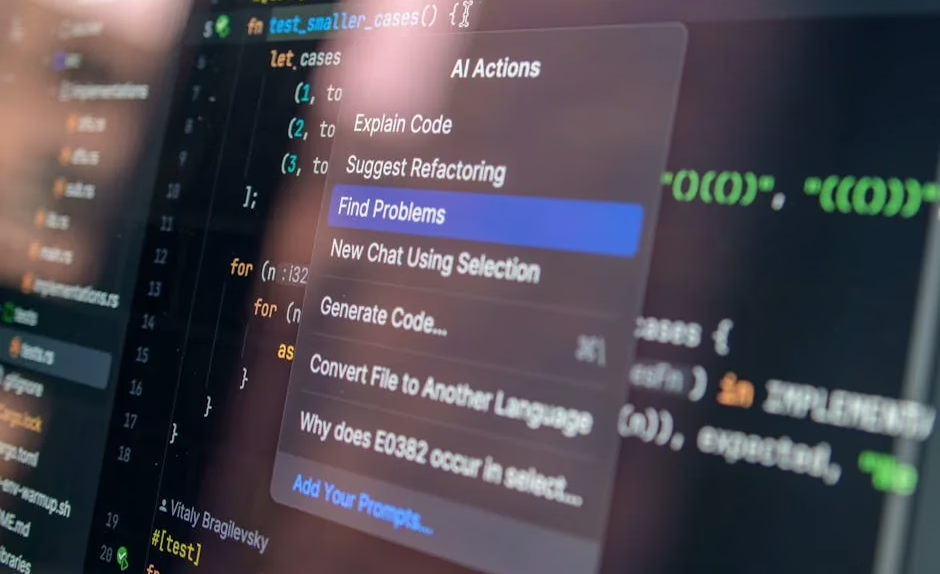

Security researchers at Johns Hopkins University have exposed critical prompt injection vulnerabilities in three major AI coding platforms—Anthropic’s Claude Code, Google’s Gemini CLI, and GitHub Copilot—that allow attackers to steal API keys through malicious GitHub pull requests. According to VentureBeat, the attack dubbed “Comment and Control” achieved CVSS 9.4 Critical severity ratings, with researchers successfully extracting sensitive credentials using a single malicious prompt injection.

While AI coding assistants like Copilot and Cursor promise enhanced developer productivity, emerging security research reveals significant vulnerabilities that could expose enterprise codebases and developer credentials to malicious actors. These findings underscore the urgent need for comprehensive security frameworks as AI-powered development tools become increasingly integrated into software development workflows.

Critical Prompt Injection Attack Vector

The “Comment and Control” vulnerability demonstrates how attackers can exploit AI coding agents through GitHub’s pull request mechanism. Security researcher Aonan Guan discovered that by inserting malicious instructions into PR titles, attackers could manipulate AI agents to expose their own API keys as comments.

Key attack characteristics:

- No external infrastructure required for exploitation

- Works across multiple platforms: Claude Code, Gemini CLI, GitHub Copilot

- Exploits GitHub Actions workflows using pullrequesttarget triggers

- Bypasses traditional security controls through natural language manipulation

The vulnerability specifically targets workflows requiring secret access, which most AI agent integrations need for proper functionality. While GitHub Actions doesn’t expose secrets to fork pull requests by default, the pullrequesttarget trigger—essential for AI coding agents—creates an exploitable attack surface.

According to VentureBeat, Anthropic classified this as CVSS 9.4 Critical severity but awarded only a $100 bounty, while Google paid $1,337 and GitHub awarded $500 through their respective bug bounty programs.

Developer Productivity Metrics Mask Security Risks

While organizations rush to adopt AI coding tools for productivity gains, security implications often take a backseat to metrics like token consumption and code acceptance rates. TechCrunch reports that “tokenmaxxing”—maximizing AI processing power consumption—has become a status symbol among Silicon Valley developers, despite questionable productivity benefits.

Security concerns with productivity-focused adoption:

- Rushed code reviews due to high AI-generated code volumes

- Reduced human oversight as acceptance rates reach 80-90%

- Hidden technical debt from frequent code revisions

- Insufficient security validation of AI-generated code

Alex Circei, CEO of Waydev, notes that while initial code acceptance rates appear impressive at 80-90%, real-world acceptance drops to 10-30% after engineers discover issues requiring extensive revisions. This churn creates security blind spots where vulnerabilities may persist through multiple revision cycles.

The focus on input metrics (token consumption) rather than output quality (secure, maintainable code) creates perverse incentives that prioritize speed over security.

Enterprise Platform Security Transformations

Salesforce’s Headless 360 initiative represents a broader trend toward API-first architectures that expose enterprise capabilities directly to AI agents. While this transformation enables powerful automation, it also expands the attack surface significantly.

Security implications of headless platforms:

- Expanded API attack surface with 100+ new endpoints

- Direct agent access to sensitive enterprise data

- Reduced human oversight in critical business processes

- Complex permission models across multiple AI systems

Salesforce’s decision to rebuild its entire platform for agent access reflects industry-wide pressure to remain competitive in the AI era. However, this architectural shift requires comprehensive security redesigns to prevent unauthorized access and data exfiltration.

The timing coincides with broader enterprise software volatility, as traditional SaaS models face disruption from AI capabilities that could potentially replace human-driven interfaces entirely.

Development Environment Security Best Practices

Organizations implementing AI coding tools must establish robust security frameworks to mitigate emerging threats while maintaining development velocity.

Essential security controls:

- Implement strict code review processes for AI-generated code

- Use separate development credentials for AI agent access

- Monitor prompt injection attempts in development workflows

- Establish token usage policies beyond productivity metrics

- Regular security audits of AI tool integrations

GitHub Actions security hardening:

- Avoid pullrequesttarget triggers when possible

- Implement strict branch protection rules

- Use environment-specific secrets

- Monitor for unusual API key usage patterns

Prompt injection defense strategies:

- Input sanitization for user-provided content

- Structured prompt templates with clear boundaries

- Output validation and filtering

- Anomaly detection for unusual agent behavior

Development teams should treat AI coding assistants as potentially compromised endpoints, implementing defense-in-depth strategies that assume breach scenarios.

Privacy and Data Protection Concerns

AI coding tools process vast amounts of proprietary source code, raising significant privacy and intellectual property concerns. The integration of these tools into enterprise development environments creates new data exposure vectors that traditional security models don’t address.

Critical privacy considerations:

- Code repository exposure to third-party AI services

- Intellectual property leakage through training data

- Compliance violations with data residency requirements

- Audit trail gaps for code generation processes

Many AI coding platforms operate as cloud services, potentially exposing proprietary algorithms, business logic, and sensitive data to external processing. Organizations must evaluate whether their security and compliance requirements permit such exposure.

The lack of transparency in AI model training data also creates uncertainty about whether proprietary code might inadvertently influence future model outputs, potentially leaking competitive advantages to other users.

What This Means

The security vulnerabilities discovered in major AI coding platforms represent a critical inflection point for the software development industry. As organizations increasingly rely on AI assistants for code generation, the attack surface expands beyond traditional application security to include prompt manipulation and model exploitation techniques.

The “Comment and Control” vulnerability demonstrates that AI coding agents are susceptible to social engineering attacks that bypass conventional security controls. This necessitates new security frameworks specifically designed for AI-integrated development environments.

Furthermore, the productivity metrics driving AI adoption—such as token consumption and initial code acceptance rates—may inadvertently encourage security-compromising behaviors. Organizations must balance development velocity with comprehensive security validation to prevent the accumulation of technical debt and vulnerabilities.

The industry’s rush toward AI-first development platforms like Salesforce’s Headless 360 amplifies these concerns by expanding API attack surfaces and reducing human oversight in critical processes. Success in this environment requires treating AI coding tools as high-risk components that demand enhanced monitoring, validation, and access controls.

FAQ

Q: How can developers protect against prompt injection attacks in AI coding tools?

A: Implement input sanitization for all user-provided content, use structured prompt templates with clear boundaries, avoid pullrequesttarget triggers in GitHub Actions when possible, and monitor for unusual API key usage patterns.

Q: What security measures should organizations implement before adopting AI coding assistants?

A: Establish separate development credentials for AI agents, implement strict code review processes for AI-generated code, conduct regular security audits of AI tool integrations, and develop token usage policies that prioritize security over productivity metrics.

Q: Are AI-generated code repositories more vulnerable to security threats?

A: Yes, AI-generated code often requires extensive revisions that can introduce security blind spots, and the high volume of generated code may overwhelm traditional review processes, potentially allowing vulnerabilities to persist through multiple revision cycles.

Related news

- Adversaries hijacked AI security tools at 90+ organizations. The next wave has write access to the firewall – Venturebeat – Google News – AI Tools

- Adversaries hijacked AI security tools at 90+ organizations. The next wave has write access to the firewall – VentureBeat

- SEALSQ emphasizes quantum-resistant chips amid AI security risks – Investing.com – Google News – AI Security