Enterprise AI agents are operating in production environments across healthcare, manufacturing, and cybersecurity, but 85% of organizations lack the identity and access management infrastructure to secure them at scale. According to Cisco President Jeetu Patel, while 85% of enterprises run agent pilots, only 5% have reached production deployment — an 80-point gap driven by fundamental security architecture limitations.

IANS Research found that most businesses still lack role-based access control mature enough for today’s human identities, and agents will make that problem significantly harder. The 2026 IBM X-Force Threat Intelligence Index reported a 44% increase in attacks exploiting public-facing applications, driven by missing authentication controls and AI-enabled vulnerability discovery.

The Four-Surface Attack Model for AI Agents

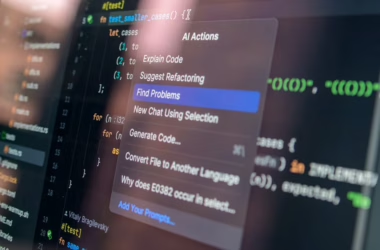

Traditional AI security focused on prompt injection attacks against standalone language models. Agentic systems expose four distinct attack surfaces that enterprise security teams must address simultaneously, according to Gravitee’s 2026 State of AI Agent Security report.

The prompt surface handles external inputs, similar to traditional LLM security. The tool surface executes backend actions — database queries, API calls, file operations — that can directly impact production systems. The memory surface stores information across sessions, creating persistent attack vectors. The orchestration surface coordinates between multiple agents, multiplying potential failure points.
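As a rough illustration of the four-surface model, the sketch below tags agent events with the surface they touch so that monitoring can be split per surface. The event kind names (`user_message`, `db_query`, and so on) and the `AgentEvent` type are hypothetical and not taken from the Gravitee report; a real agent framework will emit its own event types.

```python
from dataclasses import dataclass
from enum import Enum


class Surface(Enum):
    PROMPT = "prompt"                # external inputs
    TOOL = "tool"                    # backend actions: DB queries, API calls, file ops
    MEMORY = "memory"                # information stored across sessions
    ORCHESTRATION = "orchestration"  # coordination between multiple agents


# Hypothetical event kinds, chosen only for illustration.
EVENT_SURFACES = {
    "user_message": Surface.PROMPT,
    "db_query": Surface.TOOL,
    "api_call": Surface.TOOL,
    "file_write": Surface.TOOL,
    "memory_read": Surface.MEMORY,
    "memory_write": Surface.MEMORY,
    "agent_handoff": Surface.ORCHESTRATION,
}


@dataclass(frozen=True)
class AgentEvent:
    agent_id: str
    kind: str


def surface_of(event: AgentEvent) -> Surface:
    """Return the surface an event touches; unknown kinds fail closed."""
    try:
        return EVENT_SURFACES[event.kind]
    except KeyError:
        raise ValueError(f"unclassified event kind: {event.kind!r}") from None


def count_by_surface(events):
    """Count events per surface, including surfaces with zero events."""
    counts = {surface: 0 for surface in Surface}
    for event in events:
        counts[surface_of(event)] += 1
    return counts
```

Raising on unknown event kinds is a deliberate choice in this sketch: an event that cannot be assigned to a surface is one that no control is watching.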

Gravitee’s survey of 900+ executives found that 88% of organizations reported confirmed or suspected AI agent security incidents in the past year. Only 14.4% of agentic systems went live with full security and IT approval, creating a deployment-security gap where incidents cluster.

Production Deployments Show Security Maturity Gaps

Real-world agent deployments reveal the scope of the identity governance challenge. Medical transcription agents in hospital exam rooms update electronic health records, prompt prescription options, and surface patient history in real time. Computer vision agents on manufacturing lines run quality control at speeds no human inspector can match.

Both scenarios generate non-human identities that most enterprises cannot inventory, scope, or revoke at machine speed. The first questions any CISO will ask: which agents have production access to sensitive systems, and who is accountable when one acts outside its scope?
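To make "inventory, scope, or revoke" concrete, here is a minimal in-memory sketch of a registry for non-human identities. Everything in it is an assumption for illustration: the `AgentRegistry` class, the scope strings such as `ehr:write`, and the agent and owner names. It is not a description of any vendor's IAM product, and a production system would back this with a real identity provider and audited storage.

```python
from dataclasses import dataclass


@dataclass
class AgentIdentity:
    agent_id: str
    owner: str          # accountable human or team
    scopes: frozenset   # hypothetical scope names, e.g. "ehr:write"
    expires_at: float   # epoch seconds; credentials are short-lived
    revoked: bool = False


class AgentRegistry:
    def __init__(self):
        self._agents = {}

    def register(self, agent_id, owner, scopes, now, ttl_seconds):
        """Issue a scoped identity that expires ttl_seconds after now."""
        ident = AgentIdentity(agent_id, owner, frozenset(scopes), now + ttl_seconds)
        self._agents[agent_id] = ident
        return ident

    def _active(self, ident, now):
        return not ident.revoked and now < ident.expires_at

    def is_allowed(self, agent_id, scope, now):
        """Deny by default: unknown, revoked, or expired agents get nothing."""
        ident = self._agents.get(agent_id)
        if ident is None or not self._active(ident, now):
            return False
        return scope in ident.scopes

    def revoke(self, agent_id):
        """Revoke immediately; returns False if the agent is unknown."""
        ident = self._agents.get(agent_id)
        if ident is None:
            return False
        ident.revoked = True
        return True

    def with_scope(self, scope, now):
        """Inventory: active agents holding a scope, with their owner."""
        return sorted(
            (a.agent_id, a.owner)
            for a in self._agents.values()
            if self._active(a, now) and scope in a.scopes
        )
```

In this sketch, `with_scope("ehr:write", now)` answers the CISO's two questions in one call: which agents can currently write to a sensitive system, and which owner is accountable for each.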

Michael Dickman, SVP and GM of Cisco’s Campus Networking business, told VentureBeat that the trust gap is architectural, not just a tooling problem. Traditional IAM systems were designed around human authentication patterns — periodic logins, role-based permissions, manual provisioning cycles. Agents operate continuously, require dynamic tool access, and scale beyond human oversight capacity.

Autonomous Security Firms Lead Agent Adoption

Cybersecurity companies are among the earliest production adopters of autonomous agents, driven by the need to operate at machine speed against automated threats. XBOW raised $35 million in Series C extension funding on Wednesday, bringing total funding to over $270 million at a valuation of more than $1 billion.

XBOW’s platform uses AI reasoning and adversarial workflows to continuously test applications, autonomously identifying and validating vulnerabilities. Because the platform executes targeted attacks on its own, security teams can explore deeper attack paths than traditional testing allows.

“Each XBOW agent operates like an extension of our in-house red team, allowing us to scale offensive testing with speed and depth that was previously out of reach,” said Alex Krongold, director of Corporate Development & Ventures at SentinelOne.

The cybersecurity use case demonstrates both the potential and the risk of autonomous agents. XBOW agents have privileged access to test production-like environments and execute actual attacks. The identity governance challenge: ensuring these powerful capabilities remain scoped to authorized testing while preventing lateral movement into production systems.
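One common way to keep offensive testing scoped is to check every target against an explicit allowlist before any action runs. The sketch below uses Python's standard `ipaddress` module; the function names and the example CIDR ranges are hypothetical and do not describe how XBOW enforces scope.

```python
import ipaddress


def parse_scope(cidrs):
    """Parse CIDR strings such as "10.20.0.0/16" into network objects."""
    return [ipaddress.ip_network(cidr) for cidr in cidrs]


def in_scope(target, authorized, excluded=()):
    """Allow a target only if it is inside an authorized network and
    outside every excluded network. Exclusions win over authorizations."""
    addr = ipaddress.ip_address(target)
    if any(addr in net for net in excluded):
        return False
    return any(addr in net for net in authorized)
```

For example, with `authorized = parse_scope(["10.20.0.0/16"])` as the test range and `excluded = parse_scope(["10.20.5.0/24"])` standing in for production hosts that share the range, a target in the excluded block is rejected even though the wider range is authorized. Checking exclusions first is the design choice that blocks lateral movement into carved-out systems.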

Enterprise Architecture Shifts from Bots to Orchestration

The evolution from robotic process automation to agentic systems requires fundamental changes in enterprise architecture. Sanjoy Sarkar, SVP at First Citizens Bank, argues that the next phase of enterprise transformation will be defined not by deploying more bots, but by how intelligently automation is architected, governed, and orchestrated.

Traditional automation follows a predictable pattern: identify repetitive processes, deploy robotic automation, scale quickly, celebrate efficiency gains. Over time, however, the architecture underneath that growth often becomes less cohesive. Different departments adopt tools independently. Governance practices vary. Credential management and monitoring may not be fully centralized.

This “automation sprawl” creates the foundation for agent security incidents. Its symptoms are multiple platforms performing similar functions, uneven governance models across business units, proliferating scripts and workflows, and fragmented visibility.

Learning Systems Create New Risk Categories

Anthropic on Tuesday introduced “dreaming” for its Claude Managed Agents platform — a capability that lets AI agents learn from their own past sessions and improve over time. The company moved two experimental features, outcomes and multi-agent orchestration, from research preview to public beta.

Early adopters report significant results. Legal AI company Harvey saw task completion rates increase roughly 6x after implementing dreaming. Medical document review company Wisedocs cut document review time by 50% using outcomes. Netflix processes logs from hundreds of builds simultaneously using multi-agent orchestration.

The learning capability addresses a common enterprise demand: AI systems that correct and improve themselves before enterprises will trust them with production workloads. However, it also creates new security considerations. Agents that modify their own behavior based on past sessions require governance frameworks that can track behavioral changes, validate learning outcomes, and prevent adversarial training data from corrupting agent behavior.
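Tracking behavioral changes can start with something as simple as fingerprinting whatever an agent has learned after each session and comparing it with the last reviewed version. The sketch below is generic and assumes the learned state can be exported as a JSON-serializable dict. It says nothing about how Anthropic's "dreaming" stores or exposes what agents learn, and the `BehaviorLedger` class is hypothetical.

```python
import hashlib
import json


def fingerprint(learned_state):
    """Stable SHA-256 of a JSON-serializable learned state.
    Keys are sorted so that dict ordering does not change the hash."""
    canonical = json.dumps(learned_state, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


class BehaviorLedger:
    def __init__(self, initial_state):
        self.approved = fingerprint(initial_state)
        self.history = [("baseline", self.approved)]

    def record_session(self, session_id, learned_state):
        """Log the post-session fingerprint.
        Returns True if the state differs from the approved one."""
        fp = fingerprint(learned_state)
        self.history.append((session_id, fp))
        return fp != self.approved

    def approve(self, learned_state):
        """Mark a reviewed state as the new approved baseline."""
        self.approved = fingerprint(learned_state)
```

A fingerprint only shows that something changed, not whether the change is safe. Validating learning outcomes still needs a review step, which in this sketch is whatever happens before `approve` is called.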

What This Means

The 80-point gap between AI agent pilots and production deployments reflects a fundamental mismatch between enterprise security architecture and agentic system requirements. Traditional IAM assumes human-speed operations, periodic authentication, and manual oversight. Agents operate continuously, require dynamic tool access, and scale beyond human governance capacity.

This is not a temporary adoption friction — it’s a structural limitation that will determine which organizations can operationalize AI agents at scale. Security teams that adapt their identity governance frameworks to handle non-human identities, continuous operation, and machine-speed decision-making will gain significant competitive advantages. Those that don’t will remain stuck in pilot purgatory.

The cybersecurity industry’s early adoption of autonomous agents provides a template for other sectors. These systems demonstrate both the potential of agentic AI and the governance frameworks required to deploy them safely in production environments.

FAQ

What makes AI agent security different from traditional AI security?

Traditional AI security focuses on prompt injection and model outputs. Agents expose four attack surfaces: prompts, tools (database/API access), persistent memory, and multi-agent orchestration. Each surface requires different security controls and monitoring approaches.

Why are most enterprises stuck in AI agent pilot programs?

Cisco reports that 85% of enterprises run agent pilots but only 5% reach production. The gap is primarily due to identity and access management systems that cannot inventory, scope, or revoke agent permissions at machine speed, creating unacceptable security risks for production deployment.

How do learning AI agents change enterprise security requirements?

Self-improving agents, such as those using Anthropic’s “dreaming” capability, require governance frameworks that track behavioral changes, validate learning outcomes, and prevent adversarial training data from corrupting agent behavior. Traditional security assumes static system behavior, while learning agents continuously modify their own operations.

Sources

- AI agents are running hospital records and factory inspections. Enterprise IAM was never built for them. – VentureBeat

- The AI Agent Security Surface: What Gets Exposed When You Add Tools and Memory – Towards Data Science

- Anthropic introduces “dreaming,” a system that lets AI agents learn from their own mistakes – VentureBeat