Open Source AI Models Transform Enterprise Computing Landscape

Open source AI models have fundamentally shifted how organizations deploy artificial intelligence. Meta’s Llama series, Mistral AI’s models, and quantization techniques now enable capable local inference that bypasses traditional cloud-based API controls. According to VentureBeat, this transition amounts to “Shadow AI 2.0,” in which employees run capable models locally on laptops without producing a network signature, creating new security challenges for enterprise IT teams.

The convergence of consumer-grade accelerators, mainstream quantization, and accessible model weights has made running 70B-parameter models on high-end laptops routine for technical teams. A MacBook Pro with 64GB unified memory can now execute quantized large language models at usable speeds, democratizing AI capabilities that previously required multi-GPU server infrastructure.
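The memory arithmetic behind the 64GB claim can be checked with a rough estimate: weight storage is parameter count times bits per weight, divided by 8 to get bytes. The sketch below is illustrative only. The 20% overhead for the KV cache and runtime buffers is an assumed placeholder, and real 4-bit formats use slightly more than 4 bits per weight because they also store per-group scales.

```python
def estimate_weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the model weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


def fits_in_memory(num_params: float, bits_per_weight: float,
                   available_gb: float, overhead_fraction: float = 0.2) -> bool:
    """Rough fit check: weights plus an ASSUMED overhead fraction for the
    KV cache, activations, and runtime buffers (0.2 is illustrative only)."""
    needed_gb = estimate_weight_memory_gb(num_params, bits_per_weight) * (1 + overhead_fraction)
    return needed_gb <= available_gb


if __name__ == "__main__":
    for bits in (16, 8, 4):
        gb = estimate_weight_memory_gb(70e9, bits)
        print(f"70B @ {bits}-bit: ~{gb:.0f} GB of weights")
    # 70B @ 16-bit: ~140 GB, 8-bit: ~70 GB, 4-bit: ~35 GB
    print(fits_in_memory(70e9, 4, available_gb=64))  # True (~42 GB needed)
```

At 16-bit precision a 70B model needs about 140GB for its weights alone. At 4 bits it needs roughly 35GB, which is why quantization is the step that brings such models within reach of a 64GB machine.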

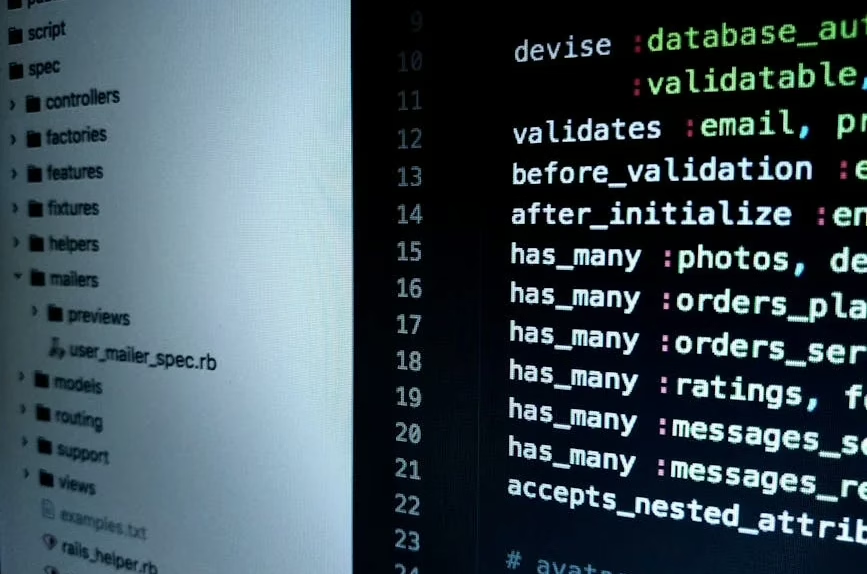

Technical Architecture Driving Local AI Deployment

The technical foundation enabling this shift centers on transformer architecture optimizations and model compression techniques. Quantization methods have evolved from research experiments to production-ready solutions, allowing models like Llama 2 70B and Mistral 7B to operate within consumer hardware constraints.

Key technical enablers include:

- Mixed-precision inference reducing memory bandwidth requirements

- Dynamic quantization maintaining model quality while reducing computational overhead

- Optimized attention mechanisms enabling longer context processing on limited hardware

- Efficient tokenization strategies minimizing preprocessing bottlenecks
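The basic idea behind quantization can be shown with a minimal sketch of symmetric ("absmax") int8 quantization. Floats are mapped to small integers plus a single scale factor, then mapped back approximately. This per-tensor variant is the simplest case and is not the method of any particular library. Production schemes such as GPTQ, AWQ, or llama.cpp's quantization formats use per-group scales and lower bit widths. Dynamic quantization applies the same kind of scale computation to activations at runtime.

```python
def quantize_int8(values):
    """Symmetric (absmax) per-tensor quantization: floats -> int8 codes + one float scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the symmetric int8 range.
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return codes, scale


def dequantize_int8(codes, scale):
    """Recover approximate floats; the rounding error per value is at most scale / 2."""
    return [c * scale for c in codes]


weights = [0.12, -0.5, 0.33, 0.01, -0.27]
codes, scale = quantize_int8(weights)
restored = dequantize_int8(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(codes, f"scale={scale:.5f}", f"max_error={max_error:.5f}")
```

Each weight now needs one byte instead of two (fp16) or four (fp32). The cost is a bounded rounding error, which grows as the bit width shrinks.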

According to Hugging Face’s technical documentation, fine-tuning methodologies have become increasingly sophisticated, with Parameter-Efficient Fine-Tuning (PEFT) techniques like LoRA (Low-Rank Adaptation) enabling domain-specific customization without full model retraining. LoRA freezes the pretrained weights and trains only small low-rank update matrices, which cuts memory and compute requirements while largely preserving performance on specialized tasks.
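The LoRA update can be sketched in plain Python. The frozen weight W0 is augmented by a scaled product of two small trainable matrices, h = W0 x + (alpha / r) B A x. This is a teaching sketch of the technique, not Hugging Face PEFT's implementation. PEFT exposes the corresponding settings as `r` and `lora_alpha` in its `LoraConfig`. The 4096-by-4096 layer size below is an illustrative assumption.

```python
def matvec(M, v):
    """Multiply a matrix (list of rows) by a vector (list)."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]


def lora_forward(W0, A, B, x, alpha, r):
    """h = W0 x + (alpha / r) * B (A x).

    W0: d_out x d_in, frozen pretrained weight
    A:  r x d_in, trainable
    B:  d_out x r, trainable (initialized to zeros, so training starts from W0)
    """
    base = matvec(W0, x)
    update = matvec(B, matvec(A, x))
    scale = alpha / r
    return [b + scale * u for b, u in zip(base, update)]


def trainable_param_counts(d_in, d_out, r):
    """Parameters updated by full fine-tuning vs. a rank-r LoRA adapter for one layer."""
    return d_in * d_out, r * (d_in + d_out)


full, lora = trainable_param_counts(4096, 4096, r=8)
print(full, lora, f"{lora / full:.2%}")  # 16777216 65536 0.39%
```

Only A and B receive gradients. For this layer a rank-8 adapter trains well under 1% of the parameters that full fine-tuning would update.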

The PyTorch ecosystem has matured significantly, providing optimized inference engines and deployment frameworks that streamline local model execution. Hardware vendors have responded with designs suited to transformer workloads. Apple’s unified memory architecture lets the GPU draw on a large shared memory pool, and NVIDIA’s consumer GPUs include Tensor Cores that accelerate matrix math.

Security Implications of Decentralized AI Infrastructure

The shift toward local inference creates unprecedented security challenges for enterprise environments. Traditional Cloud Access Security Broker (CASB) policies become ineffective when AI processing occurs entirely on-device without external API calls.

Critical security considerations include:

- Data Loss Prevention (DLP) systems cannot monitor local model interactions

- Model provenance becomes difficult to verify without centralized deployment

- Intellectual property protection requires new approaches when models run locally

- Compliance monitoring faces gaps in traditional network-based observation

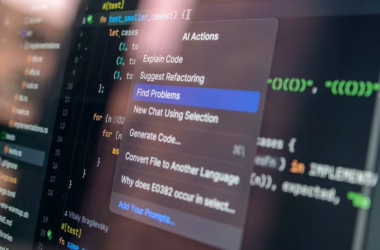

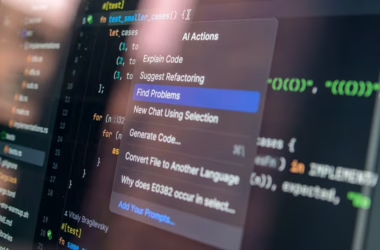

Security teams must adapt governance frameworks to address “unvetted inference inside the device” rather than focusing solely on “data exfiltration to the cloud.” This paradigm shift requires new monitoring tools capable of detecting local AI model execution and analyzing on-device data processing patterns.
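One simple building block for detecting local model use is an on-disk inventory that identifies weight files by their headers rather than their names. The sketch below is a hypothetical heuristic, not a product feature. It checks for the 4-byte `GGUF` magic used by llama.cpp-format files and for the safetensors layout (an 8-byte little-endian header length followed by a JSON header). The 100MB header bound is an assumed sanity limit.

```python
import os
import struct

GGUF_MAGIC = b"GGUF"                  # GGUF files (llama.cpp ecosystem) begin with these 4 bytes
MAX_SAFETENSORS_HEADER = 100_000_000  # ASSUMED sanity bound for the JSON header length


def sniff_model_format(path):
    """Return 'gguf', 'safetensors', or None based on the file's first bytes."""
    try:
        with open(path, "rb") as f:
            head = f.read(9)
    except OSError:
        return None
    if head[:4] == GGUF_MAGIC:
        return "gguf"
    # safetensors: 8-byte little-endian header length, then a JSON header starting with '{'
    if len(head) == 9:
        (header_len,) = struct.unpack("<Q", head[:8])
        if head[8:9] == b"{" and 0 < header_len <= MAX_SAFETENSORS_HEADER:
            return "safetensors"
    return None


def scan_for_models(root, min_size_bytes=0):
    """Walk `root` and list (path, format, size) for files that look like model weights."""
    findings = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            try:
                size = os.path.getsize(path)
            except OSError:
                continue
            if size < min_size_bytes:
                continue
            fmt = sniff_model_format(path)
            if fmt is not None:
                findings.append((path, fmt, size))
    return findings
```

Checking headers instead of file extensions catches renamed files. In practice a `min_size_bytes` of several hundred megabytes would filter out small matches, and a real tool would also need to cover other formats and running inference processes.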

The “Bring Your Own Model” (BYOM) trend compounds these challenges, as employees increasingly download and deploy open source models independently. Organizations need comprehensive asset management strategies to track model usage, versions, and potential security vulnerabilities across distributed computing environments.
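For asset management and provenance, a common pattern is to fingerprint each weight file with a cryptographic hash and compare it against a registry of approved models. The sketch below assumes a hypothetical in-house registry mapping SHA-256 digests to model names and versions, populated from publisher-supplied checksums or an internal model repository.

```python
import hashlib


def sha256_of_file(path, chunk_size=1 << 20):
    """Stream a (possibly multi-GB) file through SHA-256 without loading it into memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def audit_models(paths, approved):
    """Map each path to its approved model label, or 'UNAPPROVED'.

    `approved` is a HYPOTHETICAL registry: {sha256_hex: "model-name@version"}.
    """
    return {path: approved.get(sha256_of_file(path), "UNAPPROVED") for path in paths}
```

Hashing identifies exact files, so any re-quantized or fine-tuned variant shows up as unapproved until someone registers it.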

Performance Optimization and Fine-Tuning Methodologies

Fine-tuning techniques have evolved to support diverse deployment scenarios, from cloud-based training to edge device optimization. The Hugging Face ecosystem provides comprehensive tooling for model customization, including automated hyperparameter optimization and distributed training frameworks.

Advanced fine-tuning approaches include:

- Instruction tuning for task-specific performance enhancement

- Constitutional AI training improving model alignment and safety

- Multi-task learning enabling single models to handle diverse workloads

- Reinforcement Learning from Human Feedback (RLHF) optimizing model behavior
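A common detail of instruction tuning can be sketched in a few lines: the model is trained only on the response tokens, not on the prompt. Prompt positions in the label sequence are set to -100, the default `ignore_index` of PyTorch's `CrossEntropyLoss`. Hugging Face causal language models shift labels internally, so labels stay aligned with `input_ids`. The token IDs below are made up for illustration, and the helper name is hypothetical.

```python
IGNORE_INDEX = -100  # default ignore_index of torch.nn.CrossEntropyLoss


def build_sft_example(prompt_ids, response_ids, eos_id, max_len=None):
    """Concatenate prompt + response + EOS; mask prompt positions out of the loss."""
    input_ids = list(prompt_ids) + list(response_ids) + [eos_id]
    labels = [IGNORE_INDEX] * len(prompt_ids) + list(response_ids) + [eos_id]
    if max_len is not None:
        # Truncate both sequences identically so positions stay aligned.
        input_ids, labels = input_ids[:max_len], labels[:max_len]
    return {"input_ids": input_ids, "labels": labels}


example = build_sft_example(prompt_ids=[1, 2, 3], response_ids=[4, 5], eos_id=0)
print(example)
# {'input_ids': [1, 2, 3, 4, 5, 0], 'labels': [-100, -100, -100, 4, 5, 0]}
```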

Quantization strategies have become increasingly sophisticated. Dynamic quantization, for example, stores weights in low precision ahead of time and computes activation scaling factors at runtime from the values actually observed. Knowledge distillation trains smaller models to imitate larger ones, narrowing the performance gap enough to make deployment feasible in resource-constrained environments.
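The soft-target loss at the core of knowledge distillation can be written directly. Both teacher and student logits are softened with a temperature T, and the student is penalized by the KL divergence between the two distributions, scaled by T² as in Hinton et al. (2015). This is a minimal single-example sketch. In practice the soft loss is usually mixed with the ordinary cross-entropy on the true labels, and the temperature of 2.0 is an arbitrary illustrative default.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax of logits divided by a temperature."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on temperature-softened distributions, scaled by T^2."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    kl = sum(pt * (math.log(pt) - math.log(ps))
             for pt, ps in zip(p_teacher, p_student) if pt > 0)
    return kl * temperature ** 2


print(distillation_loss([1.0, 2.0, 3.0], [1.0, 2.0, 3.0]))  # 0.0: student matches teacher
print(distillation_loss([3.0, 2.0, 1.0], [1.0, 2.0, 3.0]))  # positive: distributions differ
```

Softening with T > 1 exposes the teacher's relative preferences among wrong answers. These preferences carry more training signal than a single hard label.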

Model compression research continues to advance, with pruning algorithms removing redundant parameters while largely maintaining accuracy. Neural architecture search automates model design for specific hardware configurations, aiming to improve performance across diverse deployment targets.
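Magnitude pruning is the simplest pruning algorithm. It zeroes the weights with the smallest absolute values on the assumption that they contribute least to the output. The sketch below shows one-shot, unstructured pruning of a flat weight list. Practical pipelines typically prune gradually and fine-tune afterwards to recover accuracy.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the smallest-magnitude fraction `sparsity` of weights (unstructured, one-shot)."""
    if not 0.0 <= sparsity < 1.0:
        raise ValueError("sparsity must be in [0, 1)")
    n_prune = int(len(weights) * sparsity)
    if n_prune == 0:
        return list(weights)
    # Indices sorted by absolute value, smallest first.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = set(order[:n_prune])
    return [0.0 if i in pruned else w for i, w in enumerate(weights)]


print(magnitude_prune([0.5, -0.1, 0.3, -0.05], sparsity=0.5))  # [0.5, 0.0, 0.3, 0.0]
```

Unstructured zeros like these shrink models only when stored in a sparse format. They speed up inference only on kernels or hardware that exploit sparsity. Structured pruning, which removes whole heads, channels, or layers, trades some flexibility for speedups on ordinary dense hardware.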

Enterprise Adoption Challenges and Solutions

Despite technical advances, enterprise adoption faces significant implementation challenges. Model governance requires new frameworks addressing version control, security scanning, and performance monitoring across distributed deployments.

Key implementation considerations include:

- Hardware standardization ensuring consistent performance across employee devices

- Model repository management controlling access to approved model weights

- Performance benchmarking establishing baseline metrics for local inference quality

- Integration strategies connecting local models with existing enterprise systems
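Performance benchmarking across employee devices needs at least a repeatable throughput measurement. The harness below times a hypothetical `generate(prompt)` callable that returns a list of generated tokens. It discards warm-up runs, which absorb one-time costs such as model loading, and reports the median tokens per second across repeated runs. The callable and its return type are assumptions to be adapted to whatever local runtime is in use.

```python
import statistics
import time


def benchmark_generation(generate, prompt, runs=5, warmup=1):
    """Time a generate(prompt) -> list_of_tokens callable; return median tokens/second."""
    for _ in range(warmup):
        generate(prompt)  # warm-up: exclude one-time setup costs from the measurement
    rates = []
    for _ in range(runs):
        start = time.perf_counter()
        tokens = generate(prompt)
        elapsed = time.perf_counter() - start
        rates.append(len(tokens) / elapsed if elapsed > 0 else float("inf"))
    return {"median_tokens_per_s": statistics.median(rates), "runs": runs}
```

The median is less sensitive than the mean to a single slow run caused by thermal throttling or background load, which is common on laptops. A quality baseline would pair this with task-specific accuracy checks.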

Organizations must balance innovation velocity with risk management, implementing policies that enable productive AI usage while maintaining security standards. This requires collaboration between IT security, data science, and business teams to establish practical governance frameworks.

Change management becomes critical as traditional AI deployment models evolve. Training programs must address new technical skills while ensuring compliance with evolving regulatory requirements. Organizations need comprehensive strategies addressing both technical implementation and organizational adaptation.

What This Means

The open source AI revolution represents a fundamental shift from centralized, API-dependent AI services toward distributed, locally-executed intelligence. This transformation democratizes AI capabilities while creating new technical and security challenges requiring innovative solutions.

Technical implications include the need for new monitoring tools, security frameworks, and governance policies adapted to decentralized AI infrastructure. Organizations must develop capabilities for managing diverse model ecosystems while maintaining performance and compliance standards.

Strategic considerations involve balancing innovation acceleration with risk management, requiring new organizational structures and processes. The shift toward local inference enables greater data privacy and reduced latency while demanding more sophisticated technical expertise across enterprise teams.

The success of this transition depends on continued advances in model efficiency, hardware optimization, and security tooling. Organizations that navigate it effectively stand to gain competitive advantages through enhanced AI capabilities and greater operational flexibility.

FAQ

Q: What hardware specifications are required for running open source LLMs locally?

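A: Laptops with 16-32GB of RAM can typically run 4-bit quantized 7B-13B parameter models, and a dedicated GPU (or Apple Silicon's integrated GPU) improves speed. For 70B models, 4-bit weights alone occupy roughly 35-40GB, so 64GB+ of unified memory (as on Apple Silicon) or NVIDIA GPUs with enough combined VRAM, often more than one card, are recommended.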

Q: How do organizations maintain security when employees run AI models locally?

A: Enterprise security requires new endpoint monitoring tools that detect local model execution, comprehensive model governance policies, and updated DLP solutions capable of analyzing on-device AI interactions rather than relying solely on network traffic monitoring.

Q: What are the key differences between Llama and Mistral model architectures?

A: Llama models use RMSNorm, SwiGLU activation functions, and rotary positional embeddings. Mistral 7B keeps these Llama-style components and adds sliding window attention. It also uses grouped-query attention, which Llama adopted in the 70B Llama 2 model and across Llama 3. Both support similar fine-tuning approaches but suit different use cases depending on computational requirements.
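The two attention features can be sketched with small helpers. A sliding window mask lets each position attend only to recent positions, which bounds attention cost and cache size. Grouped-query attention lets several query heads share one key/value head, which shrinks the KV cache. Mistral 7B is reported to use a 4,096-token window with 32 query heads and 8 KV heads. The exact window boundary convention (whether the current token counts toward the window) varies between implementations, and here `window` includes the current token.

```python
def sliding_window_mask(seq_len, window):
    """mask[i][j] is True when query position i may attend to key position j:
    causal (j <= i) and within the last `window` positions, counting i itself."""
    return [[(j <= i) and (i - j < window) for j in range(seq_len)] for i in range(seq_len)]


def kv_head_for_query_head(q_head, n_q_heads, n_kv_heads):
    """Grouped-query attention: consecutive groups of query heads share one KV head."""
    if n_q_heads % n_kv_heads != 0:
        raise ValueError("n_q_heads must be divisible by n_kv_heads")
    group_size = n_q_heads // n_kv_heads
    return q_head // group_size


for row in sliding_window_mask(seq_len=5, window=3):
    print("".join("x" if allowed else "." for allowed in row))
# With 32 query heads and 8 KV heads, heads 0-3 share KV head 0, ..., heads 28-31 share KV head 7.
print(kv_head_for_query_head(31, n_q_heads=32, n_kv_heads=8))  # 7
```

With 8 KV heads instead of 32, the KV cache is a quarter of the size it would be under standard multi-head attention. This matters most for long contexts on memory-limited hardware.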
