AI productivity applications are showing strong adoption, with developers initially accepting 80-90% of AI-generated code, but subsequent revisions reduce the effective acceptance rate to 10-30%, according to new research from developer analytics firm Waydev. The findings highlight a growing gap between AI tool adoption metrics and actual productivity gains across enterprise environments.

Waydev, which tracks productivity metrics for over 10,000 software engineers across 50 organizations, reports that while code from AI coding assistants like Claude Code and Cursor is accepted at a high rate initially, engineers frequently return to revise it within weeks of implementation.

Enterprise AI Agent Deployment Accelerates

Major enterprises are moving beyond experimental AI implementations to deploy autonomous agents across core business operations. Google Cloud documented 1,302 real-world AI use cases from leading organizations, with companies like Capcom, Home Depot, and Citi Wealth using agentic systems to automate complex tasks ranging from game testing to financial advisory services.

The deployment shift represents what Google calls “the agentic enterprise,” where AI agents handle everything from customer service automation to cloud infrastructure management. According to the company’s analysis, this technological transformation is occurring faster than previous enterprise software adoptions.

Key enterprise AI implementations include:

- Game development: Capcom uses AI agents for automated game testing and quality assurance

- Retail operations: Home Depot deploys agents for inventory management and customer support

- Financial services: Citi Wealth employs AI for personalized investment recommendations

- DevOps: Infrastructure agents propose cloud changes requiring human approval

Productivity Gains Vary by Worker Demographics

Research from Anthropic’s Economic Index analyzing 81,000 Claude users reveals significant productivity improvements, particularly among high-wage workers and entrepreneurs. However, the study also found that workers experiencing the largest productivity gains expressed the highest concerns about job displacement.

“Most respondents reported that Claude enhanced their capabilities in the form of broadening the scope of their work or speeding it up,” Anthropic researchers noted. The data shows that both high-wage technical workers and lower-wage employees with less formal education reported substantial productivity benefits.

Approximately 20% of survey respondents worried about AI-driven job displacement, even while acknowledging increased productivity and workplace empowerment. Early-career workers in AI-exposed roles showed the highest levels of displacement anxiety despite experiencing measurable efficiency gains.

Security and Approval Systems Address Agent Risks

The rise of autonomous AI agents has created new security challenges around granting broad system permissions versus maintaining operational safety. NanoClaw 2.0, developed by startup NanoCo in partnership with Vercel, introduces infrastructure-level approval systems that require explicit human consent for sensitive actions.

The framework addresses what NanoCo co-founder Gavriel Cohen describes as inherent flaws in application-level security, where AI models themselves request permissions. The new system integrates with 15 messaging platforms including Slack and WhatsApp, allowing teams to approve high-consequence actions like cloud infrastructure changes or financial transactions through familiar interfaces.

Critical use cases for human-in-the-loop approval:

- DevOps operations: Infrastructure changes requiring senior engineer approval

- Financial processes: Batch payments and invoice processing with human signatures

- Data management: Database modifications with explicit consent workflows

- Customer communications: Automated responses with escalation triggers
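
To make the idea of an infrastructure-level gate concrete, here is a minimal Python sketch. It is not NanoClaw's API, which the article does not describe. The names `Action`, `execute`, `ask_human`, and `SENSITIVE_KINDS` are hypothetical and chosen for illustration. The point it shows is that the check sits outside the agent: the agent only proposes an action, and separate code decides whether a person must approve it first.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical categories that always need a human decision.
SENSITIVE_KINDS = {"infra_change", "batch_payment", "db_write"}


@dataclass
class Action:
    kind: str                # e.g. "infra_change"
    description: str         # summary shown to the human approver
    run: Callable[[], str]   # the side effect to perform if allowed


class ApprovalDenied(Exception):
    """Raised when a human reviewer rejects a sensitive action."""


def execute(action: Action, ask_human: Callable[[Action], bool]) -> str:
    """Run an action, pausing for human consent when it is sensitive.

    The agent never grants itself permission: this function, not the
    model, decides whether approval is required.
    """
    if action.kind in SENSITIVE_KINDS and not ask_human(action):
        raise ApprovalDenied(action.description)
    return action.run()


# Stand-in approver for the example; a real one might post the request
# to a chat channel and wait for a reply (assumption, not shown here).
def approve_everything(action: Action) -> bool:
    return True


change = Action("infra_change", "Resize staging cluster", lambda: "applied")
print(execute(change, approve_everything))  # prints "applied"
```

Non-sensitive actions skip the prompt entirely in this sketch, so routine work is not slowed down, while a rejected sensitive action raises an error instead of running.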

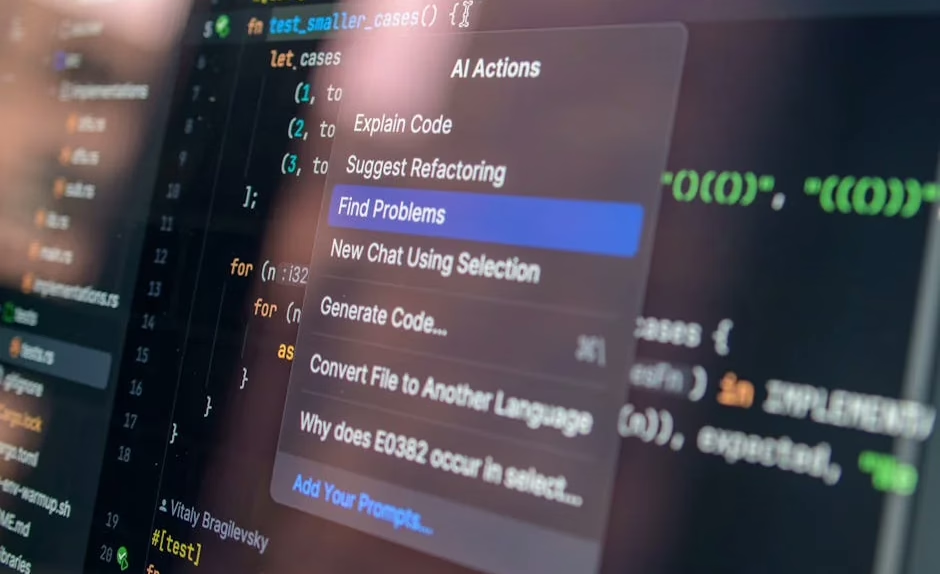

Developer Productivity Measurement Challenges

The proliferation of AI coding tools has complicated traditional software development productivity metrics. Alex Circei, CEO of developer analytics platform Waydev, reports that while AI-generated code shows high initial acceptance rates, the subsequent revision requirements significantly impact long-term productivity calculations.

“Engineering managers are seeing code acceptance rates of 80% to 90%, but they’re missing the churn that happens when engineers have to revise that code in the following weeks,” Circei explained. This revision cycle drives real-world acceptance rates down to between 10% and 30% of originally generated code.

The phenomenon has led to what some developers call “tokenmaxxing” — pursuing large AI token budgets as productivity indicators rather than focusing on actual output quality. Waydev has redesigned its analytics platform to track these post-acceptance revision patterns, providing engineering teams with more accurate productivity assessments.
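
The gap between initial and effective acceptance can be illustrated with a short Python calculation. This is a sketch under stated assumptions, not Waydev's methodology, which the article does not detail. The `SuggestionStats` fields and the example numbers are hypothetical, and "effective acceptance" is assumed here to mean accepted lines that were not revised during the follow-up window, divided by all generated lines.

```python
from dataclasses import dataclass


@dataclass
class SuggestionStats:
    """Hypothetical line counts for AI-generated code over one period."""
    generated_lines: int  # lines the assistant proposed
    accepted_lines: int   # lines engineers accepted at first
    revised_lines: int    # accepted lines later rewritten or deleted


def initial_acceptance_rate(stats: SuggestionStats) -> float:
    """Share of generated lines accepted when first proposed."""
    if stats.generated_lines == 0:
        return 0.0
    return stats.accepted_lines / stats.generated_lines


def effective_acceptance_rate(stats: SuggestionStats) -> float:
    """Share of generated lines still unrevised after the follow-up window."""
    if stats.generated_lines == 0:
        return 0.0
    surviving = stats.accepted_lines - stats.revised_lines
    return max(surviving, 0) / stats.generated_lines


# Illustrative numbers only, not Waydev data.
example = SuggestionStats(generated_lines=1000, accepted_lines=850, revised_lines=650)
print(f"initial:   {initial_acceptance_rate(example):.0%}")    # initial:   85%
print(f"effective: {effective_acceptance_rate(example):.0%}")  # effective: 20%
```

With these made-up counts, a dashboard that reports only the first figure would show 85%, while only 20% of the generated lines survive unrevised.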

What This Means

The enterprise AI productivity landscape reveals a complex picture of rapid adoption coupled with measurement challenges. While organizations are successfully deploying AI agents across diverse business functions, the gap between initial acceptance metrics and long-term productivity gains suggests that current evaluation methods may be insufficient.

The security frameworks emerging around AI agents indicate that enterprises recognize the need for human oversight in high-stakes decisions, even as they pursue automation benefits. This hybrid approach — combining AI efficiency with human judgment — appears to be the dominant pattern for sustainable AI productivity implementations.

For organizations evaluating AI productivity tools, the data suggests focusing on end-to-end workflow improvements rather than simple adoption metrics. The revision rates documented by Waydev highlight the importance of measuring sustained value creation over initial AI output acceptance.

FAQ

What is the real productivity impact of AI coding assistants?

While AI tools show 80-90% initial code acceptance rates, subsequent revisions reduce the effective acceptance rate to 10-30%. Organizations should measure long-term code stability rather than initial acceptance metrics.

How are enterprises ensuring AI agent security?

Companies are implementing infrastructure-level approval systems that require human consent for sensitive actions. Frameworks like NanoClaw 2.0 integrate with messaging platforms to provide approval workflows for high-consequence AI decisions.

Which workers benefit most from AI productivity tools?

High-wage technical workers and entrepreneurs see the largest productivity gains, though lower-wage workers with less formal education also report significant benefits. However, workers with the biggest gains also express the highest job displacement concerns.