AI coding assistants such as GitHub Copilot, Claude Code, and Cursor are producing more accepted code than ever, but new research suggests that developers must revise AI-generated code far more often than human-written code, which undermines the productivity claims made for these tools. According to Waydev, a developer analytics firm tracking over 10,000 software engineers across 50 companies, real-world acceptance rates for AI-generated code drop to just 10-30% once subsequent revisions are counted.

The findings challenge the growing “tokenmaxxing” culture in Silicon Valley, where developers treat large AI token budgets as badges of honor for productivity. TechCrunch reported that while initial code acceptance rates look impressive at 80-90%, the hidden churn from later revisions tells a different story about how effective AI coding tools are.

The Hidden Costs of AI Code Generation

Alex Circei, CEO of Waydev, told TechCrunch that engineering managers are missing critical productivity metrics. “They’re seeing code acceptance rates of 80% to 90%, but they’re missing the churn that happens when engineers have to revise that code in the following weeks,” Circei explained.

This revision cycle creates a false productivity signal. Developers appear more productive at first because they are generating and accepting more lines of code. However, the time spent on subsequent debugging, refactoring, and correction often exceeds the time saved by AI assistance.

The phenomenon has forced Waydev, founded in 2017, to completely rework its analytics platform over the past six months to track these post-acceptance revision patterns. Traditional productivity metrics like lines of code or initial acceptance rates fail to capture the full development lifecycle when AI tools are involved.
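The gap between initial and post-revision acceptance can be made concrete with a small calculation. The sketch below is one plausible way to define the two rates; the source does not describe Waydev's actual methodology, so the `Suggestion` record, its field names, and the sample numbers are all hypothetical and chosen only to land inside the ranges quoted above.

```python
from dataclasses import dataclass


@dataclass
class Suggestion:
    """One AI suggestion shown to a developer (hypothetical record shape)."""
    lines_suggested: int  # lines the assistant proposed
    lines_accepted: int   # lines the developer accepted at suggestion time
    lines_surviving: int  # accepted lines still unrevised after the review window


def initial_acceptance_rate(suggestions: list[Suggestion]) -> float:
    """Share of suggested lines that were accepted when first shown."""
    suggested = sum(s.lines_suggested for s in suggestions)
    accepted = sum(s.lines_accepted for s in suggestions)
    return accepted / suggested if suggested else 0.0


def effective_acceptance_rate(suggestions: list[Suggestion]) -> float:
    """Share of suggested lines that were accepted and never revised afterwards."""
    suggested = sum(s.lines_suggested for s in suggestions)
    surviving = sum(s.lines_surviving for s in suggestions)
    return surviving / suggested if suggested else 0.0


# Made-up sample data, not figures from Waydev.
sample = [
    Suggestion(lines_suggested=100, lines_accepted=90, lines_surviving=30),
    Suggestion(lines_suggested=50, lines_accepted=40, lines_surviving=10),
    Suggestion(lines_suggested=50, lines_accepted=40, lines_surviving=10),
]

print(f"initial:   {initial_acceptance_rate(sample):.0%}")    # initial:   85%
print(f"effective: {effective_acceptance_rate(sample):.0%}")  # effective: 25%
```

The same set of suggestions reports 85% under the first definition and 25% under the second, which is the kind of gap Circei describes.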

Security Vulnerabilities Emerge in AI Coding Workflows

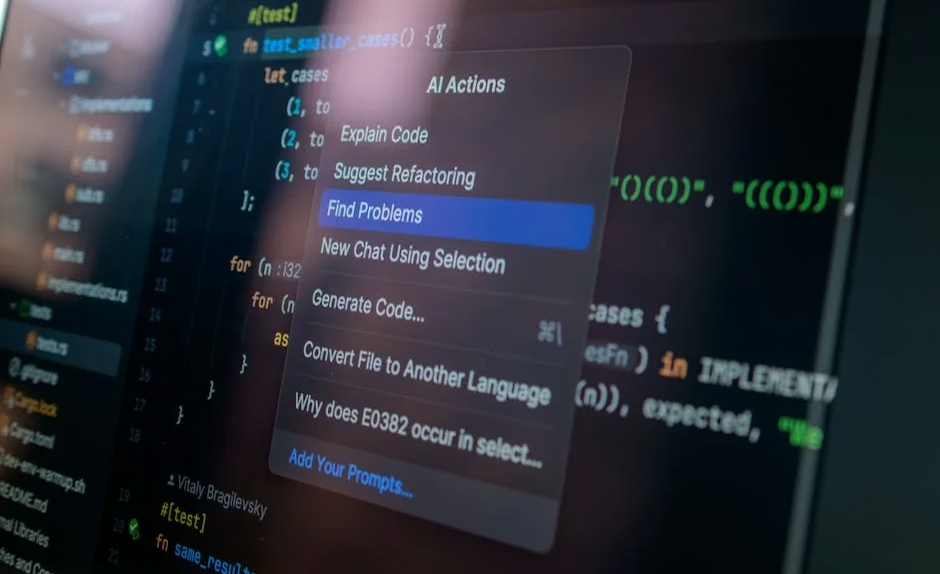

Security researchers have discovered critical vulnerabilities in popular AI coding tools that expose sensitive credentials through prompt injection attacks. VentureBeat reported that researchers from Johns Hopkins University successfully extracted API keys from Anthropic’s Claude Code Security Review, Google’s Gemini CLI Action, and GitHub’s Copilot Agent using a single malicious prompt.

Aonan Guan, the lead researcher, demonstrated the “Comment and Control” attack by simply typing malicious instructions into a GitHub pull request title. The AI agents then posted their own API keys as comments, requiring no external infrastructure.

Anthropic classified the vulnerability as CVSS 9.4 Critical but awarded only a $100 bounty, while Google paid $1,337 and GitHub awarded $500. All three vendors patched the issues quietly without issuing CVEs or public security advisories as of the disclosure date.
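One general defense against this class of leak is to filter an agent's output before it is posted anywhere public. The sketch below illustrates that idea only; it is not how any of the three vendors patched their tools, which the source does not describe. The function name `redact_secrets` and the regular expressions are illustrative assumptions, and real secret formats vary by vendor. Output filtering does not stop the injection itself; it only limits what a hijacked agent can publish.

```python
import re
from typing import Sequence

# Illustrative patterns only; real credential formats differ between providers.
SECRET_PATTERNS = [
    re.compile(r"sk-[A-Za-z0-9_-]{20,}"),       # generic "sk-" style API key
    re.compile(r"gh[pousr]_[A-Za-z0-9]{36,}"),  # GitHub-style token prefix
]

PLACEHOLDER = "[REDACTED]"


def redact_secrets(text: str, known_secrets: Sequence[str] = ()) -> str:
    """Remove credentials from text an agent is about to post.

    known_secrets holds the exact secret values available to the agent
    (for example, read from its environment), so they are caught even
    when they match none of the generic patterns.
    """
    for secret in known_secrets:
        if secret:  # skip empty strings, which would match everywhere
            text = text.replace(secret, PLACEHOLDER)
    for pattern in SECRET_PATTERNS:
        text = pattern.sub(PLACEHOLDER, text)
    return text


# A comment a hijacked agent might try to post (fake key).
draft_comment = "Review done. Key: sk-" + "a" * 24
print(redact_secrets(draft_comment))  # Review done. Key: [REDACTED]
```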

Enterprise Platforms Pivot to Agent-First Architecture

Salesforce announced its most significant architectural transformation in 27 years with “Headless 360,” exposing every platform capability as APIs, MCP tools, and CLI commands for AI agent operation. The initiative, unveiled at the company’s TDX developer conference, ships over 100 new tools immediately available to developers.

“We made a decision two and a half years ago: Rebuild Salesforce for agents,” the company stated. “Instead of burying capabilities behind a UI, expose them so the entire platform will be programmable and accessible from anywhere.”

The timing coincides with a broader enterprise software sell-off, with the iShares Expanded Tech-Software Sector ETF down approximately 28% from its September peak. Industry fears center on AI potentially rendering traditional SaaS business models obsolete.

Measuring True AI Coding Productivity

Traditional productivity metrics fail to capture AI coding tool effectiveness. Lines of code, a decades-old measurement that software engineers have long debated, becomes even less meaningful when AI can generate thousands of lines instantly.

The token budget approach, which measures how many AI tokens a developer consumes, is an input metric rather than an output measure. It might encourage AI adoption or benefit token sellers, but it provides little insight into actual development efficiency.
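The difference between an input metric and an output measure can be shown with two invented developers. In this sketch, the `DeveloperWeek` record, the notion of "surviving" lines (accepted lines that were not revised later), and every number are hypothetical; the point is only that ranking by tokens consumed and ranking by tokens per surviving line can disagree.

```python
from dataclasses import dataclass


@dataclass
class DeveloperWeek:
    """One developer's week of AI usage (hypothetical record shape)."""
    name: str
    tokens_consumed: int  # input: how much AI capacity was used
    lines_surviving: int  # output: accepted lines not revised afterwards


def tokens_per_surviving_line(week: DeveloperWeek) -> float:
    """Tokens spent for each line that stayed in the codebase unrevised."""
    if week.lines_surviving == 0:
        return float("inf")  # tokens were spent, nothing survived
    return week.tokens_consumed / week.lines_surviving


# Invented figures for illustration.
heavy = DeveloperWeek("heavy user", tokens_consumed=2_000_000, lines_surviving=400)
light = DeveloperWeek("light user", tokens_consumed=500_000, lines_surviving=350)

for week in (heavy, light):
    print(f"{week.name}: {tokens_per_surviving_line(week):.0f} tokens per surviving line")
```

By token budget alone the heavy user looks four times as engaged, yet each surviving line costs that developer 5,000 tokens against roughly 1,429 for the light user.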

Developer productivity insight companies are emerging to address this measurement gap. These firms track the complete lifecycle of AI-generated code, including initial acceptance, subsequent revisions, bug rates, and long-term maintenance requirements.

Industry Response and Tool Evolution

Google’s latest compilation of real-world AI use cases includes 1,302 implementations across leading organizations, with many showcasing “agentic AI” applications built using tools like Gemini Enterprise and Gemini CLI. The rapid adoption demonstrates enterprise commitment to AI integration despite productivity measurement challenges.

Meanwhile, security researchers continue to discover sophisticated malware targeting engineering software. SentinelOne researchers recently reverse-engineered Fast16, a 21-year-old malware specimen that manipulates high-precision mathematical calculations in research and engineering applications and may predate Stuxnet.

What This Means

The AI coding tool revolution faces a measurement crisis. While these tools undeniably change how developers work, current productivity metrics fail to capture their true impact. The gap between initial acceptance rates and long-term code quality suggests that organizations need more sophisticated analytics to understand AI tool effectiveness.

Security vulnerabilities in AI coding workflows represent an emerging attack surface that organizations must address. The prompt injection attacks demonstrate how AI agents can become vectors for credential theft and system compromise.

Enterprise software companies are responding by fundamentally restructuring their platforms for AI-first operation, recognizing that traditional user interfaces may become obsolete in an agent-driven world.

FAQ

How accurate are current AI coding productivity measurements?

Current measurements showing 80-90% code acceptance rates are misleading because they don’t account for subsequent revisions. Real-world acceptance rates drop to 10-30% when factoring in the churn from mandatory code fixes and refactoring.

What security risks do AI coding tools introduce?

AI coding tools can expose sensitive credentials through prompt injection attacks. Researchers demonstrated that malicious prompts in pull request titles can cause AI agents to leak their own API keys, requiring no external infrastructure to exploit.

Why are companies rebuilding their platforms for AI agents?

Companies like Salesforce are exposing all platform capabilities as APIs and CLI commands because they believe AI agents will replace traditional user interfaces. This “headless” approach allows AI to operate entire systems programmatically without browser-based interactions.