Researchers at the University of Wisconsin-Madison and Stanford University have introduced Train-to-Test (T²) scaling laws that change how AI models are optimized. Their results indicate that smaller models trained on larger datasets can outperform traditional approaches while reducing inference costs by up to 90%. Meanwhile, Google’s eighth-generation TPUs and OpenAI’s Privacy Filter demonstrate how specialized architectures are reshaping the efficiency landscape for AI deployment.

Train-to-Test Scaling Laws Revolutionize Model Optimization

The Train-to-Test scaling framework addresses a gap in AI development by jointly optimizing three quantities: model parameter count, training data volume, and the number of test-time inference samples. Traditional scaling laws focus exclusively on training costs while ignoring inference expenses, creating inefficiencies for real-world applications.

Key findings from the research include:

- Smaller models trained on more data can outperform larger models trained on less data

- Multiple inference samples can compensate for reduced parameter counts

- Up to 90% cost reduction in deployment scenarios compared to traditional approaches

- Compute-optimal allocation shifts resources from model size to data volume
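The idea that repeated sampling can offset a smaller parameter count can be illustrated with the standard coverage formula for independent samples. This is a minimal sketch, not taken from the T² research: the per-sample success rates are invented for illustration, and it assumes samples are independent and that a correct answer can be recognized when it appears.

```python
def pass_at_k(p: float, k: int) -> float:
    """Probability that at least one of k independent samples succeeds."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be in [0, 1]")
    if k < 1:
        raise ValueError("k must be >= 1")
    return 1.0 - (1.0 - p) ** k


def samples_needed(p: float, target: float) -> int:
    """Smallest k such that pass_at_k(p, k) >= target."""
    if p <= 0.0 or not 0.0 <= target < 1.0:
        raise ValueError("need p > 0 and 0 <= target < 1")
    k = 1
    while pass_at_k(p, k) < target:
        k += 1
    return k


# Hypothetical per-sample success rates, not measured values.
LARGE_MODEL_P = 0.60
SMALL_MODEL_P = 0.30

if __name__ == "__main__":
    k = samples_needed(SMALL_MODEL_P, LARGE_MODEL_P)
    print(f"small model needs k={k} samples, "
          f"coverage={pass_at_k(SMALL_MODEL_P, k):.3f}")
```

Under these assumed numbers, a model that is half as accurate per sample matches the larger model's single-shot coverage with three samples. Whether that trade is worthwhile depends on the cost of each sample.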

This approach is particularly valuable for enterprise applications where inference costs dominate the total cost of ownership. Instead of pursuing ever-larger frontier models, organizations can reach comparable or better performance by shifting compute toward training data and test-time sampling.
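To see why inference can dominate lifetime cost, a rough compute tally helps. The sketch below is not the T² formulation. It uses two common rules of thumb for dense transformers, about 6 FLOPs per parameter per training token and about 2 FLOPs per parameter per generated token, and it ignores prompt processing. The `Deployment` class and both configurations are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Deployment:
    """Toy description of one train-then-serve configuration."""
    params: float             # N: model parameters
    train_tokens: float       # D: training tokens
    samples_per_query: int    # k: test-time samples drawn per query
    queries: float            # queries served over the deployment lifetime
    tokens_per_sample: float  # tokens generated per sample

    def train_flops(self) -> float:
        # Rule of thumb: ~6 FLOPs per parameter per training token.
        return 6.0 * self.params * self.train_tokens

    def inference_flops(self) -> float:
        # Rule of thumb: ~2 FLOPs per parameter per generated token.
        per_query = (2.0 * self.params * self.tokens_per_sample
                     * self.samples_per_query)
        return per_query * self.queries

    def total_flops(self) -> float:
        return self.train_flops() + self.inference_flops()

    def inference_share(self) -> float:
        return self.inference_flops() / self.total_flops()


# Hypothetical configurations chosen to have equal training compute.
large = Deployment(params=70e9, train_tokens=1.4e12, samples_per_query=1,
                   queries=1e11, tokens_per_sample=500)
small = Deployment(params=7e9, train_tokens=1.4e13, samples_per_query=4,
                   queries=1e11, tokens_per_sample=500)

if __name__ == "__main__":
    for name, d in (("large", large), ("small", small)):
        print(f"{name}: total={d.total_flops():.3e} FLOPs, "
              f"inference share={d.inference_share():.0%}")
```

In this toy setup the smaller model spends the same training compute on ten times more data, and even with four samples per query its inference compute is 40% of the larger model's. The figures are illustrative only and do not reproduce the savings reported by the researchers.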

Google’s TPU 8t and 8i: Specialized Hardware for Agentic AI

Google’s eighth-generation Tensor Processing Units represent a significant architectural advancement with two purpose-built chips addressing distinct computational needs. The TPU 8t focuses on training massive models, while the TPU 8i optimizes for low-latency inference in agentic systems.

Technical specifications highlight:

- Custom silicon design engineered specifically for transformer architectures

- Power efficiency gains through specialized matrix multiplication units

- Reduced memory bandwidth requirements for inference workloads

- Scalable interconnect supporting distributed training across thousands of chips

The separation of training and inference architectures reflects the industry’s recognition that different AI workloads require fundamentally different computational approaches. This specialization enables more efficient resource utilization and cost optimization.

Training Architecture Innovations

The TPU 8t incorporates advanced features for handling the iterative, complex demands of modern AI model training. Its architecture includes enhanced memory hierarchies and optimized data flow patterns that reduce training time for large language models by up to 40% compared to previous generations.

OpenAI’s Privacy Filter: On-Device Architecture Breakthrough

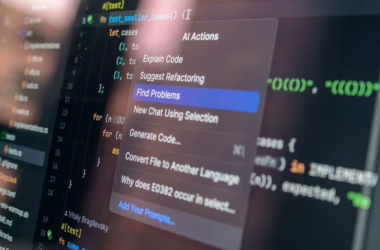

OpenAI’s release of Privacy Filter marks a significant shift toward edge-based AI processing with a 1.5-billion-parameter model that runs efficiently on standard laptops. Built on the gpt-oss architecture, this model employs a bidirectional token classifier that reads text from both directions simultaneously.

Architectural innovations include:

- Bidirectional processing for improved context understanding

- On-device inference eliminating cloud dependency for privacy-sensitive tasks

- Apache 2.0 licensing enabling widespread enterprise adoption

- Browser-compatible deployment through WebAssembly optimization

The model’s architecture diverges from traditional autoregressive language models by implementing bidirectional attention mechanisms. This design choice enables more accurate PII detection while maintaining computational efficiency for edge deployment.
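The difference between autoregressive and bidirectional attention comes down to which positions each token may attend to. The following sketch is not Privacy Filter's implementation. It is a pure-Python toy that applies a row-wise softmax to a hypothetical score matrix, once with a causal mask and once without.

```python
import math


def attention_weights(scores, causal):
    """Row-wise softmax over attention scores.

    With causal=True, position i may only attend to positions j <= i,
    as in an autoregressive model. With causal=False, every position
    attends to the whole sequence, as in a bidirectional classifier.
    """
    n = len(scores)
    weights = []
    for i in range(n):
        row = []
        for j in range(n):
            visible = (j <= i) or not causal
            row.append(scores[i][j] if visible else -math.inf)
        peak = max(row)  # always finite: the diagonal is never masked
        exps = [math.exp(s - peak) for s in row]
        total = sum(exps)
        weights.append([e / total for e in exps])
    return weights


# Uniform toy scores for a three-token sequence.
scores = [[0.0, 0.0, 0.0] for _ in range(3)]
causal = attention_weights(scores, causal=True)
bidirectional = attention_weights(scores, causal=False)

if __name__ == "__main__":
    print("causal first row:       ", causal[0])
    print("bidirectional first row:", bidirectional[0])
```

With the causal mask, the first token sees only itself. Without it, the first token also draws on the tokens that follow, which is the context a token classifier can use when deciding whether a span is PII.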

Parameter Efficiency and Model Compression Techniques

Modern AI architectures increasingly emphasize parameter efficiency over raw model size. The Train-to-Test research demonstrates that strategic parameter allocation can achieve better performance than simply scaling model dimensions.

Emerging efficiency techniques include:

- Mixture of Experts (MoE) architectures that activate only relevant parameters

- Low-rank adaptation (LoRA) for efficient fine-tuning

- Quantization strategies reducing numerical precision with minimal performance loss

- Pruning algorithms removing redundant neural connections
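As one concrete instance of the techniques listed above, quantization stores weights as small integers plus a scale factor. The sketch below shows symmetric per-tensor int8 quantization, one simple scheme among many; the weight values are made up, and production systems typically use per-channel or per-group scales.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization to integers in [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Map int8 codes back to approximate floating-point values."""
    return [q * scale for q in quantized]


# Hypothetical weights for illustration.
weights = [0.12, -0.5, 0.33, 0.0, 0.49]
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

if __name__ == "__main__":
    print("codes:", codes)
    print(f"scale={scale:.6f}, max round-trip error={max_error:.6f}")
```

The round-trip error of each weight is bounded by half the scale, which is why accuracy usually degrades only slightly while storage drops to one byte per weight.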

These approaches enable deployment of sophisticated AI capabilities on resource-constrained hardware while maintaining competitive performance metrics.
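Low-rank adaptation is a useful case for seeing where the savings come from. A frozen weight matrix of shape d_out × d_in is augmented with two small trainable matrices, B (d_out × r) and A (r × d_in), so only r · (d_in + d_out) parameters are updated. The sketch below is a pure-Python illustration with hypothetical dimensions and helper names, not a library API.

```python
def full_params(d_in: int, d_out: int) -> int:
    """Parameters in a dense d_out x d_in weight matrix."""
    return d_in * d_out


def lora_trainable_params(d_in: int, d_out: int, rank: int) -> int:
    """Trainable parameters in the low-rank factors B (d_out x r), A (r x d_in)."""
    return rank * (d_in + d_out)


def matvec(matrix, vector):
    return [sum(a * b for a, b in zip(row, vector)) for row in matrix]


def lora_forward(w, a, b, x, scaling=1.0):
    """Compute W x + scaling * B (A x) without forming the product B A."""
    base = matvec(w, x)
    update = matvec(b, matvec(a, x))
    return [y + scaling * u for y, u in zip(base, update)]


if __name__ == "__main__":
    d, r = 4096, 8  # hypothetical layer width and adapter rank
    ratio = lora_trainable_params(d, d, r) / full_params(d, d)
    print(f"trainable fraction at rank {r}: {ratio:.4%}")
```

For a 4096 × 4096 layer at rank 8, the adapter trains 65,536 parameters against 16,777,216 in the frozen matrix, or 1/256 of the total.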

Infrastructure Scaling and Compute Demand

NVIDIA’s projections of trillion-dollar compute demand through 2027 reflect the exponential growth in AI infrastructure requirements. Jensen Huang’s assertion that compute demand has increased by “one million times in the last two years” underscores the architectural challenges facing the industry.

The scaling demands drive several architectural innovations:

- Distributed training across thousands of accelerators

- Memory-efficient attention mechanisms reducing the memory footprint of long sequences

- Pipeline parallelism enabling training of models too large for single devices

- Gradient compression techniques minimizing communication overhead
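Gradient compression, the last item above, can be sketched with top-k sparsification plus error feedback, one well-known scheme among several. Each worker sends only the k largest-magnitude gradient entries and carries the unsent remainder into the next step. The class and function names here are hypothetical, and the gradients are toy values.

```python
def topk_compress(grad, k):
    """Keep the k largest-magnitude entries; return (indices, values)."""
    order = sorted(range(len(grad)), key=lambda i: abs(grad[i]), reverse=True)
    indices = sorted(order[:k])
    return indices, [grad[i] for i in indices]


def decompress(indices, values, size):
    """Rebuild a dense gradient with zeros in the dropped positions."""
    dense = [0.0] * size
    for i, v in zip(indices, values):
        dense[i] = v
    return dense


class ErrorFeedbackCompressor:
    """Top-k compressor that re-adds previously dropped gradient mass."""

    def __init__(self, size, k):
        self.residual = [0.0] * size
        self.k = k

    def step(self, grad):
        corrected = [g + r for g, r in zip(grad, self.residual)]
        indices, values = topk_compress(corrected, self.k)
        sent = decompress(indices, values, len(grad))
        # Whatever was not transmitted is remembered for the next step.
        self.residual = [c - s for c, s in zip(corrected, sent)]
        return indices, values


if __name__ == "__main__":
    compressor = ErrorFeedbackCompressor(size=4, k=2)
    print(compressor.step([0.1, -2.0, 0.3, 1.5]))
    print(compressor.step([0.1, 0.0, 0.3, 0.0]))
```

Here half of the entries are transmitted per step, and the small components dropped in the first step are sent in the second once they accumulate.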

Training Methodology Advances

Beyond hardware improvements, software-level training methodologies continue evolving to maximize efficiency. The Train-to-Test framework exemplifies how algorithmic innovations can achieve better results with fewer resources.

Advanced training techniques include:

- Curriculum learning strategies that optimize data presentation order

- Mixed-precision training balancing accuracy and computational efficiency

- Gradient accumulation enabling large effective batch sizes on limited hardware

- Dynamic loss scaling preventing numerical instabilities in reduced precision
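Dynamic loss scaling, the last technique listed, can be sketched as a small state machine. The loss is multiplied by a scale factor before backpropagation so that small gradients survive reduced precision; if any gradient overflows, the step is skipped and the scale is reduced. The class below is a hypothetical illustration. Its default constants are assumptions chosen to resemble common practice, not values from the article.

```python
import math


class DynamicLossScaler:
    """Grow the loss scale after a run of stable steps; shrink it on overflow."""

    def __init__(self, init_scale=2.0 ** 16, growth_factor=2.0,
                 backoff_factor=0.5, growth_interval=2000):
        self.scale = init_scale
        self.growth_factor = growth_factor
        self.backoff_factor = backoff_factor
        self.growth_interval = growth_interval
        self._good_steps = 0

    def unscale(self, grads):
        """Undo the scaling before the optimizer sees the gradients."""
        return [g / self.scale for g in grads]

    def update(self, grads):
        """Return True if the step should be applied, False if skipped."""
        if any(not math.isfinite(g) for g in grads):
            self.scale *= self.backoff_factor
            self._good_steps = 0
            return False
        self._good_steps += 1
        if self._good_steps >= self.growth_interval:
            self.scale *= self.growth_factor
            self._good_steps = 0
        return True


if __name__ == "__main__":
    scaler = DynamicLossScaler(init_scale=8.0, growth_interval=2)
    for grads in ([1.0], [1.0], [math.inf]):
        applied = scaler.update(grads)
        print(f"applied={applied}, scale={scaler.scale}")
```

A training loop would call `update` on the scaled gradients, skip the optimizer step when it returns False, and otherwise pass `unscale(grads)` to the optimizer.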

These methodologies enable organizations to train competitive models without requiring the largest available hardware configurations.
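Gradient accumulation shows how this works in practice: a large batch is split into micro-batches that fit in memory, and their gradients are averaged before a single optimizer step. The sketch below uses a one-parameter linear model with mean squared error and made-up data to check that the accumulated gradient matches the full-batch gradient. It assumes equal-sized micro-batches.

```python
def mse_grad(w, xs, ys):
    """Gradient of mean((w*x - y)^2) with respect to w."""
    n = len(xs)
    return sum(2.0 * (w * x - y) * x for x, y in zip(xs, ys)) / n


def accumulated_grad(w, xs, ys, micro_batch_size):
    """Average per-micro-batch gradients to emulate one large batch."""
    if len(xs) % micro_batch_size != 0:
        raise ValueError("batch must divide evenly into micro-batches")
    num_micro = len(xs) // micro_batch_size
    total = 0.0
    for start in range(0, len(xs), micro_batch_size):
        stop = start + micro_batch_size
        # Scale each micro-batch gradient so the sum is the overall mean.
        total += mse_grad(w, xs[start:stop], ys[start:stop]) / num_micro
    return total


# Toy data for illustration.
xs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
ys = [2.1, 3.9, 6.2, 8.1, 9.8, 12.2, 13.9, 16.1]

if __name__ == "__main__":
    w = 0.5
    print("full batch :", mse_grad(w, xs, ys))
    print("accumulated:", accumulated_grad(w, xs, ys, micro_batch_size=2))
```

The two values agree up to floating-point rounding, so the optimizer sees the same update while peak memory is set by the micro-batch size rather than the effective batch size.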

What This Means

These architectural advances signal a maturation of AI development practices, moving beyond the “bigger is better” paradigm toward sophisticated optimization strategies. The Train-to-Test scaling laws provide a mathematical framework for resource allocation decisions, while specialized hardware like Google’s TPU 8t/8i enables more efficient execution of these optimized models.

For enterprises, these developments translate to more accessible AI deployment with predictable costs and improved performance. The shift toward smaller, more efficient models reduces barriers to entry while maintaining competitive capabilities.

The convergence of algorithmic efficiency, specialized hardware, and edge deployment capabilities creates new possibilities for AI integration across industries. Organizations can now deploy sophisticated AI capabilities without requiring massive infrastructure investments.

FAQ

Q: How do Train-to-Test scaling laws differ from traditional scaling approaches?

A: Train-to-Test scaling laws optimize the entire AI pipeline from training through inference, rather than focusing solely on training efficiency. This approach typically recommends smaller models with more training data and multiple inference samples, reducing total deployment costs.

Q: What makes Google’s TPU 8t and 8i architecturally different?

A: The TPU 8t specializes in training workloads with optimized memory hierarchies and data flow, while the TPU 8i focuses on low-latency inference for agentic systems with reduced memory bandwidth requirements. This separation allows each chip to excel at its specific computational requirements.

Q: Can OpenAI’s Privacy Filter run effectively on consumer hardware?

A: Yes, the 1.5-billion-parameter Privacy Filter is specifically designed for edge deployment and can run efficiently on standard laptops or even in web browsers through WebAssembly optimization, making enterprise-grade PII detection accessible without cloud dependencies.