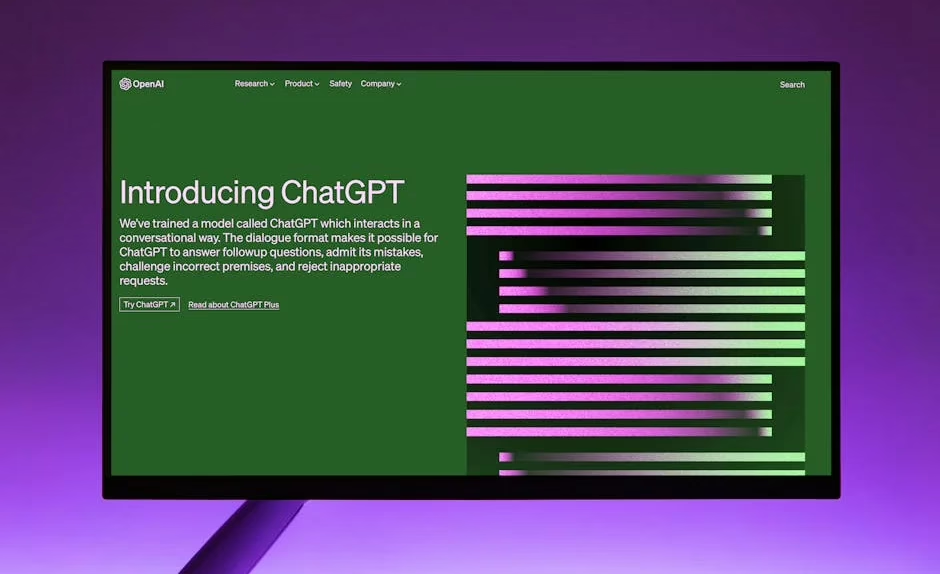

OpenAI on Monday officially launched ChatGPT Images 2.0, a dramatic upgrade to its image generation capabilities that can produce multilingual text, full infographics, user interface mockups, and complex visual content within a single image. The new `gpt-image-2` model, available through the API and ChatGPT, represents what the company calls “a fundamental shift in how we view visual media.”

The release follows weeks of testing on LMArena under the codename “duct tape,” where early users reported impressive results generating long text blocks, realistic website screenshots, and research-backed infographics. According to VentureBeat’s coverage, the model can now produce floor plans, image grids, and character models from multiple angles, and can apply these features to user-uploaded imagery.

Google Counters With Deep Research Agents

Google responded the same day with its own multimodal advancement, unveiling Deep Research and Deep Research Max agents that combine web search with proprietary enterprise data through a single API call. Built on the Gemini 3.1 Pro model, these agents can generate native charts and infographics within research reports while connecting to third-party data sources via the Model Context Protocol.

“We are launching two powerful updates to Deep Research in the Gemini API, now with better quality, MCP support, and native chart/infographics generation,” Google CEO Sundar Pichai wrote on X. The agents target enterprise research workflows in finance, life sciences, and market intelligence where accuracy is critical.

Google’s approach differs from OpenAI’s consumer-focused rollout by emphasizing enterprise integration. The Deep Research agents can fuse open web data with proprietary company information, positioning Google’s AI infrastructure as the backbone for professional research tasks that traditionally required hours of human analyst time.

Multimodal AI Gains Enterprise Traction

The competing launches reflect broader adoption of multimodal AI across industries. Google’s blog highlighted 1,302 real-world generative AI use cases from leading organizations, with the majority showcasing “agentic AI” applications built with tools like Gemini Enterprise and Security Command Center.

At MIT, researchers are applying multimodal approaches to specialized domains. Associate Professor Sili Deng’s Energy and Nanotechnology Group used AI to develop “digital twins” that mirror energy device performance: digital replicas capable of predicting the behavior of fuel combustion systems in real time. The work emerged from pandemic-driven lab shutdowns that forced researchers to explore machine learning alternatives.

Meanwhile, aerospace applications are expanding through collaboration between MIT’s Zachary Cordero and machine learning expert Faez Ahmed. Their DARPA-sponsored project developed an AI tool that optimizes material composition for blisks — bladed disks critical to jet and rocket turbine engines.

Clinical Applications Show Promise

Multimodal AI is also advancing in healthcare through sophisticated feature engineering approaches. Researchers published findings on arXiv showing automated detection of dosing errors in clinical trial narratives using gradient boosting with 3,451 features spanning traditional NLP, semantic embeddings, and transformer-based scores.

The system achieved 0.8725 test ROC-AUC on the CT-DEB benchmark dataset with severe class imbalance (4.9% positive rate). The research demonstrated that sentence embeddings provided critical performance gains despite contributing only 37.07% of total feature importance, highlighting the complexity of optimizing multimodal systems.
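
ROC-AUC is not the same as accuracy, and the difference matters at a 4.9% positive rate. The sketch below is illustrative only: it uses synthetic labels (not the CT-DEB data) and hypothetical helper names to show that a model that never flags a dosing error scores 95.1% accuracy while its ROC-AUC stays at 0.5, the chance level.

```python
def roc_auc(labels, scores):
    """Rank-based ROC-AUC: the probability that a randomly chosen positive
    receives a higher score than a randomly chosen negative (ties count half)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("ROC-AUC needs at least one positive and one negative")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def accuracy(labels, predictions):
    """Fraction of predictions that match the labels."""
    return sum(y == p for y, p in zip(labels, predictions)) / len(labels)


# Synthetic stand-in for an imbalanced dataset: 49 positives in 1,000 (4.9%).
labels = [1] * 49 + [0] * 951

# A degenerate model that always predicts "no dosing error".
always_negative_preds = [0] * 1000
always_negative_scores = [0.0] * 1000

acc = accuracy(labels, always_negative_preds)   # high, despite finding nothing
auc = roc_auc(labels, always_negative_scores)   # chance level

print(f"accuracy={acc:.3f}  roc_auc={auc:.3f}")
```

Because ROC-AUC measures ranking quality rather than the share of correct labels, it is the more informative figure when positives are this rare.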

Feature Selection Proves Critical

The clinical research revealed that selecting the top 500 to 1,000 features yielded optimal performance (0.886 to 0.887 AUC), outperforming the full 3,451-feature set through effective noise reduction. This finding emphasizes feature selection as a crucial regularization technique for specialized text classification under severe class imbalance.
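
The article does not describe how the researchers ranked features, so the following is only a minimal sketch of one common approach: sort features by a model-reported importance score and keep the top k. The feature names, importance values, and helper functions are all hypothetical.

```python
def select_top_k(importances, k):
    """Return the names of the k features with the highest importance scores."""
    if k <= 0:
        raise ValueError("k must be positive")
    ranked = sorted(importances.items(), key=lambda item: item[1], reverse=True)
    return [name for name, _ in ranked[:k]]


def project(rows, feature_names, keep):
    """Reduce each row to the kept features, in the order given by `keep`."""
    indices = [feature_names.index(name) for name in keep]
    return [[row[i] for i in indices] for row in rows]


# Hypothetical importance scores, e.g. as reported by a fitted
# gradient-boosting model on the training split only.
importances = {
    "tfidf_dose": 0.05,
    "emb_12": 0.30,
    "bert_score": 0.25,
    "noise_a": 0.001,
    "noise_b": 0.002,
}
feature_names = list(importances)

# Two made-up feature rows, ordered like feature_names.
rows = [
    [1.0, 0.2, 0.9, 7.0, 3.0],
    [0.0, 0.8, 0.1, 2.0, 5.0],
]

keep = select_top_k(importances, k=2)
reduced = project(rows, feature_names, keep)

print(keep)
print(reduced)
```

In practice the importance scores would be computed on training data alone, and k would be chosen on a validation split, so the test AUC remains an honest estimate.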

Technical Capabilities Expand Rapidly

OpenAI’s ChatGPT Images 2.0 showcases several breakthrough capabilities that distinguish it from previous image generation models. The system can generate realistic reproductions of public figures, perform web research and incorporate results directly into images, and handle complex multilingual text generation within visual content.

The model’s ability to create user interface mockups and website screenshots with high fidelity suggests potential applications in design prototyping and software development. Floor plan generation and character modeling from multiple angles indicate expansion into architecture and 3D design workflows.

Google’s Deep Research agents complement these visual capabilities with analytical depth, combining multiple data sources to produce comprehensive research reports with native visualization. The Model Context Protocol integration allows connection to arbitrary third-party data sources, expanding the potential scope of automated research tasks.

What This Means

The simultaneous launch of advanced multimodal capabilities from OpenAI and Google signals a new phase in AI competition focused on practical enterprise applications. While OpenAI emphasizes creative and consumer use cases with ChatGPT Images 2.0, Google targets professional research workflows with its Deep Research agents.

Both approaches address the growing demand for AI systems that can process and generate content across multiple modalities — text, images, data visualization, and structured analysis. The enterprise focus suggests companies are moving beyond experimental AI deployments toward production systems that handle mission-critical tasks.

The MIT research examples demonstrate how academic institutions are integrating multimodal AI into specialized domains like combustion engineering and aerospace materials. This cross-pollination between consumer AI advances and specialized applications indicates a maturing ecosystem where foundational models enable domain-specific innovations.

The clinical trial research highlights both the potential and challenges of multimodal AI in regulated industries. While the 0.8725 test ROC-AUC in dosing error detection shows promise, the need for extensive feature engineering and careful validation reflects the high stakes of healthcare applications.

FAQ

How does ChatGPT Images 2.0 differ from previous image generation models?

ChatGPT Images 2.0 can generate extensive text within images, create realistic UI mockups, perform web research and incorporate findings into visuals, and handle multilingual content. Previous models typically struggled with text generation and complex visual layouts.

What makes Google’s Deep Research agents unique compared to other AI research tools?

Deep Research agents can combine public web data with private enterprise information through a single API call, generate native charts and infographics, and connect to third-party data sources via the Model Context Protocol. This integration of multiple data sources distinguishes them from tools that rely solely on public information.

Are these multimodal AI systems ready for enterprise deployment?

Google is explicitly targeting enterprise customers, emphasizing research workflows in finance, life sciences, and market intelligence, while OpenAI’s rollout is more consumer-focused. However, the clinical research shows that specialized applications may require extensive validation and feature engineering before deployment in high-stakes environments.