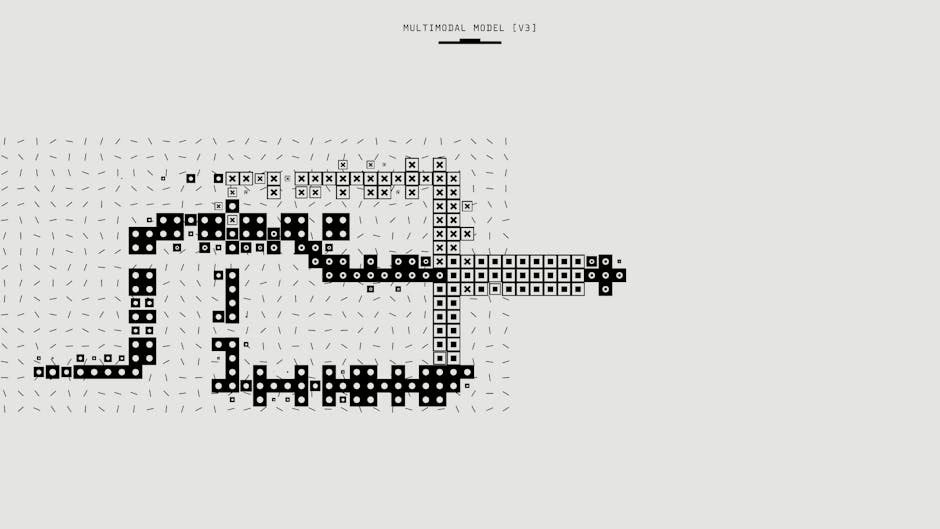

Multimodal AI systems combining vision, language, and audio capabilities are rapidly expanding across enterprise environments, with Google reporting over 1,302 real-world implementations and OpenAI launching ChatGPT Images 2.0 with advanced visual generation capabilities. However, this proliferation introduces critical security vulnerabilities that organizations must address to protect sensitive data and maintain operational integrity.

Expanding Attack Surface Through Multimodal Integration

The integration of vision-language models (VLMs) significantly expands the attack surface compared to traditional text-only AI systems. Adversarial attacks can now target multiple input modalities simultaneously, creating complex threat vectors that traditional security measures struggle to detect.

Key vulnerability areas include:

- Visual prompt injection through manipulated images containing hidden instructions

- Cross-modal data leakage where sensitive information transfers between visual and textual outputs

- Steganographic attacks embedding malicious code within seemingly benign visual content

- Model poisoning through contaminated training data across multiple modalities

According to research published on arXiv, multimodal systems processing clinical trial data demonstrate the complexity of securing systems that handle both structured text and visual elements. The study’s analysis of 42,112 clinical narratives reveals how feature engineering across multiple modalities creates new opportunities for adversarial manipulation.

Enterprise Data Exposure Risks

Google’s Deep Research and Deep Research Max agents exemplify the dual-edged nature of multimodal AI capabilities. While these systems can fuse open web data with proprietary enterprise information through a single API call, they also create unprecedented data exposure risks.

Critical security concerns include:

- Uncontrolled data fusion mixing public and private information in unpredictable ways

- API-based attack vectors exploiting single points of failure across multiple data sources

- Model Context Protocol (MCP) vulnerabilities allowing unauthorized access to third-party data sources

- Native chart generation risks potentially exposing sensitive business intelligence through visual outputs

Organizations implementing these systems must establish strict data classification protocols and implement zero-trust architectures that verify every data access request, regardless of the AI system’s apparent legitimacy.

Visual Generation Security Implications

OpenAI’s ChatGPT Images 2.0 demonstrates the sophisticated capabilities of modern multimodal AI, including multilingual text generation, full infographics creation, and realistic reproduction of public figures. These capabilities introduce novel security challenges that extend beyond traditional deepfake concerns.

Emerging threat vectors include:

- Sophisticated social engineering through AI-generated visual content that appears authentic

- Corporate impersonation using AI-generated logos, documents, and user interfaces

- Information warfare through realistic but fabricated charts, maps, and infographics

- Intellectual property theft through AI systems that can reverse-engineer proprietary visual designs

The system’s ability to perform web research and integrate results directly into generated images creates additional risks around information integrity and source verification. Security teams must implement content provenance tracking and digital watermarking to maintain audit trails for AI-generated visual content.

Clinical and Healthcare Vulnerabilities

Healthcare applications of multimodal AI present particularly severe security implications due to the sensitive nature of medical data. The clinical trial narrative analysis research reveals how severe class imbalance in medical datasets can be exploited to hide malicious patterns within legitimate clinical data.

Healthcare-specific risks include:

- HIPAA violations through inadvertent patient data exposure in multimodal outputs

- Medical misinformation generated through compromised vision-language models

- Clinical decision manipulation via adversarial attacks on diagnostic imaging AI

- Research data integrity compromised through poisoned training datasets

The study’s finding that sentence embeddings contribute critically to model performance while representing only 37% of feature importance highlights how attackers might target specific components of multimodal systems to maximize impact while minimizing detection.

Defense Strategies and Best Practices

Implementing robust security measures for multimodal AI systems requires a comprehensive approach addressing each input modality and their interactions.

Essential security controls include:

Input Validation and Sanitization

- Multi-modal input filtering scanning images, audio, and text for malicious content

- Adversarial detection systems identifying manipulated inputs across all modalities

- Content-aware firewalls blocking suspicious multimodal data patterns

Model Security Hardening

- Differential privacy implementation to prevent data extraction attacks

- Federated learning architectures reducing centralized data exposure

- Model versioning and rollback capabilities for rapid response to compromised systems

Monitoring and Detection

- Anomaly detection across multimodal outputs identifying unusual generation patterns

- Behavioral analysis monitoring AI system interactions for signs of compromise

- Real-time threat intelligence integration for emerging multimodal attack signatures

Regulatory Compliance and Governance

As multimodal AI systems become more prevalent, organizations must navigate an evolving regulatory landscape while maintaining security posture. The MIT AI research initiatives demonstrate how academic institutions are grappling with similar challenges in balancing innovation with security requirements.

Compliance considerations include:

- Data residency requirements for multimodal AI processing across jurisdictions

- Algorithmic auditing mandates for vision-language model decision-making

- Transparency reporting obligations for AI-generated visual content

- Incident response protocols specific to multimodal AI security breaches

https://x.com/sundarpichai/status/2046627545333080316

What This Means

The rapid advancement of multimodal AI capabilities presents both unprecedented opportunities and significant security challenges. Organizations must proactively address these risks through comprehensive security frameworks that account for the unique characteristics of vision-language models and their complex attack surfaces.

Success requires balancing innovation with security, implementing defense-in-depth strategies, and maintaining continuous monitoring of evolving threat landscapes. As these systems become more sophisticated and widely deployed, the security implications will only grow in complexity and importance.

FAQ

Q: What makes multimodal AI systems more vulnerable than traditional AI?

A: Multimodal AI systems process multiple input types (vision, text, audio) simultaneously, creating more attack vectors and complex interactions that traditional security measures weren’t designed to handle.

Q: How can organizations protect sensitive data when using multimodal AI services?

A: Implement strict data classification, use zero-trust architectures, deploy content filtering across all modalities, and maintain audit trails for all AI-generated outputs.

Q: What are the most critical security controls for vision-language models?

A: Multi-modal input validation, adversarial detection systems, differential privacy implementation, and real-time monitoring of AI outputs for anomalous patterns or data leakage.