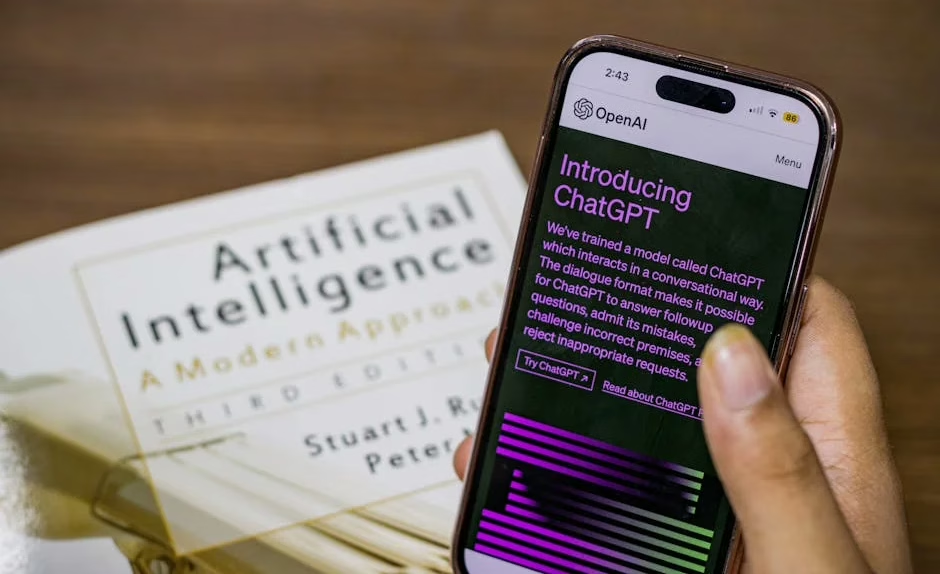

OpenAI on Monday officially launched ChatGPT Images 2.0, a major upgrade to its image generation capabilities that can produce multilingual text blocks, full infographics, slides, maps, and manga-style artwork within a single image. The new `gpt-image-2` model, which has been available for testing on LM Arena AI under the codename “duct tape” for several weeks, now rolls out to all ChatGPT tiers and API users.

According to OpenAI’s announcement, the system represents “a fundamental shift in how the company views visual media.” The update can generate floor plans, character models from multiple angles, and image grids with smaller sub-images, and it can apply these features to user-uploaded imagery. Early testers reported that the model can reproduce real-life figures such as OpenAI CEO Sam Altman and generate realistic user interface screenshots of popular websites.

Google Counters With Deep Research Agents

Google simultaneously unveiled two competing multimodal research agents — Deep Research and Deep Research Max — built on its Gemini 3.1 Pro model. These agents can fuse open web data with proprietary enterprise information through a single API call, according to VentureBeat reporting.

“We are launching two powerful updates to Deep Research in the Gemini API, now with better quality, MCP support, and native chart/infographics generation,” Google CEO Sundar Pichai wrote on X. The agents connect to third-party data sources through the Model Context Protocol (MCP) and can produce native charts and infographics inside research reports.

Google positions these tools for enterprise research workflows in finance, life sciences, and market intelligence — sectors where information accuracy carries high stakes. The agents aim to automate multi-source research that traditionally required hours or days of human analyst time.

Clinical AI Applications Show Multimodal Promise

Research applications demonstrate multimodal AI’s expanding reach beyond consumer tools. A new study published on arXiv details an automated system for detecting dosing errors in clinical trial narratives using gradient boosting with comprehensive multi-modal feature engineering.

The system combines 3,451 features spanning traditional NLP techniques, dense semantic embeddings, domain-specific medical patterns, and transformer-based scores to train a LightGBM model. According to the research paper, the approach achieved 0.8725 test ROC-AUC on the CT-DEB benchmark dataset with severe class imbalance (4.9% positive rate).
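
To make the reported metric concrete, the sketch below computes ROC-AUC from scratch as the fraction of positive/negative pairs that a model ranks correctly. It is an illustration only: the toy labels and scores are invented, the `roc_auc` helper is hypothetical rather than taken from the paper, and the 1,000-sample set simply mimics a 4.9% positive rate.

```python
from typing import Sequence


def roc_auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """Probability that a random positive outranks a random negative (ties count half)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Toy data, not from the paper: 2 positives among 4 samples.
toy_labels = [0, 0, 1, 1]
toy_scores = [0.1, 0.4, 0.35, 0.8]
toy_auc = roc_auc(toy_labels, toy_scores)  # 3 of 4 positive/negative pairs ranked correctly

# Assumed illustration of a 4.9% positive rate: a model that always predicts
# "no error" is about 95.1% accurate, yet its constant scores give an AUC of 0.5.
imbalanced_labels = [1] * 49 + [0] * 951
constant_scores = [0.0] * 1000
baseline_accuracy = sum(1 for y in imbalanced_labels if y == 0) / len(imbalanced_labels)
baseline_auc = roc_auc(imbalanced_labels, constant_scores)
```

This is why a ranking metric such as ROC-AUC is a more informative choice than raw accuracy when positives are this rare: the trivial baseline looks strong on accuracy but scores no better than chance on AUC.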

Feature efficiency analysis revealed that selecting the top 500 to 1,000 features yielded the best performance (0.886 to 0.887 AUC), outperforming the full 3,451-feature set, a gain the study attributes to noise reduction. The study processed 42,112 clinical trial narratives with a median length of 5,400 characters per sample.
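
The article does not say how the study ranked its features, so the following is only a sketch of one common approach: keep the k features with the highest importance scores. The `top_k_features` helper, the feature names, and the importance values are all hypothetical.

```python
from typing import Dict, List


def top_k_features(importance: Dict[str, float], k: int) -> List[str]:
    """Return the k feature names with the highest importance, highest first."""
    if k < 0:
        raise ValueError("k must be non-negative")
    # Sort by descending importance; break ties alphabetically for stable output.
    ranked = sorted(importance.items(), key=lambda item: (-item[1], item[0]))
    return [name for name, _ in ranked[:k]]


# Hypothetical importances; the feature names are made up for illustration.
example_importance = {
    "tfidf_overdose": 0.31,
    "embedding_dim_12": 0.05,
    "regex_dose_unit": 0.22,
    "transformer_score": 0.40,
    "char_count": 0.02,
}
selected = top_k_features(example_importance, k=3)
```

In a setup like this, the model would be retrained on only the selected columns and the validation AUC compared across several values of k.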

Enterprise Adoption Accelerates Across Industries

Enterprise adoption of multimodal AI has accelerated, with Google reporting 1,302 real-world generative AI use cases from leading organizations as of April 2026, up from the 101 use cases documented at Next ’24 two years earlier.

According to Google’s blog post, the vast majority showcase agentic AI applications built with tools like Gemini Enterprise, Gemini CLI, Security Command Center, and AI Hypercomputer infrastructure. The company describes this as “the fastest technological transformation we’ve seen,” driven by customer enthusiasm.

Academic institutions are also embracing multimodal AI. At MIT, researchers across departments have integrated AI into combustion kinetics, aerospace materials, and energy systems. Associate professor Sili Deng developed a “digital twin” that mirrors energy/flow device performance, while aerospace professor Zachary Cordero created an AI tool to optimize blisk material composition for jet and rocket turbine engines.

Technical Capabilities Expand Rapidly

ChatGPT Images 2.0’s technical capabilities span multiple modalities within image generation. The system can perform web research and incorporate results directly into generated images, create long text blocks or disparate text panels within single images, and generate realistic screenshots of popular platforms and websites.

OpenAI’s update includes new “Thinking” features for ChatGPT subscribers alongside the core model improvements. The company frames images as a fundamental communication medium rather than just visual content, enabling complex information visualization that combines text, graphics, and visual elements.

Google’s competing Deep Research agents focus on information synthesis rather than image creation. The tools can autonomously conduct exhaustive research across multiple sources, connecting web data with enterprise databases to produce comprehensive reports with embedded visualizations.

What This Means

The simultaneous release of advanced multimodal AI tools from OpenAI and Google signals a new phase in AI development where text, image, and data synthesis converge into unified platforms. These systems move beyond simple content generation to become research and analysis tools capable of processing multiple information types simultaneously.

For enterprises, this convergence enables more sophisticated workflows that combine visual communication with data analysis. Clinical research, financial modeling, and market intelligence can now leverage AI systems that understand context across text, images, and structured data.

The competition between OpenAI’s creative-focused approach and Google’s enterprise-research orientation suggests the multimodal AI market will bifurcate into specialized use cases rather than one-size-fits-all solutions. Organizations will likely adopt multiple tools depending on whether they prioritize visual content creation or analytical research capabilities.

FAQ

What makes ChatGPT Images 2.0 different from previous versions?

ChatGPT Images 2.0 can generate multilingual text blocks, infographics, slides, and maps within a single image, and it can perform web research and incorporate the results into visual content. It represents a shift from simple image generation to complex visual communication.

How do Google’s Deep Research agents compare to ChatGPT Images 2.0?

Google’s agents focus on research and data synthesis rather than image creation. They can combine web data with enterprise information through APIs and generate charts/infographics, targeting business intelligence workflows rather than creative content.

Are these multimodal AI tools available to consumers or just enterprises?

ChatGPT Images 2.0 rolls out to all ChatGPT tiers including consumer users, while Google’s Deep Research agents are currently available only through the Gemini API for developers and enterprise customers, not in the consumer Gemini app.