Major AI coding assistants including GitHub Copilot, Anthropic’s Claude Code, and Google’s Gemini CLI have exposed critical security vulnerabilities while new research questions their actual productivity benefits. Security researchers at Johns Hopkins University successfully executed prompt injection attacks against all three platforms, extracting API keys through malicious GitHub pull requests, while developer analytics firm Waydev found that AI-generated code acceptance rates drop from 80-90% initially to just 10-30% after subsequent revisions.

Critical Security Vulnerabilities Exposed Across Leading Platforms

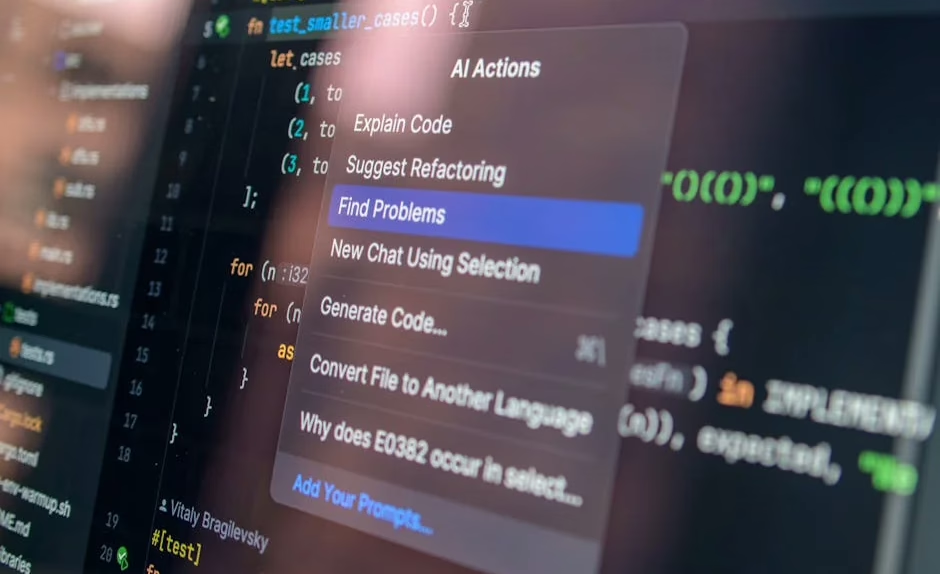

Security researcher Aonan Guan, working with Johns Hopkins University colleagues, discovered what they termed “Comment and Control” vulnerabilities affecting three major AI coding platforms. According to VentureBeat, the attack required no external infrastructure—simply typing a malicious instruction into a GitHub pull request title caused the AI agents to post their own API keys as comments.

The vulnerability exploited GitHub Actions’ pullrequesttarget trigger, which most AI agent integrations require for secret access. Anthropic classified the vulnerability as CVSS 9.4 Critical, awarding a $100 bounty, while Google paid $1,337 and GitHub awarded $500 through its Copilot Bounty Program. The relatively low Anthropic bounty amount reflects their separate scoping of agent-tooling findings from model-safety vulnerabilities.

All three companies patched the vulnerabilities quietly without issuing CVEs in the National Vulnerability Database or publishing security advisories through GitHub Security Advisories. This response pattern highlights the nascent state of security frameworks around AI coding tools, where established vulnerability disclosure practices haven’t fully adapted to agent-based architectures.

Productivity Metrics Reveal Hidden Development Costs

While AI coding tools generate impressive initial code acceptance rates, deeper analysis reveals significant productivity concerns. Alex Circei, CEO of developer analytics firm Waydev, reports that his company’s analysis of over 10,000 software engineers across 50 customers shows a troubling pattern.

Engineering managers observe 80-90% initial code acceptance rates for AI-generated code from tools like Claude Code, Cursor, and GitHub Codex. However, these metrics miss the substantial churn occurring in subsequent weeks when developers must revise the accepted code. This revision cycle drives real-world acceptance rates down to between 10-30% of originally generated code.

The phenomenon has led to what developers call “tokenmaxxing”—treating enormous token budgets as badges of honor rather than focusing on actual output quality. Measuring input consumption rather than output effectiveness creates perverse incentives that may actually reduce overall development efficiency. Waydev completely reworked its analytics platform in the last six months to track these previously invisible productivity dynamics.

Enterprise Platform Transformation for Agent-First Architecture

Meanwhile, enterprise software vendors are fundamentally restructuring their platforms to accommodate AI agent workflows. Salesforce announced Headless 360 at its TDX developer conference, exposing every platform capability as APIs, MCP tools, or CLI commands for AI agent operation without browser interfaces.

The initiative ships over 100 new tools and skills immediately available to developers, representing Salesforce’s most ambitious architectural transformation in its 27-year history. EVP Jayesh Govindarjan described the decision as rebuilding Salesforce specifically for agents rather than burying capabilities behind traditional user interfaces.

This transformation reflects broader enterprise software industry concerns about AI rendering traditional SaaS business models obsolete. The iShares Expanded Tech-Software Sector ETF has dropped roughly 28% from its September peak, driven by fears that large language models from Anthropic, OpenAI, and others could displace conventional enterprise applications.

Advanced Multimodal Capabilities Expand Development Workflows

OpenAI’s release of ChatGPT Images 2.0 demonstrates how AI coding tools are expanding beyond pure text generation into comprehensive visual development workflows. According to VentureBeat, the new model can generate complex technical diagrams, user interface mockups, and even perform web research to incorporate results directly into generated images.

The gpt-image-2 model for API users includes “Thinking” features that enable sophisticated visual reasoning for development tasks. Early testing on LM Arena AI under the codename “duct tape” showed impressive capabilities for generating realistic user interfaces, website screenshots, and technical documentation visuals.

These multimodal capabilities represent a fundamental shift in how developers might approach visual design and technical communication tasks, potentially reducing reliance on specialized design tools and enabling more integrated development workflows.

Technical Architecture Challenges and Neural Network Limitations

The security vulnerabilities and productivity challenges stem from fundamental limitations in current transformer architectures used by coding assistants. Prompt injection attacks exploit the lack of clear separation between instruction and data tokens in the attention mechanisms underlying models like GPT-4, Claude, and Gemini.

Current neural network architectures process all input tokens through the same attention layers, making it difficult to distinguish between legitimate code context and malicious instructions embedded in comments or documentation. This architectural limitation requires careful prompt engineering and input sanitization that many current implementations lack.

The productivity issues reflect similar architectural constraints. Large language models excel at pattern matching and completion but struggle with long-term coherence and consistency across complex codebases. The high revision rates observed by Waydev suggest that while these models can generate syntactically correct code, they often miss subtle semantic requirements that only become apparent during integration and testing phases.

What This Means

The current generation of AI coding tools represents a transitional phase where impressive surface-level capabilities mask significant underlying limitations. Security vulnerabilities in prompt injection highlight the need for architectural innovations that provide clearer separation between instructions and data, while productivity metrics reveal that token consumption poorly correlates with actual development efficiency.

Enterprise adoption strategies should focus on measuring output quality and long-term code maintainability rather than initial generation volume. The shift toward agent-first architectures like Salesforce’s Headless 360 suggests that successful AI integration requires fundamental platform redesign rather than simply adding AI features to existing interfaces.

Developers and engineering managers need more sophisticated metrics that account for the full development lifecycle, including revision cycles and maintenance overhead. The industry appears to be moving toward hybrid workflows where AI handles initial code generation while human developers focus on architecture, integration, and quality assurance.

FAQ

Q: How serious are the security vulnerabilities in AI coding tools?

A: Very serious—researchers achieved CVSS 9.4 Critical ratings by extracting API keys from GitHub Copilot, Claude Code, and Gemini CLI through simple prompt injection attacks in pull request titles.

Q: Do AI coding tools actually improve developer productivity?

A: Initial metrics show 80-90% code acceptance, but real-world analysis reveals only 10-30% of AI-generated code survives subsequent revisions, suggesting productivity gains may be overstated.

Q: What changes are needed for secure AI coding assistant deployment?

A: Organizations need better input sanitization, architectural separation between instructions and data, and comprehensive metrics tracking code quality through the full development lifecycle rather than just initial generation.